Notice: This repository is left as-is; the Slavic BERT model is now part of the DeepPavlov repository.

BERT is a method of pre-training language representations, meaning that we train a general-purpose "language understanding" model on a large text corpus (like Wikipedia), and then use that model for downstream NLP tasks that we care about (like question answering). For details, see the original BERT GitHub repository.

The repository contains resources specific to Bulgarian, Czech, Polish and Russian: a shared BERT model and an NER model built on top of it.

Our academic paper, which describes tuning Transformers for the NER task in detail, can be found here: https://www.aclweb.org/anthology/W19-3712/.

The Slavic model is the result of transferring the 2018_11_23/multi_cased_L-12_H-768_A-12 Multilingual BERT model to Bulgarian (bg), Czech (cs), Polish (pl) and Russian (ru). The fine-tuning was performed on a stratified dataset of bg, cs and pl Wikipedias and ru news.

The model format is the same as in the original repository.

BERT, Slavic Cased: 4 languages, 12-layer, 768-hidden, 12-heads, 110M parameters, 600 MB

Named Entity Recognition (NER) is the task of recognizing named entities in text and detecting their types.

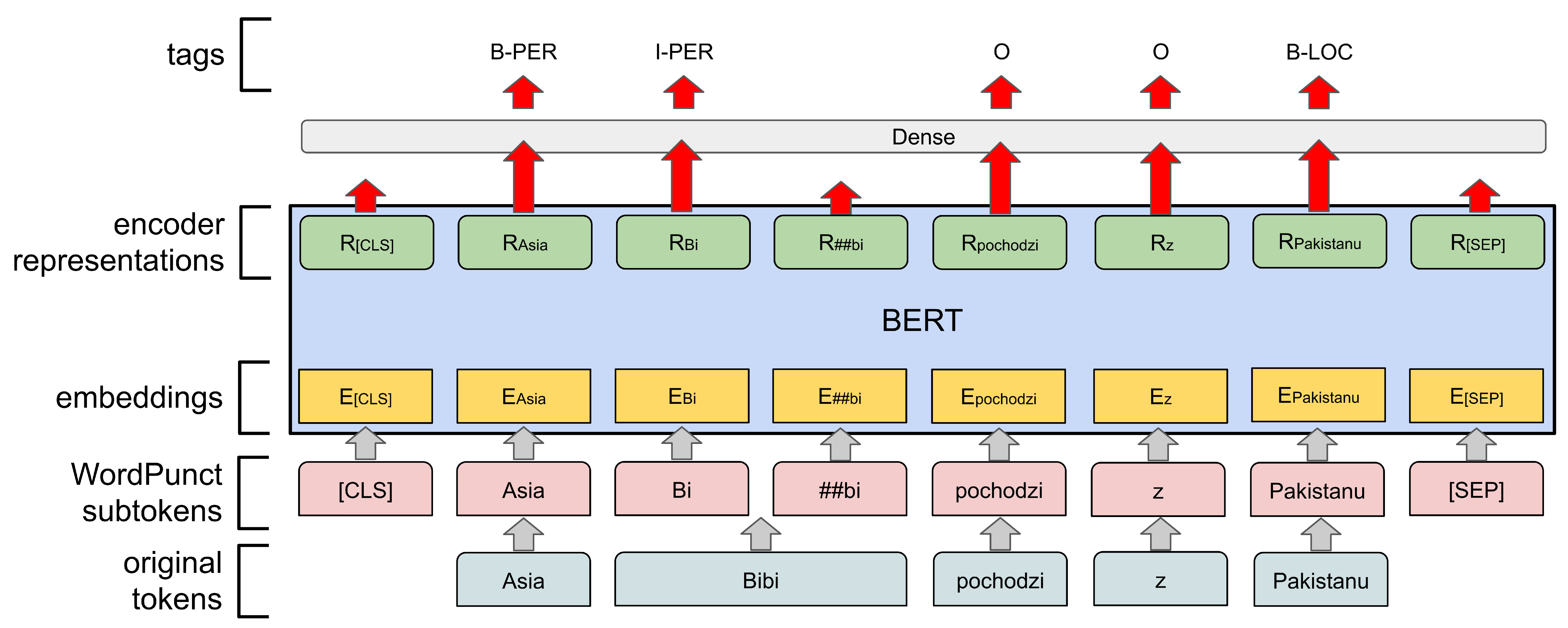

We used the Slavic BERT model as the base for an NER system. First, we feed each input word into the case-sensitive WordPiece tokenizer and extract the final hidden representation corresponding to the first subtoken of each word. These representations are fed into a dense classification layer over the NER label set. A token-level CRF layer is added on top.
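The first-subtoken selection described above can be sketched in plain Python. This is a minimal illustration, not the DeepPavlov implementation: `TOY_VOCAB`, `wordpiece` and `first_subtoken_positions` are hypothetical names introduced here, and the toy vocabulary stands in for the model's real `vocab.txt`.

```python
from typing import List, Set, Tuple

# Hypothetical toy vocabulary for illustration; the real model ships its own vocab.txt.
TOY_VOCAB = {"[UNK]", "To", "Bert", "z", "ulicy", "Sezam", "##kowej"}


def wordpiece(word: str, vocab: Set[str]) -> List[str]:
    """Greedy longest-match-first split of one word into WordPiece subtokens."""
    pieces: List[str] = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        while end > start:
            # Non-initial pieces carry the "##" continuation prefix.
            candidate = word[start:end] if start == 0 else "##" + word[start:end]
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return ["[UNK]"]  # no piece fits: the whole word is treated as unknown
        pieces.append(match)
        start = end
    return pieces


def first_subtoken_positions(words: List[str], vocab: Set[str]) -> Tuple[List[str], List[int]]:
    """Return the subtoken sequence and, per word, the index of its first subtoken."""
    subtokens = ["[CLS]"]
    positions: List[int] = []
    for word in words:
        positions.append(len(subtokens))  # index where this word's first piece lands
        subtokens.extend(wordpiece(word, vocab))
    subtokens.append("[SEP]")
    return subtokens, positions


subtokens, positions = first_subtoken_positions(["Sezamkowej", "Bert"], TOY_VOCAB)
print(subtokens)  # ['[CLS]', 'Sezam', '##kowej', 'Bert', '[SEP]']
print(positions)  # [1, 3]
```

Given a matrix of final hidden states with one row per subtoken, the rows at `positions` would be the per-word vectors passed to the classification layer. Because the tokenizer is case-sensitive, a lowercase `bert` is not found in this toy vocabulary and maps to `[UNK]`.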

The model was trained on the BSNLP-2019 dataset. The pre-trained model can recognize the following entity types:

- Persons (PER)

- Locations (LOC)

- Organizations (ORG)

- Products (PRO)

- Events (EVT)
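The usage example in this README shows the model returning one tag per token, with prefixes such as `B-` and `I-` in front of the entity types listed above. Assuming this is the usual BIO scheme, the tags can be grouped into entity spans as in the sketch below; `bio_to_spans` is a hypothetical helper, not part of DeepPavlov.

```python
from typing import List, Optional, Tuple


def bio_to_spans(tokens: List[str], tags: List[str]) -> List[Tuple[str, str]]:
    """Group BIO-tagged tokens into (entity_text, entity_type) pairs."""
    spans: List[Tuple[str, str]] = []
    current_tokens: List[str] = []
    current_type: Optional[str] = None
    for token, tag in zip(tokens, tags):
        starts_new = tag.startswith("B-") or (
            tag.startswith("I-") and tag[2:] != current_type
        )
        if starts_new:
            # A stray I- tag with a different type is treated as a new entity.
            if current_tokens:
                spans.append((" ".join(current_tokens), current_type))
            current_tokens, current_type = [token], tag[2:]
        elif tag.startswith("I-"):
            current_tokens.append(token)  # continue the open entity
        else:
            # "O" tag: close any open entity.
            if current_tokens:
                spans.append((" ".join(current_tokens), current_type))
            current_tokens, current_type = [], None
    if current_tokens:
        spans.append((" ".join(current_tokens), current_type))
    return spans


tokens = ["To", "Bert", "z", "ulicy", "Sezamkowej"]
tags = ["O", "B-PER", "O", "B-LOC", "I-LOC"]
print(bio_to_spans(tokens, tags))  # [('Bert', 'PER'), ('ulicy Sezamkowej', 'LOC')]
```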

The metrics for all languages and entities on the test set are:

| Language | Tag | Precision | Recall | RPM (Relaxed Partial Matching) |
|---|---|---|---|---|
| cs | | 94.3 | 93.4 | 93.9 |
| ru | | 88.1 | 86.6 | 87.3 |
| bg | | 90.3 | 84.3 | 87.2 |
| pl | | 93.3 | 93.0 | 93.2 |
| | PER | 94.2 | 95.6 | 94.9 |
| | LOC | 96.6 | 96.4 | 96.5 |
| | ORG | 84.3 | 92.1 | 88.0 |
| | PRO | 87.6 | 51.3 | 64.7 |
| | EVT | 39.4 | 27.7 | 93.9 |
| Total | | 89.8 | 91.8 | 90.8 |

For a detailed description of the evaluation method, see the BSNLP-2019 Shared Task page.

NER, Slavic Cased: 4 languages, 13-layer + CRF, 768-hidden, 2.0 GB

The toolkit supports Python 3.6 and Python 3.7. To install the required packages, use:

    $ pip3 install -r requirements.txt

CAUTION: Python<=3.5 and Python>=3.8 are not supported; see the DeepPavlov repository for details.
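A script that depends on this toolkit could check the interpreter version up front instead of failing later during import. The guard below is a small sketch under that assumption; `is_supported_python` is a hypothetical helper and is not provided by this repository or by DeepPavlov.

```python
import sys
from typing import Tuple


def is_supported_python(version: Tuple[int, int] = sys.version_info[:2]) -> bool:
    """Return True only for the versions this README lists as supported (3.6 and 3.7)."""
    return version in {(3, 6), (3, 7)}


if not is_supported_python():
    # Warn rather than exit, so the check itself is safe to run on any interpreter.
    print("Warning: this toolkit supports Python 3.6 and 3.7 only; found %d.%d"
          % sys.version_info[:2])
```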

    from deeppavlov import build_model

    # Download and load model (set download=False to skip download phase)
    ner = build_model("./ner_bert_slav.json", download=True)

    # Get predictions
    ner(["To Bert z ulicy Sezamkowej"])
    # [[['To', 'Bert', 'z', 'ulicy', 'Sezamkowej']], [['O', 'B-PER', 'O', 'B-LOC', 'I-LOC']]]

    ner(["Это", "Берт", "из", "России"])
    # [[['Это'], ['Берт'], ['из'], ['России']], [['O'], ['B-PER'], ['O'], ['B-LOC']]]

The Slavic BERT model can be used in any way proposed by the BERT developers.

One approach may be:

    import tensorflow as tf

    from bert_dp.modeling import BertConfig, BertModel
    from deeppavlov.models.preprocessors.bert_preprocessor import BertPreprocessor

    bert_config = BertConfig.from_json_file('./bg_cs_pl_ru_cased_L-12_H-768_A-12/bert_config.json')

    input_ids = tf.placeholder(shape=(None, None), dtype=tf.int32)
    input_mask = tf.placeholder(shape=(None, None), dtype=tf.int32)
    token_type_ids = tf.placeholder(shape=(None, None), dtype=tf.int32)

    bert = BertModel(config=bert_config,
                     is_training=False,
                     input_ids=input_ids,
                     input_mask=input_mask,
                     token_type_ids=token_type_ids,
                     use_one_hot_embeddings=False)

    preprocessor = BertPreprocessor(vocab_file='./bg_cs_pl_ru_cased_L-12_H-768_A-12/vocab.txt',
                                    do_lower_case=False,
                                    max_seq_length=512)

    with tf.Session() as sess:
        # Load model
        tf.train.Saver().restore(sess, './bg_cs_pl_ru_cased_L-12_H-768_A-12/bert_model.ckpt')

        # Get predictions
        features = preprocessor(["Bert z ulicy Sezamkowej"])[0]
        print(sess.run(bert.sequence_output, feed_dict={input_ids: [features.input_ids],
                                                        input_mask: [features.input_mask],
                                                        token_type_ids: [features.input_type_ids]}))

        features = preprocessor(["Берт", "с", "Улицы", "Сезам"])[0]
        print(sess.run(bert.sequence_output, feed_dict={input_ids: [features.input_ids],
                                                        input_mask: [features.input_mask],
                                                        token_type_ids: [features.input_type_ids]}))

[Jan 2021] fixed 'Model bert_ner is not registered' issue, updated to deeppavlov==0.17.2, tensorflow==1.15.5

    @inproceedings{arkhipov-etal-2019-tuning,
        title = "Tuning Multilingual Transformers for Language-Specific Named Entity Recognition",
        author = "Arkhipov, Mikhail and
          Trofimova, Maria and
          Kuratov, Yuri and
          Sorokin, Alexey",
        booktitle = "Proceedings of the 7th Workshop on Balto-Slavic Natural Language Processing",
        month = aug,
        year = "2019",
        address = "Florence, Italy",
        publisher = "Association for Computational Linguistics",
        url = "https://www.aclweb.org/anthology/W19-3712",
        doi = "10.18653/v1/W19-3712",
        pages = "89--93"
    }

[2] - Mozharova V., Loukachevitch N.: Two-stage approach in Russian named entity recognition, 2016

[3] - BSNLP-2019 Shared Task