[CVPR 2024 Accepted] Task-Driven Exploration: Decoupling and Inter-Task Feedback for Joint Moment Retrieval and Highlight Detection

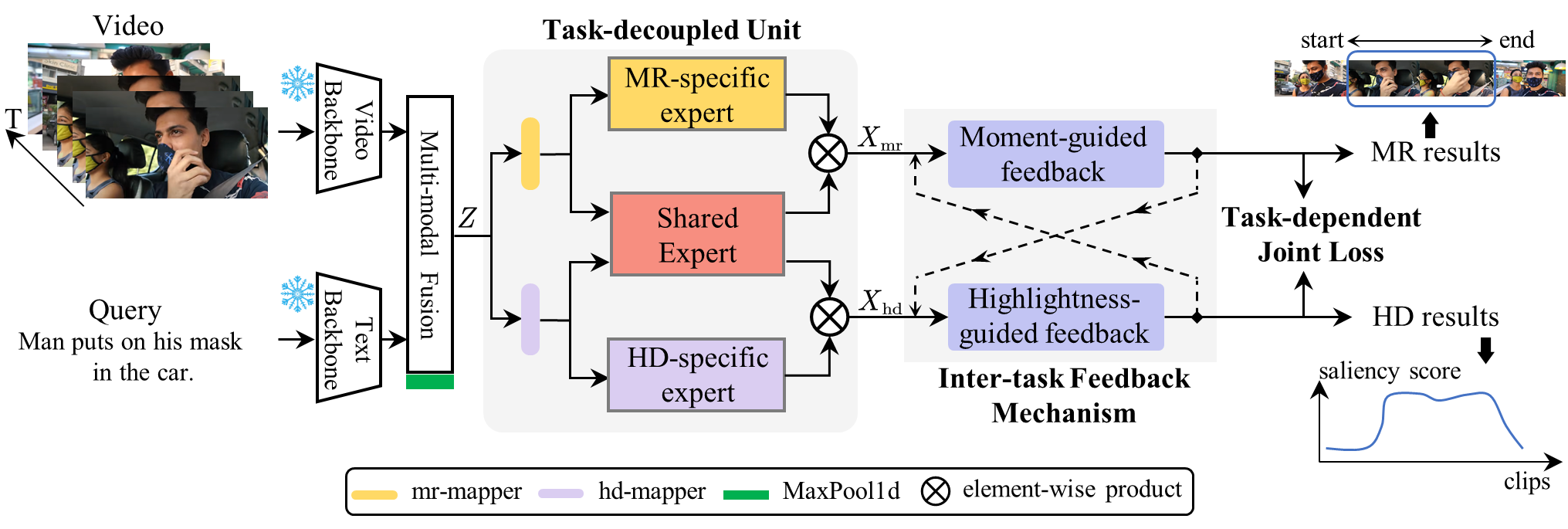

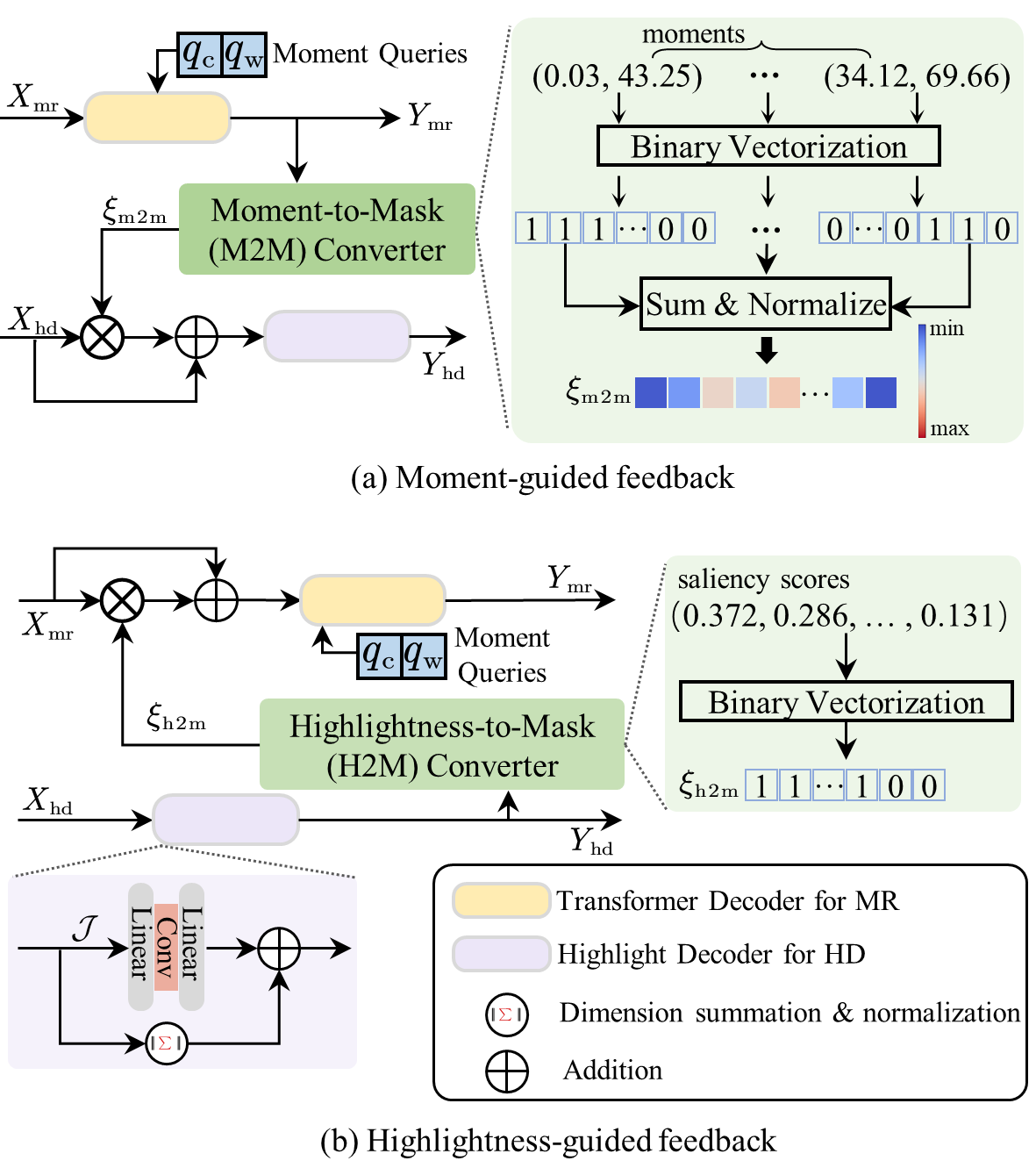

This repository implements TaskWeave (CVPR 2024), a task-driven, top-down framework for joint Moment Retrieval and Highlight Detection. We introduce a task-decoupled unit to capture task-specific and common representations. To further investigate the interactions between the two tasks, we propose an inter-task feedback mechanism that transforms the results of one task into guiding masks to assist the other task. Finally, unlike existing methods, we optimize the model with a task-dependent joint loss function. As far as we are aware, this is the first framework to address this joint task from a task-centric perspective. Comprehensive experiments and in-depth ablation studies on the QVHighlights, TVSum, and Charades-STA datasets corroborate the effectiveness and flexibility of the proposed framework.
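To make the idea of a "guiding mask" concrete, here is a minimal sketch of one way moment retrieval output could be turned into a clip-level mask that reweights highlight scores. It is an illustration only and not the TaskWeave implementation: the function names, the 2-second clip length, and the `outside_weight` factor are all assumptions made for this example, and the actual mechanism in the paper may differ.

```python
from typing import List, Tuple


def spans_to_clip_mask(
    spans: List[Tuple[float, float]],
    num_clips: int,
    clip_len: float = 2.0,  # assumed clip duration in seconds (hypothetical default)
) -> List[int]:
    """Mark each clip that overlaps any predicted moment span with 1, else 0."""
    mask = [0] * num_clips
    for start, end in spans:
        for i in range(num_clips):
            clip_start, clip_end = i * clip_len, (i + 1) * clip_len
            # Half-open interval overlap test between the clip and the span.
            if clip_start < end and clip_end > start:
                mask[i] = 1
    return mask


def apply_mask(
    scores: List[float],
    mask: List[int],
    outside_weight: float = 0.5,  # hypothetical down-weighting factor
) -> List[float]:
    """Keep scores inside the mask unchanged; down-weight scores outside it."""
    return [s if m else s * outside_weight for s, m in zip(scores, mask)]


# Hypothetical example: one predicted moment from 2 s to 6 s over five clips.
spans = [(2.0, 6.0)]
scores = [0.9, 0.4, 0.8, 0.6, 0.2]
mask = spans_to_clip_mask(spans, num_clips=len(scores))
guided = apply_mask(scores, mask)
```

The reverse direction (highlight scores guiding moment retrieval) could be sketched the same way, for instance by thresholding the scores into a mask. Again, this is only one possible reading of the description above.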

Please refer to MomentDETR, UMT, and QD-DETR for more details.

- Train (take QVHighlights as an example)

bash taskweave/scripts/train.sh

bash taskweave/scripts/train_audio.sh

- Evaluation (take QVHighlights as an example)

bash taskweave/scripts/inference.sh results/{direc}/model_best.ckpt 'val'

bash taskweave/scripts/inference.sh results/{direc}/model_best.ckpt 'test'

If you are using our code, please consider citing the following paper.

@inproceedings{yang2024taskweave,

title={Task-Driven Exploration: Decoupling and Inter-Task Feedback for Joint Moment Retrieval and Highlight Detection},

author={Yang, Jin and Wei, Ping and Li, Huan and Ren, Ziyang},

booktitle={CVPR},

year={2024}

}