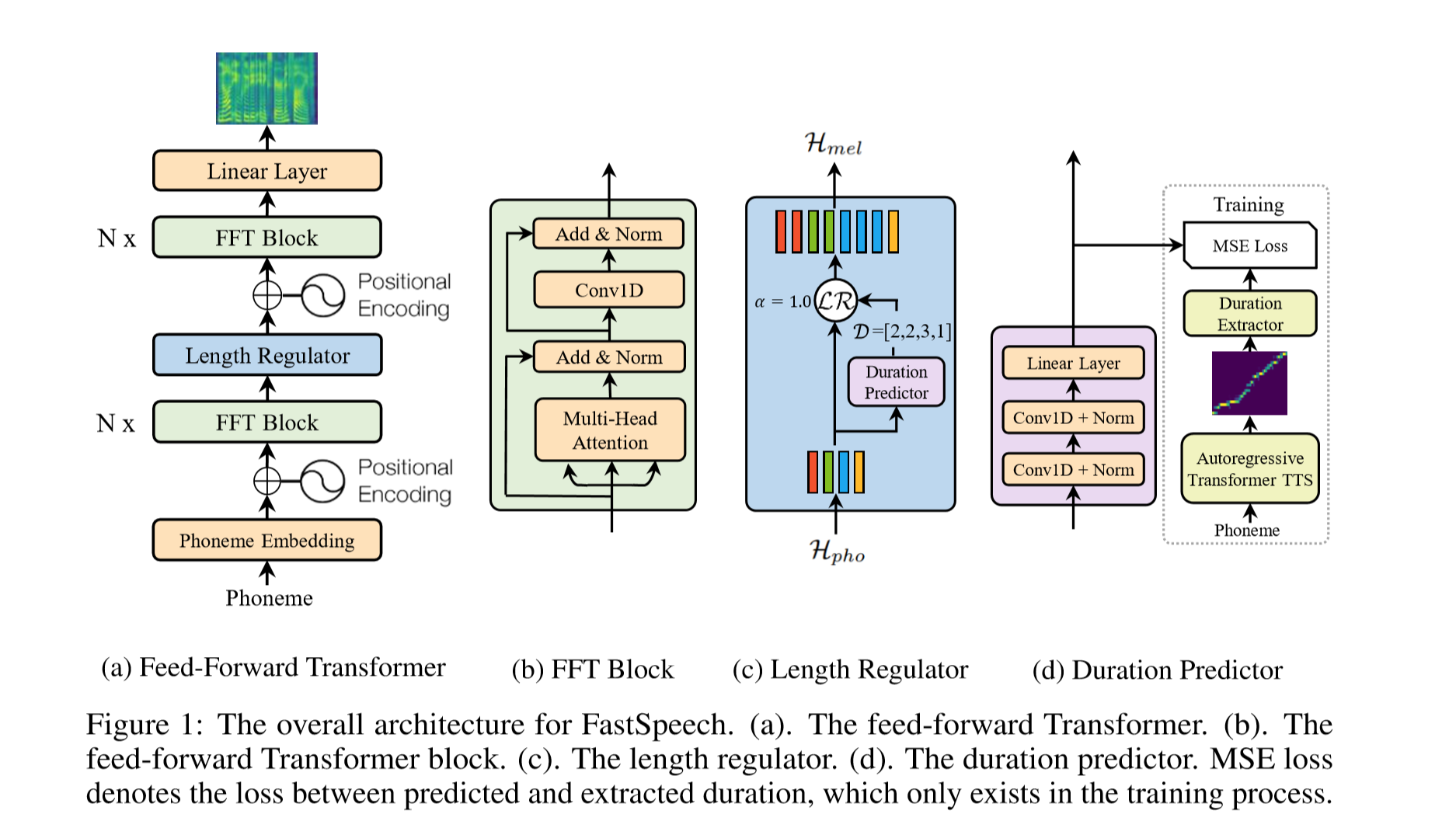

An implementation of FastSpeech based on PyTorch.

- Download and extract LJSpeech dataset.

- Put LJSpeech dataset in

data. - Run

preprocess.py. - If you want to get the target of alignment before training(It will speed up the training process greatly), you need download the pre-trained Tacotron2 model published by NVIDIA here.

- Put the pre-trained Tacotron2 model in

Tacotron2/pre_trained_model - Run

alignment.py, it will take long time. - Change

pre_target = Trueinhparam.py. - Run

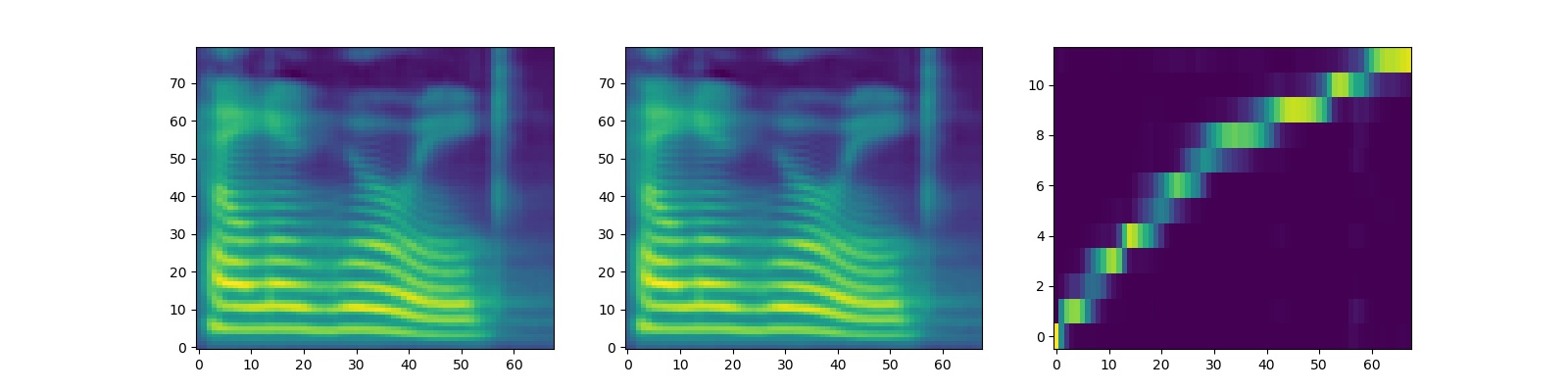

train.py. - The tacotron2 outputs of mel spectrogram and alignment are shown as follow:
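The setup steps above depend on a few paths under the repository root. As a rough sketch, a small check like the one below could confirm they exist before starting a long run. The helper name `missing_setup_paths`, its `with_alignment` flag, and the choice of what to check are assumptions made for illustration and are not part of the repository.

```python
from pathlib import Path


def missing_setup_paths(repo_root=".", with_alignment=True):
    """Return the directories and scripts named in the setup steps that are absent.

    Hypothetical helper: paths are taken from the steps above and are
    resolved relative to `repo_root`.
    """
    root = Path(repo_root)
    dirs = ["data"]
    scripts = ["preprocess.py", "hparam.py", "train.py"]
    if with_alignment:
        # Only needed when alignment targets are prepared before training.
        dirs.append("Tacotron2/pre_trained_model")
        scripts.append("alignment.py")

    missing = [d for d in dirs if not (root / d).is_dir()]
    missing += [s for s in scripts if not (root / s).is_file()]
    return missing
```

Calling `missing_setup_paths()` from the repository root returns an empty list when everything is in place; passing `with_alignment=False` skips the Tacotron2 checks.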

Dependencies:

- python 3.6

- pytorch 1.1.0

- numpy 1.16.2

- scipy 1.2.1

- librosa 0.6.3

- inflect 2.1.0

- matplotlib 2.2.2
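To compare an existing environment against the versions listed above, something like the following sketch may help. The function name `version_report` is hypothetical, and the sketch assumes each package exposes a `__version__` attribute; where it does not, the found version is reported as `None`.

```python
import importlib

# Versions from the list above. The import name for pytorch is `torch`.
PINNED = {
    "torch": "1.1.0",
    "numpy": "1.16.2",
    "scipy": "1.2.1",
    "librosa": "0.6.3",
    "inflect": "2.1.0",
    "matplotlib": "2.2.2",
}


def version_report(pinned=PINNED):
    """Map each package name to a (pinned, found) pair.

    `found` is None when the package cannot be imported or does not
    expose a `__version__` attribute.
    """
    report = {}
    for name, wanted in pinned.items():
        try:
            module = importlib.import_module(name)
            found = getattr(module, "__version__", None)
        except ImportError:
            found = None
        report[name] = (wanted, found)
    return report
```

Iterating over `version_report().items()` and printing the pairs where `found != wanted` gives a quick list of packages that differ from the list above.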

Notes:

- If you don't prepare the alignment targets before training, training will take much longer.

- In the FastSpeech paper, the authors use a pre-trained Transformer-TTS model to provide the alignment targets. I didn't have a well-trained Transformer-TTS model, so I use Tacotron2 instead.

- If you want to use another model to get the alignment targets, you need to rewrite `alignment.py`.

- The value returned by `alignment.py` is a tensor whose entries are the factors by which the corresponding encoder outputs are to be expanded.

- For example:

      import torch
      # Each entry is the number of times the encoder output at that position is repeated.
      test_target = torch.stack([torch.Tensor([0, 2, 3, 0, 3, 2, 1, 0, 0, 0]),
                                 torch.Tensor([1, 2, 3, 2, 2, 0, 3, 6, 3, 5])])
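To make the meaning of these expansion factors concrete, here is a framework-free sketch using plain Python lists instead of tensors. Both function names are hypothetical. `durations_from_alignment` assumes the alignment is laid out as `alignment[t][i]` (weight of decoder frame `t` on encoder position `i`) and counts, per encoder position, the frames that attend to it most strongly; this follows the duration extraction described in the FastSpeech paper and may differ from what `alignment.py` actually does.

```python
def durations_from_alignment(alignment, num_encoder_steps):
    """Count how many decoder frames attend most strongly to each encoder position.

    Assumed layout: alignment[t][i] is the attention weight of decoder
    frame t on encoder position i.
    """
    durations = [0] * num_encoder_steps
    for frame_weights in alignment:
        best = max(range(num_encoder_steps), key=lambda i: frame_weights[i])
        durations[best] += 1
    return durations


def expand(encoder_outputs, durations):
    """Repeat encoder output i durations[i] times; a factor of 0 drops it."""
    expanded = []
    for vector, repeat in zip(encoder_outputs, durations):
        expanded.extend([vector] * repeat)
    return expanded


# First row of test_target from the example above.
durations = [0, 2, 3, 0, 3, 2, 1, 0, 0, 0]
# String stand-ins for the encoder output vectors.
encoder_outputs = [f"h{i}" for i in range(len(durations))]
expanded = expand(encoder_outputs, durations)
print(len(expanded))  # 11, the sum of the durations
print(expanded[:5])   # ['h1', 'h1', 'h2', 'h2', 'h2']
```

With tensors, the same expansion can be written per sequence with `torch.repeat_interleave`; the list version is used here only to keep the sketch dependency-free.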