Left is the naive RF, right is the logit-normal time-sampling RF. Both are trained on MNIST.

This repository contains a minimal implementation of rectified flow models. I've taken the SD3 approach to training along with the LLaMA-DiT architecture. Unlike in my previous repo, this time I've decided to split the code into two files: the model implementation and the actual training code. You don't have to look at the model code, though.
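For a feel of what SD3-style training means here, below is a rough, dependency-free sketch of one training example. It is not the code in rf.py: the names `sample_t`, `rf_training_pair`, and `rf_loss` are made up for illustration, and it assumes the convention where t = 0 is data and t = 1 is pure noise. The only difference between the naive RF and the logit-normal RF in this sketch is how t is drawn.

```python
import math
import random

def sample_t(rng, logit_normal=True):
    """Draw a training time t (hypothetical helper, not from rf.py)."""
    if logit_normal:
        # Logit-normal: push a standard normal through a sigmoid,
        # which concentrates samples around t = 0.5.
        return 1.0 / (1.0 + math.exp(-rng.gauss(0.0, 1.0)))
    # Naive RF: uniform time sampling.
    return rng.random()

def rf_training_pair(x, rng, logit_normal=True):
    """Build one (z_t, t, target) triple for a data point x (list of floats)."""
    t = sample_t(rng, logit_normal)
    noise = [rng.gauss(0.0, 1.0) for _ in x]
    # Assumed convention: straight line from data (t = 0) to noise (t = 1).
    z_t = [(1.0 - t) * xi + t * ni for xi, ni in zip(x, noise)]
    # The regression target is the constant velocity along that line.
    target = [ni - xi for xi, ni in zip(x, noise)]
    return z_t, t, target

def rf_loss(pred, target):
    """Mean squared error between predicted and target velocity."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)

# Usage: in real training, a network would predict the velocity from (z_t, t).
rng = random.Random(0)
z_t, t, target = rf_training_pair([1.0, -2.0, 0.5], rng)
loss_of_zero_prediction = rf_loss([0.0] * len(target), target)
```

A real model would take `z_t` and `t` as input and be trained to minimize `rf_loss` against `target`, averaged over a batch.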

Everything is still self-contained, minimal, and hopefully easy to hack. There is nothing complicated going on if you understand the math.
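To make the math concrete on the sampling side, here is a toy sketch of Euler integration from noise back to data. It assumes the same convention as SD3 (t = 1 is noise, t = 0 is data) and swaps the trained network for a hypothetical `oracle_velocity` that knows the single data point in the "dataset", so the result can be checked by hand. Neither function name comes from this repo.

```python
import random

def oracle_velocity(z, t, x):
    """Exact velocity when the dataset is the single point x.
    A trained network would replace this function (illustrative stand-in)."""
    return [(zi - xi) / t for zi, xi in zip(z, x)]

def euler_sample(velocity_fn, noise, steps=50):
    """Integrate dz/dt = v(z, t) from t = 1 (noise) down to t = 0 (data)."""
    z = list(noise)
    dt = 1.0 / steps
    for i in range(steps, 0, -1):
        t = i / steps  # never reaches 0, so the oracle's division is safe
        v = velocity_fn(z, t)
        z = [zi - dt * vi for zi, vi in zip(z, v)]
    return z

rng = random.Random(0)
x = [1.0, -2.0, 0.5]
noise = [rng.gauss(0.0, 1.0) for _ in x]
sample = euler_sample(lambda z, t: oracle_velocity(z, t, x), noise)
```

With the oracle, the trajectory is a straight line, so `sample` lands back on `x` up to floating-point error. A learned velocity field is only approximately straight, which is why the number of steps matters in practice.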

Install torch, torchvision, and pillow:

pip install torch torchvision pillow

Run

python rf.py

to train the model on MNIST from scratch.

If you are cool and want to train CIFAR instead, you can do that.

python rf.py --cifar

On the 63rd epoch, your output should look something like:

This is for gigachads who want to train on ImageNet instead. Don't worry! IMO ImageNet is the new MNIST, and we will use my imagenet.int8 dataset for this.

First, go to the advanced directory and download the dataset.

cd advanced

pip install hf_transfer # just install this.

bash download.sh

This shouldn't take more than 5 minutes if your network is decent.

Run

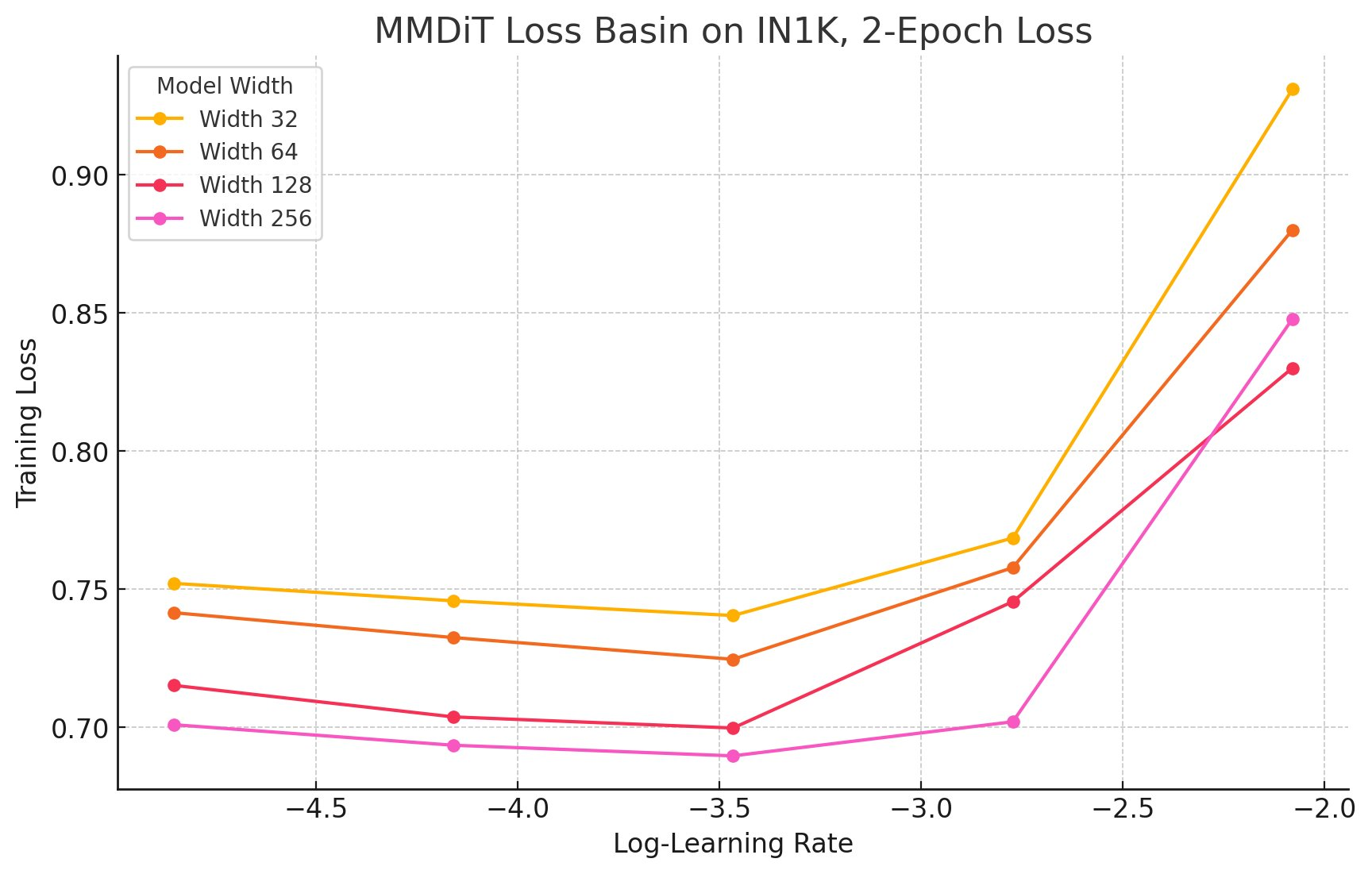

bash run.sh

to train the model. This will train on ImageNet from scratch and do a muP grid search to find the aligned basin of the loss function. With that, you unlock zero-shot LR transfer for Rectified Flow models!
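As a rough illustration of what "aligned basin" means, the toy sketch below sweeps a log-spaced learning-rate grid over a few widths and checks whether the best LR is the same at every width. Everything in it is hypothetical: `toy_loss` is a hand-built stand-in for "train a model of this width at this LR and report the loss", deliberately constructed so that its minimum does not move with width, and none of the names or grid values come from run.sh.

```python
import math

def toy_loss(width, lr, best_lr=1e-3):
    """Hypothetical stand-in for a real training run at (width, lr)."""
    # Bowl in log-LR space whose minimum does not move with width,
    # plus a term that makes wider models strictly better.
    return (math.log10(lr) - math.log10(best_lr)) ** 2 + 1.0 / width

def lr_grid(low_exp=-5, high_exp=-1):
    """Log-spaced learning rates: 1e-5, 1e-4, ..., 1e-1."""
    return [10.0 ** e for e in range(low_exp, high_exp + 1)]

def best_lr_per_width(widths, lrs, loss_fn):
    """For each width, return the grid LR with the lowest loss."""
    return {w: min(lrs, key=lambda lr: loss_fn(w, lr)) for w in widths}

widths = [64, 128, 256]
best = best_lr_per_width(widths, lr_grid(), toy_loss)
# "Aligned" here means every width picks the same LR from the grid.
aligned = len(set(best.values())) == 1
```

When the basins line up like this, the LR found on the cheapest width can be reused on the widest model without another sweep, which is the point of zero-shot LR transfer.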

This uses multiple techniques and codebases I have developed over the year. It's a natural mixture of min-max-IN-dit, min-max-gpt, and ez-muP.

If you use this material, please cite this repository with the following:

@misc{ryu2024minrf,

author = {Simo Ryu},

title = {minRF: Minimal Implementation of Scalable Rectified Flow Transformers},

year = 2024,

publisher = {Github},

url = {https://github.com/cloneofsimo/minRF},

}