
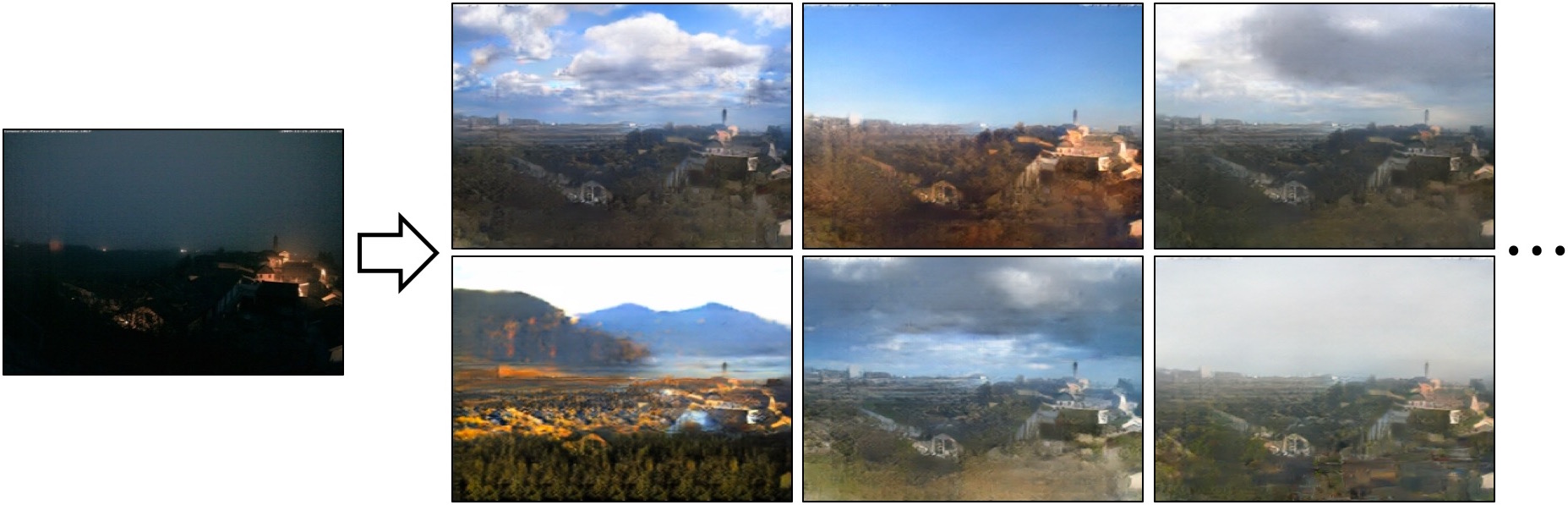

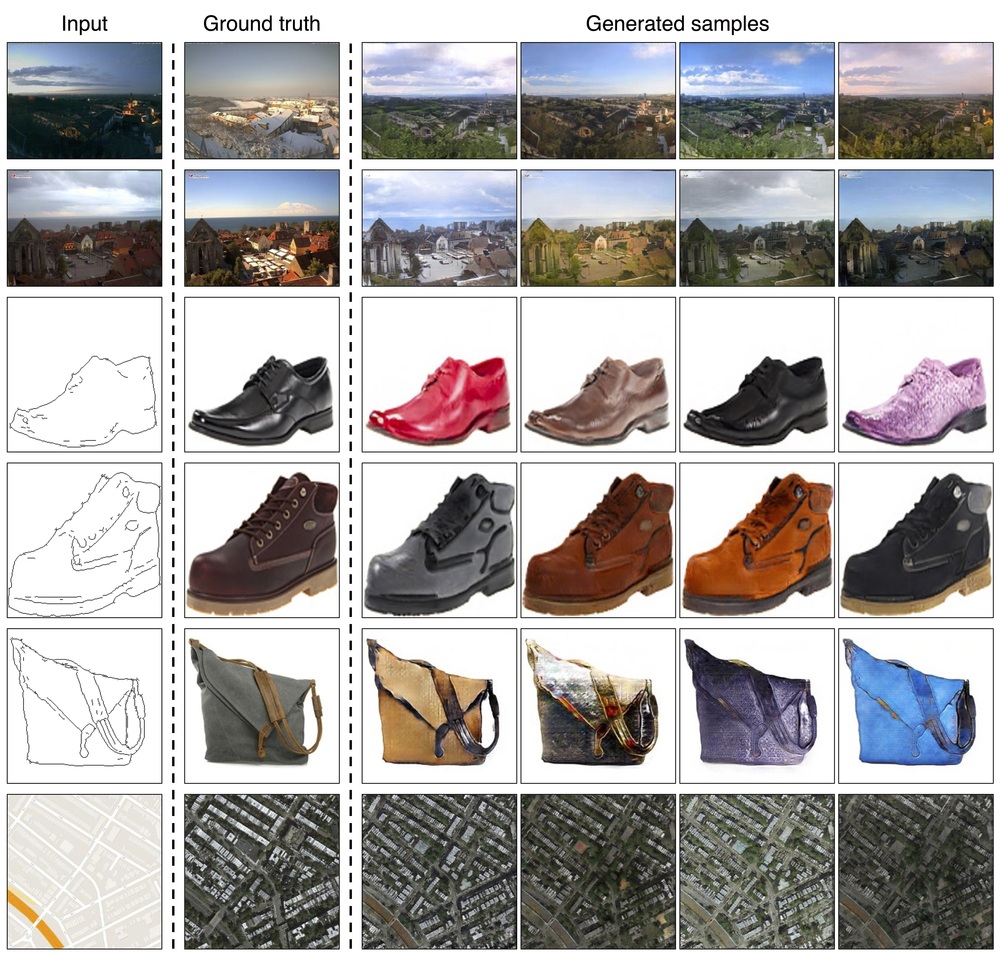

PyTorch implementation of multimodal image-to-image translation. For example, given the same night image, our model can synthesize several plausible day images with different types of lighting, sky, and clouds. Training requires paired data.
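To make the one-to-many idea concrete, here is a minimal sketch, assuming a generator that takes an input image together with a low-dimensional latent vector `z` drawn from a standard Gaussian. The `toy_generator` function, the latent size `nz=8`, and the sample count are hypothetical stand-ins, not this repository's API. The point is only that one input paired with different `z` values yields different outputs.

```python
import random
from typing import Callable, List, Sequence

Image = List[float]   # toy "image": a flat list of pixel values
Latent = List[float]  # latent vector z


def sample_latent(nz: int, rng: random.Random) -> Latent:
    """Draw one latent vector z ~ N(0, I) of dimension nz."""
    return [rng.gauss(0.0, 1.0) for _ in range(nz)]


def toy_generator(image: Sequence[float], z: Sequence[float]) -> Image:
    """Hypothetical stand-in for a trained generator G(A, z).

    It shifts every pixel by the mean of z, so different latent vectors
    produce different outputs for the same input.
    """
    shift = sum(z) / len(z)
    return [p + shift for p in image]


def multimodal_outputs(
    generator: Callable[[Sequence[float], Sequence[float]], Image],
    image: Sequence[float],
    n_samples: int,
    nz: int = 8,
    seed: int = 0,
) -> List[Image]:
    """Translate one input into n_samples outputs, one per random latent vector."""
    rng = random.Random(seed)
    return [generator(image, sample_latent(nz, rng)) for _ in range(n_samples)]


night = [0.1, 0.2, 0.3]  # the same "night" input is used for every sample
days = multimodal_outputs(toy_generator, night, n_samples=3)
for day in days:
    print([round(p, 3) for p in day])
```

In the real model the generator is a neural network. Here it is deliberately trivial so the example runs without PyTorch.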

Toward Multimodal Image-to-Image Translation.

Jun-Yan Zhu, Richard Zhang, Deepak Pathak, Trevor Darrell, Alexei A. Efros, Oliver Wang, Eli Shechtman.

UC Berkeley and Adobe Research

In NIPS, 2017.

- Linux or macOS

- Python 2 or 3

- CPU or NVIDIA GPU + CUDA cuDNN

- Clone this repo:

git clone -b master --single-branch https://github.com/junyanz/BicycleGAN.git

cd BicycleGAN

- Install PyTorch and dependencies from http://pytorch.org

- Install the Python libraries visdom, dominate, and moviepy.

For pip users:

bash ./scripts/install_pip.sh

For conda users:

bash ./scripts/install_conda.sh

- Download some test photos (e.g. edges2shoes):

bash ./datasets/download_testset.sh edges2shoes

- Download a pre-trained model (e.g. edges2shoes):

bash ./pretrained_models/download_model.sh edges2shoes

- Generate results with the model:

bash ./scripts/test_shoes.sh

The test results will be saved to an HTML file: ./results/edges2shoes/val/index.html.

- Generate results with synchronized latent vectors:

bash ./scripts/test_shoes.sh --sync

Results can be found at ./results/edges2shoes/val_sync/index.html.
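The difference between the two test modes can be sketched as follows, assuming that `--sync` makes every input image reuse the same set of latent vectors (so output k of every input uses the same `z`), while the default draws fresh vectors for each input. The function `latents_for_inputs` and its parameters are hypothetical and only illustrate the idea; they are not the script's actual code.

```python
import random
from typing import List

Latent = List[float]


def latents_for_inputs(
    num_inputs: int, n_samples: int, nz: int, sync: bool, seed: int = 0
) -> List[List[Latent]]:
    """Hypothetical helper: the latent vectors used to translate each input image.

    sync=True : draw n_samples vectors once and share them across all inputs,
                so output k of every input uses the same latent vector.
    sync=False: draw an independent set of n_samples vectors for every input.
    """
    rng = random.Random(seed)

    def draw_set() -> List[Latent]:
        return [[rng.gauss(0.0, 1.0) for _ in range(nz)] for _ in range(n_samples)]

    if sync:
        shared = draw_set()
        # The same list of vectors is reused for every input image.
        return [shared for _ in range(num_inputs)]
    return [draw_set() for _ in range(num_inputs)]


synced = latents_for_inputs(num_inputs=4, n_samples=5, nz=8, sync=True)
unsynced = latents_for_inputs(num_inputs=4, n_samples=5, nz=8, sync=False)
print(all(zs == synced[0] for zs in synced))      # True: every input shares the same z's
print(all(zs == unsynced[0] for zs in unsynced))  # False: each input gets its own z's
```

Sharing the latent vectors makes it possible to check whether a given `z` has a consistent effect (for example, a similar color) across different inputs.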

- Generate videos with the model:

bash ./scripts/video_shoes.sh

Results can be found at ./videos/edges2shoes/.
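A common way for a multimodal model to produce a video is to interpolate between latent vectors and render one frame per intermediate vector. The sketch below assumes that approach, using plain linear interpolation. Whether `video_shoes.sh` uses linear interpolation, spherical interpolation, or something else is not stated here, and `lerp` and `interpolate_latents` are hypothetical helpers.

```python
import random
from typing import List

Latent = List[float]


def lerp(a: Latent, b: Latent, t: float) -> Latent:
    """Linearly interpolate between latent vectors a and b (t=0 gives a, t=1 gives b)."""
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def interpolate_latents(keyframes: List[Latent], frames_per_segment: int) -> List[Latent]:
    """Hypothetical helper: build a smooth latent path through the keyframes.

    Each consecutive pair contributes frames_per_segment vectors (starting at the
    first keyframe of the pair); the final keyframe is appended at the end.
    """
    path: List[Latent] = []
    for a, b in zip(keyframes, keyframes[1:]):
        for i in range(frames_per_segment):
            path.append(lerp(a, b, i / frames_per_segment))
    path.append(keyframes[-1])
    return path


rng = random.Random(0)
nz = 8
keyframes = [[rng.gauss(0.0, 1.0) for _ in range(nz)] for _ in range(3)]
path = interpolate_latents(keyframes, frames_per_segment=10)
print(len(path))  # 21: 2 segments x 10 frames + the final keyframe
# Each vector would then be fed to the generator together with the same input
# image, and the outputs written out as consecutive video frames.
```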

Training code is coming soon! Training requires paired input-output images (similar to pix2pix). We are currently merging our internal code with the public pix2pix/CycleGAN codebase and retraining the models with the new code.
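In pix2pix-style datasets, each training example is usually stored as a single image with the input (A) and the target (B) placed side by side. Assuming this repository follows the same convention, with A on the left and B on the right, splitting a pair looks roughly like the sketch below. Images are modeled as nested lists of pixel rows so the example runs without an imaging library, and `split_ab` is a hypothetical helper rather than the repository's data loader.

```python
from typing import List, Tuple

Row = List[int]
Image = List[Row]  # an image as a list of pixel rows


def split_ab(ab: Image) -> Tuple[Image, Image]:
    """Hypothetical helper: split a side-by-side paired image into (A, B).

    Assumes the left half of every row is the input A and the right half
    is the target B, with both halves of equal width.
    """
    width = len(ab[0])
    if width % 2 != 0:
        raise ValueError("paired image width must be even")
    half = width // 2
    a = [row[:half] for row in ab]
    b = [row[half:] for row in ab]
    return a, b


# A 2x4 paired image: columns 0-1 hold the input, columns 2-3 the target.
pair = [
    [1, 2, 9, 8],
    [3, 4, 7, 6],
]
a, b = split_ab(pair)
print(a)  # [[1, 2], [3, 4]]
print(b)  # [[9, 8], [7, 6]]
```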

Download the datasets using the following scripts. Many of the datasets were collected by other researchers. Please cite their papers if you use the data.

- Download the test set:

bash ./datasets/download_testset.sh dataset_name

- Download the training and test sets:

bash ./datasets/download_dataset.sh dataset_name

Available datasets:

- facades: 400 images from the CMP Facades dataset. [Citation]
- maps: 1096 training images scraped from Google Maps.
- edges2shoes: 50k training images from the UT Zappos50K dataset. Edges are computed by the HED edge detector + post-processing. [Citation]
- edges2handbags: 137K Amazon handbag images from the iGAN project. Edges are computed by the HED edge detector + post-processing. [Citation]

Download the pre-trained models with the following script.

bash ./pretrained_models/download_model.sh model_name

- edges2shoes (edge -> photo): trained on the UT Zappos50K dataset.

More models are coming soon!

If you find this useful for your research, please cite our paper using the following BibTeX entry.

@incollection{zhu2017multimodal,

title = {Toward Multimodal Image-to-Image Translation},

author = {Zhu, Jun-Yan and Zhang, Richard and Pathak, Deepak and Darrell, Trevor and Efros, Alexei A and Wang, Oliver and Shechtman, Eli},

booktitle = {Advances in Neural Information Processing Systems 30},

year = {2017},

}

This code borrows heavily from the pytorch-CycleGAN-and-pix2pix repository.