- This is a Keras implementation of the DCGAN model, trained with 3 different loss functions:
  - Vanilla GAN loss
  - Wasserstein GAN (WGAN, weight clipping)
  - Wasserstein GAN with Gradient Penalty (WGAN-GP)
- The goal is to show the differences between these loss functions in terms of training stability and speed of convergence.
- Motivated by the paper Improved Training of Wasserstein GANs.
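The three loss functions listed above can be sketched in TensorFlow/Keras as follows. This is a minimal illustration, not the repo's code: the function names are hypothetical, the default clip value of 0.01 is the one used in the original WGAN paper, and `gradient_penalty` assumes a critic that maps a 4-D image batch (batch, height, width, channels) to one unbounded score per image.

```python
import tensorflow as tf

# Vanilla GAN loss: binary cross-entropy on raw (logit) discriminator outputs.
bce = tf.keras.losses.BinaryCrossentropy(from_logits=True)

def vanilla_d_loss(real_logits, fake_logits):
    # The discriminator wants real -> 1 and fake -> 0.
    real_loss = bce(tf.ones_like(real_logits), real_logits)
    fake_loss = bce(tf.zeros_like(fake_logits), fake_logits)
    return real_loss + fake_loss

def vanilla_g_loss(fake_logits):
    # Non-saturating generator loss: the generator wants fake -> 1.
    return bce(tf.ones_like(fake_logits), fake_logits)

def wgan_critic_loss(real_scores, fake_scores):
    # The critic minimizes E[D(fake)] - E[D(real)].
    return tf.reduce_mean(fake_scores) - tf.reduce_mean(real_scores)

def wgan_g_loss(fake_scores):
    # The generator tries to raise the critic's score on fakes.
    return -tf.reduce_mean(fake_scores)

def clip_critic_weights(critic, clip_value=0.01):
    # WGAN (weight clipping): clamp every critic weight after each critic update.
    for w in critic.trainable_weights:
        w.assign(tf.clip_by_value(w, -clip_value, clip_value))

def gradient_penalty(critic, real_images, fake_images):
    # WGAN-GP: mean of (||grad_x D(x_hat)||_2 - 1)^2 at random interpolates x_hat.
    batch_size = tf.shape(real_images)[0]
    eps = tf.random.uniform([batch_size, 1, 1, 1], 0.0, 1.0)
    interpolated = eps * real_images + (1.0 - eps) * fake_images
    with tf.GradientTape() as tape:
        tape.watch(interpolated)
        scores = critic(interpolated, training=True)
    grads = tape.gradient(scores, interpolated)
    # Per-sample L2 norm over height, width and channels (small constant avoids sqrt(0)).
    norms = tf.sqrt(tf.reduce_sum(tf.square(grads), axis=[1, 2, 3]) + 1e-12)
    return tf.reduce_mean(tf.square(norms - 1.0))
```

For WGAN-GP the critic loss becomes `wgan_critic_loss(real, fake) + lambda_gp * gradient_penalty(critic, real_images, fake_images)`, with `lambda_gp = 10` in the Improved Training of Wasserstein GANs paper. `clip_critic_weights` is only needed for the weight-clipping variant.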

- To install the repo requirements, run `pip install -r requirements.txt`.

- The `config.yaml` file decides which method is used:
  - Vanilla GAN
  - Wasserstein GAN (Weight Clipping)
  - Wasserstein GAN (Gradient Penalty)
- It also decides which optimizer is used:
  - Adam with decay
  - Adam
  - RMSProp
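A hypothetical sketch of how the method and optimizer choices in `config.yaml` could be mapped to Keras objects. The key names (`method`, `optimizer`, `learning_rate`) and the option strings are assumptions, not the repo's actual schema, and "Adam with decay" is expressed here as an exponential learning-rate schedule; the repo may use a different decay rule.

```python
import tensorflow as tf

# Hypothetical option names; the repo's config.yaml may spell these differently.
METHODS = ("vanilla_gan", "wgan", "wgan_gp")
OPTIMIZERS = ("adam_with_decay", "adam", "rmsprop")

def build_optimizer(name, learning_rate=1e-4, decay_steps=10_000, decay_rate=0.96):
    """Return a Keras optimizer for one of the three optimizer options."""
    if name == "adam_with_decay":
        # lr(step) = learning_rate * decay_rate ** (step / decay_steps), decaying smoothly.
        schedule = tf.keras.optimizers.schedules.ExponentialDecay(
            initial_learning_rate=learning_rate,
            decay_steps=decay_steps,
            decay_rate=decay_rate,
        )
        return tf.keras.optimizers.Adam(learning_rate=schedule)
    if name == "adam":
        return tf.keras.optimizers.Adam(learning_rate=learning_rate)
    if name == "rmsprop":
        return tf.keras.optimizers.RMSprop(learning_rate=learning_rate)
    raise ValueError(f"unknown optimizer {name!r}; expected one of {OPTIMIZERS}")

def read_settings(config):
    """Validate a parsed config dict and return (method, optimizer)."""
    method = config["method"]
    if method not in METHODS:
        raise ValueError(f"unknown method {method!r}; expected one of {METHODS}")
    optimizer = build_optimizer(config["optimizer"], config.get("learning_rate", 1e-4))
    return method, optimizer
```

`read_settings` expects the kind of dict that `yaml.safe_load` would return for `config.yaml`. The original WGAN paper trained the weight-clipped critic with RMSProp, while WGAN-GP used Adam, which is presumably why both optimizers are offered.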

- To run the code: `python3 code/main.py`
- What to expect?
  - First, you need the dataset downloaded (don't worry, everything is explained in the next section).
  - A folder named after the method selected in `config.yaml`, following the pattern `f'{method_name}_running_dir'`, will be created. It will contain:
    - TensorBoard logs
    - Sampled images
    - The model and its weights
    - Model summaries and visualizations
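The run folder described above could be created with a few lines of standard-library Python. This sketch is an assumption about the layout: the subfolder names (`logs`, `samples`, `models`, `summaries`) are hypothetical; only the `f'{method_name}_running_dir'` pattern comes from this README.

```python
import os

# Hypothetical subfolder names for the four kinds of output listed above.
SUBFOLDERS = ("logs", "samples", "models", "summaries")

def make_running_dir(method_name, root="."):
    """Create f'{method_name}_running_dir' and its subfolders; return their paths."""
    run_dir = os.path.join(root, f"{method_name}_running_dir")
    subdirs = {name: os.path.join(run_dir, name) for name in SUBFOLDERS}
    for path in subdirs.values():
        # exist_ok=True lets a run resume without failing on existing folders.
        os.makedirs(path, exist_ok=True)
    return run_dir, subdirs
```

A TensorBoard writer can then point at the logs folder, for example `tf.summary.create_file_writer(subdirs["logs"])`.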

- Datasets

  - For the Vanilla GAN and the Wasserstein GAN (weight clipping), I used the "MNIST" dataset, with images of shape 28 x 28 x 1.
  - For the Wasserstein GAN (Gradient Penalty), I used the "CelebA" dataset.
  - The dataset used in the paper (the LSUN Bedrooms training set) is 42.77 GB, and on Colab it was impractical to download and unzip it every time the runtime disconnected.

  - How to download CelebA:
    - Access the CelebA authors' folder on Google Drive, then download the file `img_align_celeba.zip`.
    - Unzip it to `./data/train/`.


  - The CelebA images were resized to a target shape of 64 x 64.
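A minimal `tf.data` sketch for loading the unzipped CelebA images at 64 x 64. It assumes the archive extracts to `./data/train/img_align_celeba/` as JPEG files and that pixels are scaled to [-1, 1] to match a `tanh` generator output; both are assumptions rather than details taken from the repo, and `make_dataset` is a hypothetical helper name.

```python
import tensorflow as tf

IMAGE_SIZE = 64  # target size stated in this README

def preprocess(image):
    """Resize one decoded image to 64 x 64 and scale pixels from [0, 255] to [-1, 1]."""
    image = tf.image.resize(tf.cast(image, tf.float32), [IMAGE_SIZE, IMAGE_SIZE])
    return image / 127.5 - 1.0

def load_file(path):
    # Read and decode one JPEG as a uint8 (height, width, 3) tensor.
    return tf.io.decode_jpeg(tf.io.read_file(path), channels=3)

def make_dataset(pattern="./data/train/img_align_celeba/*.jpg", batch_size=64):
    """Hypothetical loader: assumes the zip extracts into img_align_celeba/."""
    files = tf.data.Dataset.list_files(pattern, shuffle=True)
    images = files.map(lambda p: preprocess(load_file(p)),
                       num_parallel_calls=tf.data.AUTOTUNE)
    # drop_remainder keeps every batch the same size, which simplifies the GAN step.
    return images.batch(batch_size, drop_remainder=True).prefetch(tf.data.AUTOTUNE)
```

The aligned CelebA images are 178 x 218, so resizing straight to 64 x 64 slightly squashes the faces; a center crop before resizing is a common alternative.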

- I implemented the DCGAN architecture as described in the paper, with some modifications.
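A sketch of a DCGAN-style generator and critic for 64 x 64 x 3 images in Keras, following the usual DCGAN recipe (strided convolutions, batch norm and ReLU in the generator, LeakyReLU in the critic, `tanh` output). Layer widths and `LATENT_DIM` are illustrative assumptions, not the repo's actual values. The critic deliberately has no batch normalization: the WGAN-GP paper penalizes each sample's gradient independently and advises against batch norm in the critic.

```python
import tensorflow as tf
from tensorflow.keras import layers

LATENT_DIM = 128  # hypothetical noise size

def build_generator(latent_dim=LATENT_DIM):
    # Project noise to 4x4x512, then upsample 4 -> 8 -> 16 -> 32 -> 64.
    blocks = [tf.keras.Input(shape=(latent_dim,)),
              layers.Dense(4 * 4 * 512, use_bias=False),
              layers.BatchNormalization(),
              layers.ReLU(),
              layers.Reshape((4, 4, 512))]
    for filters in (256, 128, 64):
        blocks += [layers.Conv2DTranspose(filters, 4, strides=2, padding="same", use_bias=False),
                   layers.BatchNormalization(),
                   layers.ReLU()]
    # tanh keeps outputs in [-1, 1], matching images scaled to that range.
    blocks.append(layers.Conv2DTranspose(3, 4, strides=2, padding="same", activation="tanh"))
    return tf.keras.Sequential(blocks, name="generator")

def build_critic(image_shape=(64, 64, 3)):
    # Strided convolutions downsample 64 -> 32 -> 16 -> 8 -> 4; no batch norm.
    blocks = [tf.keras.Input(shape=image_shape)]
    for filters in (64, 128, 256, 512):
        blocks += [layers.Conv2D(filters, 4, strides=2, padding="same"),
                   layers.LeakyReLU(0.2)]
    # One unbounded score per image (no sigmoid), as a Wasserstein critic needs.
    blocks += [layers.Flatten(), layers.Dense(1)]
    return tf.keras.Sequential(blocks, name="critic")
```

For the vanilla GAN the same critic network can serve as the discriminator if its single output is treated as a logit, for example with `BinaryCrossentropy(from_logits=True)`.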

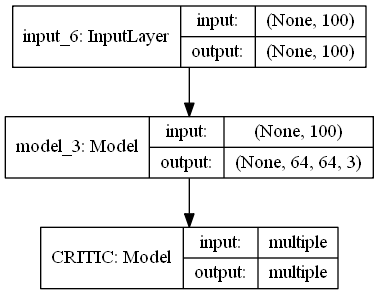

The WGAN-GP Model:

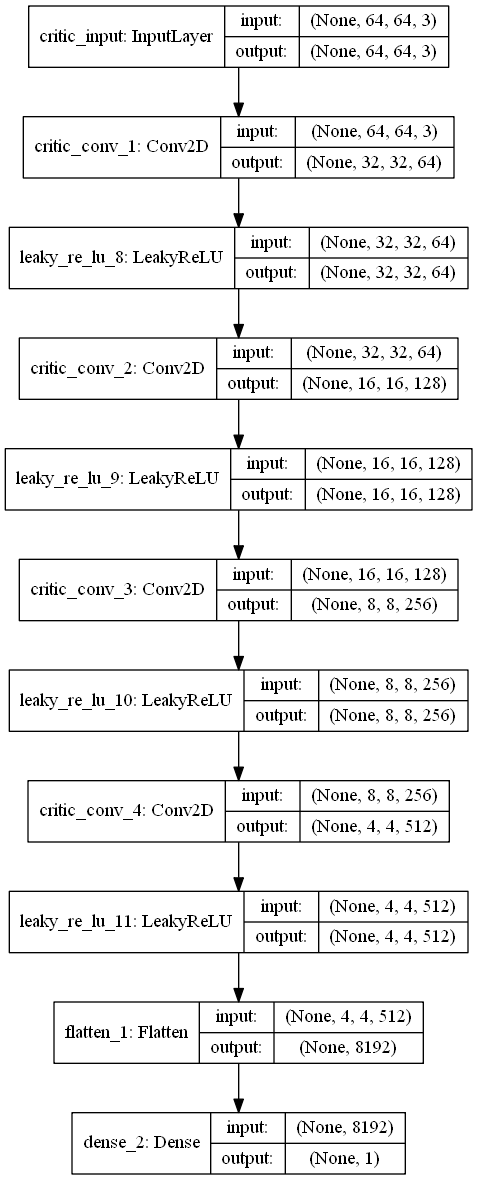

Its Discriminator:

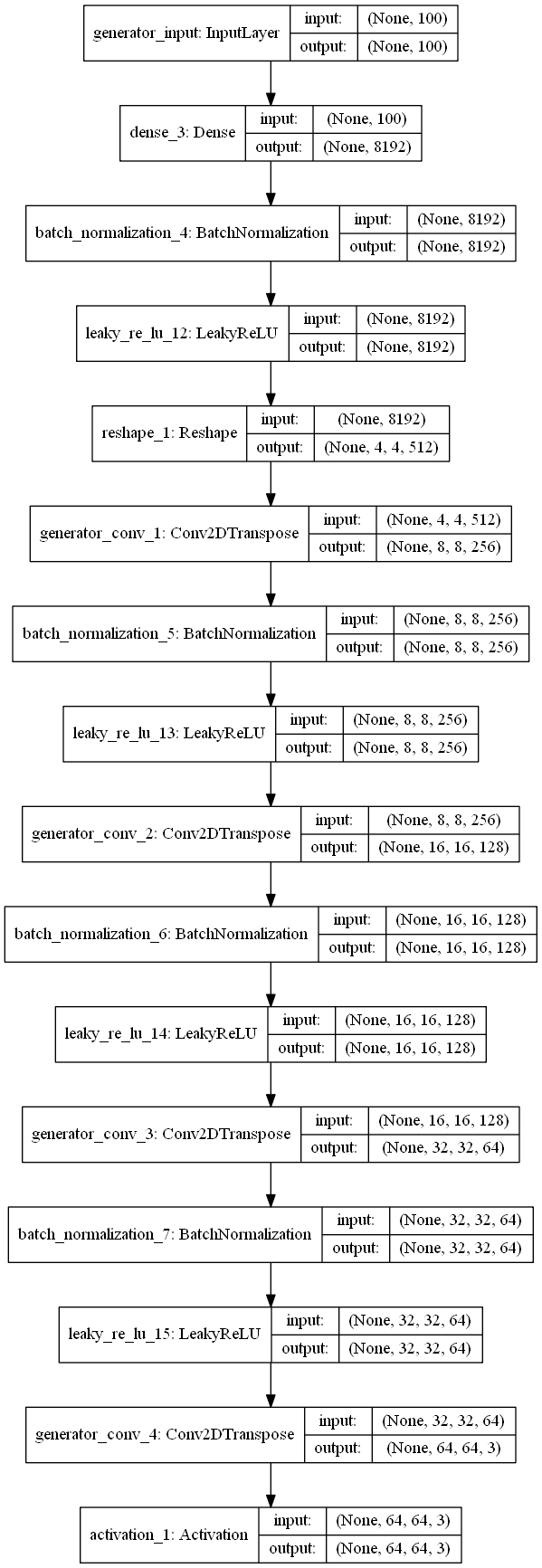

Its Generator:

- To be added soon

- To be added soon