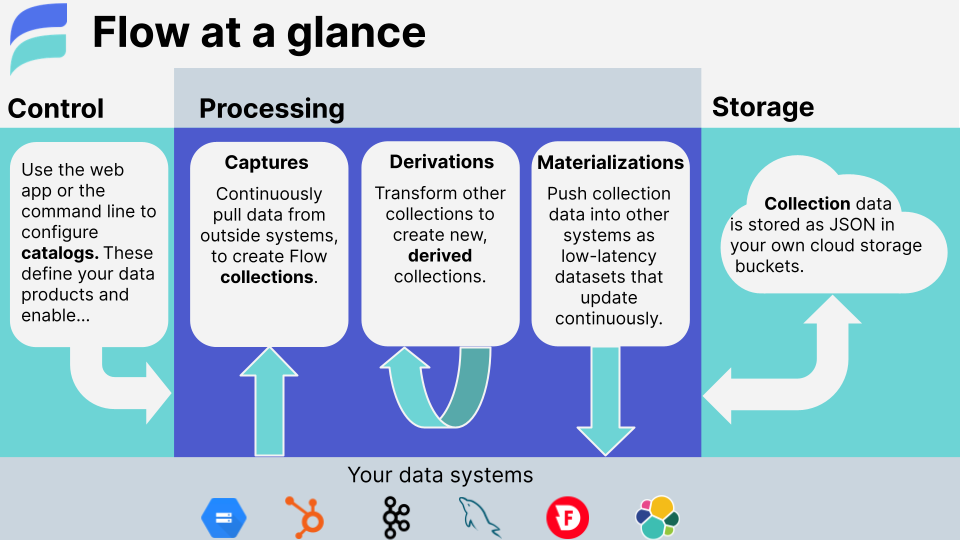

Estuary Flow is a DataOps platform that integrates all of the systems you use to produce, process, and consume data.

Flow unifies today's batch and streaming paradigms so that your systems – current and future – are synchronized around the same datasets, updating in milliseconds.

With a Flow pipeline, you:

- 📷 Capture data from your systems, services, and SaaS into collections: millisecond-latency datasets that are stored as regular files of JSON data, right in your cloud storage bucket.

- 🎯 Materialize a collection as a view within another system, such as a database, key/value store, Webhook API, or pub/sub service.

- 🌊 Derive new collections by transforming from other collections, using the full gamut of stateful stream workflows, joins, and aggregations, all in real time.

Flow combines a low-code UI for essential workflows with a CLI for fine-grained control over your pipelines. Together, the two interfaces make up Flow's unified platform. You can switch seamlessly between them as you build and refine your pipelines, and collaborate with a wider range of data stakeholders.

- The UI-based web application is at dashboard.estuary.dev.

- The flowctl CLI can be downloaded per these instructions.

➡️ Sign up for a free Flow account here.

See the BSL license for information on using Flow outside the managed offering.

🧐 Examples and tutorials

- Documentation tutorials

- Blog & GitHub tutorials

- Many examples are available in the `examples/` directory of this repo, covering a range of use cases and techniques.

The best (and fastest) way to get support from the Estuary team is to join the community on Slack.

You can also email us.

Captures and materializations use connectors: pluggable components that integrate Flow with external data systems. Estuary's in-house connectors focus on high-scale technology systems and change data capture (think databases, pub/sub, and filestores).

Flow can run Airbyte community connectors using airbyte-to-flow, allowing us to support a greater variety of SaaS systems.

See our website for the full list of currently supported connectors.

If you don't see what you need, request it here.

Flow builds on Gazette, a real-time streaming broker created by the same founding team.

Because of this, Flow collections are both a batch dataset – they're stored as a structured "data lake" of general-purpose files in cloud storage – and a stream, able to commit new documents and forward them to readers within milliseconds. New use cases read directly from cloud storage for high-scale backfills of history, and seamlessly transition to low-latency streaming on reaching the present.
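As a rough illustration of that batch-then-stream read pattern, here is a minimal Python sketch. It is not Flow's or Gazette's actual client API: `read_collection`, the in-memory `history` lists (standing in for JSON files in cloud storage), and the `live` iterator (standing in for the low-latency stream) are all hypothetical names chosen for this example.

```python
import json
from typing import Iterable, Iterator


def read_collection(
    history_files: Iterable[Iterable[str]], live: Iterable[str]
) -> Iterator[dict]:
    """Yield a collection's documents in order: stored history first, then live data."""
    # Backfill phase: each "file" is an iterable of JSON lines, standing in
    # for a regular file of JSON documents in a cloud storage bucket.
    for lines in history_files:
        for line in lines:
            if line.strip():
                yield json.loads(line)
    # Having reached the present, continue from the (hypothetical) live stream.
    for line in live:
        yield json.loads(line)


# Hypothetical sample data: two historical files and one newly committed document.
history = [
    ['{"id": 1, "v": "a"}', '{"id": 2, "v": "b"}'],
    ['{"id": 3, "v": "c"}'],
]
live = iter(['{"id": 4, "v": "d"}'])

docs = list(read_collection(history, live))
print([d["id"] for d in docs])  # prints [1, 2, 3, 4]
```

The point of the sketch is that the reader sees a single ordered sequence of documents and does not need to know where the history ends and the stream begins.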

Flow mixes a variety of architectural techniques to achieve great throughput without adding latency:

- Optimistic pipelining, using the natural back-pressure of systems to which data is committed.

- Leveraging `reduce` annotations to group collection documents by key wherever possible, in memory, before writing them out.

- Co-locating derivation states (registers) with derivation compute: registers live in an embedded RocksDB that's replicated for durability and machine re-assignment. They update in memory and only write out at transaction boundaries.

- Vectorizing the work done in external Remote Procedure Calls (RPCs) and even process-internal operations.

- Marrying the development velocity of Go with the raw performance of Rust, using a zero-copy CGO service channel.
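To make the idea of grouping documents by key in memory more concrete, here is a small Python sketch of combining before writing. It is only an illustration under stated assumptions, not Flow's implementation: the `combine` function, the `REDUCERS` table, and the strategy names used here (`sum`, `maximize`, `lastWriteWins`) are hypothetical stand-ins for whatever a collection's `reduce` annotations declare.

```python
from typing import Callable, Dict, Hashable, Iterable, List

# Hypothetical reduction strategies, keyed by name (assumed for this sketch).
REDUCERS: Dict[str, Callable] = {
    "sum": lambda left, right: left + right,
    "maximize": lambda left, right: max(left, right),
    "lastWriteWins": lambda left, right: right,
}


def combine(
    docs: Iterable[dict], key: str, strategies: Dict[str, str]
) -> List[dict]:
    """Reduce documents sharing a key into one document each, entirely in memory."""
    combined: Dict[Hashable, dict] = {}
    for doc in docs:
        k = doc[key]
        if k not in combined:
            # First document for this key: copy it as the accumulator.
            combined[k] = dict(doc)
            continue
        acc = combined[k]
        for field, value in doc.items():
            if field == key:
                continue
            # Fields without a declared strategy fall back to last-write-wins.
            reducer = REDUCERS[strategies.get(field, "lastWriteWins")]
            acc[field] = reducer(acc[field], value) if field in acc else value
    return list(combined.values())


# Hypothetical sample data: three input documents over two keys.
docs = [
    {"user": "a", "clicks": 1, "last_seen": 10},
    {"user": "b", "clicks": 2, "last_seen": 5},
    {"user": "a", "clicks": 3, "last_seen": 7},
]
out = combine(docs, key="user", strategies={"clicks": "sum", "last_seen": "maximize"})
print(out)
```

Here three input documents become two output documents, so fewer writes reach the downstream system. The same effect compounds when many updates to a hot key arrive within one transaction.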