Unofficial PyTorch implementation of Momentum Contrast for Unsupervised Visual Representation Learning (Paper).

This repository was developed in the following environment.

- Python 3.6.2

- PyTorch 1.4.0

- torchvision 0.5.0

- Pillow (PIL) 7.0.0

- matplotlib 2.0.2

- PyYAML 3.12 (Optional)

Note that torchvision < 0.5.0 does not work with PIL == 7.0.0 (link). To use torchvision < 0.5.0, you need PIL < 7.0.0.

Download the dataset and untar it. This creates a subdirectory for each class containing the images that belong to that class.
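The version constraint above can be checked before installing anything. The helper below is a minimal sketch and is not part of this repository: `parse_version` and `is_supported_pair` are hypothetical names, and the parsing assumes plain dotted numeric version strings such as `0.5.0`.

```python
def parse_version(version):
    """Turn '0.5.0' into (0, 5, 0); assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


def is_supported_pair(torchvision_version, pillow_version):
    """Return False for the combination the note warns about:
    torchvision < 0.5.0 together with PIL >= 7.0.0."""
    old_torchvision = parse_version(torchvision_version) < (0, 5, 0)
    new_pillow = parse_version(pillow_version) >= (7, 0, 0)
    return not (old_torchvision and new_pillow)
```

For example, `is_supported_pair("0.4.2", "7.0.0")` is rejected, while the environment listed above (`"0.5.0"` with `"7.0.0"`) passes.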

cd YOUR_ROOT

wget http://www.image-net.org/challenges/LSVRC/2012/nnoupb/ILSVRC2012_img_train.tar

tar xvf ILSVRC2012_img_train.tar

As a result, the subdirectories for the training dataset will be located in YOUR_ROOT/ILSVRC/Data/CLS-LOC/train.

You can download the STL-10 dataset from here, or just use torchvision. This repository handles the STL-10 dataset via torchvision. Please check dataloader.py for details.

All results in this repository were produced with 6 NVIDIA TITAN Xp GPUs. Multi-GPU training is necessary for the best performance.

You can train a ResNet-50 encoder in a self-supervised manner on the ImageNet dataset by running the command below.

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5 python train.py --dataset_root=YOUR_ROOT/ILSVRC/Data/CLS-LOC/train --shuffle_bn

The training outputs, such as the loss graph and the encoder weights, are saved in MoCo/output/IMAGENET-64/v1. You can change this location with the arguments --output_root, --dataset_name, and --exp_version.

If you trained the model by running the command above, you can evaluate the pretrained encoder on the STL-10 dataset by running the command below. You can designate the checkpoint to load with --load_pretrained_epoch.
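The output location appears to be composed from the three arguments named above. The sketch below only illustrates that layout. The function name `experiment_dir` is hypothetical, and the default values are assumptions inferred from the path MoCo/output/IMAGENET-64/v1, not read from train.py.

```python
from pathlib import PurePosixPath


def experiment_dir(output_root="MoCo/output", dataset_name="IMAGENET-64", exp_version="v1"):
    """Compose <output_root>/<dataset_name>/<exp_version> (assumed layout)."""
    return PurePosixPath(output_root) / dataset_name / exp_version


print(experiment_dir())                  # MoCo/output/IMAGENET-64/v1
print(experiment_dir(exp_version="M3"))  # MoCo/output/IMAGENET-64/M3
```

Under this assumption, changing only --exp_version keeps separate runs side by side in the same dataset directory.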

CUDA_VISIBLE_DEVICES=0 python test.py --dataset_root=YOUR_ROOT/STL-10 --load_pretrained_epoch=100

This command trains a linear feature classifier on the STL-10 dataset, using the feature vectors from the pretrained encoder as inputs. After training the classifier, it calculates the classification accuracy and plots a graph. If you trained the encoder with the command above without changing anything, this command automatically loads the pretrained weights from MoCo/output/IMAGENET-64/v1 and saves the test results, including the loss graph, accuracy graph, and weights of the linear classifier, in MoCo/output/IMAGENET-64/v1/eval. If you changed any of --output_root, --dataset_name, or --exp_version when training, change --encoder_output_root, --encoder_dataset_name, and --encoder_exp_version accordingly when testing.

The results below show the effectiveness of the main contributions of the paper. The performance can likely be improved further by tuning the data augmentation, increasing the number of keys, or changing the backbone model.
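The linear evaluation described above can be sketched as follows. This is a minimal illustration of training a linear classifier on frozen encoder features, not the code in test.py: the function names, the SGD optimizer, and the hyperparameters are assumptions, and everything runs on the CPU for simplicity.

```python
import torch
import torch.nn as nn


def train_linear_probe(encoder, feature_dim, num_classes, loader, epochs=1, lr=0.1):
    """Train a linear classifier on top of a frozen encoder."""
    encoder.eval()
    for param in encoder.parameters():
        param.requires_grad = False  # the pretrained encoder stays fixed

    classifier = nn.Linear(feature_dim, num_classes)
    optimizer = torch.optim.SGD(classifier.parameters(), lr=lr)
    criterion = nn.CrossEntropyLoss()

    for _ in range(epochs):
        for images, labels in loader:
            with torch.no_grad():
                features = encoder(images).flatten(start_dim=1)
            loss = criterion(classifier(features), labels)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
    return classifier


@torch.no_grad()
def accuracy(encoder, classifier, loader):
    """Fraction of samples whose predicted class matches the label."""
    correct, total = 0, 0
    for images, labels in loader:
        features = encoder(images).flatten(start_dim=1)
        predictions = classifier(features).argmax(dim=1)
        correct += (predictions == labels).sum().item()
        total += labels.numel()
    return correct / total
```

For STL-10, `num_classes` would be 10. `loader` can be any iterable that yields `(images, labels)` batches, for example a `torch.utils.data.DataLoader`.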
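For readers unfamiliar with the role of the keys, the contrastive loss in the MoCo paper compares each query with one positive key and a queue of negative keys. The sketch below follows the pseudocode in the paper rather than this repository's code. The function name, the tensor layout, and the default temperature of 0.07 (the value used in the paper) are assumptions here.

```python
import torch
import torch.nn.functional as F


def moco_contrastive_loss(q, k, queue, temperature=0.07):
    """InfoNCE loss with one positive key per query and K queued negatives.

    q:     (N, C) query features
    k:     (N, C) key features of the same images (positives)
    queue: (C, K) features of earlier keys (negatives), assumed L2-normalized
    """
    q = F.normalize(q, dim=1)
    k = F.normalize(k, dim=1).detach()  # no gradient flows to the keys

    l_pos = torch.einsum("nc,nc->n", q, k).unsqueeze(1)    # (N, 1)
    l_neg = torch.einsum("nc,ck->nk", q, queue.detach())   # (N, K)

    logits = torch.cat([l_pos, l_neg], dim=1) / temperature
    # The positive key sits at index 0 of every row.
    labels = torch.zeros(logits.size(0), dtype=torch.long, device=logits.device)
    return F.cross_entropy(logits, labels)
```

Increasing the number of keys corresponds to a larger `K`, that is, more columns in `queue`.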

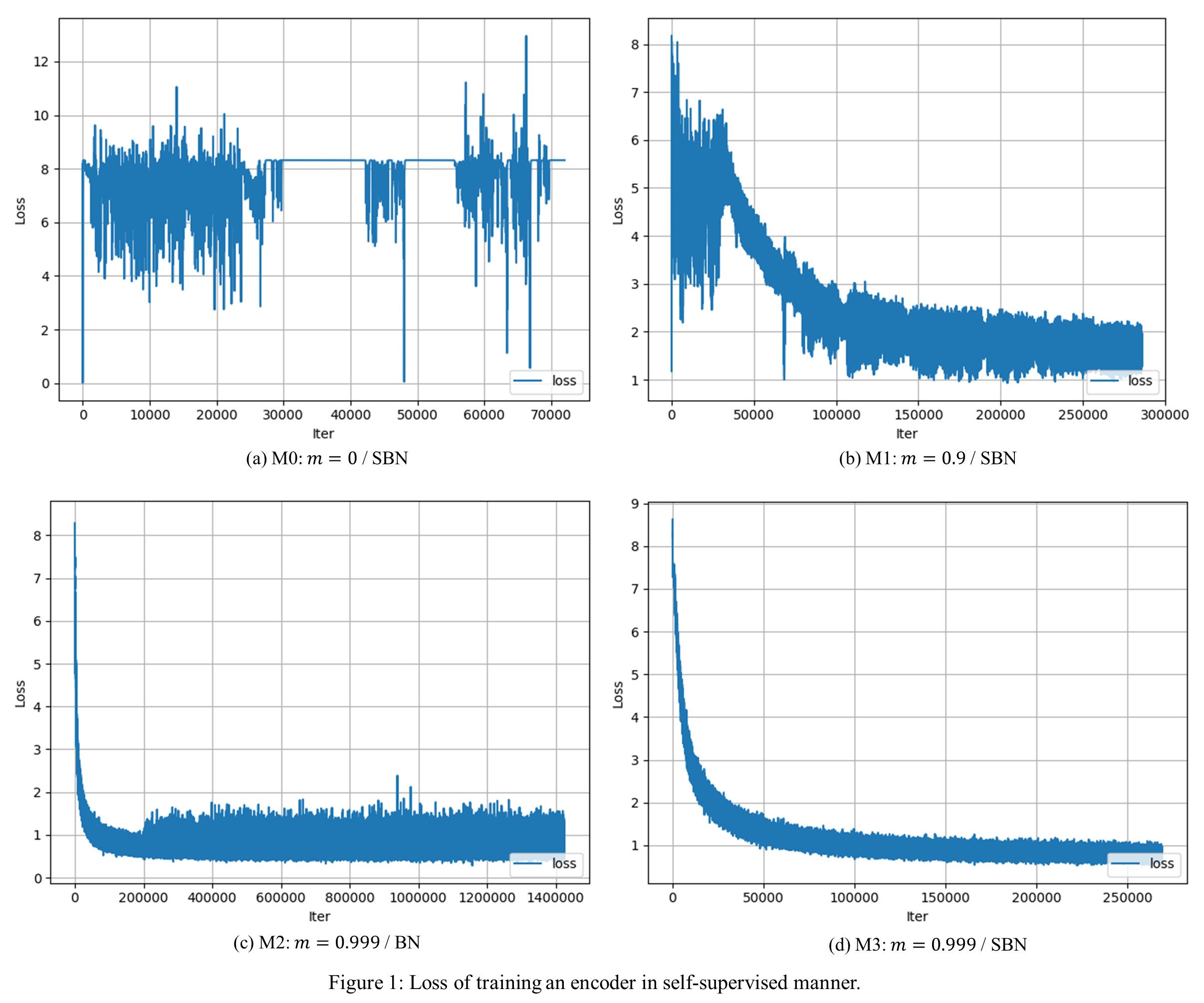

The results were produced with four models. The models differ in the momentum value (m) and in whether they use shuffled batch norm (SBN) or plain batch norm (BN). The descriptions below show each setting with its training command.

- M0: m = 0 / SBN

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5 python train.py --dataset_root=YOUR_ROOT/ILSVRC/Data/CLS-LOC/train --exp_version=M0 --momentum=0 --shuffle_bn

- M1: m = 0.9 / SBN

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5 python train.py --dataset_root=YOUR_ROOT/ILSVRC/Data/CLS-LOC/train --exp_version=M1 --momentum=0.9 --shuffle_bn

- M2: m = 0.999 / BN

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5 python train.py --dataset_root=YOUR_ROOT/ILSVRC/Data/CLS-LOC/train --exp_version=M2 --momentum=0.999

- M3: m = 0.999 / SBN

CUDA_VISIBLE_DEVICES=0,1,2,3,4,5 python train.py --dataset_root=YOUR_ROOT/ILSVRC/Data/CLS-LOC/train --exp_version=M3 --momentum=0.999 --shuffle_bn

- M0 will not converge because it does not have momentum. The training loss will oscillate. See "Ablation: momentum" in Section 4.1 of the paper.

- M1 will converge, but M3 will reach higher classification accuracy than M1 because its higher momentum value yields a more consistent dictionary. See "Ablation: momentum" in Section 4.1 of the paper.

- M3 will reach higher classification accuracy than M2 because of shuffled batch norm. See "Shuffling BN" in Section 3.3 of the paper.

- In Fig. 1a, M0 does not converge. This shows the importance of a consistent dictionary for convergence. Even with shuffled batch norm, the model cannot converge without a consistent dictionary. M0 was stopped early because it did not appear to converge.

- In Fig. 1b and 1d, M3 trains more stably than M1. This shows the importance of a consistent dictionary.

- In Fig. 1c and 1d, M2 converges, but M3 converges to a lower loss value than M2. Note that the x axis of Fig. 1c spans 1400K iterations and that of Fig. 1d spans 250K iterations. Although M3 is trained for fewer iterations, it converges better than M2.

- Therefore, Fig. 1 shows that a consistent dictionary is the core of training. Shuffled batch norm can improve the training, but it is not the core.
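The consistent dictionary discussed above comes from updating the key encoder as a moving average of the query encoder, theta_k <- m * theta_k + (1 - m) * theta_q, as described in the paper. The sketch below illustrates that update. It is not taken from train.py, and the function names are hypothetical.

```python
import copy

import torch
import torch.nn as nn


def make_key_encoder(query_encoder):
    """Start the key encoder as a frozen copy of the query encoder."""
    key_encoder = copy.deepcopy(query_encoder)
    for param in key_encoder.parameters():
        param.requires_grad = False  # updated by momentum, not by gradients
    return key_encoder


@torch.no_grad()
def momentum_update(query_encoder, key_encoder, m):
    """theta_k <- m * theta_k + (1 - m) * theta_q for every parameter."""
    for param_q, param_k in zip(query_encoder.parameters(), key_encoder.parameters()):
        param_k.copy_(m * param_k + (1.0 - m) * param_q)
```

With `m = 0` the key encoder simply copies the query encoder at every step, which matches the M0 setting. With `m = 0.999` it changes very slowly, so keys encoded at different steps stay comparable.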

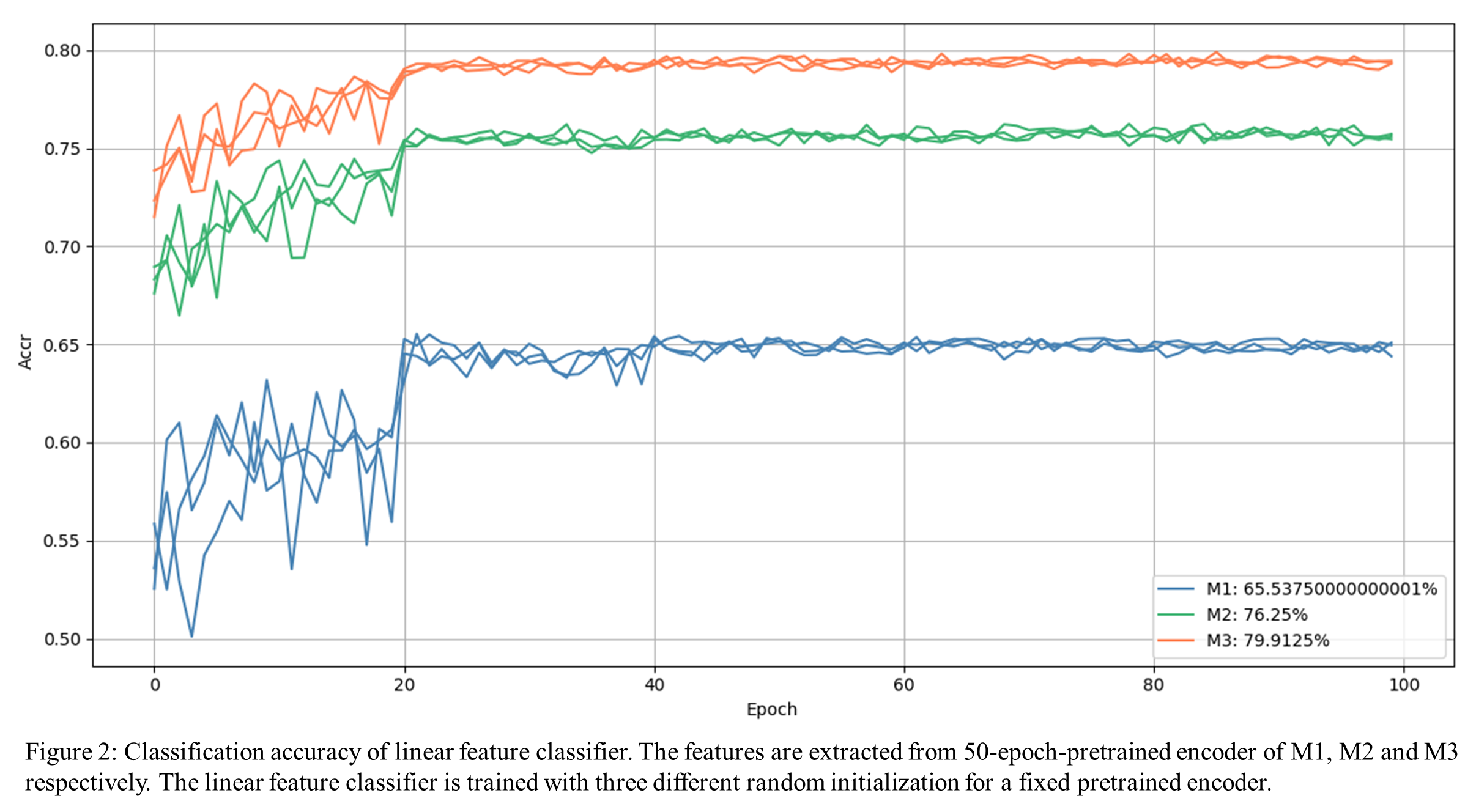

After you train M1, M2, and M3 by running the commands above, you can plot the graph in Fig. 2 by running

CUDA_VISIBLE_DEVICES=0 python visualize.py --dataset_root=YOUR_ROOT/STL-10 --multiple_encoder_exp_version M1 M2 M3

- M0 is excluded because it does not converge.

- M2 records much higher classification accuracy than M1. Per the settings listed above, M2 uses a higher m (0.999 vs. 0.9) and plain BN instead of SBN, so the gain comes from the higher m despite the lack of shuffled batch norm. This shows the importance of a consistent dictionary.

- M3 records higher classification accuracy than M2, but the gap between M3 and M2 is not as large as that between M2 and M1. Furthermore, the model does not converge without momentum, as shown by M0. Therefore, a consistent dictionary is necessary, and shuffled batch norm can further improve the performance.
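Shuffled batch norm, as described in Section 3.3 of the paper, shuffles the sample order across GPUs before the key encoder and restores the order afterwards. The sketch below shows only the permutation bookkeeping on a single tensor. The function names are hypothetical, and how --shuffle_bn is actually implemented in this repository is not shown here.

```python
import torch


def shuffle_batch(x):
    """Randomly permute the batch dimension.

    Returns the shuffled tensor and the index that undoes the shuffle.
    """
    perm = torch.randperm(x.size(0), device=x.device)
    inverse = torch.argsort(perm)
    return x[perm], inverse


def unshuffle_batch(x, inverse):
    """Restore the original sample order."""
    return x[inverse]
```

A plausible use, assuming the batch is split into contiguous chunks per GPU, is `shuffled, inverse = shuffle_batch(images_k)`, then `k = unshuffle_batch(key_encoder(shuffled), inverse)`, so that the samples sharing batch norm statistics in the key encoder differ from those in the query encoder.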

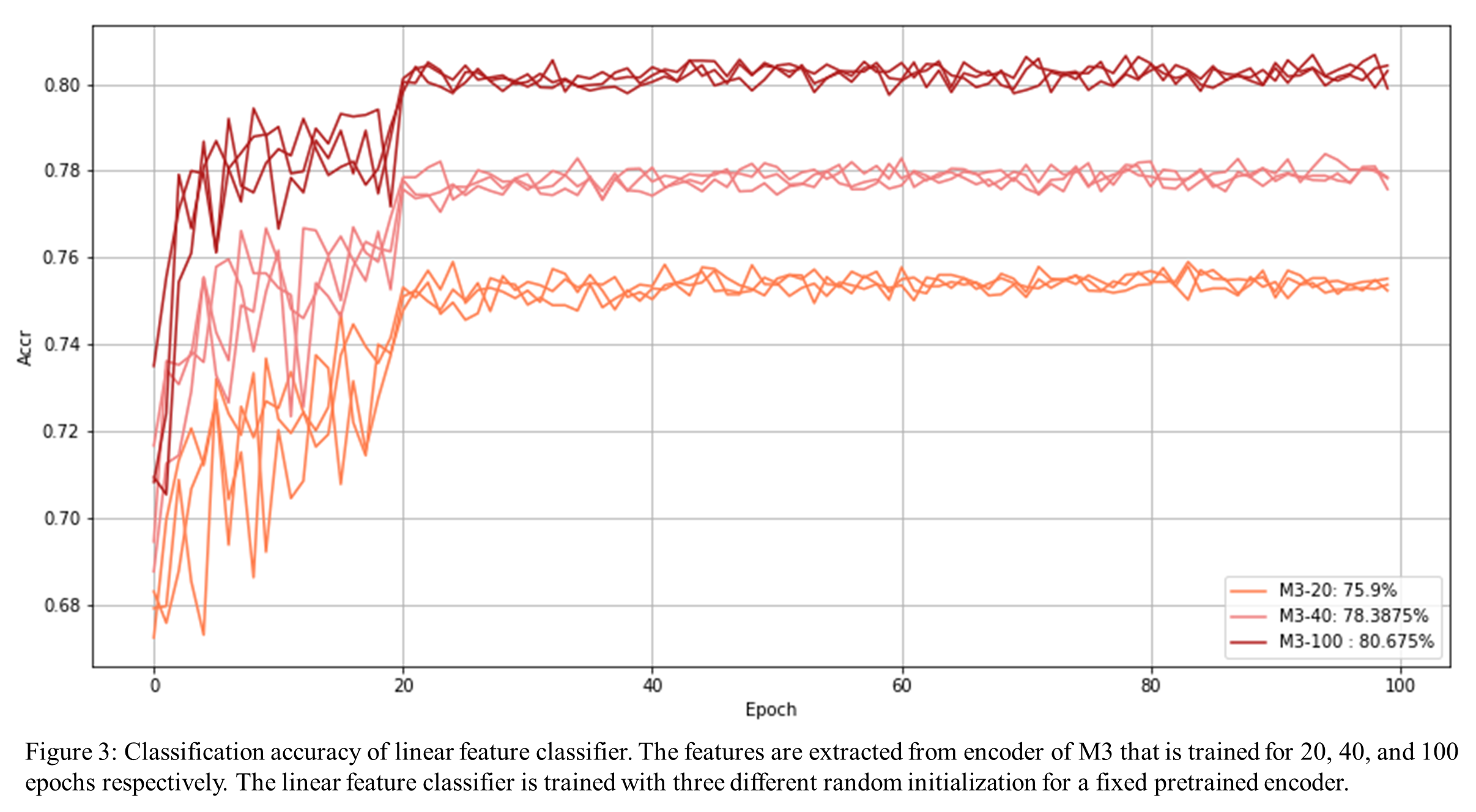

- Fig. 3 shows the feature classification accuracy obtained with the pretrained encoder of M3. It includes one graph for each number of epochs M3 was trained for. Training is 50% complete and still in progress. Currently, M3-100 (M3 trained for 100 epochs) shows the best performance.