This is a practical example of a data engineering project built around real estate data. The accompanying blog post, Building a Data Engineering Project in 20 Minutes, is available on my website. The topics covered are:

- Getting the Data – Scraping with BeautifulSoup

- Storing on S3-MinIO

- Custom Change Data Capture (CDC)

- Adding Database features to S3 – Delta Lake & Spark

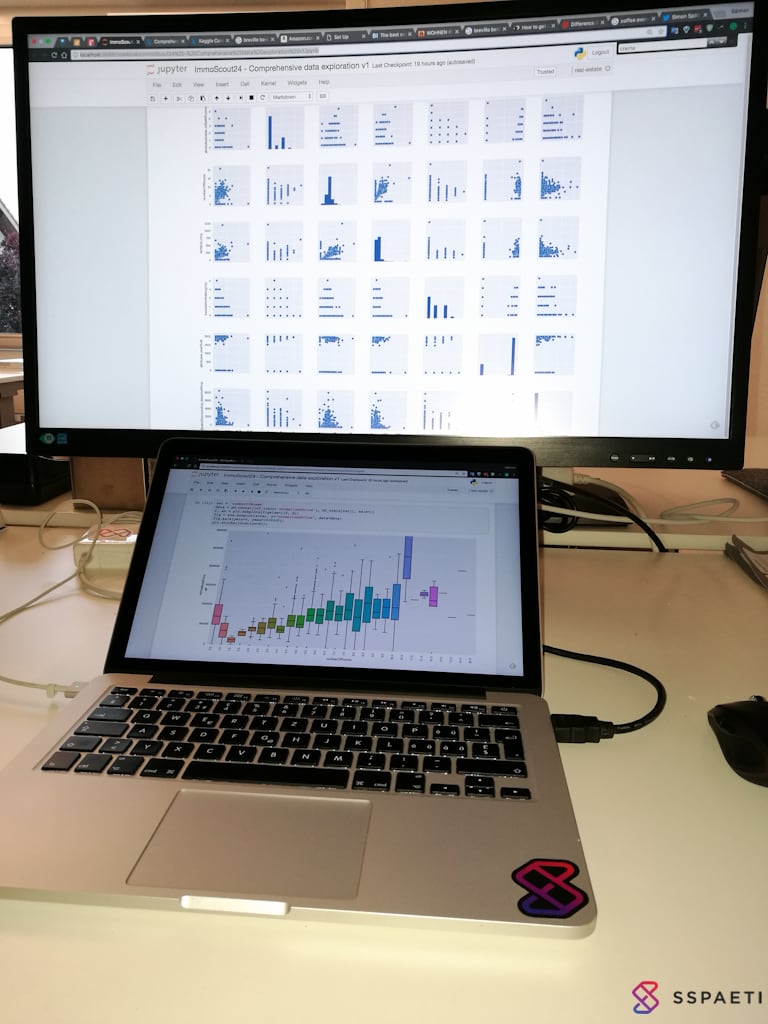

- Machine Learning part – Jupyter Notebook

- Ingesting into a data warehouse for low latency – Apache Druid

- The UI with Dashboards and more – Apache Superset

- Orchestrating everything together – Dagster

- DevOps engine – Kubernetes
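To make the custom CDC step a little more concrete, here is a minimal sketch of one common way to detect changes between scraping runs: fingerprint each listing and compare it with the fingerprint stored from the previous run. This is an illustration only, not the project's actual code; the field names (`id`, `price`, `rooms`, `area`) and the helpers `fingerprint` and `detect_changes` are hypothetical.

```python
import hashlib
import json


def fingerprint(listing: dict) -> str:
    """Hash the fields that matter for change detection (hypothetical field names)."""
    relevant = {key: listing.get(key) for key in ("price", "rooms", "area")}
    payload = json.dumps(relevant, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def detect_changes(known: dict, scraped: list) -> list:
    """Return scraped listings that are new or whose fingerprint changed.

    `known` maps listing id -> fingerprint recorded during the previous run.
    """
    changed = []
    for listing in scraped:
        if known.get(listing["id"]) != fingerprint(listing):
            changed.append(listing)
    return changed


# State from the previous run (made-up sample data).
previous = [{"id": "a1", "price": 500000, "rooms": 3, "area": 80}]
known = {item["id"]: fingerprint(item) for item in previous}

# Result of the current scrape (made-up sample data).
scraped = [
    {"id": "a1", "price": 480000, "rooms": 3, "area": 80},   # price changed
    {"id": "b2", "price": 750000, "rooms": 4, "area": 120},  # new listing
]
changed_ids = [item["id"] for item in detect_changes(known, scraped)]
print(changed_ids)
```

Only the listings returned by `detect_changes` would need to be downloaded and written downstream, which keeps repeated scraping runs cheap.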

You can find the status of the project here.

To get MinIO, Spark, Kubernetes, etc. ready, check the respective folder here.

- MinIO started

- Kubernetes ready

- Spark image, role, and namespaces ready

- `cd src/pipelines/real-estate` and start dagit with `dagit`