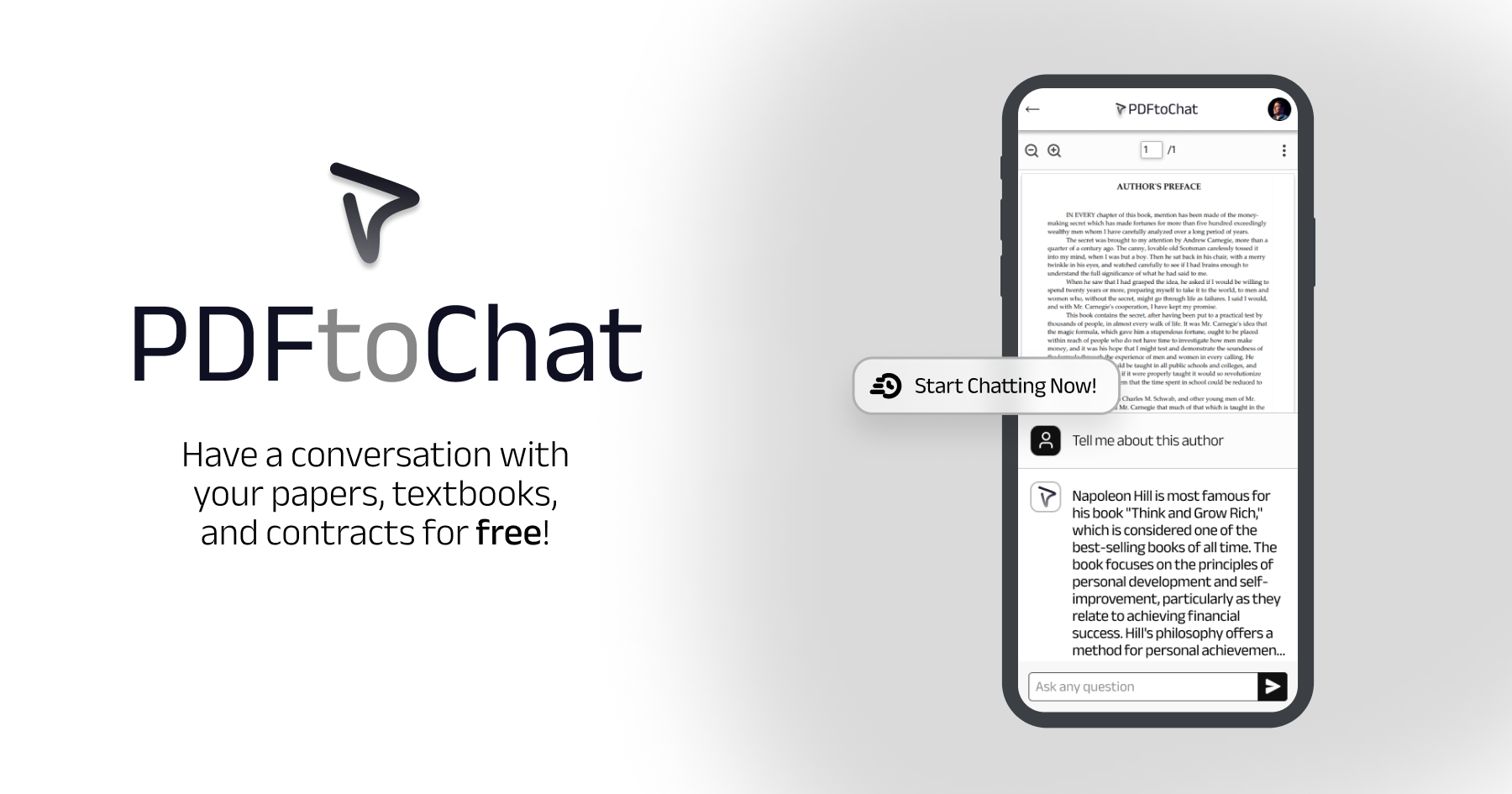

Chat with your PDFs in seconds. Powered by Together AI and Pinecone.

Tech Stack · Deploy Your Own · Common Errors · Credits · Future Tasks

- Next.js App Router for the framework

- Mixtral through Together AI inference for the LLM

- M2 Bert 80M through Together AI for embeddings

- LangChain.js for the RAG code

- Pinecone for the vector database

- Bytescale for the PDF storage

- Vercel for hosting and for the Postgres DB

- Clerk for user authentication

- Tailwind CSS for styling

You can deploy this template to Vercel or any other host. Note that you'll need to:

- Set up Together AI

- Set up Pinecone

- Set up Bytescale

- Set up Clerk

- Set up Vercel

See the `.example.env` file for a list of all the required environment variables.

- Check that you've created an `.env` file that contains your valid (and working) API keys, environment and index name.

- Check that you've set the vector dimensions to `768` and that `index` matches the field specified in your `.env` file.

- Check that you've added a credit card on Together AI if you're hitting rate limiting issues due to the free tier.
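The first two checks above can be automated before you start the app. Here is a minimal sketch, not code from this repo: the variable names in `REQUIRED_ENV_VARS` are hypothetical placeholders (the real list is in `.example.env`), and both helper functions are made up for illustration.

```typescript
// Hypothetical variable names for illustration only;
// the authoritative list lives in .example.env.
const REQUIRED_ENV_VARS = [
  "TOGETHER_API_KEY",
  "PINECONE_API_KEY",
  "PINECONE_ENVIRONMENT",
  "PINECONE_INDEX_NAME",
];

// Vector dimension the Pinecone index is expected to use (from the checklist).
const EXPECTED_DIMENSION = 768;

type Env = Record<string, string | undefined>;

// Returns the names of required variables that are missing or blank.
function findMissingEnvVars(
  env: Env,
  required: string[] = REQUIRED_ENV_VARS
): string[] {
  return required.filter((name) => {
    const value = env[name];
    return value === undefined || value.trim() === "";
  });
}

// Returns true when an embedding has the dimension the index expects.
function hasExpectedDimension(
  embedding: number[],
  expected: number = EXPECTED_DIMENSION
): boolean {
  return embedding.length === expected;
}
```

In a Node or Next.js environment you could call `findMissingEnvVars(process.env)` at startup and log whatever it returns, and run `hasExpectedDimension` on one sample embedding before upserting to Pinecone.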

- Youssef for the design of the app

- Mako for the original RAG repo and inspiration

- Joseph for the LangChain help

- Together AI, Bytescale, Pinecone, and Clerk for sponsoring

These are some future tasks that I have planned. Contributions are welcome!

- Add a trash icon for folks to delete PDFs from the dashboard and implement delete functionality

- Add footer to the dashboard page with a support email so folks can contact me with questions

- Research best practices for chunking and retrieval and play around with them – ideally run benchmarks

- Try out LangSmith for more observability into how the RAG app runs

- Explore best practices for auto scrolling based on other chat apps like ChatGPT

- Do some prompt engineering for Mixtral to make replies as good as possible

- Change the header at the top with something more similar to roomGPT to make it cleaner

- Configure emails on Clerk if I want to eventually add this

- Add demo video to the homepage to demonstrate functionality more easily

- Upgrade to Next.js 14 and fix any issues with that

- Implement clickable sources with more info, similar to Perplexity

- Add analytics to track the number of chats & errors with Vercel Analytics events

- Make some changes to the default Tailwind `prose` to decrease padding

- Add an initial message with sample questions or just add them as bubbles on the page

- Protect API routes by making sure users are signed in before executing chats

- Add an option to get answers as markdown or in regular paragraphs

- Implement something like SWR to automatically revalidate data

- Save chats for each user in the Postgres DB so they can get back to them later

- Clean up and customize how the PDF viewer looks to be very minimal

- Bring up a message to direct folks to compress PDFs if they're beyond 10MB

- Migrate to the latest Bytescale library and use a self-designed custom uploader

- Use a session tracking tool to better understand how folks are using the site

- Add better error handling overall with appropriate toasts when actions fail

- Add support for images in PDFs with something like Nougat

- Add rate limiting for uploads to only allow up to 5-10 uploads a day using Upstash Redis