Guidelines and notes about useful tools to analyze and optimize code.

The idea is to gather the tools that we use to develop high-performance code, with information about how to install and use them. Let's begin by collecting all instructions here and later move them to subfolders for each tool if the readme gets confusing.

Use ccache to speed up compilation. If you compile the same project again and again with small changes, this can save you a lot of time. It is easy to set up: install it via conda or apt-get, and see the manual for the different run modes. For CMake, you can pass it as the compiler launcher:

cmake .. -DCMAKE_INSTALL_PREFIX=$CONDA_PREFIX -DCMAKE_CXX_COMPILER_LAUNCHER=ccache

Use Clang & Ninja to speed up the compilation.

Use mold to speed up linking. For CMake, you can add a link option in CMakeLists.txt:

add_link_options("-fuse-ld=mold")

If you execute compiled experimental code, or run a Python script that calls the corresponding C++ bindings, you may run into segmentation faults. Specific tools can help you fix them.

FIRST: compile in Debug mode

Use either gdb (Linux) or lldb (macOS). The commands specified below work for both.

# Start debugging session (on macOS: lldb <your-executable>)

gdb <your-executable>

# Set breakpoints in two different ways, either on a specific line or on a function

b file.cpp:40

b functionName

# (lldb long form for the function breakpoint: breakpoint set -n functionName)

# start

run

See here for further details.

Core dumps are another way to debug. First, enable core files:

ulimit -c unlimited

Then, view the backtrace in gdb:

gdb path/to/my/executable path/to/coredumpfile

See here for further details.
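When the crashing program is a Python script that calls C++ bindings, the core-file limit can also be raised from inside the script. The sketch below uses the standard `resource` module (Unix only); the helper name `enable_core_dumps` is made up for illustration. It raises the soft limit up to the hard limit, which is the most an unprivileged process can do.

```python
import resource


def enable_core_dumps() -> tuple[int, int]:
    """Raise the soft core-file size limit to the hard limit for this process.

    In-process counterpart of `ulimit -c unlimited`. It cannot exceed the
    hard limit configured on the system.
    """
    _, hard = resource.getrlimit(resource.RLIMIT_CORE)
    resource.setrlimit(resource.RLIMIT_CORE, (hard, hard))
    # Return the (soft, hard) pair that is now in effect.
    return resource.getrlimit(resource.RLIMIT_CORE)


if __name__ == "__main__":
    print(enable_core_dumps())
```

Call it at the top of the script, before importing the bindings, so that the limit is already in place when a crash happens.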

Backward is a beautiful stack trace pretty printer for C++. You need to compile your project with generation of debug symbols enabled, usually -g with clang++ and g++. Add the following code to a source file:

#include <backward.hpp>

namespace backward {

backward::SignalHandling sh;

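}

To debug a Python script that calls C++ bindings, run the Python interpreter under the debugger:

gdb python

# set breakpoints etc.

run your_script.py

Once the program crashes, use bt to show the full backtrace.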

You can spawn a Python interpreter in-context anywhere in your code:

__import__("IPython").embed()

You may add breakpoints in your Python code using:

breakpoint()

For a better debugger than the basic pdb, you may install pdb++ using one of the following commands:

pip install pdbpp # In a pip environment

conda install -c conda-forge pdbpp # In a Conda environment

With pdb++, add breakpoints again with breakpoint(). You may run sticky in the pdb++ environment to toggle sticky mode (which shows the colored code of the whole function), and start a Python interpreter with the interact command.

For debugging code with a graphical interface, check pudb (similar usage).
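Since Python 3.7, `breakpoint()` dispatches to `sys.breakpointhook`, so the debugger can be swapped without touching the call sites. The sketch below points the hook at the first debugger that can be imported. The helper name `use_debugger` and the candidate order are assumptions made for illustration.

```python
import importlib
import sys


def use_debugger(candidates=("pudb", "pdb")) -> str:
    """Point breakpoint() at the first importable debugger and return its name."""
    for module_name in candidates:
        try:
            module = importlib.import_module(module_name)
        except ImportError:
            continue  # not installed, try the next candidate
        # Both pudb and pdb expose a module-level set_trace() function.
        sys.breakpointhook = module.set_trace
        return module_name
    raise RuntimeError(f"none of {candidates} could be imported")


# Usage: call once at start-up, then use breakpoint() as usual.
#   use_debugger()
#   breakpoint()
```

The same effect is available without code changes through the `PYTHONBREAKPOINT` environment variable, for example `PYTHONBREAKPOINT=pudb.set_trace python my_script.py`. Setting `PYTHONBREAKPOINT=0` turns every `breakpoint()` call into a no-op.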

Profiling shows how much time is spent in every function, which can help you find the bottleneck in your code. FlameGraph is a nice visual tool to display your stack traces.

Use Rust-powered flamegraph -> fast

# Install tools needed for analysis like perf

sudo apt-get install linux-tools-common linux-tools-generic linux-tools-`uname -r`

# Install rust on linux or macOS

curl --proto '=https' --tlsv1.3 https://sh.rustup.rs -sSf | sh

# Now you have the Rust package manager cargo and you can do

cargo install flamegraph

# Necessary to allow access to cpu (only once)

echo -1 | sudo tee /proc/sys/kernel/perf_event_paranoid

echo 0 | sudo tee /proc/sys/kernel/kptr_restrict

# Command to produce your flamegraph (use -c for custom options for perf)

flamegraph -o sparse_wrapper.svg -v -- example-cpp

Use classic perl flamegraph

# Install tools needed for analysis like perf

sudo apt-get install linux-tools-common linux-tools-generic linux-tools-`uname -r`

# Clone the repo

git clone https://github.com/brendangregg/FlameGraph.git

# Necessary to allow access to cpu (only once)

echo -1 | sudo tee /proc/sys/kernel/perf_event_paranoid

echo 0 | sudo tee /proc/sys/kernel/kptr_restrict

# Run perf on your executable and create the output report in the current dir

perf record --call-graph dwarf example-cpp

perf script > perf.out

# You can read the report with cat perf.out ..

cd <cloned-flamegraph-repo>

./stackcollapse-perf.pl <location-of-perf.out> > out.folded

./flamegraph.pl out.folded > file.svg

# Now open file.svg in your favorite browser and enjoy the interactive mode

As the process with the default flamegraph repo is quite a pain, you can write your own script like @ManifoldFR in proxDDP.
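A minimal sketch of such a wrapper script, assuming `perf` is on the PATH and the FlameGraph repository has been cloned locally. The function names `build_steps` and `run_steps` are hypothetical, and this is not the proxDDP script. It simply chains the four perl-flamegraph commands, sending each step's standard output to the file the next step reads.

```python
import subprocess
from pathlib import Path


def build_steps(executable: str, flamegraph_repo: str, svg: str = "file.svg"):
    """Return (argv, stdout_file) pairs for the perf -> FlameGraph pipeline."""
    repo = Path(flamegraph_repo)
    return [
        # Writes perf.data in the current directory.
        (["perf", "record", "--call-graph", "dwarf", executable], None),
        (["perf", "script"], "perf.out"),
        ([str(repo / "stackcollapse-perf.pl"), "perf.out"], "out.folded"),
        ([str(repo / "flamegraph.pl"), "out.folded"], svg),
    ]


def run_steps(steps) -> None:
    """Run each step in order and stop at the first failure."""
    for argv, stdout_file in steps:
        if stdout_file is None:
            subprocess.run(argv, check=True)
        else:
            with open(stdout_file, "w") as out:
                subprocess.run(argv, check=True, stdout=out)


# Usage (paths are placeholders):
#   run_steps(build_steps("./example-cpp", "/path/to/FlameGraph"))
```

Keeping the command list separate from the execution makes it easy to print the steps for a dry run before actually profiling.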

Valgrind can automatically detect many memory management and threading bugs.

sudo apt install valgrind

# Use valgrind with input to check for mem leak

valgrind --leak-check=yes myprog arg1 arg2

Check here and doc for further explanation.

leaks is an alternative tool available on macOS to detect memory leaks:

$ leaks -atExit -- myprog

Date/Time: 2024-07-17 17:46:43.948 +0200

Launch Time: 2024-07-17 17:46:42.781 +0200

OS Version: macOS 14.5 (23F79)

Report Version: 7

Analysis Tool: Xcode.app/Contents/Developer/usr/bin/leaks

Analysis Tool Version: Xcode 15.4 (15F31d)

Physical footprint: 4646K

Physical footprint (peak): 4646K

Idle exit: untracked

----

leaks Report Version: 4.0, multi-line stacks

Process 56686: 507 nodes malloced for 47 KB

Process 56686: 0 leaks for 0 total leaked bytes.

Check out here for more information.

AddressSanitizer (aka ASan) is a memory error detector for C/C++. For CMake, you can add the corresponding compiler flags in CMakeLists.txt:

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -fsanitize=address")

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -fsanitize=address")

Use Eigen tools to make sure you are not allocating memory where you do not want to do so -> it is slowing down your program. Check proxqp or here.

The macros defined in ProxQP allow us to do

PROXSUITE_EIGEN_MALLOC_NOT_ALLOWED();

output = superfast_function_without_allocations();

PROXSUITE_EIGEN_MALLOC_ALLOWED();

and if this code is compiled in Debug mode, we will get assertion errors if Eigen allocates memory inside the function.

GUI to check how much memory is allocated in every function when executing a program.

-> Valgrind + KCachegrind

sudo apt-get install valgrind kcachegrind graphviz

valgrind --tool=massif --xtree-memory=full <your-executable>

kcachegrind <output-file-of-previous-cmd>

Python provides a profiler named cProfile. To profile a script, simply add -m cProfile -o profile.prof when running the script, i.e.:

python -m cProfile -o profile.prof my_script.py --my_args

This saves the result in the specified output path (here, profile.prof), which you can then visualize with snakeviz. Install it with pip install snakeviz and then run:

snakeviz profile.prof

This opens a browser tab with an interactive visualization of execution times.
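When no browser is available (for example on a remote machine), the same data can be read as text with the standard `pstats` module. The sketch below profiles a single call in-process and returns the top entries sorted by cumulative time. The names `slow_sum` and `profile_call` are made up for illustration.

```python
import cProfile
import io
import pstats


def slow_sum(n: int) -> int:
    """Deliberately naive loop so that it shows up in the profile."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def profile_call(func, *args, top: int = 5) -> str:
    """Profile one call and return the top entries sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func(*args)
    profiler.disable()

    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(top)
    return buffer.getvalue()


if __name__ == "__main__":
    print(profile_call(slow_sum, 100_000))
    # A dump written with `-o profile.prof` can be inspected the same way:
    # pstats.Stats("profile.prof").sort_stats("cumulative").print_stats(10)
```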

Some very useful advice for optimizing your C++ code that you should keep in mind.

Narrator: "also quite amusing to read..."

A nice online course to get started with C++ explaining most of the basic concepts in c++14/17.

If you have a failing pipeline and would like to try some quick fixes directly on the CI machine, debug-via-ssh is precious. Sign into your account on ngrok (you can use GitHub) and follow the readme to set it up locally (2 min). Copy the token you obtained from ngrok into the secrets section of your repo, and add a password for the SSH connection as another secret of the repo. Copy this into your workflow at the position where you would like to stop:

- name: Start SSH session

  uses: luchihoratiu/debug-via-ssh@main

  with:

    NGROK_AUTH_TOKEN: ${{ secrets.NGROK_AUTH_TOKEN }}

    SSH_PASS: ${{ secrets.SSH_PASS }}

Run the CI and follow the output. Note: the option continue-on-error: true can be very useful to continue a failing workflow until the point where you ssh into it.

lhotari/action-upterm and action-tmate are two alternative GitHub actions with similar functionality that can be run directly in your CI jobs without any setup:

- name: Start SSH session

  uses: lhotari/action-upterm@v1

Consider using the action with limit-access-to-actor: true, to limit access to your account.

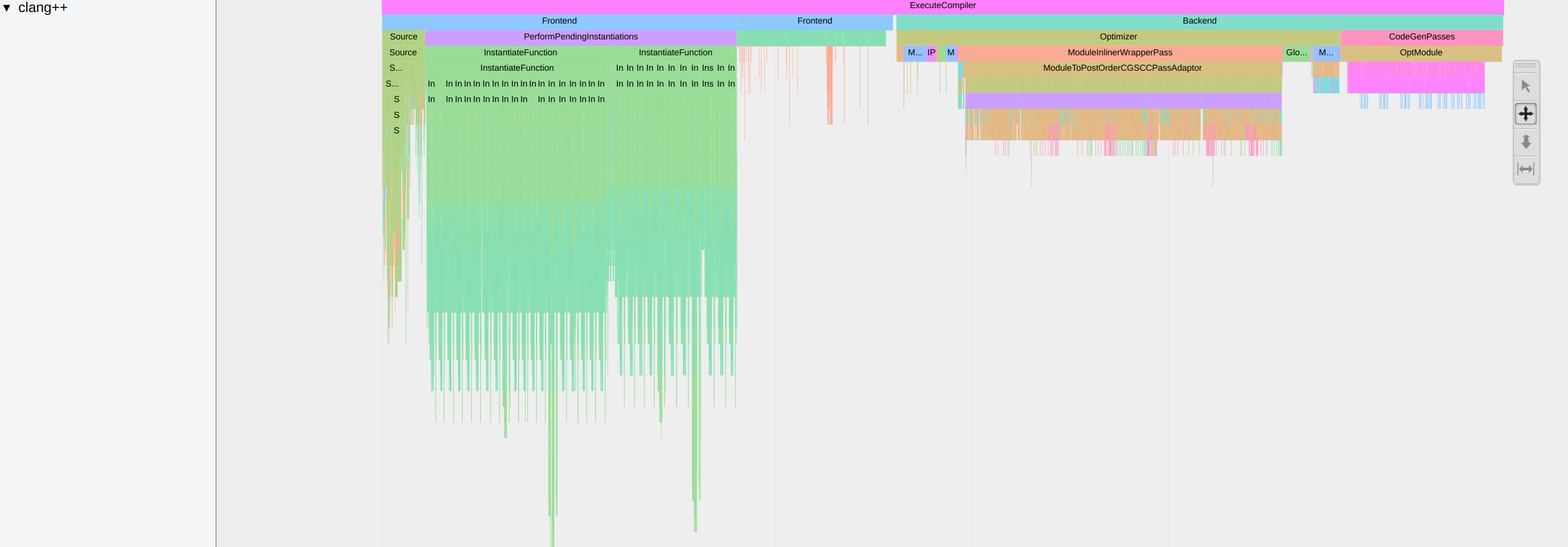

When doing heavy template meta-programming in C++ it can be useful to analyze what part of the code is taking a long time to compile.

Clang can profile compilation time. To activate this feature, add the -ftime-trace option while building.

In a CMake project, you can do this with the following command line:

cmake .. -DCMAKE_CXX_FLAGS="-ftime-trace"

Each .cpp will then produce a .json file. To find them you can use the following command line:

find . -iname "*.json"

Then, you can open the file with the Chromium tracing tool. Open the about:tracing URL in Chromium and load the .json. You will have the following display:

-ftime-trace is only available with clang. With conda, you can install it with conda install clangxx.

Then, when running CMake for the first time use the following command:

CC=clang CXX=clang++ cmake ..
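For a quick look without opening Chromium, the trace files can also be summarized from a script. The sketch below assumes the .json follows the Chrome trace-event layout that the tracing tool loads: a top-level `traceEvents` list whose complete events have `"ph": "X"`, a `name`, a duration `dur` in microseconds, and an optional `args.detail`. The function names are hypothetical.

```python
import json
from collections import defaultdict
from pathlib import Path


def slowest_events(trace: dict, top: int = 10):
    """Sum durations per (name, detail) and return the largest ones in milliseconds."""
    totals = defaultdict(int)
    for event in trace.get("traceEvents", []):
        if event.get("ph") != "X":  # only complete events carry a duration
            continue
        detail = event.get("args", {}).get("detail", "")
        totals[(event["name"], detail)] += event.get("dur", 0)
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(name, detail, dur_us / 1000.0) for (name, detail), dur_us in ranked[:top]]


def load_trace(path) -> dict:
    """Read one time-trace file produced by the compiler."""
    return json.loads(Path(path).read_text())


# Usage (the path is a placeholder):
#   for name, detail, ms in slowest_events(load_trace("build/foo.cpp.json")):
#       print(f"{ms:10.1f} ms  {name}  {detail}")
```

Events in a trace are nested, so the reported times overlap (a header's time includes the headers it pulls in) and should be read as a ranking rather than summed. Note that `find` also matches unrelated .json files in the build tree, which this sketch does not filter out.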