This is the code for our paper Understanding Human Hands in Contact at Internet Scale (CVPR 2020, Oral).

Dandan Shan, Jiaqi Geng*, Michelle Shu*, David F. Fouhey

This repo is the PyTorch implementation of our Hand Object Detector, based on Faster-RCNN.

More information can be found at our:

Prerequisites:

- Python 3.6

- PyTorch 1.0

- CUDA 10.0

Create a new conda environment called handobj, then install PyTorch 1.0.1 and CUDA 10.0:

conda create --name handobj python=3.6

conda activate handobj

conda install pytorch=1.0.1 torchvision cudatoolkit=10.0 -c pytorch
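After the install finishes, it can help to confirm which versions the handobj environment actually picked up. The snippet below is a minimal sketch, not part of this repo: the function name `describe_environment` is hypothetical, and it only reports what it finds rather than enforcing the versions listed above.

```python
import importlib
import sys


def describe_environment():
    """Collect interpreter and (if installed) PyTorch version details."""
    info = {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "torch": None,
        "cuda_build": None,
        "cuda_available": False,
    }
    try:
        torch = importlib.import_module("torch")
    except ImportError:
        return info  # PyTorch is not installed in this environment
    info["torch"] = torch.__version__
    info["cuda_build"] = torch.version.cuda  # None for CPU-only builds
    info["cuda_available"] = torch.cuda.is_available()
    return info


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print("{}: {}".format(key, value))
```

With the setup described above, one would expect the Python entry to read 3.6, the torch entry to start with 1.0, and the CUDA build to read 10.0.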

First, clone the code:

git clone https://github.com/ddshan/hand_object_detector && cd hand_object_detector

Install all the Python dependencies using pip:

pip install -r requirements.txt

Compile the CUDA dependencies using the following commands:

cd lib

python setup.py build develop

PS:

This repo is adapted from faster-rcnn.pytorch (branch pytorch-1.0), so if you have further questions about the environment, the issues in that repo may help.

- Tested on the test set of our 100K and ego datasets:

| Name | Hand | Obj | H+Side | H+State | H+O | All | Model Download Link |
|---|---|---|---|---|---|---|---|
| handobj_100K+ego | 90.4 | 66.3 | 88.4 | 73.2 | 47.6 | 39.8 | faster_rcnn_1_8_132028.pth |
| handobj_100K | 89.8 | 51.5 | 65.8 | 62.4 | 27.9 | 20.9 | faster_rcnn_1_8_89999.pth |

- Tested on the test set of our 100K dataset:

| Name | Hand | Obj | H+Side | H+State | H+O | All |
|---|---|---|---|---|---|---|
| handobj_100K+ego | 89.6 | 64.7 | 79.0 | 63.8 | 45.1 | 36.8 |
| handobj_100K | 89.6 | 64.0 | 78.9 | 64.2 | 46.9 | 38.6 |

- Tested on the test set of our ego dataset:

| Name | Hand | Obj | H+Side | H+State | H+O | All |
|---|---|---|---|---|---|---|
| handobj_100K+ego | 90.5 | 67.2 | 90.0 | 75.0 | 47.4 | 46.3 |
| handobj_100K | 89.8 | 41.7 | 59.5 | 62.8 | 20.3 | 12.7 |

The model handobj_100K is trained on the training set of 100K YouTube frames.

The model handobj_100K+ego is trained on the 100K training set plus additional egocentric data that we annotated, and it works much better on egocentric data.

We provide the frame names of the egocentric data we used here: trainval.txt, test.txt. This split is backwards compatible with Epic-Kitchens2018 (EK), EGTEA, and CharadesEgo (CE).

Prepare and save pascal-voc format data in the data/ folder:

mkdir data

You can download our prepared pascal-voc format data from pascal_voc_format.zip (see more of our downloads on our project and dataset webpage).

Download the pretrained ResNet-101 model from faster-rcnn.pytorch (go to the Pretrained Model section and download ResNet101 from their Dropbox link) and save it as:

data/pretrained_model/resnet101_caffe.pth

At this point, the data/ folder should look like this:

data/
├── pretrained_model
│   └── resnet101_caffe.pth
├── VOCdevkit2007_handobj_100K
│   └── VOC2007
│       ├── Annotations
│       │   └── *.xml
│       ├── ImageSets
│       │   └── Main
│       │       └── *.txt
│       └── JPEGImages
│           └── *.jpg

To train a hand object detector model with resnet101 on pascal_voc format data, run:

CUDA_VISIBLE_DEVICES=0 python trainval_net.py --model_name handobj_100K --log_name=handobj_100K --dataset pascal_voc --net res101 --bs 1 --nw 4 --lr 1e-3 --lr_decay_step 3 --cuda --epoch=10 --use_tfb

To evaluate the detection performance, run:

CUDA_VISIBLE_DEVICES=0 python test_net.py --model_name=handobj_100K --save_name=handobj_100K --cuda --checkepoch=xxx --checkpoint=xxx

Download the models from Google Drive using the links in the table above.

Save the models in the models/ folder:

mkdir models

models
└── res101_handobj_100K
    └── pascal_voc
        └── faster_rcnn_{checksession}_{checkepoch}_{checkpoint}.pth
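The file name pattern above suggests how to fill in the `xxx` placeholders for `--checkepoch` and `--checkpoint`. The sketch below splits a downloaded file name into its three numbers. It assumes the released file names follow the pattern exactly as written, and the helper names `parse_checkpoint_name` and `checkpoint_name` are hypothetical, not functions from this repo.

```python
import re

# Pattern from the tree above: faster_rcnn_{checksession}_{checkepoch}_{checkpoint}.pth
CHECKPOINT_PATTERN = re.compile(r"^faster_rcnn_(\d+)_(\d+)_(\d+)\.pth$")


def parse_checkpoint_name(filename):
    """Split a checkpoint file name into (checksession, checkepoch, checkpoint)."""
    match = CHECKPOINT_PATTERN.match(filename)
    if match is None:
        raise ValueError("unexpected checkpoint name: " + filename)
    return tuple(int(group) for group in match.groups())


def checkpoint_name(checksession, checkepoch, checkpoint):
    """Build the file name back from its three numbers."""
    return "faster_rcnn_{}_{}_{}.pth".format(checksession, checkepoch, checkpoint)


if __name__ == "__main__":
    print(parse_checkpoint_name("faster_rcnn_1_8_132028.pth"))  # (1, 8, 132028)
```

Under this reading, the file faster_rcnn_1_8_132028.pth would correspond to `--checkepoch=8 --checkpoint=132028`; check the argparse defaults in demo.py to confirm how the session number is handled.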

Simple testing:

Put your images in the images/ folder and run the command below. A new folder, images_det, will be created with the visualizations. See demo.py for the remaining argparse parameters.

CUDA_VISIBLE_DEVICES=0 python demo.py --cuda --checkepoch=xxx --checkpoint=xxx

Parameters in demo.py that hold the detection results, which you may need for your task:

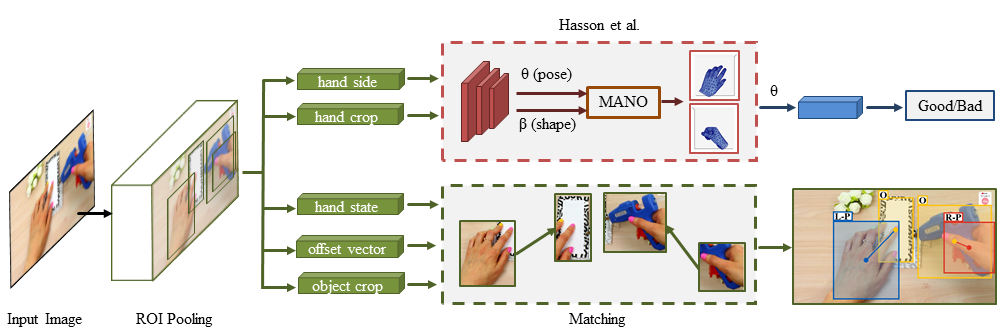

- hand_dets: detected results for hands, [boxes(4), score(1), state(1), offset_vector(3), left/right(1)]

- obj_dets: detected results for object, [boxes(4), score(1), state(1), offset_vector(3), left/right(1)]

We did not train the contact_state, offset_vector and hand_side parts for objects. We keep them only to make the data format consistent, so use only the bbox and confidence score information for objects.
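The ten-column layout described above can be unpacked into named fields as follows. This is a minimal sketch rather than code from demo.py: the `HandDetection` type and `unpack_detection` helper are hypothetical, and the integer-to-letter mappings are an assumption based on the order in which this README lists the labels (N, S, O, P, F and L, R), so verify them against the visualization code before relying on them.

```python
from collections import namedtuple

HandDetection = namedtuple(
    "HandDetection", ["box", "score", "state", "offset_vector", "side"]
)

# ASSUMPTION: integer codes follow the order of the label lists in this README.
STATE_LABELS = {0: "N", 1: "S", 2: "O", 3: "P", 4: "F"}
SIDE_LABELS = {0: "L", 1: "R"}


def unpack_detection(row):
    """Split one row of [boxes(4), score(1), state(1), offset_vector(3), left/right(1)]."""
    values = [float(v) for v in row]
    if len(values) != 10:
        raise ValueError("expected 10 values, got {}".format(len(values)))
    return HandDetection(
        box=tuple(values[0:4]),
        score=values[4],
        state=int(values[5]),
        offset_vector=tuple(values[6:9]),
        side=int(values[9]),
    )


if __name__ == "__main__":
    # Made-up numbers for illustration only, not real detector output.
    example_row = [10, 20, 110, 220, 0.98, 3, 0.1, 0.5, -0.5, 1]
    det = unpack_detection(example_row)
    print(det.box, det.score, STATE_LABELS[det.state], SIDE_LABELS[det.side])
```

For rows taken from obj_dets, only `box` and `score` are meaningful, as noted above.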

Matching:

If needed, use the additional matching.py script to match the detection results in hand_dets and obj_dets.

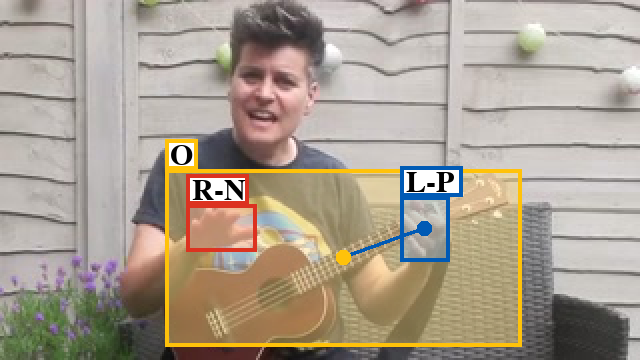

Color definitions:

- yellow: object bbox

- red: right hand bbox

- blue: left hand bbox

Label definitions:

- L: left hand

- R: right hand

- N: no contact

- S: self contact

- O: other person contact

- P: portable object contact

- F: stationary object contact (e.g. furniture)

Limitations:

- Occasional false positives with no people.

- Issues with left/right in egocentric data (please check the egocentric models, which work far better).

- Difficulty parsing the full state with lots of people.

If this work is helpful in your research, please cite:

@INPROCEEDINGS{Shan20,
    author = {Shan, Dandan and Geng, Jiaqi and Shu, Michelle and Fouhey, David},
    title = {Understanding Human Hands in Contact at Internet Scale},
    booktitle = {CVPR},
    year = {2020}
}

When you use the model trained on our ego data, make sure to also cite the original datasets that we collected from (Epic-Kitchens, EGTEA and CharadesEgo), and agree to the original conditions for using that data.