This is our PyTorch implementation for the Neural Best-Buddies paper.

The code was written by Kfir Aberman and supported by Mingyi Shi.


If you use this code for your research, please cite:

Neural Best-Buddies: Sparse Cross-Domain Correspondence. Kfir Aberman, Jing Liao, Mingyi Shi, Dani Lischinski, Baoquan Chen, Daniel Cohen-Or. SIGGRAPH 2018.

Prerequisites:

- Linux or macOS

- Python 2 or 3

- CPU or NVIDIA GPU + CUDA and cuDNN

- Run the algorithm (demo example)

#!./script.sh

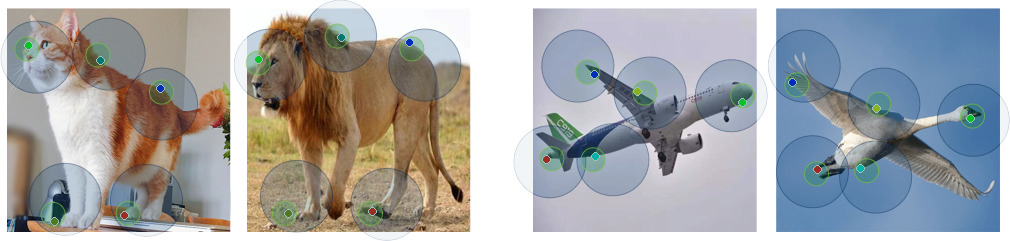

python3 main.py --datarootA ./images/original_A.png --datarootB ./images/original_B.png --name lion_cat --k_final 10The option --k_final dictates the final number of returned points. The results will be saved at ../results/. Use --results_dir {directory_path_to_save_result} to specify the results directory.

The following outputs are written to the results directory.

Sparse correspondence:

- correspondence_A.txt, correspondence_B.txt

- correspondence_A_top_k.txt, correspondence_B_top_k.txt

Dense correspondence (densified using Moving Least Squares, MLS):

- BtoA.npy, AtoB.npy

Warped images (aligned to their middle geometry):

- warp_AtoM.png, warp_BtoM.png
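The list above only states that the sparse correspondences are densified with MLS. As a rough illustration of what that step does, here is a minimal NumPy sketch of the affine variant of Moving Least Squares deformation (Schaefer et al., 2006): given matched control points p (in one image) and q (in the other), it maps arbitrary query points v to the other image. The function name mls_affine, the choice of the affine variant, and the weight exponent alpha are assumptions made for this example; the repository's own densification code, and the exact layout of AtoB.npy and BtoA.npy, may differ.

```python
import numpy as np


def mls_affine(p, q, v, alpha=1.0, eps=1e-8):
    """Hypothetical helper: affine Moving Least Squares mapping.

    p, q : (K, 2) arrays of matched control points (p in the source, q in the target).
    v    : (N, 2) array of query points in the source image.
    Returns an (N, 2) array with the mapped positions of v.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    v = np.asarray(v, dtype=float)

    # Weights w[n, k] = 1 / |p_k - v_n|^(2 * alpha); eps avoids division by zero
    # when a query point coincides with a control point.
    d2 = np.sum((p[None, :, :] - v[:, None, :]) ** 2, axis=2)  # (N, K)
    w = 1.0 / (d2 ** alpha + eps)
    wsum = w.sum(axis=1, keepdims=True)                         # (N, 1)

    # Weighted centroids p* and q*, one pair per query point.
    p_star = (w @ p) / wsum                                     # (N, 2)
    q_star = (w @ q) / wsum                                     # (N, 2)

    # Control points centered on each query's centroids.
    p_hat = p[None, :, :] - p_star[:, None, :]                  # (N, K, 2)
    q_hat = q[None, :, :] - q_star[:, None, :]                  # (N, K, 2)

    # Per-query 2x2 matrix M minimizing sum_k w_k |p_hat_k M - q_hat_k|^2:
    # M = (sum_k w_k p_hat_k^T p_hat_k)^-1 (sum_k w_k p_hat_k^T q_hat_k)
    A = np.einsum("nk,nki,nkj->nij", w, p_hat, p_hat)          # (N, 2, 2)
    B = np.einsum("nk,nki,nkj->nij", w, p_hat, q_hat)          # (N, 2, 2)
    M = np.linalg.solve(A, B)                                   # batched solve

    # f(v) = (v - p*) M + q*
    return np.einsum("ni,nij->nj", v - p_star, M) + q_star
```

To build a dense map in this style, v would hold the (x, y) coordinates of every pixel; note that this vectorized version uses O(N·K) memory for N query points and K control points, so large images may need to be processed in chunks.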

Tips:

- If you are running the algorithm on many pairs, we recommend stopping it at the second layer to reduce runtime (at the expense of accuracy) by using the option --fast.

- If the images are very similar (e.g., two frames extracted from a video), many corresponding points might be found, resulting in a long runtime. In this case, we suggest limiting the number of corresponding points per level by setting --k_per_level 20 (or any other desired number).
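For the batch scenario described in the tips, a small driver script can assemble one main.py invocation per image pair. The sketch below is a hypothetical helper, not part of the repository: it uses only the flags documented in this README (--datarootA, --datarootB, --name, --k_final, --results_dir, --fast, --k_per_level) and assumes that --fast takes no value and that main.py is run from the repository root.

```python
import subprocess
import sys


def nbb_command(img_a, img_b, name, k_final=10, results_dir=None,
                fast=False, k_per_level=None, python=sys.executable):
    """Build the argv list for one run of main.py (hypothetical helper)."""
    cmd = [python, "main.py",
           "--datarootA", str(img_a),
           "--datarootB", str(img_b),
           "--name", name,
           "--k_final", str(k_final)]
    if results_dir is not None:
        cmd += ["--results_dir", str(results_dir)]
    if fast:
        # Assumed to be a plain switch: stop at the second layer (faster, less accurate).
        cmd.append("--fast")
    if k_per_level is not None:
        # Cap the number of corresponding points found per level.
        cmd += ["--k_per_level", str(k_per_level)]
    return cmd


def run_pairs(pairs, results_dir, dry_run=True, **kwargs):
    """Print (dry_run=True) or execute one job per (name, img_a, img_b) tuple."""
    commands = []
    for name, img_a, img_b in pairs:
        cmd = nbb_command(img_a, img_b, name, results_dir=results_dir, **kwargs)
        commands.append(cmd)
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)  # raise if a run fails
    return commands
```

Calling run_pairs with dry_run=True first lets you inspect the generated commands before launching a long batch; passing fast=True and k_per_level=20 applies both tips to every pair.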

If you use this code for your research, please cite our paper:

@article{aberman2018neural,
  title={Neural best-buddies: Sparse cross-domain correspondence},
  author={Aberman, Kfir and Liao, Jing and Shi, Mingyi and Lischinski, Dani and Chen, Baoquan and Cohen-Or, Daniel},
  journal={ACM Transactions on Graphics (TOG)},
  volume={37},
  number={4},
  pages={69},
  year={2018},
  publisher={ACM}
}