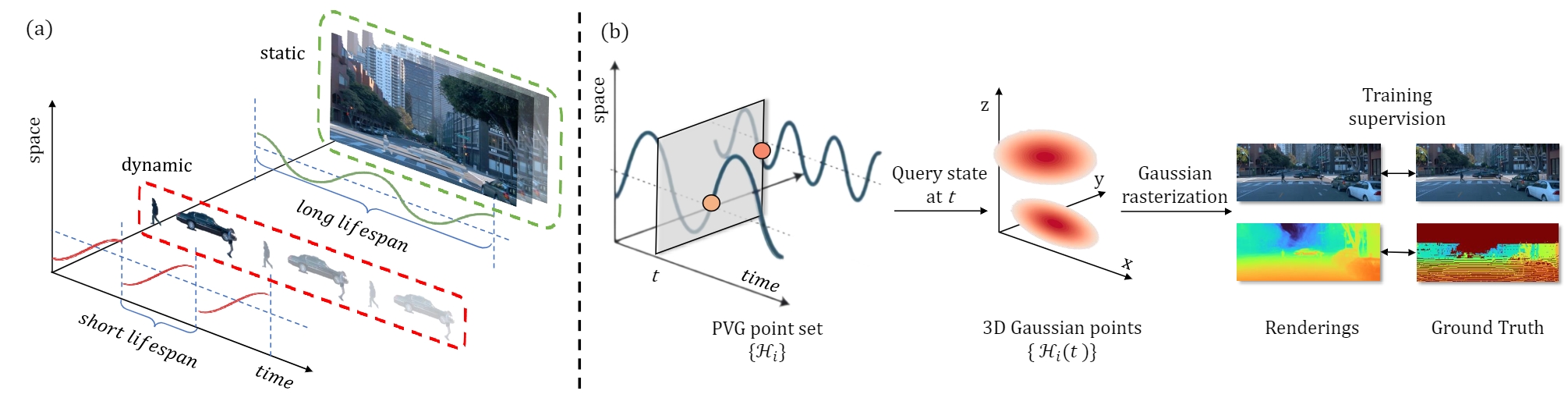

Periodic Vibration Gaussian: Dynamic Urban Scene Reconstruction and Real-time Rendering

Yurui Chen, Chun Gu, Junzhe Jiang, Xiatian Zhu, Li Zhang

arXiv preprint

Official implementation of "Periodic Vibration Gaussian: Dynamic Urban Scene Reconstruction and Real-time Rendering".

# Clone the repo.

git clone https://github.com/fudan-zvg/PVG.git

cd PVG

# Make a conda environment.

conda create --name pvg python=3.9

conda activate pvg

# Install requirements.

pip install -r requirements.txt

# Install simple-knn

git clone https://gitlab.inria.fr/bkerbl/simple-knn.git

pip install ./simple-knn

# Install a modified Gaussian splatting rasterizer (for feature rendering)

git clone --recursive https://github.com/SuLvXiangXin/diff-gaussian-rasterization

pip install ./diff-gaussian-rasterization

# Install nvdiffrast (for Envlight)

git clone https://github.com/NVlabs/nvdiffrast

pip install ./nvdiffrast

Create a directory for the data: mkdir data.

The 4 preprocessed Waymo scenes used for the results in Table 1 of our paper can be downloaded here (optional: corresponding label). Please unzip them and put them into the data directory.

First, prepare the KITTI-format Waymo dataset:

# Given the following dataset, we convert it to KITTI format
# data
# └── waymo
#     └── waymo_format
#         └── training
#             └── segment-xxxxxx

# Install the optional packages needed for data conversion

pip install -r requirements-data.txt

# Convert the waymo dataset to kitti-format

python scripts/waymo_converter.py waymo --root-path ./data/waymo/ --out-dir ./data/waymo/ --workers 128 --extra-tag waymo

Then use the example script scripts/extract_scenes_waymo.py to extract the scenes listed in StreetSurf from the KITTI-format Waymo dataset.

Following StreetSurf, we use SegFormer to extract the sky masks and arrange them as follows:

data
└── waymo_scenes
    └── sequence_id
        ├── calib
        │   └── frame_id.txt
        ├── image_0{0, 1, 2, 3, 4}
        │   └── frame_id.png
        ├── sky_0{0, 1, 2, 3, 4}
        │   └── frame_id.png
        ├── pose
        │   └── frame_id.txt
        └── velodyne
            └── frame_id.bin

We provide an example script, scripts/extract_mask_waymo.py, to extract the sky masks from the extracted Waymo dataset. Follow the instructions here to set up the SegFormer environment.
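Before training, it can help to confirm that a scene directory matches the layout above. The following is a minimal sketch, not part of the repository: the helper names `missing_dirs` and `frames_without_sky` are hypothetical, and the expected sub-directory names are taken directly from the tree shown in this README.

```python
from pathlib import Path

# Sub-directories expected under data/waymo_scenes/<sequence_id>,
# copied from the directory tree in this README.
CAMERAS = range(5)  # image_00 .. image_04 and sky_00 .. sky_04
EXPECTED_DIRS = (
    ["calib", "pose", "velodyne"]
    + [f"image_0{i}" for i in CAMERAS]
    + [f"sky_0{i}" for i in CAMERAS]
)


def missing_dirs(scene_dir):
    """Return the expected sub-directories that are absent from scene_dir."""
    scene = Path(scene_dir)
    return [name for name in EXPECTED_DIRS if not (scene / name).is_dir()]


def frames_without_sky(scene_dir, camera=0):
    """Return frame ids that have an image but no sky mask for one camera."""
    scene = Path(scene_dir)
    images = {p.stem for p in (scene / f"image_0{camera}").glob("*.png")}
    masks = {p.stem for p in (scene / f"sky_0{camera}").glob("*.png")}
    return sorted(images - masks)
```

For example, `missing_dirs("data/waymo_scenes/0145050")` returns an empty list when every expected folder is present, and `frames_without_sky` lists frames for which the sky-mask extraction still needs to be run.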

The 3 preprocessed KITTI scenes used for the results in Table 1 of our paper can be downloaded here. Please unzip them and put them into the data directory.

Put the KITTI-MOT dataset in the data directory.

Following StreetSurf, we use SegFormer to extract the sky masks and arrange them as follows:

data
└── kitti_mot
    └── training
        ├── calib
        │   └── sequence_id.txt
        ├── image_0{2, 3}
        │   └── sequence_id
        │       └── frame_id.png
        ├── sky_0{2, 3}
        │   └── sequence_id
        │       └── frame_id.png
        ├── oxts
        │   └── sequence_id.txt
        └── velodyne
            └── sequence_id
                └── frame_id.bin

We also provide an example script scripts/extract_mask_kitti.py to extract the sky mask from the KITTI dataset.
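To inspect a LiDAR frame from the velodyne folder, the sketch below reads one .bin file using only the Python standard library. It assumes the standard KITTI encoding, a flat sequence of little-endian float32 values with four values per point (x, y, z, reflectance). The function name `read_velodyne_bin` is hypothetical and is not part of this repository; files written by other converters may store a different number of values per point, which is why `num_fields` is a parameter.

```python
import sys
from array import array
from pathlib import Path


def read_velodyne_bin(path, num_fields=4):
    """Read a KITTI-style point cloud into a list of per-point tuples.

    Assumption: the file is a flat run of little-endian float32 values,
    num_fields per point (x, y, z, reflectance for standard KITTI).
    """
    values = array("f")  # 4-byte floats in native byte order
    values.frombytes(Path(path).read_bytes())
    if sys.byteorder != "little":
        values.byteswap()  # file is little-endian; convert on big-endian hosts
    if len(values) % num_fields:
        raise ValueError(f"{path}: value count is not a multiple of {num_fields}")
    return [
        tuple(values[i:i + num_fields])
        for i in range(0, len(values), num_fields)
    ]
```

Each returned tuple is one point, so `len(read_velodyne_bin(path))` gives the number of points in the frame.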

# Waymo image reconstruction
CUDA_VISIBLE_DEVICES=0 python train.py \
    --config configs/waymo_reconstruction.yaml \
    source_path=data/waymo_scenes/0145050 \
    model_path=eval_output/waymo_reconstruction/0145050

# Waymo novel view synthesis
CUDA_VISIBLE_DEVICES=0 python train.py \
    --config configs/waymo_nvs.yaml \
    source_path=data/waymo_scenes/0145050 \
    model_path=eval_output/waymo_nvs/0145050

# KITTI image reconstruction
CUDA_VISIBLE_DEVICES=0 python train.py \
    --config configs/kitti_reconstruction.yaml \
    source_path=data/kitti_mot/training/image_02/0001 \
    model_path=eval_output/kitti_reconstruction/0001 \
    start_frame=380 end_frame=431

# KITTI novel view synthesis
CUDA_VISIBLE_DEVICES=0 python train.py \
    --config configs/kitti_nvs.yaml \
    source_path=data/kitti_mot/training/image_02/0001 \
    model_path=eval_output/kitti_nvs/0001 \
    start_frame=380 end_frame=431
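When training several scenes, the commands above can be generated programmatically. The sketch below only builds the argument lists and prints them. The helper `train_command` is hypothetical, and the scene ids are the two that appear as examples in this README. The `--config` flag and the `key=value` overrides mirror the commands shown above, and nothing beyond those is assumed about train.py.

```python
import shlex


def train_command(config, source_path, model_path, **overrides):
    """Build the argv list for train.py, mirroring the commands in this README."""
    cmd = [
        "python", "train.py",
        "--config", config,
        f"source_path={source_path}",
        f"model_path={model_path}",
    ]
    # Extra key=value overrides, e.g. start_frame=380 end_frame=431 for KITTI.
    cmd += [f"{key}={value}" for key, value in overrides.items()]
    return cmd


# Example scene ids taken from the commands in this README.
for scene in ["0145050", "0158150"]:
    cmd = train_command(
        "configs/waymo_reconstruction.yaml",
        f"data/waymo_scenes/{scene}",
        f"eval_output/waymo_reconstruction/{scene}",
    )
    print(shlex.join(cmd))
```

To actually launch a run, each list can be passed to `subprocess.run(cmd, check=True)`, with `CUDA_VISIBLE_DEVICES` set through the `env` argument to choose the GPU.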

After training, the evaluation results can be found in the {EXPERIMENT_DIR}/eval directory.

You can also use the following command to evaluate.

CUDA_VISIBLE_DEVICES=0 python evaluate.py \
    --config configs/kitti_reconstruction.yaml \
    source_path=data/kitti_mot/training/image_02/0001 \
    model_path=eval_output/kitti_reconstruction/0001 \
    start_frame=380 end_frame=431

You can use the following command to automatically remove the dynamics; the rendered results will be saved in the {EXPERIMENT_DIR}/separation directory.

CUDA_VISIBLE_DEVICES=1 python separate.py \
    --config configs/waymo_reconstruction.yaml \
    source_path=data/waymo_scenes/0158150 \
    model_path=eval_output/waymo_reconstruction/0158150

Videos: 0017085.mp4, 0124100.mp4, 0147030.mp4, 0149060.mp4, comparison_static_0017085.mp4, comparison_static_0147030.mp4, comparison_dynamic_0017085.mp4, comparison_dynamic_0147030.mp4, novel.mp4

@article{chen2023periodic,
  title={Periodic Vibration Gaussian: Dynamic Urban Scene Reconstruction and Real-time Rendering},
  author={Chen, Yurui and Gu, Chun and Jiang, Junzhe and Zhu, Xiatian and Zhang, Li},
  journal={arXiv:2311.18561},
  year={2023},
}