PyTorch-Pose

PyTorch-Pose is a PyTorch implementation of the general pipeline for 2D single-person human pose estimation. It provides interfaces for training, inference, and evaluation, as well as data loaders with various data augmentation options for the most popular human pose datasets (e.g., MPII Human Pose, LSP, and FLIC).

Some of the code for data preparation and augmentation is borrowed from the Stacked Hourglass Network. Thanks to the original author.

Features

- Multi-thread data loading

- Multi-GPU training

- Logger

- Training/testing results visualization

Installation

- PyTorch (>= 0.2.0): Please follow the installation instructions of PyTorch. Note that the code was developed with Python 2 and has not yet been tested with Python 3.

- Clone the repository with its submodules:

  git clone --recursive https://github.com/bearpaw/pytorch-pose.git

- Create a symbolic link to the `images` directory of the MPII dataset:

  ln -s PATH_TO_MPII_IMAGES_DIR data/mpii/images
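Before running the examples, it can help to confirm that the `data/mpii/images` symbolic link actually resolves. The snippet below is a minimal sketch using only the Python standard library; it assumes it is run from the repository root, and the helper name `check_mpii_images` is hypothetical, not part of PyTorch-Pose.

```python
import os


def check_mpii_images(root="."):
    """Return True if <root>/data/mpii/images resolves to a directory.

    Hypothetical helper, not part of PyTorch-Pose; `root` is assumed
    to be the repository root.
    """
    link = os.path.join(root, "data", "mpii", "images")
    # lexists is True even for a dangling symlink
    if not os.path.lexists(link):
        print("missing: %s (create it with ln -s)" % link)
        return False
    # exists follows the link, so a dangling link reports False here
    if not os.path.exists(link):
        print("broken link: %s -> %s" % (link, os.readlink(link)))
        return False
    return os.path.isdir(link)


if __name__ == "__main__":
    print("MPII images found: %s" % check_mpii_images())
```

A dangling link (for example, after moving the dataset) is reported separately from a missing one, since `ln -s` succeeds even when the target path does not exist.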

Usage

Testing

You may download our pretrained models (e.g., the 2-stack hourglass model) for a quick start.

Run the following command in a terminal to evaluate the model on the MPII validation split (the train/val split is from Tompson et al., CVPR 2015):

CUDA_VISIBLE_DEVICES=0 python example/mpii.py -a hg --stacks 2 --blocks 1 --checkpoint checkpoint/mpii/hg_s2_b1 --resume checkpoint/mpii/hg_s2_b1/model_best.pth.tar -e -d

- `-a` specifies the network architecture.
- `--resume` loads the weights from the specified model file.
- `-e` runs evaluation only.
- `-d` visualizes the network output. It can also be used during training.

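The predictions are saved as a `.mat` file (`preds_valid.mat`) in the folder specified by `--checkpoint`. It contains a 2958x16x2 matrix, i.e., the (x, y) coordinates of the 16 MPII joints for each of the 2958 validation samples.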
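To inspect the predictions in Python, the file can be read with `scipy.io.loadmat`. The helper below is a hypothetical sketch, not part of PyTorch-Pose; it assumes the array is stored under the key `preds` (print the keys of the loaded dictionary if it is named differently) and has shape (num_samples, 16, 2).

```python
import numpy as np
import scipy.io


def load_predictions(path, key="preds", num_joints=16):
    """Load predicted joint locations from a .mat file.

    Assumes the array is stored under `key` (an assumption; inspect
    the keys returned by scipy.io.loadmat if it differs) and has
    shape (num_samples, num_joints, 2).
    """
    mat = scipy.io.loadmat(path)
    if key not in mat:
        # loadmat also returns metadata entries such as '__header__'
        user_keys = [k for k in mat if not k.startswith("__")]
        raise KeyError("key %r not found; available: %s" % (key, user_keys))
    preds = np.asarray(mat[key], dtype=np.float64)
    if preds.ndim != 3 or preds.shape[1:] != (num_joints, 2):
        raise ValueError("unexpected shape %s" % (preds.shape,))
    return preds
```

For example, `load_predictions('checkpoint/mpii/hg_s2_b1/preds_valid.mat').shape` should then be `(2958, 16, 2)`.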

Evaluate the PCKh@0.5 score

Evaluate with MATLAB

You can use the MATLAB script evaluation/eval_PCKh.m to evaluate your predictions. The evaluation code is ported from Tompson et al., CVPR 2015.

The following table reports the PCKh@0.5 scores of models trained with this code.

| Model | Head | Shoulder | Elbow | Wrist | Hip | Knee | Ankle | Mean |
|---|---|---|---|---|---|---|---|---|
| hg_s2_b1 (last) | 95.80 | 94.57 | 88.12 | 83.31 | 86.24 | 80.88 | 77.44 | 86.76 |
| hg_s2_b1 (best) | 95.87 | 94.68 | 88.27 | 83.64 | 86.29 | 81.20 | 77.70 | 86.95 |
| hg_s8_b1 (last) | 96.79 | 95.19 | 90.08 | 85.32 | 87.48 | 84.26 | 80.73 | 88.64 |
| hg_s8_b1 (best) | 96.79 | 95.28 | 90.27 | 85.56 | 87.57 | 84.30 | 81.06 | 88.78 |

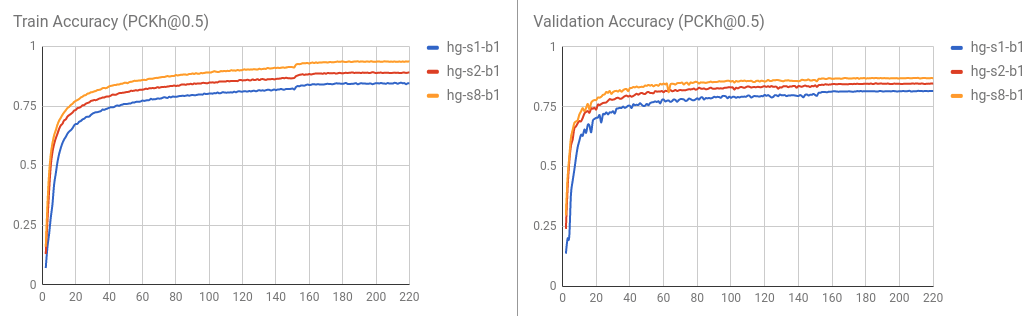

Training / validation curve is visualized as follows.

Evaluate with Python

You can also evaluate the predictions by running `python evaluation/eval_PCKh.py`, which produces exactly the same results as the MATLAB script. Thanks to @sssruhan1 for the contribution.
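To make the metric concrete: under PCKh@0.5, a predicted joint counts as correct when its distance to the ground truth is at most 0.5 times the head segment size of that person. The NumPy sketch below illustrates this definition; it is not a port of `evaluation/eval_PCKh.py`. It assumes per-sample head sizes are already available (in the MPII protocol they are derived from the annotated head boxes) and that a boolean mask marks the annotated joints; the function name `pckh` is hypothetical.

```python
import numpy as np


def pckh(preds, gts, head_sizes, visible, alpha=0.5):
    """Per-joint PCKh (in percent) and the mean over all visible joints.

    preds, gts: arrays of shape (N, J, 2) holding (x, y) coordinates.
    head_sizes: array of shape (N,), the head segment size per sample.
    visible: boolean array of shape (N, J); False entries are ignored.
    """
    preds = np.asarray(preds, dtype=np.float64)
    gts = np.asarray(gts, dtype=np.float64)
    visible = np.asarray(visible, dtype=bool)
    # Euclidean error of each joint, normalized by that sample's head size
    dists = np.linalg.norm(preds - gts, axis=2) / np.asarray(head_sizes)[:, None]
    correct = (dists <= alpha) & visible
    counts = visible.sum(axis=0)
    # np.maximum guards against joints that are never annotated
    per_joint = 100.0 * correct.sum(axis=0) / np.maximum(counts, 1)
    mean = 100.0 * correct.sum() / max(visible.sum(), 1)
    return per_joint, mean
```

Because the official scripts may weight or exclude particular joints when averaging, the `mean` returned here is not guaranteed to reproduce the Mean column of the results table.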

Training

Run the following command in a terminal to train an 8-stack hourglass network on the MPII Human Pose dataset:

CUDA_VISIBLE_DEVICES=0 python example/mpii.py -a hg --stacks 8 --blocks 1 --checkpoint checkpoint/mpii/hg8 -j 4

Here,

- `CUDA_VISIBLE_DEVICES=0` identifies the GPU devices you want to use. For example, use `CUDA_VISIBLE_DEVICES=0,1` if you want to use the two GPUs with IDs `0` and `1`.
- `-j` specifies how many workers to use for data loading.
- `--checkpoint` specifies where to save the models, the log, and the predictions.
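`CUDA_VISIBLE_DEVICES` is interpreted by the CUDA runtime rather than by PyTorch-Pose: the listed physical GPUs are renumbered from 0 inside the process, so with `CUDA_VISIBLE_DEVICES=2,3` the program sees two devices, `cuda:0` and `cuda:1`. The standard-library sketch below (the helper name `visible_device_map` is hypothetical) models that mapping for comma-separated integer IDs only; UUID-style entries are not handled.

```python
import os


def visible_device_map(env_value=None, num_physical=None):
    """Map logical CUDA device indices to physical GPU IDs.

    Simplified model of CUDA_VISIBLE_DEVICES (integer IDs only).
    If the variable is unset, all `num_physical` GPUs are visible.
    """
    if env_value is None:
        env_value = os.environ.get("CUDA_VISIBLE_DEVICES")
    if env_value is None:
        return {i: i for i in range(num_physical or 0)}
    mapping = {}
    for token in env_value.split(","):
        token = token.strip()
        # Only devices listed before the first invalid entry are visible
        if not token.isdigit():
            break
        physical = int(token)
        if num_physical is not None and physical >= num_physical:
            break
        mapping[len(mapping)] = physical
    return mapping
```

For example, `visible_device_map("2,3", 4)` returns `{0: 2, 1: 3}`, so code inside the process only ever addresses logical indices 0..N-1.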

Please refer to `example/mpii.py` for all supported options and arguments.

To Do List

Supported datasets

Supported models

Contribute

Please create a pull request if you want to contribute.