This repository is an official implementation of PETR: Position Embedding Transformation for Multi-View 3D Object Detection.

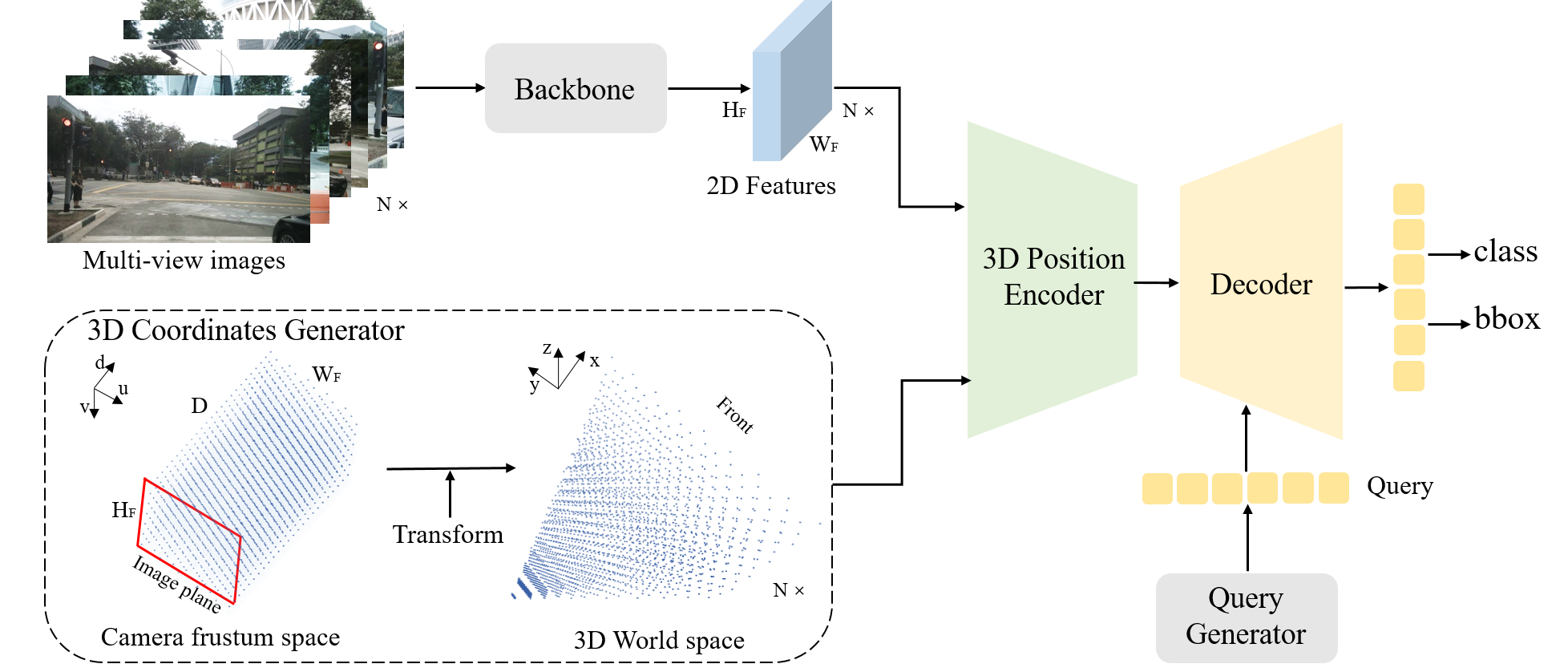

In this paper, we develop position embedding transformation (PETR) for multi-view 3D object detection. PETR encodes the position information of 3D coordinates into image features, producing 3D position-aware features. Object queries can then perceive the 3D position-aware features and perform end-to-end object detection. PETR can serve as a simple yet strong baseline for future research.

2022.06.16 The code of 3D object detection in PETRv2 is released.

2022.06.10 The code of PETR is released.

2022.06.06 PETRv2 is released on arXiv.

2022.06.01 PETRv2 achieves another SOTA result on the nuScenes dataset (58.2% NDS and 49.0% mAP) through temporal modeling, and supports BEV segmentation.

2022.03.10 PETR is released on arXiv.

2022.03.08 PETR achieves SOTA performance (50.4% NDS and 44.1% mAP) on the standard nuScenes dataset.

This implementation is built upon detr3d and can be set up by following install.md.

- Environments

  Linux, Python == 3.6.8, CUDA == 11.2, pytorch == 1.9.0, mmdet3d == 0.17.1

- Data

  Follow the mmdet3d data preparation guide to process the nuScenes dataset (https://github.com/open-mmlab/mmdetection3d/blob/master/docs/en/data_preparation.md).

- Pretrained weights

  To verify the performance on the val set, we provide the pretrained V2-99 weights. The V2-99 is pretrained on DDAD15M (weights) and further trained on the nuScenes train set with FCOS3D. For the results on the test set in the paper, we use the DD3D pretrained weights. The ImageNet pretrained weights of other backbones can be found here. Please put the pretrained weights into ./ckpts/.
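The versions listed under Environments can be checked before training. The following is a minimal sketch, not part of the repository: the helper names `collect_versions` and `mismatches` are hypothetical, and the CUDA toolkit version is deliberately not checked, because `torch.version.cuda` reports the CUDA version the PyTorch build was compiled against, which may differ from the installed toolkit.

```python
import sys

# Versions taken from the Environments entry above (CUDA is not checked here).
REQUIRED = {"python": "3.6.8", "torch": "1.9.0", "mmdet3d": "0.17.1"}


def collect_versions():
    """Collect the versions of the interpreter and, if importable, torch and mmdet3d."""
    found = {"python": "{}.{}.{}".format(*sys.version_info[:3])}
    try:
        import torch
        found["torch"] = str(torch.__version__)
    except ImportError:
        pass  # left out of `found`, reported as missing below
    try:
        import mmdet3d
        found["mmdet3d"] = str(mmdet3d.__version__)
    except ImportError:
        pass
    return found


def mismatches(found, required=REQUIRED):
    """Return (name, wanted, found) tuples for every missing or differing version."""
    problems = []
    for name, want in required.items():
        have = found.get(name)
        # PyTorch wheels may carry a local suffix such as "1.9.0+cu111"; ignore it.
        if have is None or have.split("+")[0] != want:
            problems.append((name, want, have))
    return problems


if __name__ == "__main__":
    for name, want, have in mismatches(collect_versions()):
        print("{}: expected {}, found {}".format(name, want, have))
```

A mismatch does not necessarily mean the code will fail; the list above is simply the configuration the authors report.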

After preparation, you will be able to see the following directory structure:

    PETR
    ├── mmdetection3d
    ├── projects
    │   ├── configs
    │   ├── mmdet3d_plugin
    ├── tools
    ├── data
    │   ├── nuscenes
    ├── ckpts
    ├── README.md
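To confirm that the preparation steps produced this layout, a short script can report any missing entries. This is a sketch under the assumption that it is run from the PETR root; `find_missing` is a hypothetical helper, and the paths are copied from the directory structure above.

```python
from pathlib import Path

# Entries from the directory structure above, relative to the PETR root.
EXPECTED_PATHS = [
    "mmdetection3d",
    "projects/configs",
    "projects/mmdet3d_plugin",
    "tools",
    "data/nuscenes",
    "ckpts",
    "README.md",
]


def find_missing(root="."):
    """Return the expected entries that do not exist under `root`."""
    root = Path(root)
    return [p for p in EXPECTED_PATHS if not (root / p).exists()]


if __name__ == "__main__":
    missing = find_missing(".")
    if missing:
        print("Missing entries: " + ", ".join(missing))
    else:
        print("Directory layout matches the expected structure.")
```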

    cd PETR

You can train the model as follows:

    tools/dist_train.sh projects/configs/petr/petr_r50dcn_gridmask_p4.py 8 --work-dir work_dirs/petr_r50dcn_gridmask_p4/

You can evaluate the model as follows:

    tools/dist_test.sh projects/configs/petr/petr_r50dcn_gridmask_p4.py work_dirs/petr_r50dcn_gridmask_p4/latest.pth 8 --eval bbox

PETR: We provide some results on the nuScenes val set with pretrained models. These models are trained on 8x 2080ti without cbgs. The models and logs are also available at Baidu Netdisk with code petr.

| model | mAP | NDS | training time | config | download |
|---|---|---|---|---|---|
| PETR-r50-c5-1408x512 | 30.5% | 35.0% | 18 hours | config | log / gdrive |
| PETR-r50-p4-1408x512 | 31.7% | 36.7% | 21 hours | config | log / gdrive |
| PETR-vov-p4-800x320 | 37.8% | 42.6% | 17 hours | config | log / gdrive |
| PETR-vov-p4-1600x640 | 40.4% | 45.5% | 36 hours | config | log / gdrive |

PETRv2: We provide a 3D object detection baseline with two frames. The model is trained on 8x 2080ti without cbgs. The processed info files contain 30 previous frames, whose transformation matrices are aligned with the current frame. The info files, models and logs are also available at Baidu Netdisk with code petr.

| model | mAP | NDS | training time | config | download |
|---|---|---|---|---|---|
| PETRv2-vov-p4-800x320 | 41.0% | 50.3% | 30 hours | config | log / gdrive |
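The tables report both mAP and NDS. For readers less familiar with NDS, the sketch below shows how the nuScenes detection score combines mAP with the five true-positive error metrics (mATE, mASE, mAOE, mAVE, mAAE), following the nuScenes benchmark definition. The function name is hypothetical and the error values in the example are illustrative only; they are not taken from the PETR results.

```python
def nds(mean_ap, tp_errors):
    """nuScenes detection score: NDS = (5 * mAP + sum(1 - min(1, err))) / 10.

    `mean_ap` is mAP in [0, 1]; `tp_errors` holds the five mean true-positive
    errors (mATE, mASE, mAOE, mAVE, mAAE). Errors above 1 are clipped to 1.
    """
    if len(tp_errors) != 5:
        raise ValueError("expected exactly five true-positive error values")
    tp_scores = [1.0 - min(1.0, err) for err in tp_errors]
    return (5.0 * mean_ap + sum(tp_scores)) / 10.0


if __name__ == "__main__":
    # Illustrative values only, not measured results.
    print(nds(0.5, [0.5, 0.5, 0.5, 0.5, 0.5]))
```

Because half of the score comes from the error metrics, two models with similar mAP can differ noticeably in NDS, for example when one of them estimates velocity more accurately.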

Many thanks to the authors of mmdetection3d and detr3d.

If you find this project useful for your research, please consider citing:

    @article{liu2022petr,
      title={Petr: Position embedding transformation for multi-view 3d object detection},
      author={Liu, Yingfei and Wang, Tiancai and Zhang, Xiangyu and Sun, Jian},
      journal={arXiv preprint arXiv:2203.05625},
      year={2022}
    }

    @article{liu2022petrv2,
      title={PETRv2: A Unified Framework for 3D Perception from Multi-Camera Images},
      author={Liu, Yingfei and Yan, Junjie and Jia, Fan and Li, Shuailin and Gao, Qi and Wang, Tiancai and Zhang, Xiangyu and Sun, Jian},
      journal={arXiv preprint arXiv:2206.01256},
      year={2022}
    }

If you have any questions, feel free to open an issue or contact us at liuyingfei@megvii.com or wangtiancai@megvii.com.