Helix is a Rust-based MEV-Boost Relay implementation developed as an entirely new code base from the ground up. It has been designed with key foundational principles at its core, such as modularity and extensibility, low-latency performance, robustness and fault tolerance, geo-distribution, and a focus on reducing operational costs.

Our goal is to provide a code base that is not only technologically advanced but also aligns with the evolving needs of proposers, builders, and the broader Ethereum community.

The PBS relay operates using two distinct flows, each with its own unique key requirements:

- Submit_block -> Get_header Flow (Latency): Currently, this is the only flow where latency is critically important. Our primary focus is on minimising latency while considering redundancy as a secondary priority. Future enhancements will include hyper-optimising the `get_header` and `get_payload` flows for latency (see the Future Work section for more details).

- Get_header -> Get_payload Flow (Redundancy): Promptly delivering the payload following a `get_header` request is essential. A delay in this process risks the proposer missing their slot, making high redundancy in this flow extremely important.

The current Flashbots MEV-Boost relay implementation is limited to operating as a single cluster. As a result, relays tend to aggregate in areas with a high density of proposers, particularly AWS data centres in Northern Virginia and Europe. This situation poses a significant disadvantage for proposers in locations with high network latency to these areas. To prevent missed slots, proposers in such locations are compelled to adjust their MEV-Boost configuration to call get_header earlier, which leads to reduced MEV rewards. In response, we have designed our relay to support geo-distribution. This allows multiple clusters to be operated in different geographical locations simultaneously, whilst collectively serving the relay API as one unified relay endpoint.

- Our design supports multiple geo-distributed clusters under a single relay URL.

- Automatic call routing via DNS resolution ensures low latency communication to relays.

- We've addressed potential non-determinism, such as differing routes for `get_header` and `get_payload`, by implementing the `GossipClientTrait` using gRPC, which ensures payload availability across all clusters.
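The gossip idea can be illustrated with a minimal in-process sketch. Everything here is an assumption made for illustration: `PayloadGossip`, `Cluster` and `InProcessGossip` are hypothetical names, and the real `GossipClientTrait` is gRPC-backed rather than a loop over shared memory. The point is only that a payload received by one cluster becomes retrievable from every other cluster, so `get_payload` succeeds regardless of where DNS routes the call.

```rust
use std::collections::HashMap;
use std::sync::{Arc, Mutex};

/// Hypothetical stand-in for a 32-byte block hash.
type BlockHash = [u8; 32];

/// Hypothetical, simplified gossip interface (not the real `GossipClientTrait`).
trait PayloadGossip {
    fn broadcast_payload(&self, block_hash: BlockHash, payload: Vec<u8>);
}

/// One relay cluster with its own local payload store.
#[derive(Default)]
struct Cluster {
    payloads: Mutex<HashMap<BlockHash, Vec<u8>>>,
}

impl Cluster {
    fn store_payload(&self, block_hash: BlockHash, payload: Vec<u8>) {
        self.payloads.lock().unwrap().insert(block_hash, payload);
    }

    fn get_payload(&self, block_hash: &BlockHash) -> Option<Vec<u8>> {
        self.payloads.lock().unwrap().get(block_hash).cloned()
    }
}

/// In-process gossip: copies each payload to every known cluster.
/// A real implementation would send the payload over the network instead.
struct InProcessGossip {
    clusters: Vec<Arc<Cluster>>,
}

impl PayloadGossip for InProcessGossip {
    fn broadcast_payload(&self, block_hash: BlockHash, payload: Vec<u8>) {
        for cluster in &self.clusters {
            cluster.store_payload(block_hash, payload.clone());
        }
    }
}
```

With two clusters registered, a payload broadcast once can be fetched from either of them by block hash.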

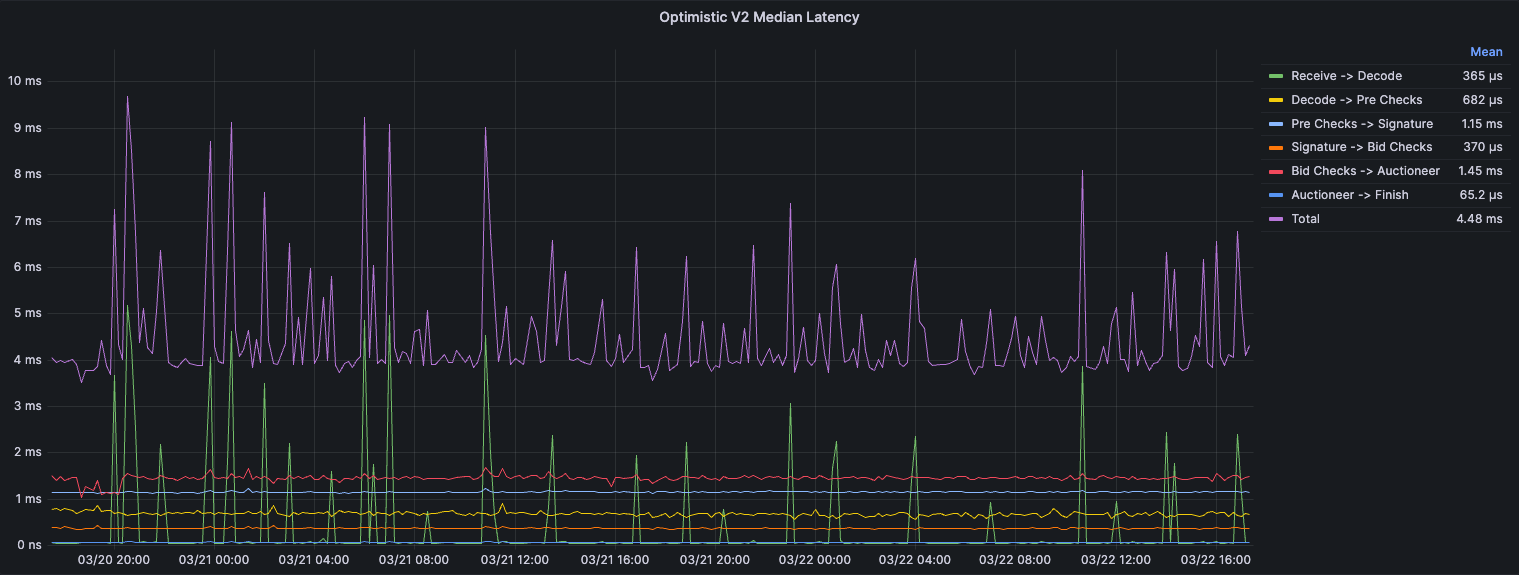

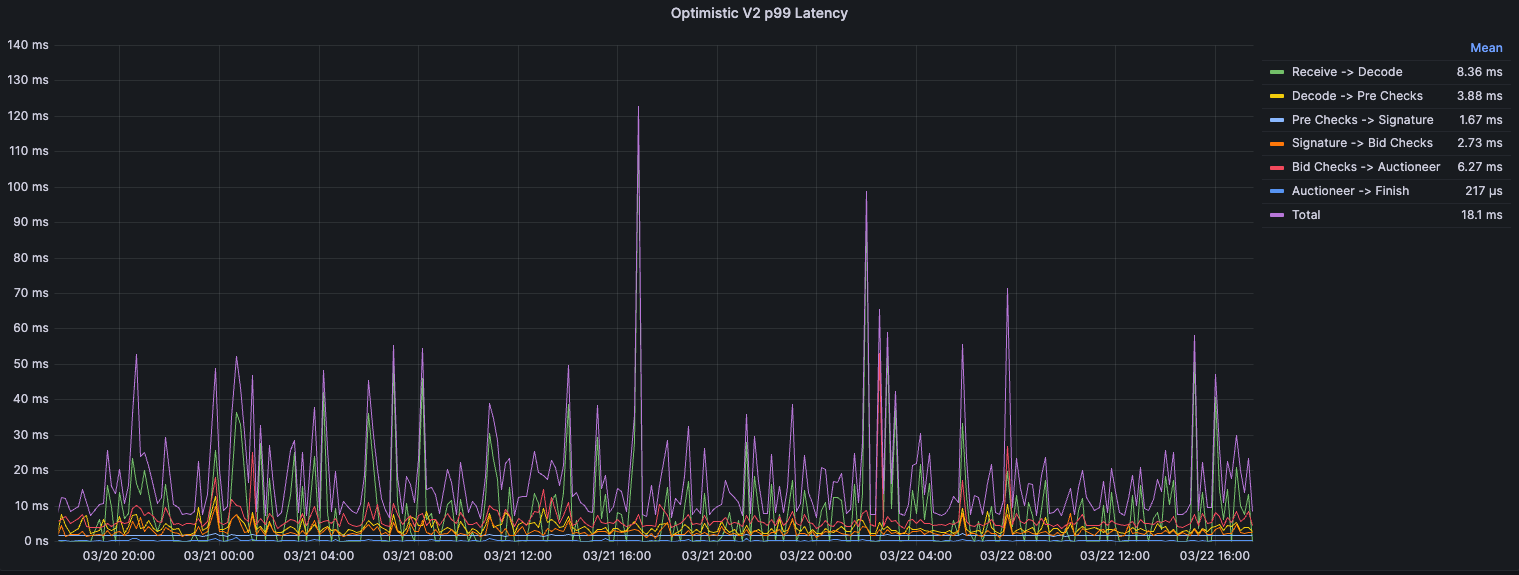

OptimisticV2, initially proposed here, introduces an architectural change where the lightweight header (1 MTU) is decoupled from the much heavier payload. Due to its much smaller size, the header can be quickly downloaded, deserialised and saved, ready for get_header responses. Meanwhile, the much heavier full SignedBidSubmission is downloaded and verified asynchronously.

We have implemented two distinct endpoints for builders: submit_header and submit_block_v2. Builders will be responsible for ensuring that they only use these endpoints if their collateral covers the block value and that they submit payloads in a timely manner to the relay. Builders that fail to submit payloads will have their collateral slashed in the same process as the current Optimistic V1 implementation.

Along with reducing the internal latency, separating the header and payload drastically reduces the network latency.
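A minimal sketch of the decoupling, under simplifying assumptions: `Header`, `BidStore` and the method names below are hypothetical, bid values are reduced to `u128`, and there is no verification or collateral logic. It only shows that a header can become the best bid for get_header before its payload has arrived.

```rust
use std::collections::HashMap;

/// Hypothetical, heavily simplified header: just a hash and a bid value.
#[derive(Clone, Debug, PartialEq)]
struct Header {
    block_hash: u64,
    value: u128,
}

/// Hypothetical store that tracks headers and payloads separately.
#[derive(Default)]
struct BidStore {
    best_header: Option<Header>,
    payloads: HashMap<u64, Vec<u8>>,
}

impl BidStore {
    /// Header path: small message, visible to get_header as soon as it outbids.
    /// Returns true if the header became the new best bid.
    fn submit_header(&mut self, header: Header) -> bool {
        let is_best = self
            .best_header
            .as_ref()
            .map_or(true, |best| header.value > best.value);
        if is_best {
            self.best_header = Some(header);
        }
        is_best
    }

    /// Payload path: the heavy body arrives later and is stored by block hash.
    fn submit_payload(&mut self, block_hash: u64, payload: Vec<u8>) {
        self.payloads.insert(block_hash, payload);
    }

    fn get_header(&self) -> Option<&Header> {
        self.best_header.as_ref()
    }

    fn get_payload(&self, block_hash: u64) -> Option<&Vec<u8>> {
        self.payloads.get(&block_hash)
    }
}
```

The window between `submit_header` and `submit_payload` is exactly where the builder's collateral matters: the header is already being served while the payload is still missing.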

To efficiently manage transactions based on regional policies, our relay operations have been streamlined as follows:

- Unified Relay System: Both regional and global filtering are integrated into a single relay system, allowing proposers to specify their filtering preferences during registration.

- Simplified Registration: Proposers can communicate their filtering preference through two different URLs: titanrelay.xyz for global filtering and regional.titanrelay.xyz for regional filtering. Registration to either URL will automatically set the appropriate preference. All other calls will be routed the same way.

- Builder Adaptations: Builders need to adapt to the system changes. The proposers API response will now include a `preferences` field indicating the chosen filtering mode. Minor adjustments to internal logic are required, as filtering is now managed on a per-validator basis.
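On the builder side, per-validator filtering could look roughly like the sketch below. The type and field names (`Filtering`, `ValidatorPreferences`, `ProposerDuty`, `CandidateBlocks`) are assumptions for illustration and do not reflect the actual wire format of the `preferences` field.

```rust
/// Filtering mode a proposer selected at registration (illustrative names).
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum Filtering {
    Regional,
    Global,
}

/// Hypothetical in-memory form of the `preferences` field.
#[derive(Clone, Copy, Debug)]
struct ValidatorPreferences {
    filtering: Filtering,
}

/// Hypothetical entry from the proposers API response.
struct ProposerDuty {
    slot: u64,
    preferences: ValidatorPreferences,
}

/// Candidate blocks a builder might keep per slot, one per filtering mode.
struct CandidateBlocks {
    regional: Vec<u8>,
    global: Vec<u8>,
}

/// Pick the block to submit based on the validator's preference,
/// rather than on which relay the submission is going to.
fn block_for_duty<'a>(duty: &ProposerDuty, candidates: &'a CandidateBlocks) -> &'a [u8] {
    match duty.preferences.filtering {
        Filtering::Regional => &candidates.regional,
        Filtering::Global => &candidates.global,
    }
}
```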

- Emphasising generic design, Helix allows for flexible integration with various databases and libraries.

- Key Traits include: `Database`, `Auctioneer`, `Simulator` and `BeaconClient`.

- The current `Auctioneer` implementation supports Redis due to the ease of implementation when synchronising multiple processes in the same cluster.

- The `Simulator` is also purposely generic, so it can support all forms of optimistic relaying as well as different forms of simulation, for example communicating with the execution client via RPC or gRPC.
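The trait-based approach can be sketched as follows. The trait signature and both implementations are hypothetical and far simpler than Helix's real traits, which are asynchronous and operate on full bid submissions. The sketch only shows relay logic written once against a trait, with the simulation strategy swapped in behind it.

```rust
/// Hypothetical, heavily simplified block submission.
struct Submission {
    gas_used: u64,
    gas_limit: u64,
}

/// Hypothetical simulator interface (the real trait is async and richer).
trait Simulator {
    fn simulate(&self, submission: &Submission) -> Result<(), String>;
}

/// Optimistic variant: accept immediately and leave validation for later.
struct OptimisticSimulator;

impl Simulator for OptimisticSimulator {
    fn simulate(&self, _submission: &Submission) -> Result<(), String> {
        Ok(())
    }
}

/// Stand-in for a validating simulator. A real one would call the
/// execution client over RPC or gRPC instead of checking a single field.
struct GasLimitSimulator;

impl Simulator for GasLimitSimulator {
    fn simulate(&self, submission: &Submission) -> Result<(), String> {
        if submission.gas_used <= submission.gas_limit {
            Ok(())
        } else {
            Err(format!(
                "gas used {} exceeds limit {}",
                submission.gas_used, submission.gas_limit
            ))
        }
    }
}

/// Relay logic written once against the trait, independent of the backend.
fn accept_submission<S: Simulator>(simulator: &S, submission: &Submission) -> bool {
    simulator.simulate(submission).is_ok()
}
```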

- To ensure efficient block propagation without the need for sophisticated Beacon client peering, we've integrated a `BroadcasterTrait`, allowing integration with network services like Fiber for effective payload distribution.

- Similar to the current MEV-Boost-relay implementation, we include a one-second delay before returning unblinded payloads to the proposer. This delay is required to mitigate attack vectors such as the recent low-carb-crusader attack. Consequently, the relay takes on the critical role of beacon-chain block propagation.

- In the future, we will be adding a custom module that will handle optimised peering.
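The interaction between broadcasting and the delay can be sketched as below. This assumes the relay publishes the block first and only then waits before answering the proposer. The `Broadcaster` trait, `RecordingBroadcaster` and `release_payload` are hypothetical names, and the delay is a parameter so the example does not hard-code one second.

```rust
use std::cell::RefCell;
use std::thread;
use std::time::Duration;

/// Hypothetical broadcaster interface; a real one might forward to a
/// beacon node or to a network service such as Fiber.
trait Broadcaster {
    fn broadcast_block(&self, signed_block: &[u8]);
}

/// Test double that records every block it was asked to broadcast.
#[derive(Default)]
struct RecordingBroadcaster {
    sent: RefCell<Vec<Vec<u8>>>,
}

impl Broadcaster for RecordingBroadcaster {
    fn broadcast_block(&self, signed_block: &[u8]) {
        self.sent.borrow_mut().push(signed_block.to_vec());
    }
}

/// Publish the block, wait for `delay`, then hand the payload back
/// to the caller (the proposer in the real flow).
fn release_payload<B: Broadcaster>(
    broadcaster: &B,
    payload: Vec<u8>,
    delay: Duration,
) -> Vec<u8> {
    broadcaster.broadcast_block(&payload);
    thread::sleep(delay);
    payload
}
```

Because the proposer only sees the payload after the delay, the relay's own broadcast is what gets the block onto the network in time, which is why propagation quality matters so much here.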

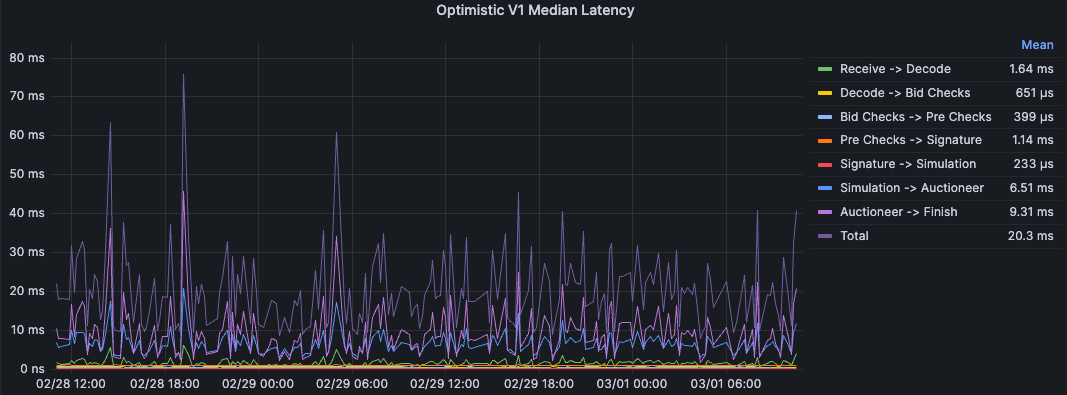

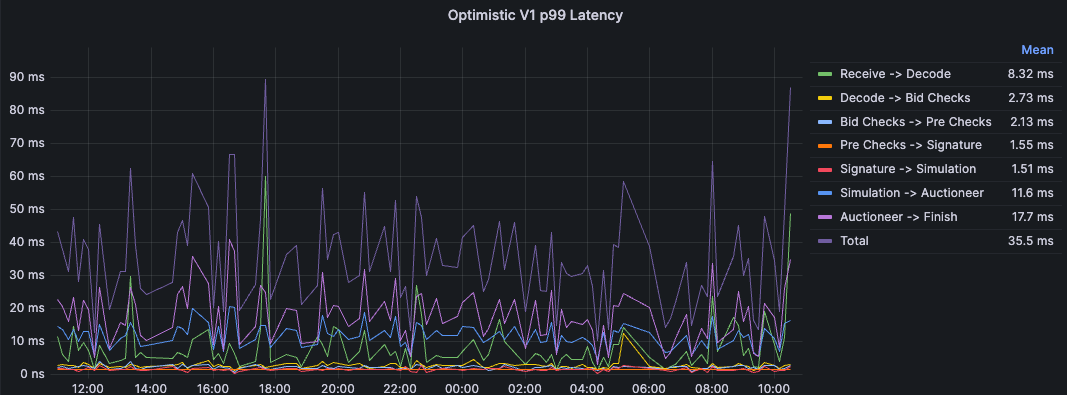

These latency measurements were taken on mainnet over multiple days handling full submissions from all Titan builder clusters.

Analysing the latency metrics presented, we observe significant latency spikes in two distinct segments: Receive -> Decode and Simulation -> Auctioneer. Note: Auctioneer -> Finish is irrelevant, as the bid is already available to the ProposerAPI at the Auctioneer stage.

Receive -> Decode. The primary sources of latency can be attributed to the handling of incoming byte streams and their deserialisation into the SignedBidSubmission structure. This latency is primarily incurred due to the following:

- Byte Stream Processing: The initial step involves reading the incoming byte stream into a memory buffer, specifically using `hyper::body::to_bytes(body)`. This step is necessary to translate raw network data into a usable byte vector. Its latency is heavily influenced by the size of the incoming data and the efficiency of the network I/O operations.

- GZIP Decompression: For compressed payloads, the GZIP decompression process can introduce significant computational overhead, especially for larger payloads.

- Deserialisation Overhead: This is the final deserialisation step, where the byte vector is converted into a `SignedBidSubmission` object using either SSZ or JSON.
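For readers who want to reproduce this kind of breakdown, the sketch below shows one way segment latencies could be derived from per-stage timestamps. The `SubmissionTrace` struct and its field names are assumptions, as is the "Decode -> Bid checks" segment label; the other segment labels follow the ones used in this document.

```rust
/// Timestamps in nanoseconds since the request arrived (hypothetical fields).
struct SubmissionTrace {
    receive_ns: u64,
    decode_ns: u64,
    bid_checks_ns: u64,
    simulation_ns: u64,
    auctioneer_ns: u64,
    finish_ns: u64,
}

impl SubmissionTrace {
    /// Latency of each segment as the difference between adjacent stages.
    fn segments(&self) -> [(&'static str, u64); 5] {
        [
            ("Receive -> Decode", self.decode_ns.saturating_sub(self.receive_ns)),
            ("Decode -> Bid checks", self.bid_checks_ns.saturating_sub(self.decode_ns)),
            ("Bid checks -> Simulation", self.simulation_ns.saturating_sub(self.bid_checks_ns)),
            ("Simulation -> Auctioneer", self.auctioneer_ns.saturating_sub(self.simulation_ns)),
            ("Auctioneer -> Finish", self.finish_ns.saturating_sub(self.auctioneer_ns)),
        ]
    }

    /// The segment that contributed the most latency.
    fn slowest_segment(&self) -> (&'static str, u64) {
        self.segments()
            .iter()
            .copied()
            .max_by_key(|&(_, ns)| ns)
            .unwrap()
    }
}
```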

Simulation -> Auctioneer. In this segment, we store all necessary information about the payload, preparing it to be returned by get_header and get_payload. This is handled using Redis in the current implementation, which can introduce significant latency, especially for larger payloads.

It is worth mentioning that all submissions during this period were simulated optimistically. If this weren’t the case, we would see most of the latency being taken up by Bid checks -> Simulation.

These latency measurements were taken on mainnet over multiple days handling full submissions from all Titan builder clusters.

The graphs illustrate a marked reduction in latency across several operational segments, with the most notable improvements observed in the Receive -> Decode and Bid Checks -> Auctioneer phases.

In addition to the improvements made in internal processing efficiency, using the OptimisticV2 implementation has resulted in significantly lower network latencies from our builders.

In multi-relay cluster configurations, synchronising the best bid across all nodes is crucial to minimise redundant processing. Currently, this synchronisation relies on Redis. While the current RedisCache implementation could be optimised further, we plan on shifting to an in-memory model for our Auctioneer component, eliminating the reliance on Redis for critical-path functions.

Our approach will separate Auctioneer functionalities based on whether they lie in the critical path. Non-critical path functions will continue to use Redis for synchronisation and redundancy. However, critical-path operations like get_last_slot_delivered and check_if_bid_is_below_floor will be moved in-memory. To ensure that we minimise redundant processing, each header update will gossip between local instances.
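As a rough idea of what the in-memory critical path could look like, here is a minimal sketch using atomics. It is not the planned implementation: `InMemoryAuctionState`, `update_floor` and `record_delivery` are hypothetical, bid values are narrowed to `u64` (real bid values are 256-bit), "below floor" is assumed to mean strictly less than the floor, and the per-slot reset of the floor as well as the gossip between local instances are left out.

```rust
use std::sync::atomic::{AtomicU64, Ordering};

/// Hypothetical in-memory state for critical-path checks.
/// Both values start at 0, meaning "no floor" and "nothing delivered yet".
#[derive(Default)]
struct InMemoryAuctionState {
    floor_value: AtomicU64,
    last_slot_delivered: AtomicU64,
}

impl InMemoryAuctionState {
    /// Raise the floor; a lower value never replaces a higher one.
    fn update_floor(&self, value: u64) {
        self.floor_value.fetch_max(value, Ordering::AcqRel);
    }

    /// Lock-free check that needs no network round trip.
    fn check_if_bid_is_below_floor(&self, bid_value: u64) -> bool {
        bid_value < self.floor_value.load(Ordering::Acquire)
    }

    /// Record a delivered slot; older slots never overwrite newer ones.
    fn record_delivery(&self, slot: u64) {
        self.last_slot_delivered.fetch_max(slot, Ordering::AcqRel);
    }

    fn get_last_slot_delivered(&self) -> u64 {
        self.last_slot_delivered.load(Ordering::Acquire)
    }
}
```

Using `fetch_max` keeps both values monotonic even when several request handlers update them concurrently, which is the property a floor check relies on.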

As stated in the "Optimised Block Propagation" section, we plan to develop a module dedicated to optimal beacon client peering. This module will feature a dynamic network crawler designed to fingerprint network nodes to enhance peer discovery and connectivity.

In line with the design principles of Protocol Enforced Proposer Commitments (PEPC), we aim to offer more granularity in allowing proposers to communicate their preferences regarding the types of blocks they wish to commit to. Currently, this is achieved through the preferences field, which enables proposers to indicate whether they prefer to commit to a regional or global filtering policy. In the future, we plan to support additional preferences, such as commitment to blocks adhering to relay-established inclusion lists, blocks produced by trusted builders, and others.

- For proposers, Helix is fully compatible with the current MEV-Boost relay spec.

- For builders, there's a requirement to adapt to these changes as the proposers API response will now contain an extra `preferences` field. Minor changes are also required to the internal logic, as filtering moves from a per-relay basis to a per-validator basis.

Flashbots:

Alex Stokes. A lot of the types used are derived or taken from these repos:

- https://github.com/ralexstokes/ethereum-consensus

- https://github.com/ralexstokes/mev-rs

- https://github.com/ralexstokes/beacon-api-client

- Audit conducted by Spearbit, with Alex Stokes (EF) and Matthias Seitz (Reth, Foundry, ethers-rs) leading as security researchers. See the report here.

MIT + Apache-2.0