Food Delivery Modular Monolith is a practical, imaginary food delivery application built with .NET Core as a modular monolith. It applies several software architecture styles and technologies, such as Modular Monolith Architecture, Vertical Slice Architecture, the CQRS Pattern, Domain Driven Design (DDD), and Event Driven Architecture. For communication between independent modules, we use asynchronous messaging through our In-Memory Broker, and synchronous communication through REST and gRPC calls when data is needed in real time.

💡 This application is not business-oriented; my focus is mostly on the technical part. I just want to implement a sample using different technologies, software architecture designs, principles, and everything else we need to create a modular monolith app.

This application has also been ported to a microservices architecture in another repository, food-delivery-microservices.

🌀 Keep in mind this repository is a work in progress and will be completed over time 🚀

If you like it, feel free to ⭐ this repository. It helps out :)

Thanks a bunch for supporting me!

- Plan

- Technologies - Libraries

- The Domain and Bounded Context - Modules Boundary

- Application Architecture

- Application Structure

- Vertical Slice Flow in Modules

- Prerequisites

- How to Run

- Contribution

- Project References

- License

This project is in progress; new features will be added over time.

The high-level plan is shown in the table below.

| Feature | Status |
|---|---|
| Building Blocks | Completed ✔️ |

- ✔️ .NET 8 - .NET Framework and .NET Core, including ASP.NET and ASP.NET Core

- ✔️ Npgsql Entity Framework Core Provider - Npgsql has an Entity Framework (EF) Core provider. It behaves like other EF Core providers (e.g. SQL Server), so the general EF Core docs apply here as well

- ✔️ FluentValidation - Popular .NET validation library for building strongly-typed validation rules

- ✔️ Swagger & Swagger UI - Swagger tools for documenting APIs built on ASP.NET Core

- ✔️ Serilog - Simple .NET logging with fully-structured events

- ✔️ Polly - Polly is a .NET resilience and transient-fault-handling library that allows developers to express policies such as Retry, Circuit Breaker, Timeout, Bulkhead Isolation, and Fallback in a fluent and thread-safe manner

- ✔️ Scrutor - Assembly scanning and decoration extensions for Microsoft.Extensions.DependencyInjection

- ✔️ Opentelemetry-dotnet - The OpenTelemetry .NET Client

- ✔️ DuendeSoftware IdentityServer - The most flexible and standards-compliant OpenID Connect and OAuth 2.x framework for ASP.NET Core

- ✔️ Newtonsoft.Json - Json.NET is a popular high-performance JSON framework for .NET

- ✔️ AspNetCore.Diagnostics.HealthChecks - Enterprise HealthChecks for ASP.NET Core Diagnostics Package

- ✔️ Microsoft.AspNetCore.Authentication.JwtBearer - Handling JWT authentication and authorization in .NET Core

- ✔️ NSubstitute - A friendly substitute for .NET mocking libraries.

- ✔️ StyleCopAnalyzers - An implementation of StyleCop rules using the .NET Compiler Platform

- ✔️ AutoMapper - Convention-based object-object mapper in .NET.

- ✔️ Hellang.Middleware.ProblemDetails - A middleware for handling exceptions in .NET Core

- ✔️ IdGen - Twitter Snowflake-alike ID generator for .NET

TODO

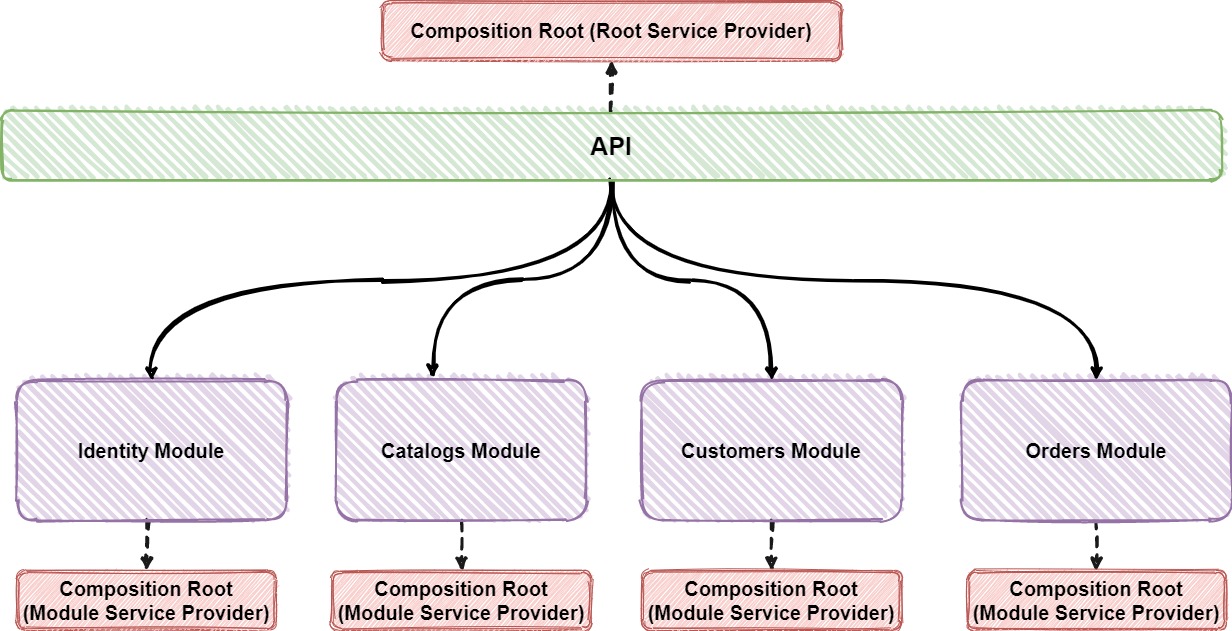

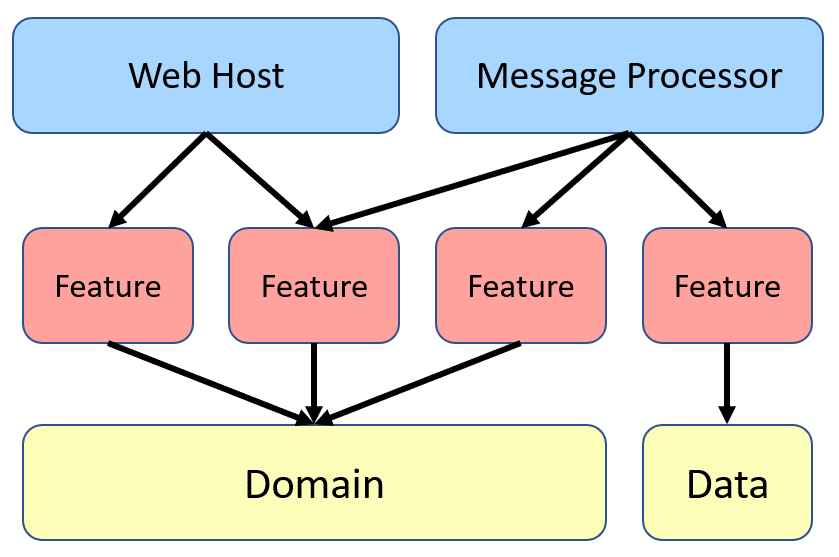

The architecture below shows a single Public API (the API project, or an API Gateway in the microservices world) that hosts all of our internal modules and is accessible to clients through HTTP request/response. The API project routes each HTTP request to the corresponding module. Instead of HTTP or other network calls, the API project reaches our internal modules through In-Memory Calls. In our application this is the responsibility of the GatewayProcessor class for minimal APIs, or CustomServiceBasedControllerActivator for regular controllers.

The API project contains no code of its own; it only hosts our modules and uses the routes defined in each module's vertical slices, for example CreateProductEndpoint in the catalogs module. When a user's HTTP request reaches this endpoint through the API, the endpoint uses GatewayProcessor<CatalogModuleConfiguration> to send an In-Memory request to the module through its dedicated Composition Root. Behind the scenes, the GatewayProcessor for each module uses a dedicated Composition Root (a root Service Provider), and CompositionRootRegistry is responsible for creating and preserving the composition root of each module.

When an In-Memory Call reaches an internal module, the module should process the request autonomously. In a modular monolith, each module should be treated like a microservice with completely autonomous behavior. To reach this goal, we use a separate Composition Root for each module, and each composition root has its own Service Provider. (read more here...)

Composition Root: Using a separate Composition Root per module gives us autonomy. A given module can create its own object dependency graph or Dependency Container, i.e. it should have its own Composition Root.

Each module runs within its own Composition Root (its own Service Provider) and has direct access to its own local database or schema and its dependencies, such as files, mappers, etc. These dependencies are accessible only to that module and not to other modules. In effect, modules are decoupled from each other and autonomous (virtually, not physically). This approach also makes it easier to migrate a module to a microservice when we need to, for example for scaling purposes. In that case we can extract the module into a separate microservice, and our modular monolith will communicate with this service, perhaps through a different broker such as RabbitMQ.
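To make the idea of one composition root per module more concrete, here is a minimal sketch that uses only base class library types. ModuleCompositionRoot, CompositionRootRegistrySketch, IClock and the module names are hypothetical stand-ins for illustration; this is not the project's actual CompositionRootRegistry or GatewayProcessor, which presumably build on a full dependency injection container.

```csharp
#nullable enable
using System;
using System.Collections.Generic;

// Each module registers its services in its own root, so the same service
// type resolves to a different instance per module.
var registry = new CompositionRootRegistrySketch();

var catalogs = registry.GetOrCreate("Catalogs");
catalogs.Register<IClock>(() => new FixedClock("catalogs-clock"));

var customers = registry.GetOrCreate("Customers");
customers.Register<IClock>(() => new FixedClock("customers-clock"));

var catalogClock = (IClock)catalogs.GetService(typeof(IClock))!;
var customerClock = (IClock)customers.GetService(typeof(IClock))!;
Console.WriteLine($"{catalogClock.Name} / {customerClock.Name}");

// Hypothetical service used only for this demo.
public interface IClock { string Name { get; } }
public sealed record FixedClock(string Name) : IClock;

// A tiny per-module container: a stand-in for a real root Service Provider.
public sealed class ModuleCompositionRoot : IServiceProvider
{
    private readonly Dictionary<Type, Lazy<object>> _services = new();

    public void Register<T>(Func<T> factory) where T : class =>
        _services[typeof(T)] = new Lazy<object>(() => factory());

    // Returns null when this module has no registration for the type.
    public object? GetService(Type serviceType) =>
        _services.TryGetValue(serviceType, out var lazy) ? lazy.Value : null;
}

// Creates and keeps exactly one composition root per module name.
public sealed class CompositionRootRegistrySketch
{
    private readonly Dictionary<string, ModuleCompositionRoot> _roots = new();

    public ModuleCompositionRoot GetOrCreate(string moduleName)
    {
        if (!_roots.TryGetValue(moduleName, out var root))
        {
            root = new ModuleCompositionRoot();
            _roots[moduleName] = root;
        }
        return root;
    }
}
```

Because every module resolves services from its own root, a registration made in one module is never visible to another, which is the isolation property described above.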

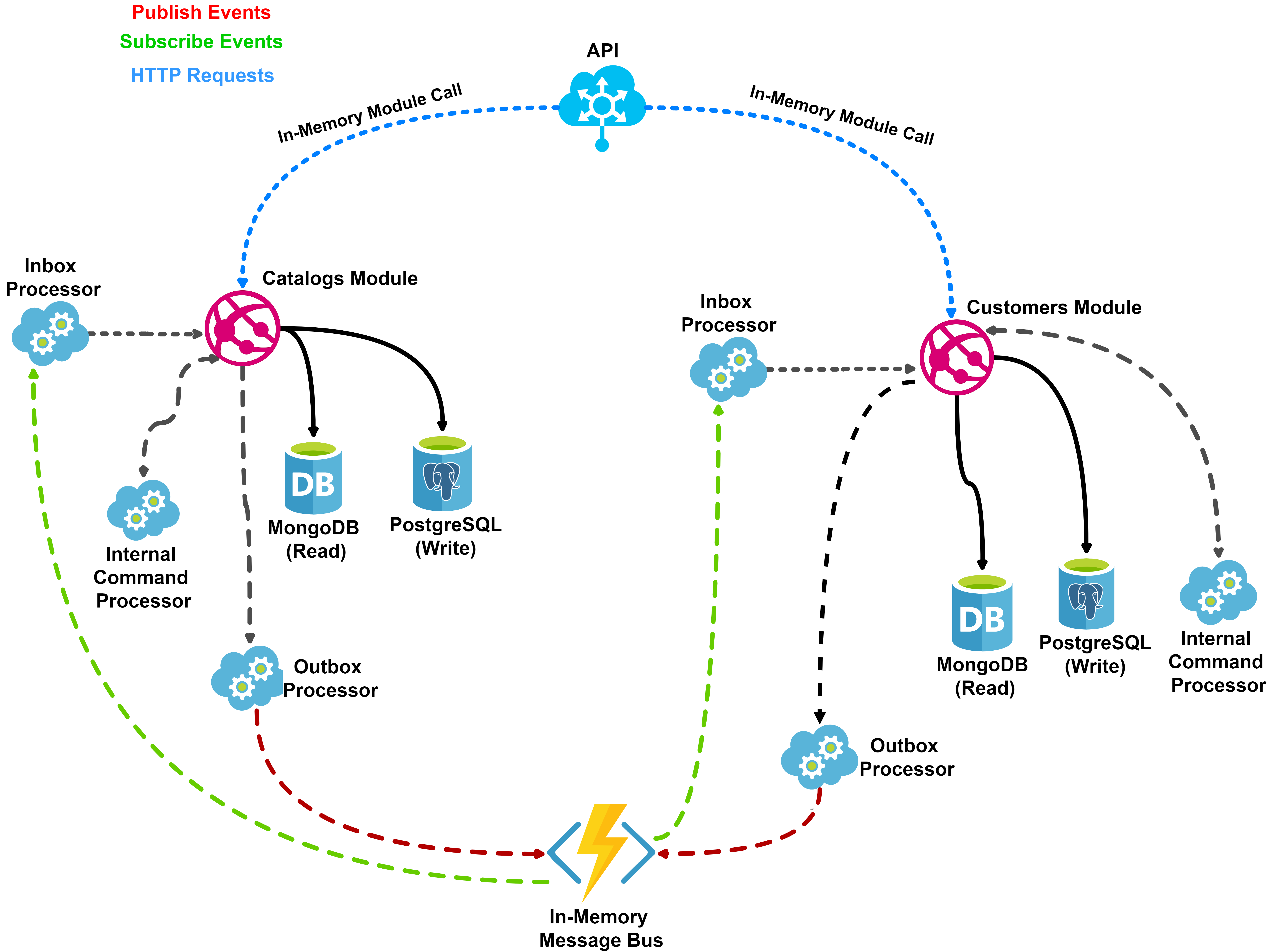

In this architecture, modules should talk to each other asynchronously in most cases, unless we need the data immediately, for example when fetching data to return to the user. For asynchronous communication between modules we use an In-Memory Broker, although other message brokers could be used depending on our needs; for synchronous communication we use REST or gRPC calls.

Modules are event based, which means they can publish and/or subscribe to any events occurring in the setup. With this approach, a module does not need to know about other modules or handle errors that occur in them.
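The publish/subscribe idea can be sketched in a few lines. This is an illustration only: InMemoryBrokerSketch and the ProductCreated event are hypothetical names, and the sketch dispatches handlers inline, whereas a real in-memory broker would more likely queue messages and process them in the background.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

var broker = new InMemoryBrokerSketch();
var received = new List<string>();

// A subscribing module reacts to the event without knowing the publisher.
broker.Subscribe<ProductCreated>(e =>
{
    received.Add($"customers module saw product {e.ProductId}");
    return Task.CompletedTask;
});
// A failing subscriber must not break the publisher or other subscribers.
broker.Subscribe<ProductCreated>(_ => throw new InvalidOperationException("boom"));

await broker.PublishAsync(new ProductCreated(42));
Console.WriteLine(string.Join(Environment.NewLine, received));

// Hypothetical integration event.
public sealed record ProductCreated(long ProductId);

public sealed class InMemoryBrokerSketch
{
    private readonly Dictionary<Type, List<Func<object, Task>>> _handlers = new();

    public void Subscribe<TEvent>(Func<TEvent, Task> handler) where TEvent : class
    {
        if (!_handlers.TryGetValue(typeof(TEvent), out var list))
            _handlers[typeof(TEvent)] = list = new List<Func<object, Task>>();
        list.Add(e => handler((TEvent)e));
    }

    public async Task PublishAsync<TEvent>(TEvent @event) where TEvent : class
    {
        if (!_handlers.TryGetValue(typeof(TEvent), out var list)) return;
        foreach (var handler in list)
        {
            try { await handler(@event); }
            catch (Exception ex)
            {
                // Errors stay on the subscriber side; a real broker would retry or dead-letter.
                Console.Error.WriteLine($"handler failed: {ex.Message}");
            }
        }
    }
}
```

The publisher only knows the event type, so adding or removing a subscribing module requires no change on the publishing side.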

In this architecture we use the CQRS Pattern to separate the read and write models, alongside the other advantages of CQRS. For now I don't use Event Sourcing, for simplicity, but I plan to use it in the future to sync the read and write sides by sending streams, and to use the Projection Feature so that subscribers can sync their data from those streams and build Custom Read Models on their side.

Here I have a write model that uses a Postgres database for better consistency and ACID transaction guarantees. Beside this write side, I use a read model backed by MongoDB for better read performance: nested documents avoid joins, and MongoDB's scaling features give us better scalability.

To sync our read side and write side, we have 2 options using Event Driven Architecture (without using event streams from event sourcing):

- If our Read Sides are in the Same Service, while saving data on the write side I save an Internal Command record in my Command Processor storage (similar to what we do in the outbox pattern). After committing the write side, our command processor manager reads the unsent commands and sends them to their Command Handlers in the same service, and these handlers save their read models in our MongoDB database as the read side.

- If our Read Sides are in Other Services, we publish an integration event (saving this message in the outbox) after committing our write side, and all of our Subscribers can receive this event and save it in their read models (MongoDB).

All of this is optional in the application, and it is possible to use only what a service needs. E.g. if a service does not want to use DDD because its business is very simple and mostly CRUD, we can use a Data Centric Architecture; or if our application is not task based, then instead of CQRS with separate read and write sides we can just use a simple CRUD based application.

Here I used the Outbox pattern for Guaranteed Delivery; the outbox can be used as a landing zone for integration events before they are published to the message broker.

The Outbox pattern ensures that a message is sent (e.g. to a queue) successfully at least once. With this pattern, instead of publishing a message directly to the queue, we put it in temporary storage (e.g. a database table) so that no message is lost and any failure can be handled with a retry mechanism (At-least-once Delivery). For example, when we save data as part of one transaction in our service, we also save the messages (Integration Events) that we later want to process in other microservices as part of the same transaction. Each message to be processed is stored as a StoreMessage with Message Delivery Type Outbox, as part of our MessagePersistence service. This infrastructure also supports the Inbox Message Delivery Type and the Internal Message Delivery Type (Internal Processing).

We also have a background service, MessagePersistenceBackgroundService, that periodically checks our StoreMessages in the database and tries to send them to the broker using our MessagePersistenceService service. After it gets confirmation of publishing (e.g. an ACK from the broker), it marks the message as processed to avoid resending.

However, it is possible that we will not be able to mark the message as processed due to a communication error, for example when the broker is unavailable. In this case our MessagePersistenceBackgroundService tries to resend the messages that are not yet processed, which is actually At-Least-Once delivery. We can be sure that a message will be sent at least once, but it can be sent multiple times too! That’s why another name for this approach is Once-Or-More delivery. We should remember this and design the receivers of our messages to be idempotent, which means:

In messaging, this concept translates into a message that has the same effect whether it is received once or multiple times. This means that a message can safely be resent without causing any problems, even if the receiver receives duplicates of the same message.
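The resend behavior described above can be simulated in memory. The sketch below is an assumption-laden illustration, not the project's MessagePersistenceService: OutboxMessage, TryPublish and the simulated lost ACK are all hypothetical, and a plain list stands in for the database table.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Linq;

var outbox = new List<OutboxMessage>();
var delivered = new List<Guid>();   // what the simulated broker actually received
var ackLostOnce = true;             // simulate one lost ACK

// 1. The business change and the outbox record are saved together
//    (a single transaction in a real database).
outbox.Add(new OutboxMessage(Guid.NewGuid(), "OrderCreated"));

// 2. Background dispatcher: runs periodically; here we simply run it twice.
for (var pass = 1; pass <= 2; pass++)
{
    foreach (var message in outbox.Where(m => !m.Processed))
    {
        if (TryPublish(message)) message.Processed = true;
    }
}

Console.WriteLine($"deliveries: {delivered.Count}, pending: {outbox.Count(m => !m.Processed)}");
// prints "deliveries: 2, pending: 0"

// Hypothetical broker call: returns true only when an ACK comes back.
bool TryPublish(OutboxMessage message)
{
    delivered.Add(message.Id);                               // the broker got the message...
    if (ackLostOnce) { ackLostOnce = false; return false; }  // ...but the ACK was lost
    return true;
}

public sealed class OutboxMessage
{
    public OutboxMessage(Guid id, string type) { Id = id; Type = type; }
    public Guid Id { get; }
    public string Type { get; }
    public bool Processed { get; set; }
}
```

The message ends up delivered twice even though it was saved once, which is exactly why receivers need to be idempotent.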

To handle Idempotency and Exactly-once Delivery on the receiver side, we can use the Inbox Pattern.

This pattern is similar to the Outbox Pattern. It is used to handle incoming messages (e.g. from a queue) so that a single message is processed only once, even if it is received multiple times. Accordingly, we have a table in which we store incoming messages. Contrary to the outbox pattern, we first save the message in the database, and then return an ACK to the queue. If the save succeeded but we didn't return the ACK to the queue, the delivery will be retried. That’s why we have at-least-once delivery again. After that, an inbox background process runs and processes the inbox messages that have not been processed yet. We can also prevent a message with a specific MessageId from being executed multiple times. After executing our inbox message, for example by calling our subscribed event handlers, we send an ACK to the queue when they succeed. (The inbox part of the system is in progress; I will cover this part as soon as possible.)
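Since the inbox part is still in progress, the following is only a sketch of the deduplication idea, under the assumption that each message carries a unique id. InboxSketch and its members are hypothetical, and a dictionary stands in for the inbox table.

```csharp
#nullable enable
using System;
using System.Collections.Generic;

var inbox = new InboxSketch();
var handled = 0;
var messageId = Guid.NewGuid();

// The same message arrives twice (at-least-once delivery from the sender).
inbox.Store(messageId, "OrderCreated");
inbox.Store(messageId, "OrderCreated");

// Inbox background process: handle each stored message once.
inbox.ProcessPending(_ => handled++);
inbox.ProcessPending(_ => handled++); // a second run finds nothing new

Console.WriteLine($"handled {handled} time(s)"); // prints "handled 1 time(s)"

public sealed class InboxSketch
{
    // Keyed by message id, so a duplicate delivery cannot create a second row.
    private readonly Dictionary<Guid, (string Payload, bool Processed)> _messages = new();

    // Returns false for a duplicate; the caller can still ACK it safely.
    public bool Store(Guid messageId, string payload) =>
        _messages.TryAdd(messageId, (payload, false));

    public void ProcessPending(Action<string> handler)
    {
        // Iterate over a copy of the keys because entries are updated in the loop.
        foreach (var id in new List<Guid>(_messages.Keys))
        {
            var (payload, processed) = _messages[id];
            if (processed) continue;
            handler(payload);
            _messages[id] = (payload, true); // mark only after the handler succeeded
        }
    }
}
```

In a real database the same effect usually comes from a unique constraint on the message id column plus a processed flag or timestamp.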

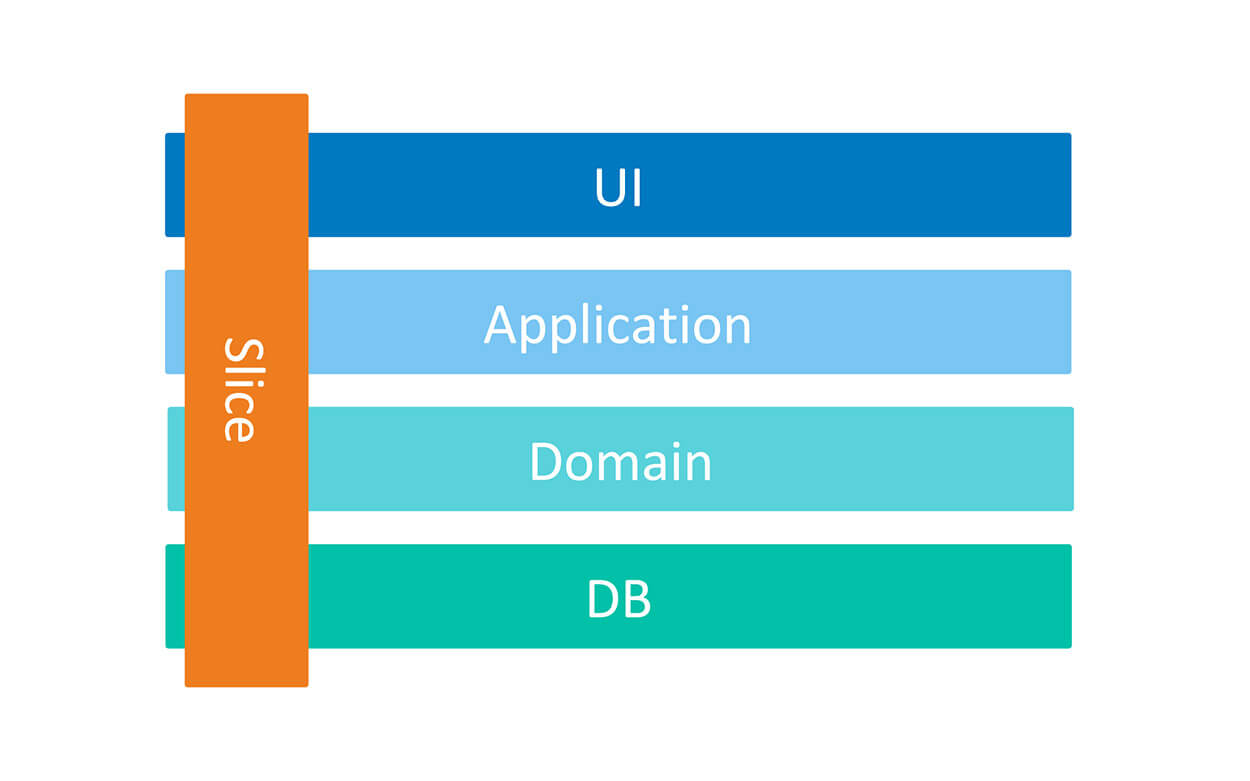

In this project I used Vertical Slice Architecture (see Restructuring to a Vertical Slice Architecture), together with a feature folder structure.

- We treat each request as a distinct use case or slice, encapsulating and grouping all concerns from front-end to back.

- When adding or changing a feature in an n-tier application, we typically touch many different "layers": we change the user interface, add fields to models, modify validation, and so on. Instead of coupling across a layer, we couple vertically along a slice, and each change affects only one slice.

- We minimize coupling between slices, and maximize coupling in a slice.

- With this approach, each of our vertical slices can decide for itself how best to fulfill the request. New features only add code; we're not changing shared code and worrying about side effects. The CQRS pattern is a good match for implementing vertical slice architecture.

I also used CQRS here to decompose my features into very small parts, which:

- maximizes performance, scalability and simplicity.

- makes adding a new feature very easy, without any breaking change in other parts of our code. New features only add code; we're not changing shared code and worrying about side effects.

- makes the application easy to maintain: any change affects only one command or query (or a slice) and avoids breaking changes in other parts.

- gives us better separation of concerns and cross-cutting concerns (with the help of MediatR behavior pipelines) in our code, instead of one big service class doing a lot of things.

With CQRS, our code will be more aligned with SOLID principles, especially with:

- Single Responsibility rule - because the logic responsible for a given operation is enclosed in its own type.

- Open-Closed rule - because to add a new operation you don’t need to edit any of the existing types; instead you add a new file with a new type representing that operation.

Here, instead of Technical Splitting (for example a folder or layer each for our services, controllers and data models), which increases dependencies between those technical parts and forces us to jump between layers or folders, we cut each business functionality into vertical slices. Inside each of these slices we have a Technical Folder Structure specific to that feature (command, handlers, infrastructure, repository, controllers, data models, ...).

Usually, when we work on a given functionality we need some technical things for example:

- API endpoint (Controller)

- Request Input (Dto)

- Request Output (Dto)

- A class to handle the request, for example a Command and Command Handler or a Query and Query Handler

- Data Model

Now we have all of these things beside each other, which decreases jumping and dependencies between layers or folders.

Keeping such a split works great with CQRS. It segregates our operations and slices the application code vertically instead of horizontally. In our CQRS pattern, each command/query handler is a separate slice. This is where you can reduce coupling between layers. Each handler can be a separate code unit, even copy/pasted. Thanks to that, we can tune a specific method so that it does not follow the general conventions (e.g. use a custom SQL query or even different storage). In a traditional layered architecture, when we change the core generic mechanism in one layer, it can impact all methods.
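As an illustration of a single slice, the sketch below keeps the endpoint, request and response DTOs, command, handler and data model of a hypothetical "create product" use case side by side. All type names are invented for this example (the project's real CreateProductEndpoint is not shown here), the in-memory dictionary stands in for a database, and the handler is called directly rather than through MediatR.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Everything the "create product" use case needs lives in this one slice.
var store = new Dictionary<long, Product>();
var endpoint = new CreateProductEndpointSketch(new CreateProductHandler(store));

var response = await endpoint.HandleAsync(new CreateProductRequest("Pizza", 9.5m));
Console.WriteLine($"created product {response.Id}: {store[response.Id].Name}");

// Request/response DTOs for the endpoint.
public sealed record CreateProductRequest(string Name, decimal Price);
public sealed record CreateProductResponse(long Id);

// Command and its handler (the write side of the slice).
public sealed record CreateProduct(string Name, decimal Price);

public sealed class CreateProductHandler
{
    private readonly Dictionary<long, Product> _store;
    public CreateProductHandler(Dictionary<long, Product> store) => _store = store;

    public Task<long> Handle(CreateProduct command)
    {
        // Validation sits next to the logic it protects.
        if (string.IsNullOrWhiteSpace(command.Name)) throw new ArgumentException("Name is required.");
        if (command.Price <= 0) throw new ArgumentException("Price must be positive.");

        var id = _store.Count + 1L;
        _store[id] = new Product(id, command.Name, command.Price);
        return Task.FromResult(id);
    }
}

// Endpoint: maps the request to the command and the result to the response.
public sealed class CreateProductEndpointSketch
{
    private readonly CreateProductHandler _handler;
    public CreateProductEndpointSketch(CreateProductHandler handler) => _handler = handler;

    public async Task<CreateProductResponse> HandleAsync(CreateProductRequest request)
    {
        var id = await _handler.Handle(new CreateProduct(request.Name, request.Price));
        return new CreateProductResponse(id);
    }
}

// Data model used by this slice.
public sealed record Product(long Id, string Name, decimal Price);
```

Changing how products are created touches only these types; a different slice is free to use another storage mechanism or a hand-written query without affecting this one.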

TODO

TODO

- This application uses Https for hosting APIs. To set up a valid certificate on your machine, you can create a Self-Signed Certificate.

- Install git - https://git-scm.com/downloads.

- Install .NET Core 7.0 - https://dotnet.microsoft.com/download/dotnet/7.0.

- Install Visual Studio 2022, Rider or VSCode.

- Install docker - https://docs.docker.com/docker-for-windows/install/.

- Make sure that you have ~10GB disk space.

- Clone the project from https://github.com/mehdihadeli/food-delivery-modular-monolith and make sure that it compiles.

- Run the docker-compose.infrastructure.yaml file to start the prerequisite infrastructure, using the docker-compose -f ./deployments/docker-compose.infrastructure.yaml up -d command.

- Open the food-delivery.sln solution.

To run this application, we can run the application and its modules by running the src/Api/FoodDelivery.Api/FoodDelivery.Api.csproj project in our Dev Environment; for me it's Rider.

For testing the APIs I used the REST Client plugin of VSCode; its scenario files are available in the _httpclients folder. Also, after running the API you have access to the Swagger OpenAPI documentation for all modules at the /swagger route path.

In this application I use a fake email sender named ethereal as an SMTP provider for sending emails. After the application sends an email, you can see the list of sent emails in the ethereal messages panel. My temp username and password are available in all of the appsettings files.

The application is in development status. Feel free to submit a pull request or create an issue.

- https://github.com/kgrzybek/modular-monolith-with-ddd

- https://github.com/oskardudycz/EventSourcing.NetCore

- https://github.com/dotnet-architecture/eShopOnContainers

- https://github.com/jbogard/ContosoUniversityDotNetCore-Pages

- https://github.com/thangchung/clean-architecture-dotnet

- https://github.com/jasontaylordev/CleanArchitecture

- https://github.com/DijanaPenic/DDD-VShop

The project is under MIT license.