This is an introduction to reinforcement learning based on the Imperial College course and its accompanying resources. The code here covers the theory and implementation of tabular methods.

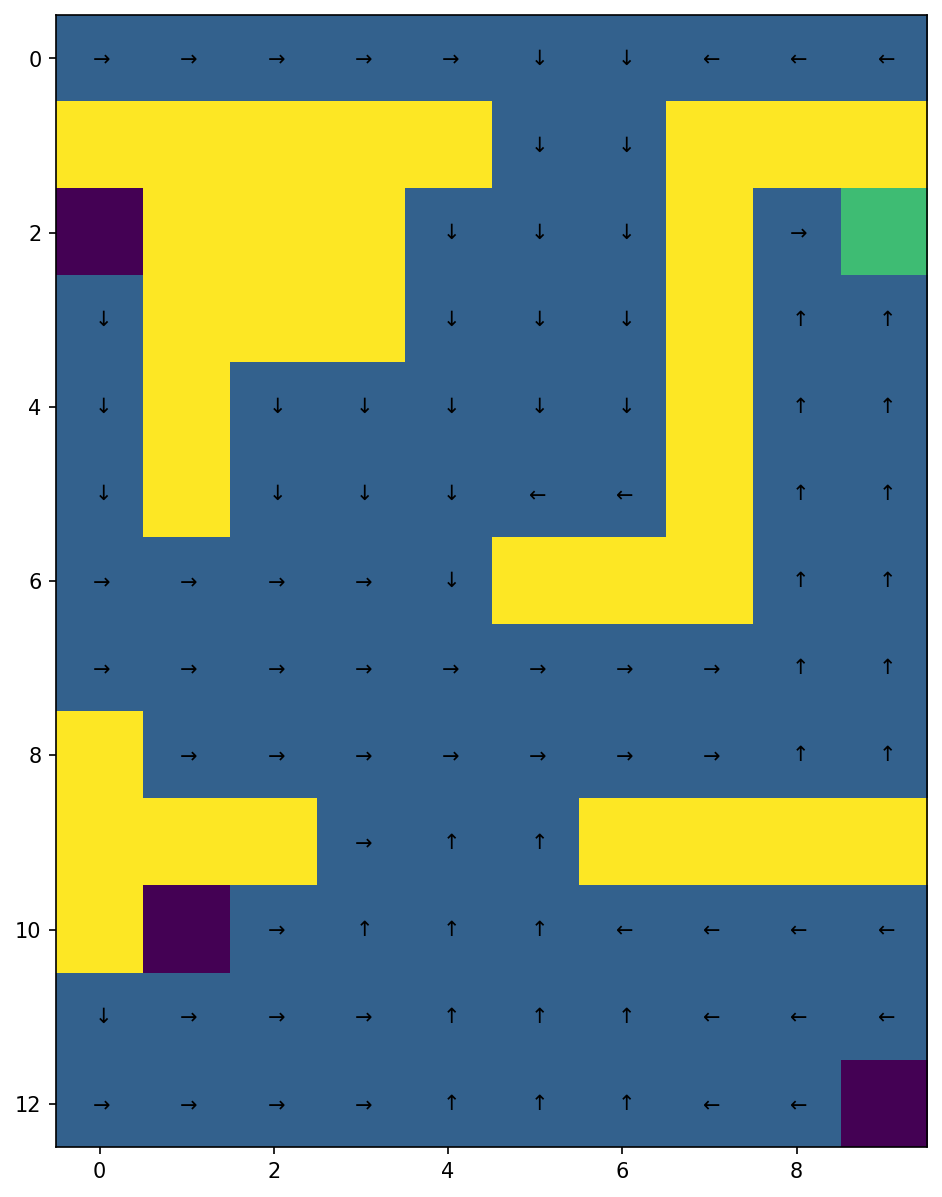

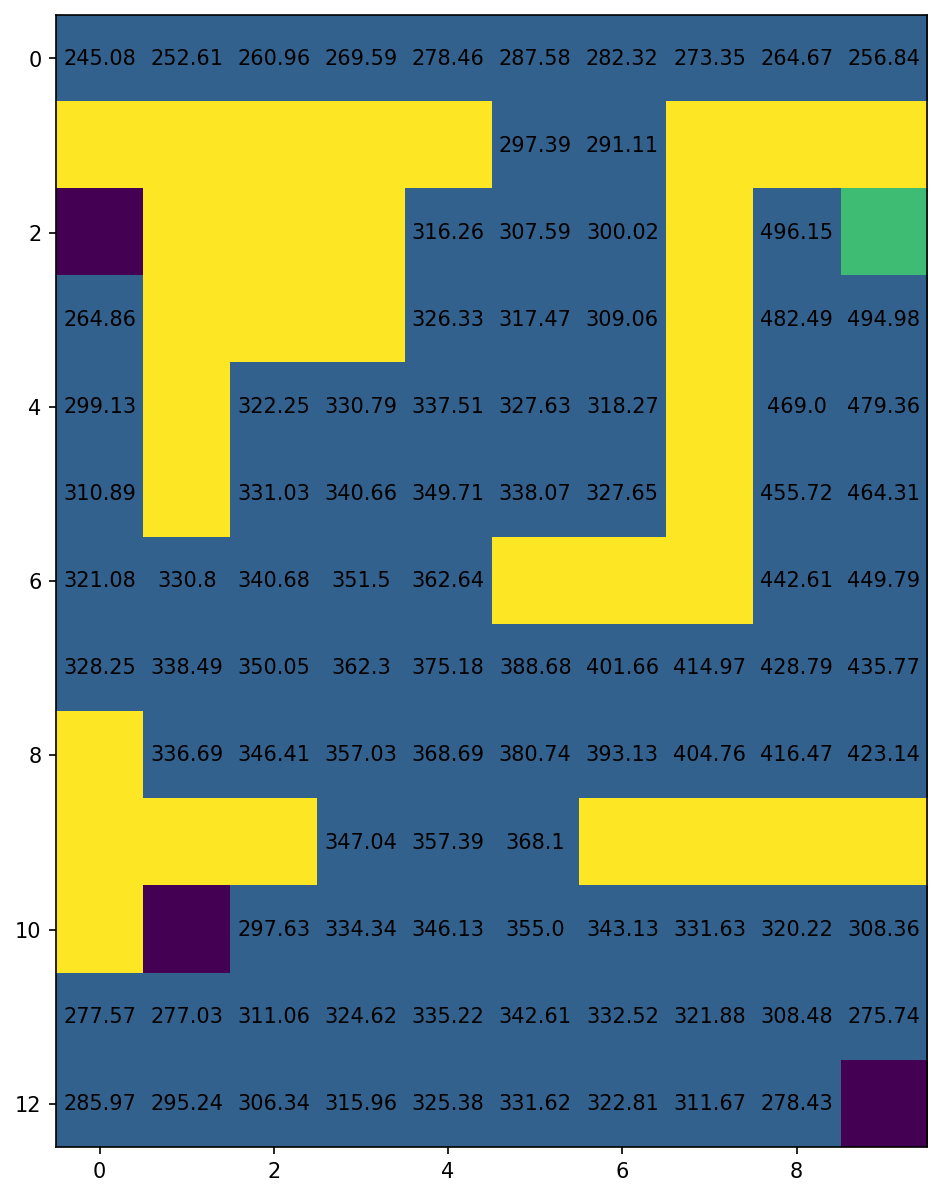

Inspired by Sutton and Barto's example in chapter 4 of their book, we solve a maze to learn how to implement tabular methods. We implement dynamic programming, as well as methods that do not require full knowledge of the environment's dynamics, namely Monte-Carlo methods and Temporal-Difference learning. The agent starts from one of the top-row cells and must reach the green cell while maximizing its cumulative reward. The rewards are -50 for reaching an absorbing state (the purple cells), -1 for reaching a blue cell, and 500 for reaching the goal cell.

To apply dynamic programming to this problem we could use either value iteration or policy iteration. Both converge to the optimal value function in the limit. However, value iteration does not run a full policy evaluation (several sweeps through the state set) at every iteration, so it typically needs less computation to converge. We therefore use value iteration here.

Bear in mind that value iteration can be seen as a special case of policy iteration in which the policy evaluation step is truncated to a single sweep and the value function is updated with the Bellman optimality equation.
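To make the single-sweep update concrete, here is a minimal sketch of value iteration. It is not the notebook's implementation: the 3-state corridor, the arrays `P` and `R`, and the parameters `gamma` and `tol` are assumptions chosen for illustration, reusing only the -1 step reward and the 500 goal reward from the maze description.

```python
import numpy as np


def value_iteration(P, R, gamma=0.9, tol=1e-8, max_iters=10_000):
    """Synchronous value iteration.

    P[s, a, s2] is the probability of moving from s to s2 under action a,
    R[s, a, s2] is the reward received on that transition.
    """
    V = np.zeros(P.shape[0])
    for _ in range(max_iters):
        # One sweep: Bellman optimality backup for every state.
        Q = np.einsum("sap,sap->sa", P, R + gamma * V[None, None, :])
        V_new = Q.max(axis=1)
        delta = np.max(np.abs(V_new - V))
        V = V_new
        if delta < tol:
            break
    # Greedy policy with respect to the final value function.
    Q = np.einsum("sap,sap->sa", P, R + gamma * V[None, None, :])
    return V, Q.argmax(axis=1)


# Hypothetical toy problem (not the maze): states 0, 1, 2 in a row,
# state 2 is the absorbing goal. Actions: 0 = left, 1 = right.
P = np.zeros((3, 2, 3))
R = np.zeros((3, 2, 3))
P[0, 0, 0] = 1.0; R[0, 0, 0] = -1.0   # left at the wall: stay in place
P[0, 1, 1] = 1.0; R[0, 1, 1] = -1.0
P[1, 0, 0] = 1.0; R[1, 0, 0] = -1.0
P[1, 1, 2] = 1.0; R[1, 1, 2] = 500.0  # reaching the goal
P[2, :, 2] = 1.0                      # absorbing: stays put, reward 0

V, policy = value_iteration(P, R)
```

On this toy problem the greedy policy moves right in both non-terminal states. The sketch uses synchronous sweeps (a new array per sweep); updating `V` in place is an equally valid variant.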

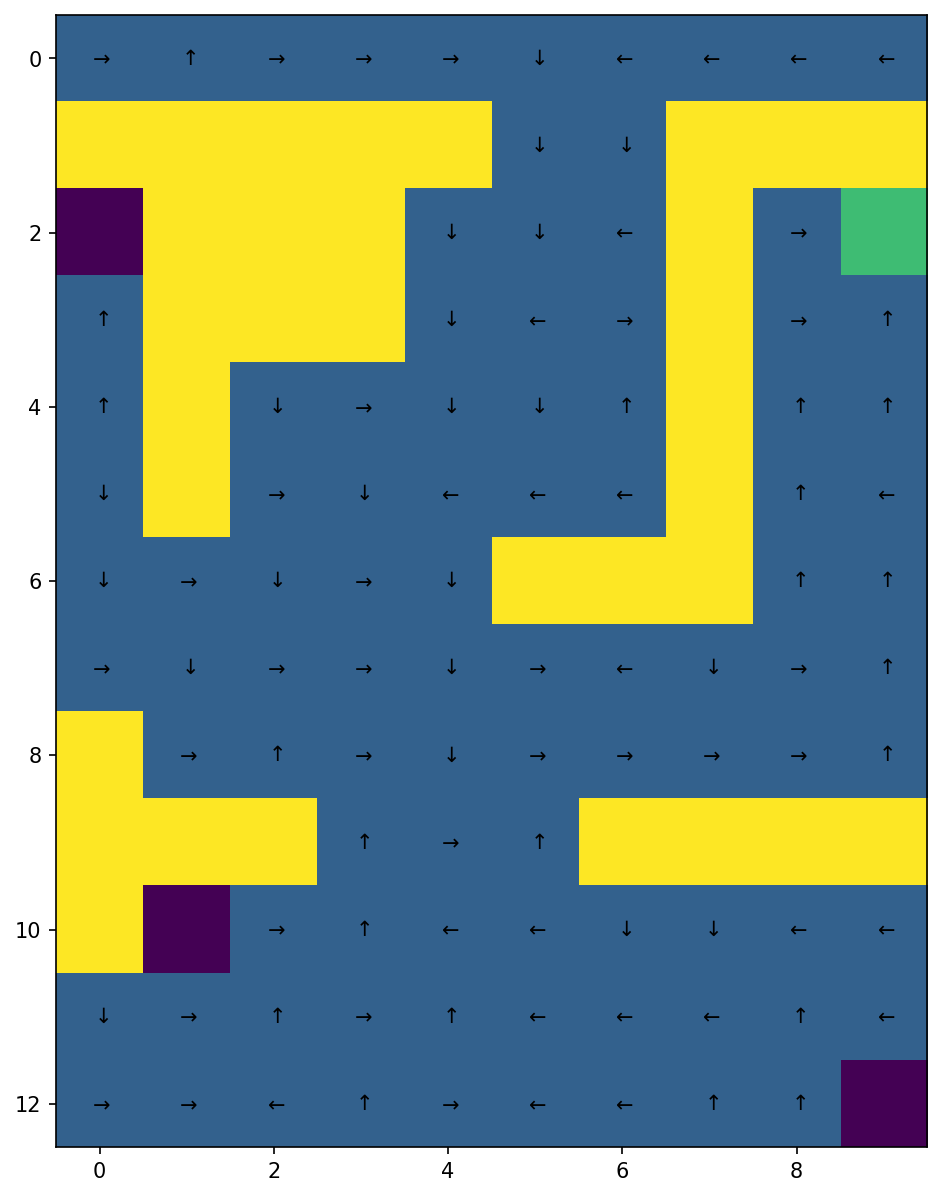

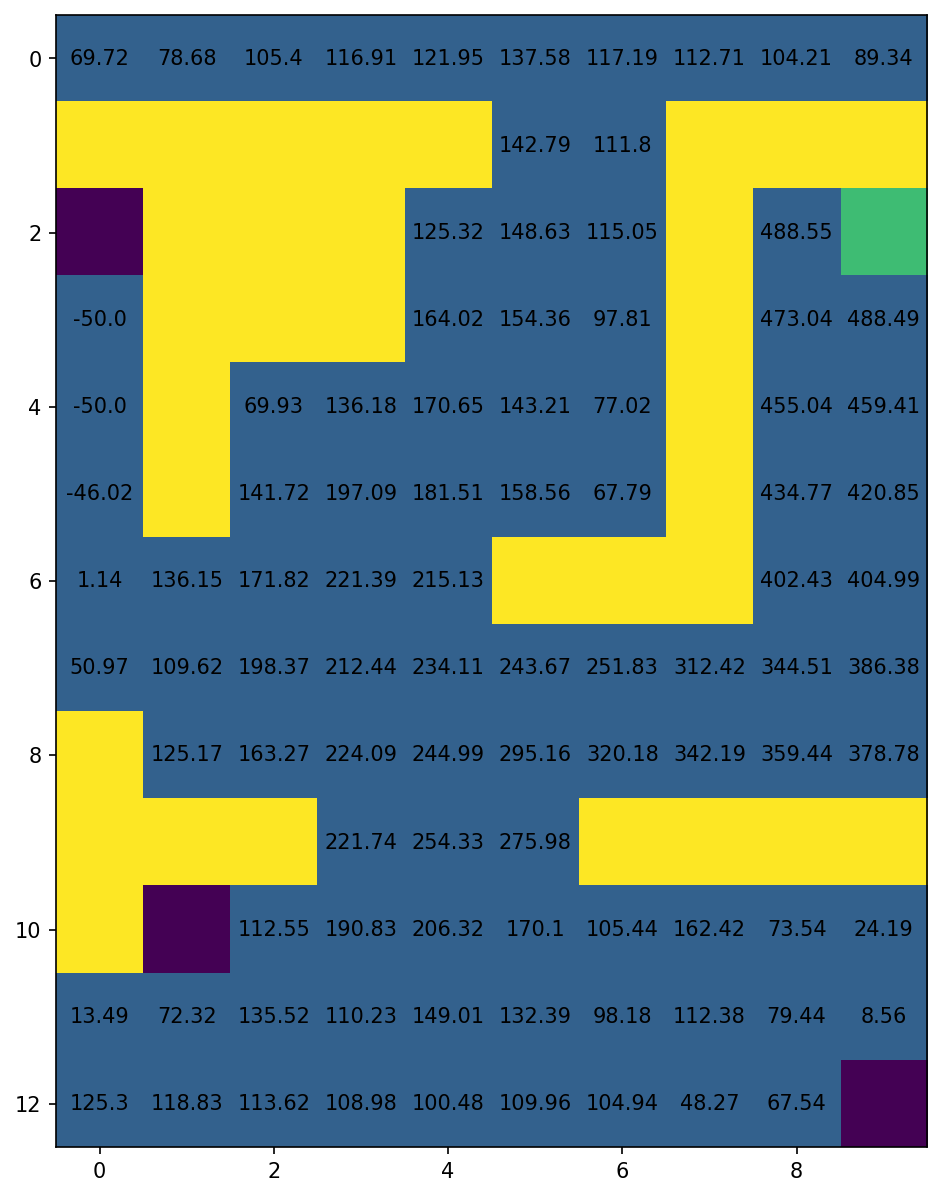

| Optimal policy | Optimal value function |
|---|---|
| | |

See the notebook for further details on the influence of

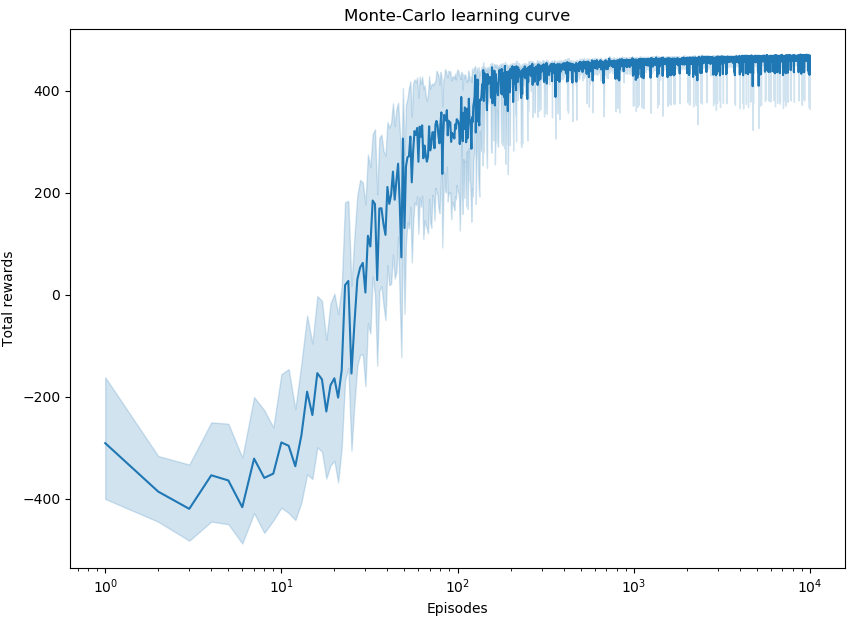

To solve the control problem with a Monte-Carlo agent we could have used an off-policy MC control algorithm. However, as Sutton and Barto point out, such a method learns the value function and the policy only from the last steps of the episodes, which can slow the learning process. Hence, we use an on-policy first-visit Monte-Carlo control algorithm.
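The idea can be sketched as follows. This is an illustrative sketch and not the notebook's agent: the 4-state corridor, the `step` function, the epsilon-greedy exploration scheme, and all hyperparameters (`gamma`, `epsilon`, `max_steps`, the episode count) are assumptions made for the example.

```python
import random
from collections import defaultdict

GOAL = 3          # hypothetical corridor: states 0..3, goal at state 3
ACTIONS = (0, 1)  # 0 = left, 1 = right


def step(state, action):
    """Toy deterministic dynamics: -1 per move, 500 on reaching the goal."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    if nxt == GOAL:
        return nxt, 500.0, True
    return nxt, -1.0, False


def epsilon_greedy(Q, state, epsilon, rng):
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: Q[(state, a)])


def mc_control(n_episodes=2000, gamma=0.9, epsilon=0.1, max_steps=100, seed=0):
    rng = random.Random(seed)
    Q = defaultdict(float)
    visits = defaultdict(int)
    for _ in range(n_episodes):
        # Generate one episode with the current epsilon-greedy policy.
        state, episode = 0, []
        for _ in range(max_steps):
            action = epsilon_greedy(Q, state, epsilon, rng)
            nxt, reward, done = step(state, action)
            episode.append((state, action, reward))
            state = nxt
            if done:
                break
        # Time step at which each (state, action) pair first occurs.
        first_visit = {}
        for t, (s, a, _) in enumerate(episode):
            first_visit.setdefault((s, a), t)
        # Walk backwards accumulating the return; update at first visits only.
        G = 0.0
        for t in range(len(episode) - 1, -1, -1):
            s, a, r = episode[t]
            G = r + gamma * G
            if first_visit[(s, a)] == t:
                visits[(s, a)] += 1
                Q[(s, a)] += (G - Q[(s, a)]) / visits[(s, a)]
    return Q


Q = mc_control()
greedy_policy = [max(ACTIONS, key=lambda a: Q[(s, a)]) for s in range(GOAL)]
```

Because the policy being improved is the same epsilon-greedy policy that generates the episodes, every step of every episode contributes to learning, which is the motivation given above for staying on-policy.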

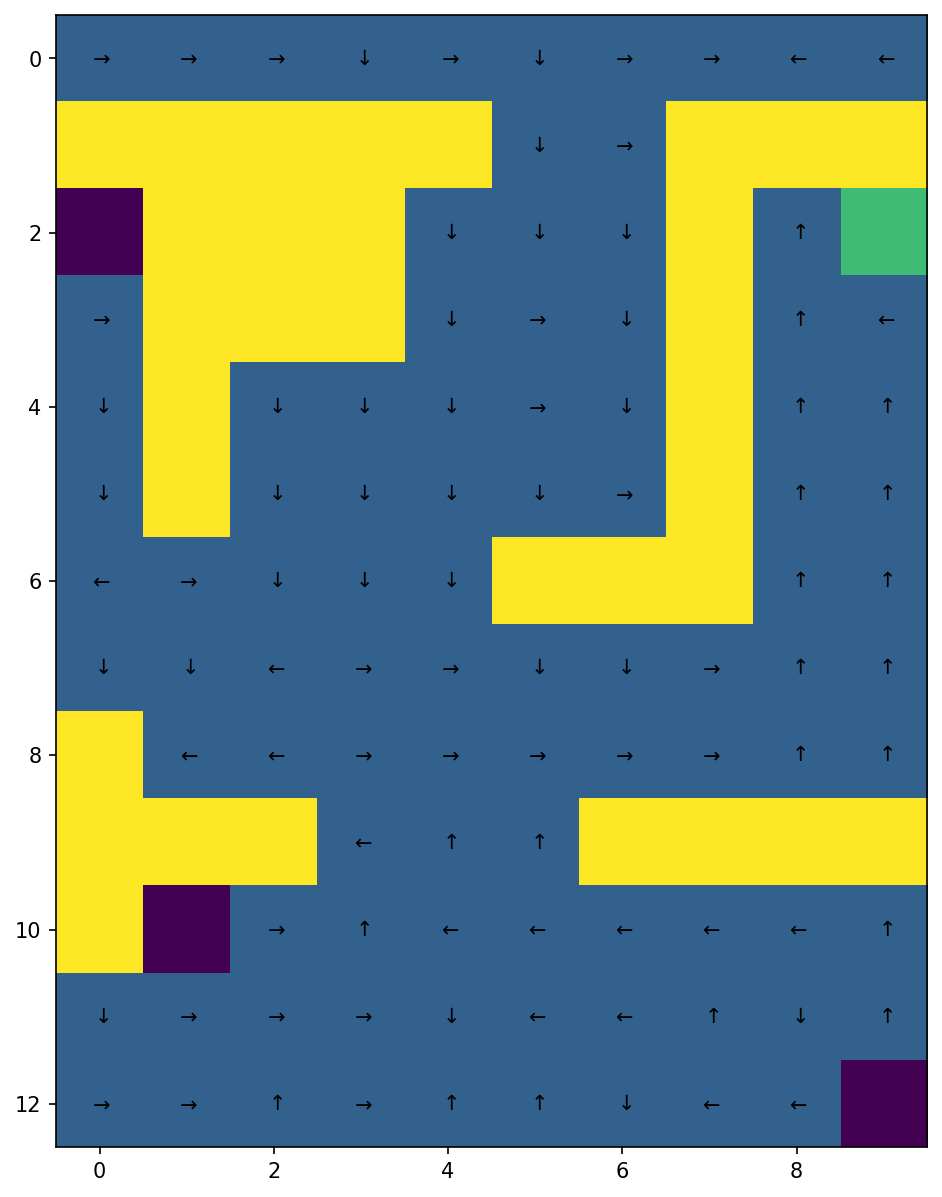

| Optimal policy after 1000 episodes | Corresponding optimal value function |
|---|---|
| | |

For more details on the variability of the learning process and the impact of the variation of

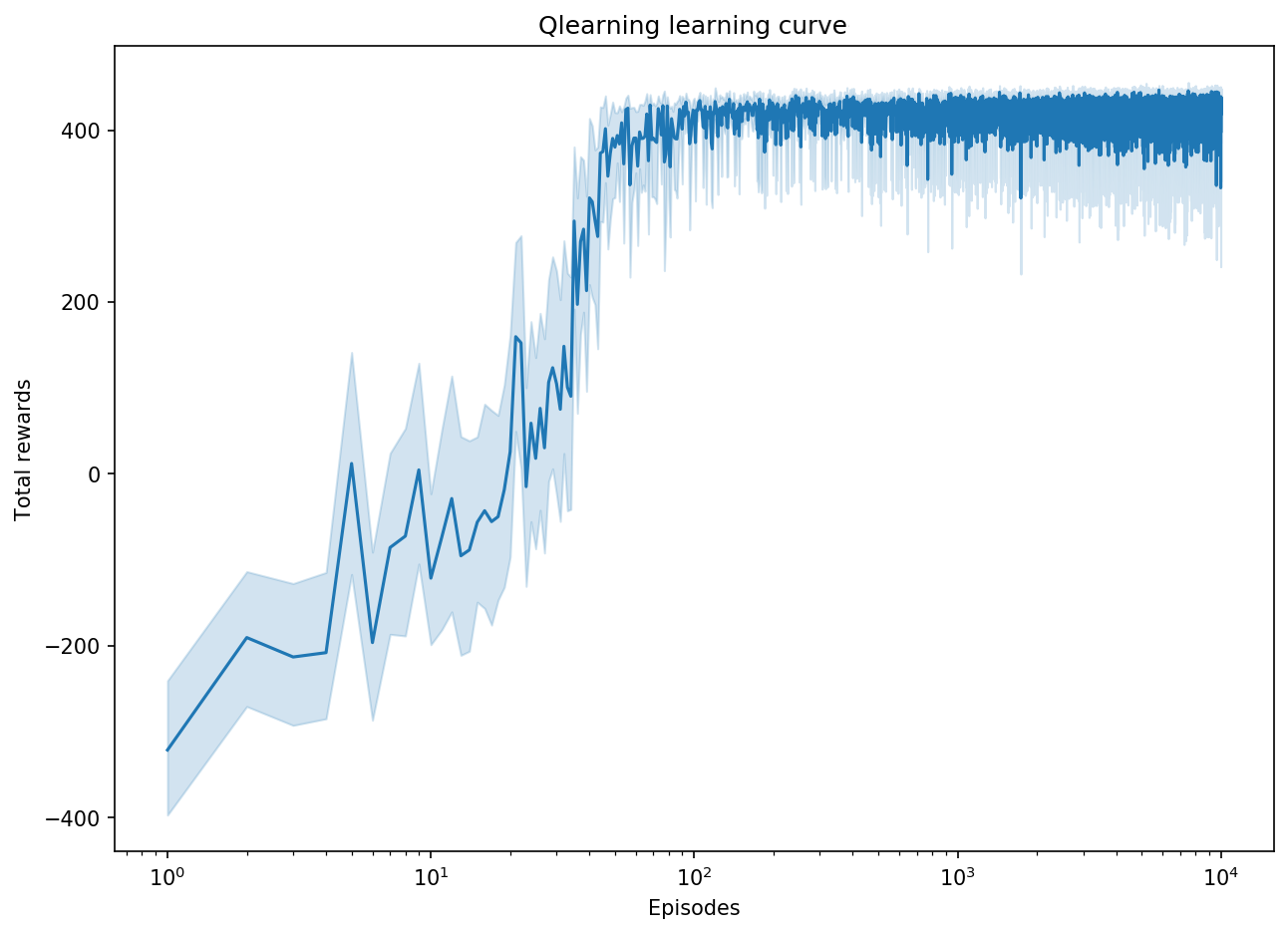

When it comes to Temporal-Difference control, we can apply on-policy methods such as SARSA or off-policy methods such as Q-learning. Q-learning updates the Q-values according to a greedy policy and explores new actions according to a behaviour policy which is an

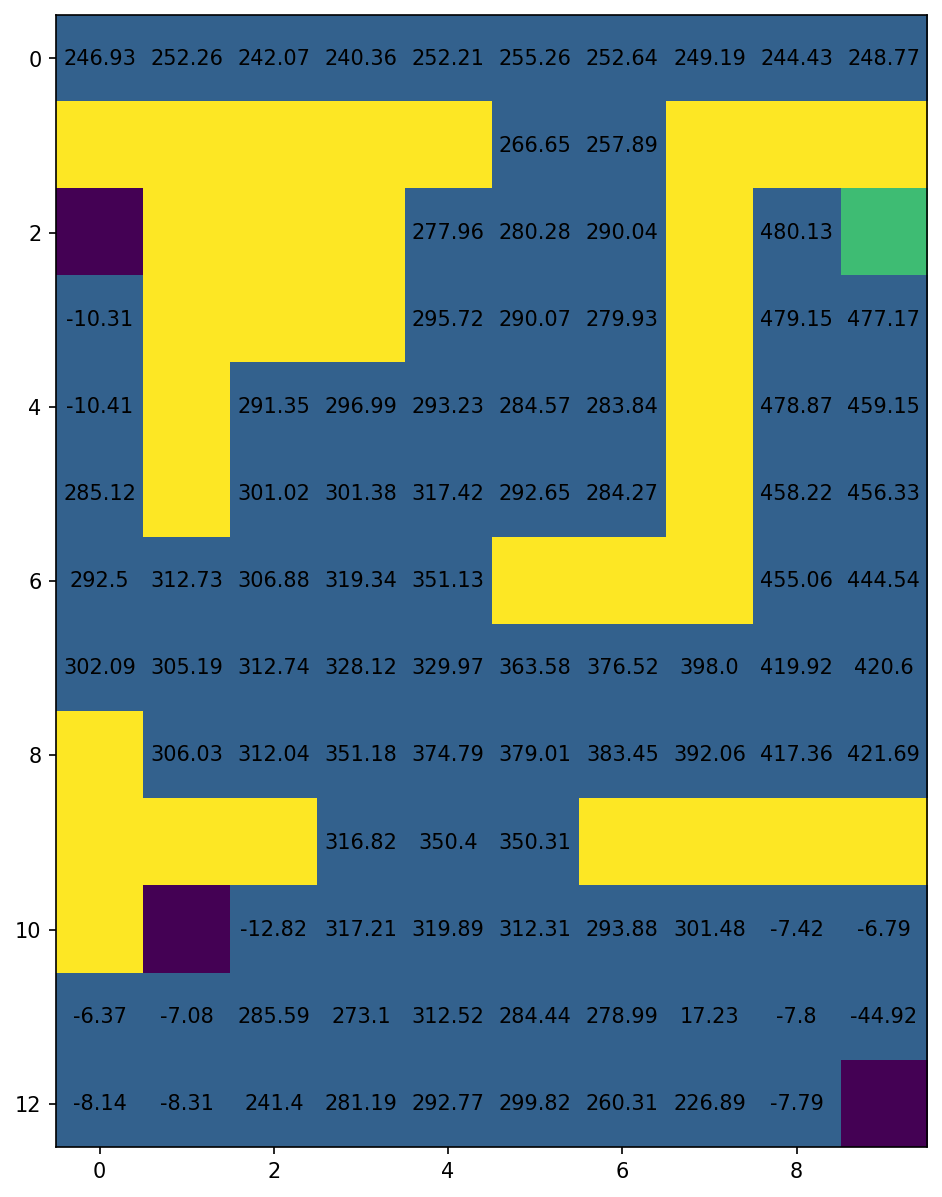
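As a rough illustration of the off-policy update, here is a minimal tabular Q-learning sketch. It is not the notebook's code: the 4-state corridor, the `step` function, the choice of an epsilon-greedy behaviour policy, and the hyperparameters (`alpha`, `gamma`, `epsilon`, `max_steps`) are all assumptions made for the example.

```python
import random

N_STATES, GOAL = 4, 3  # hypothetical corridor: states 0..3, goal at state 3
ACTIONS = (0, 1)       # 0 = left, 1 = right


def step(state, action):
    """Toy deterministic dynamics: -1 per move, 500 on reaching the goal."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    if nxt == GOAL:
        return nxt, 500.0, True
    return nxt, -1.0, False


def q_learning(n_episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1,
               max_steps=100, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(n_episodes):
        state = 0
        for _ in range(max_steps):
            # Behaviour policy (assumed here): epsilon-greedy on current Q.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: Q[state][a])
            nxt, reward, done = step(state, action)
            # Target policy: greedy, so bootstrap from the best next action.
            target = reward + (0.0 if done else gamma * max(Q[nxt]))
            Q[state][action] += alpha * (target - Q[state][action])
            state = nxt
            if done:
                break
    return Q


Q = q_learning()
greedy_policy = [max(ACTIONS, key=lambda a: Q[s][a]) for s in range(GOAL)]
```

The only difference from SARSA is the target: SARSA would bootstrap from the action the behaviour policy actually takes next, whereas this update uses `max(Q[nxt])` regardless of what is explored.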

| Optimal policy after 1000 episodes | Corresponding optimal value function |
|---|---|
| | |