Legal Disclaimer: The images shown above are included in the image-centric datasets covered by Affection (see below). We do not own these images and do not claim any copyright in them.

This codebase accompanies our CVPR-2023 paper.

❗ It is currently in its most bare-bones form, allowing you to load and inspect the Affection data. Stay tuned for further code developments.

Optionally, first create a clean environment with PyTorch installed, e.g., with conda:

conda create -n eeai python=3.8

conda activate eeai

Then clone the repository and install it in editable mode:

git clone https://github.com/optas/eeai.git

cd eeai

pip install -e .

For this bare-bones version of the repo, setup.py lists the few straightforward dependencies. Feel free to use any version of Python 3.
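As a quick sanity check after installation, the following minimal sketch reports which packages can be found in the active environment. It uses only the Python standard library. The module names `torch` and `eeai` are assumptions: `eeai` is guessed from the repository name and may differ from the importable package name declared in setup.py.

```python
import importlib.util
import sys


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be located (without importing it)."""
    return importlib.util.find_spec(module_name) is not None


def environment_report(modules=("torch", "eeai")):
    """Map each module name to whether it is discoverable.

    The default names are assumptions, not taken from setup.py.
    """
    return {name: is_installed(name) for name in modules}


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    for name, found in environment_report().items():
        print(f"{name}: {'found' if found else 'missing'}")
```

If a package is reported as missing, check that the intended environment is active (e.g., `conda activate eeai`) before re-running `pip install -e .`.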

The Affection dataset is provided under specific terms of use. To download it, please first fill out this form, accepting its terms.

For a detailed analysis of the content and structure of our annotations read this readme file.

You can use this notebook to load our annotations. To visualize them you will need access to the image datasets Affection is built upon. First read the above readme file, and then this one for more information.

If you find this work useful in your research, please consider citing:

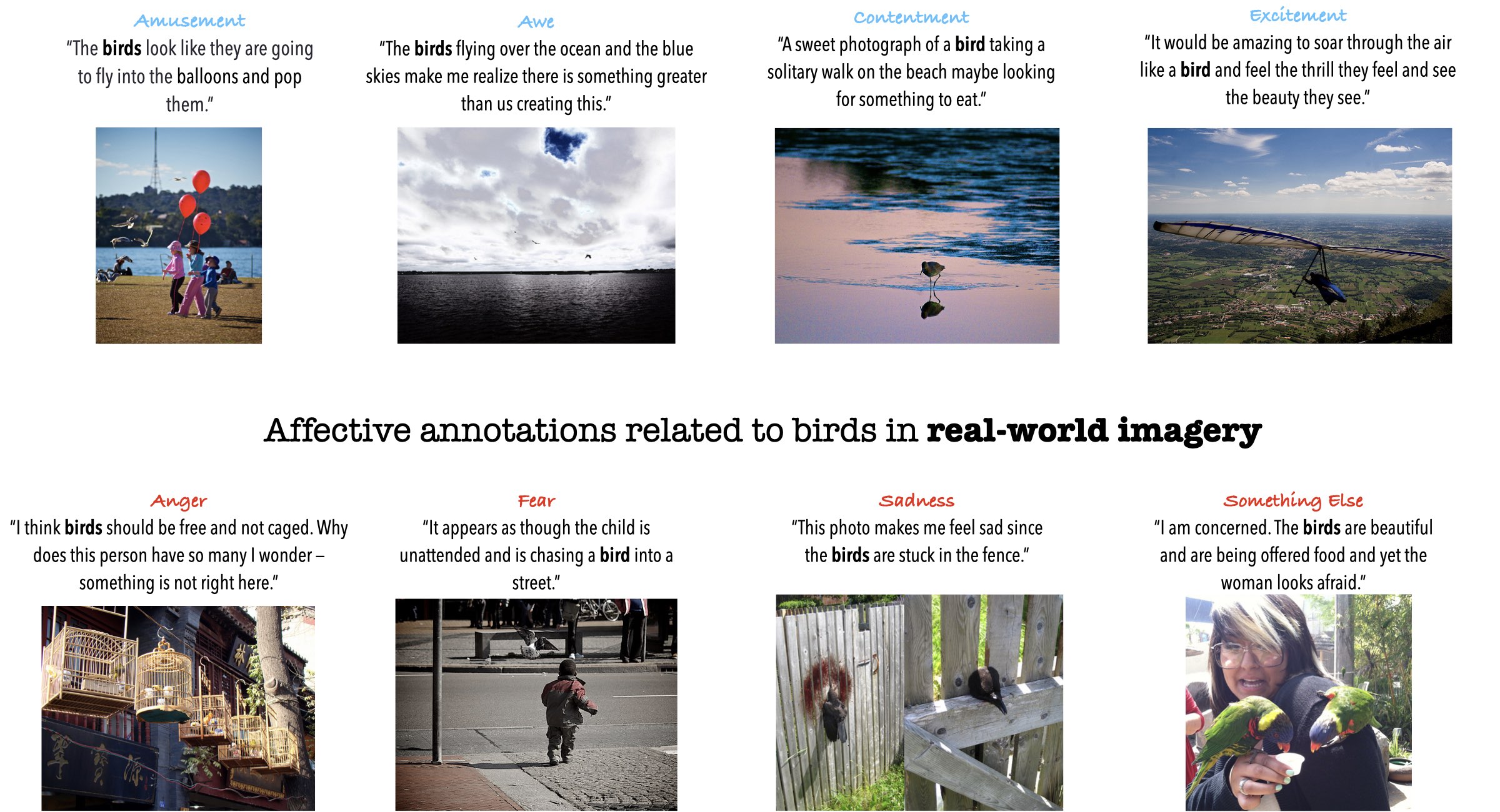

@article{achlioptas2023affection,
  title={{Affection}: Learning Affective Explanations for Real-World Visual Data},
  author={Achlioptas, Panos and Ovsjanikov, Maks and Guibas, Leonidas and Tulyakov, Sergey},
  journal={Conference on Computer Vision and Pattern Recognition (CVPR)},
  year={2023}
}