This project aims to collect and summarize AI-related papers for readers interested in AI research in academia. We plan to collect all the AI-related papers published in recent years at top-tier computer architecture conferences such as ISCA, MICRO, and HPCA. So far, we have collected the papers from ISCA 2015 to 2019, along with some basic analysis. These papers are listed below, and you can find our brief summaries in "/Summarys/#year_of_the_paper/". We welcome any suggestions about this project!

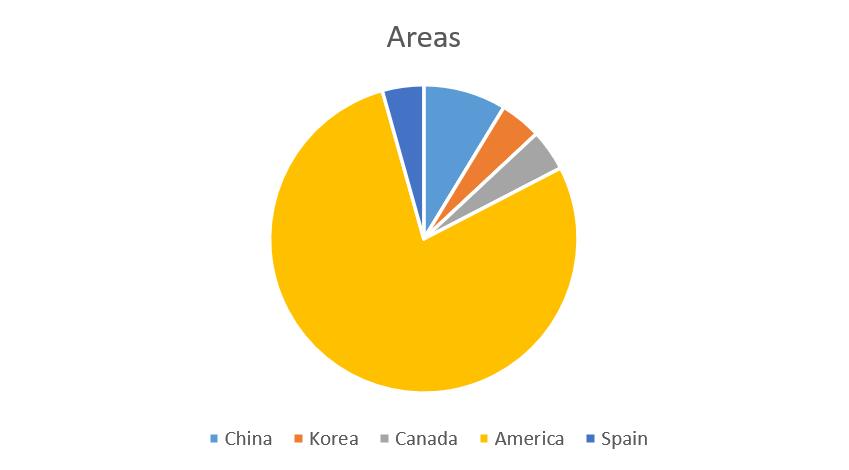

Some Statistics of the Papers

1. The yearly paper count (currently based only on ISCA 2015-2019)
2. The countries and regions that contribute (currently based only on ISCA 2015-2019)
3. Top researchers and their information (currently based only on ISCA 2015-2019)

| Rank | Author | Paper Count | Region | Lab or Company |
|---|---|---|---|---|
| 1 | Hadi Esmaeilzadeh | 4 | US | Alternative Computing Technologies (ACT) Laboratory, University of California, San Diego |
| 2 | Mingcong Song | 3 | US | Intelligent Design of Efficient Architectures Laboratory (IDEAL), University of Florida |
| 2 | Reetuparna Das | 3 | US | EECS Department, University of Michigan |
| 2 | Tao Li | 3 | US | Intelligent Design of Efficient Architectures Laboratory (IDEAL), University of Florida |
| 2 | Tianshi Chen | 3 | China | Cambricon Technologies Corporation Limited (寒武纪科技) |
| 2 | Yunji Chen | 3 | China | Institute of Computing Technology, Chinese Academy of Sciences |
| 2 | Zidong Du | 3 | China | Institute of Computing Technology, Chinese Academy of Sciences |

The Chronological Listing of Papers

ISCA 2016

| # | Title | Authors | Region | Organization |
|---|---|---|---|---|
| 1 | Cnvlutin: Ineffectual-Neuron-Free Deep Neural Network Computing | Jorge Albericio, Tayler Hetherington | Canada | University of Toronto, University of British Columbia |
| 2 | ISAAC: A Convolutional Neural Network Accelerator with In-Situ Analog Arithmetic in Crossbars | Ali Shafiee, Vivek Srikumar | US | University of Utah, Hewlett Packard Labs |
| 3 | PRIME: A Novel Processing-in-Memory Architecture for Neural Network Computation in ReRAM-Based Main Memory | Ping Chi, Yuan Xie | US | University of California |
| 4 | EIE: Efficient Inference Engine on Compressed Deep Neural Network | Song Han, William J. Dally | US | Stanford University, NVIDIA |
| 5 | RedEye: Analog ConvNet Image Sensor Architecture for Continuous Mobile Vision | Robert LiKamWa, Lin Zhong | US | Rice University |
| 6 | Minerva: Enabling Low-Power, Highly-Accurate Deep Neural Network Accelerators | Brandon Reagen, David Brooks | US | Harvard University |
| 7 | Eyeriss: A Spatial Architecture for Energy-Efficient Dataflow for Convolutional Neural Networks | Yu-Hsin Chen, Vivienne Sze | US | MIT, NVIDIA |
| 8 | Neurocube: A Programmable Digital Neuromorphic Architecture with High-Density 3D Memory | Duckhwan Kim, Saibal Mukhopadhyay | US | Georgia Institute of Technology |
| 9 | Cambricon: An Instruction Set Architecture for Neural Networks | Shaoli Liu, Tianshi Chen | China | CAS, Cambricon Ltd. |
| 10 | Energy Efficient Architecture for Graph Analytics Accelerators | Muhammet Mustafa Ozdal, Ozcan Ozturk | Turkey | Bilkent University |
| 11 | Accelerating Markov Random Field Inference Using Molecular Optical Gibbs Sampling Units | Siyang Wang, Alvin R. Lebeck | US | Duke University |

ISCA 2017

| # | Title | Authors | Region | Organization |
|---|---|---|---|---|
| 1 | In-Datacenter Performance Analysis of a Tensor Processing Unit | Norman P. Jouppi | US | Google |
| 2 | Maximizing CNN Accelerator Efficiency Through Resource Partitioning | Yongming Shen | US | Stony Brook University |
| 3 | SCALEDEEP: A Scalable Compute Architecture for Learning and Evaluating Deep Networks | Swagath Venkataramani, Anand Raghunathan | US | Purdue University, Parallel Computing Lab, Intel Corporation |
| 4 | Scalpel: Customizing DNN Pruning to the Underlying Hardware Parallelism | Jiecao Yu, Scott Mahlke | US | University of Michigan, ARM |
| 5 | SCNN: An Accelerator for Compressed-sparse Convolutional Neural Networks | Angshuman Parashar, William J. Dally | US | NVIDIA, MIT, UC-Berkeley, Stanford University |
| 6 | Stream-Dataflow Acceleration | Tony Nowatzki | US | University of California, University of Wisconsin |
| 7 | Understanding and Optimizing Asynchronous Low-Precision Stochastic Gradient Descent | Christopher De Sa, Kunle Olukotun | US | Stanford University |

ISCA 2018

| # | Title | Authors | Region | Organization |
|---|---|---|---|---|
| 1 | A Configurable Cloud-Scale DNN Processor for Real-Time AI | Jeremy Fowers, Doug Burger | US | Microsoft |
| 2 | PROMISE: An End-to-End Design of a Programmable Mixed-Signal Accelerator for Machine-Learning Algorithms | Prakalp Srivastava, Mingu Kang | US | University of Illinois at Urbana-Champaign, IBM |
| 3 | Computation Reuse in DNNs by Exploiting Input Similarity | Marc Riera, Antonio González | Spain | Universitat Politècnica de Catalunya |
| 4 | GenAx: A Genome Sequencing Accelerator | Daichi Fujiki, Satish Narayanasamy | US | University of Michigan |
| 5 | Flexon: A Flexible Digital Neuron for Efficient Spiking Neural Network Simulations | Dayeol Lee, Jangwoo Kim | South Korea, US | Seoul National University, University of California |
| 6 | Space-Time Algebra: A Model for Neocortical Computation | James E. Smith | US | University of Wisconsin-Madison |
| 7 | Architecting a Stochastic Computing Unit with Molecular Optical Devices | Xiangyu Zhang, Alvin R. Lebeck | US | Duke University, Parabon Labs |
| 8 | RANA: Towards Efficient Neural Acceleration with Refresh-Optimized Embedded DRAM | Fengbin Tu, Shaojun Wei | China | Tsinghua University |
| 9 | Neural Cache: Bit-Serial In-Cache Acceleration of Deep Neural Networks | Charles Eckert, Reetuparna Das | US | University of Michigan, Intel Corporation |
| 10 | RoboX: An End-to-End Solution to Accelerate Autonomous Control in Robotics | Jacob Sacks, Hadi Esmaeilzadeh | US | Georgia Institute of Technology, University of California, San Diego |
| 11 | EVA2: Exploiting Temporal Redundancy in Live Computer Vision | Mark Buckler, Adrian Sampson | US | Cornell University |
| 12 | Euphrates: Algorithm-SoC Co-Design for Low-Power Mobile Continuous Vision | Yuhao Zhu, Paul Whatmough | US | University of Rochester, ARM Research |
| 13 | GANAX: A Unified MIMD-SIMD Acceleration for Generative Adversarial Networks | Amir Yazdanbakhsh, Hadi Esmaeilzadeh | US | Georgia Institute of Technology, UC San Diego, Qualcomm Technologies, Inc. |
| 14 | SnaPEA: Predictive Early Activation for Reducing Computation in Deep Convolutional Neural Networks | Vahideh Akhlaghi, Hadi Esmaeilzadeh | US | Georgia Institute of Technology, UC San Diego, Qualcomm Technologies, Inc. |
| 15 | UCNN: Exploiting Computational Reuse in Deep Neural Networks via Weight Repetition | Kartik Hegde, Christopher W. Fletcher | US | University of Illinois at Urbana-Champaign, NVIDIA |
| 16 | Energy-Efficient Neural Network Accelerator Based on Outlier-Aware Low-Precision Computation | Eunhyeok Park, Sungjoo Yoo | South Korea | Seoul National University |
| 17 | Prediction Based Execution on Deep Neural Networks | Mingcong Song, Tao Li | US | University of Florida |
| 18 | Bit Fusion: Bit-Level Dynamically Composable Architecture for Accelerating Deep Neural Networks | Hardik Sharma, Hadi Esmaeilzadeh | US | Georgia Institute of Technology, University of California |
| 19 | Gist: Efficient Data Encoding for Deep Neural Network Training | Animesh Jain, Gennady Pekhimenko | US, Canada | Microsoft Research, University of Toronto, University of Michigan |
| 20 | The Dark Side of DNN Pruning | Reza Yazdani, Antonio González | Spain | Universitat Politècnica de Catalunya |

ISCA 2019

| # | Title | Authors | Region | Organization |
|---|---|---|---|---|
| 1 | 3D-based Video Recognition Acceleration by Leveraging Temporal Locality | Huixiang Chen, Tao Li | US | University of Florida |
| 2 | A Stochastic-Computing based Deep Learning Framework using Adiabatic Quantum-Flux-Parametron Superconducting Technology | Ruizhe Cai, Ao Ren, Nobuyuki Yoshikawa, Yanzhi Wang | US | Northeastern University |
| 3 | Accelerating Distributed Reinforcement Learning with In-Switch Computing | Youjie Li, Jian Huang | US | UIUC |
| 4 | Eager Pruning: Algorithm and Architecture Support for Fast Training of Deep Neural Networks | Jiaqi Zhang, Tao Li | US | University of Florida |
| 5 | Laconic Deep Learning Inference Acceleration | Sayeh Sharify, Andreas Moshovos | Canada | University of Toronto |
| 6 | MnnFast: A Fast and Scalable System Architecture for Memory-Augmented Neural Networks | Hanhwi Jang, Jangwoo Kim | South Korea | POSTECH, Seoul National University |
| 7 | Sparse ReRAM Engine: Joint Exploration of Activation and Weight Sparsity in Compressed Neural Networks | Tzu-Hsien Yang | Taiwan | National Taiwan University, Academia Sinica, Macronix International Co., Ltd. |
| 8 | TIE: Energy-efficient Tensor Train-based Inference Engine for Deep Neural Network | Chunhua Deng, Bo Yuan | US | Rutgers University |
| 9 | FloatPIM: In-Memory Acceleration of Deep Neural Network Training with High Precision | Mohsen Imani, Tajana Rosing | US | UC San Diego |
| 10 | Cambricon-F: Machine Learning Computers with Fractal von Neumann Architecture | Yongwei Zhao, Yunji Chen | China | ICT, Cambricon |
| 11 | Master of None Acceleration: A Comparison of Accelerator Architectures for Analytical Query Processing | Andrea Lottarini, Martha A. Kim | US | Google, Columbia University |