Jiale Zhang, Yulun Zhang, Jinjin Gu, Yongbing Zhang, Linghe Kong, and Xin Yuan, "Accurate Image Restoration with Attention Retractable Transformer", ICLR, 2023 (Spotlight)

[paper] [arXiv] [supplementary material] [visual results] [pretrained models]

This code is the PyTorch implementation of the ART model. ART achieves state-of-the-art performance in:

- bicubic image SR

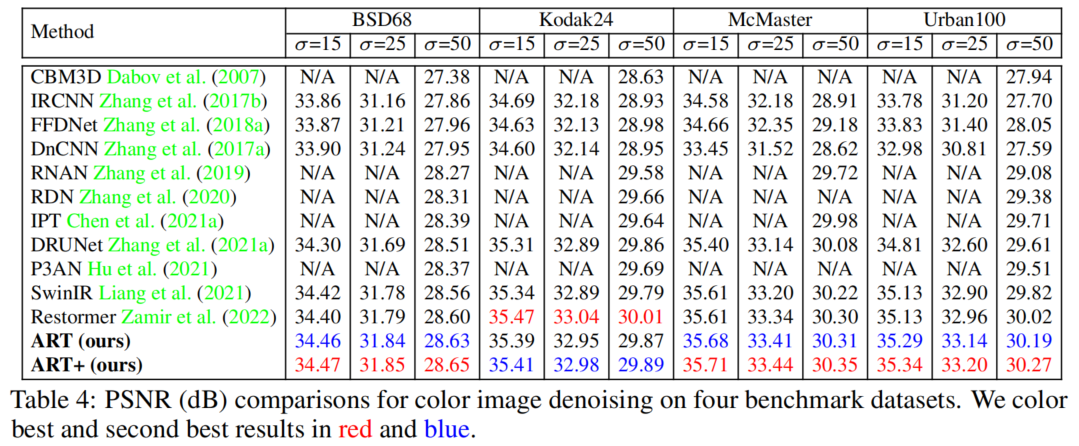

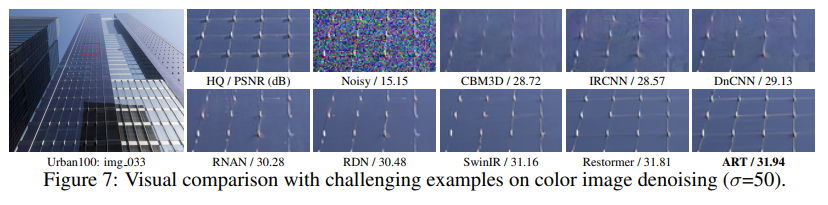

- Gaussian color image denoising

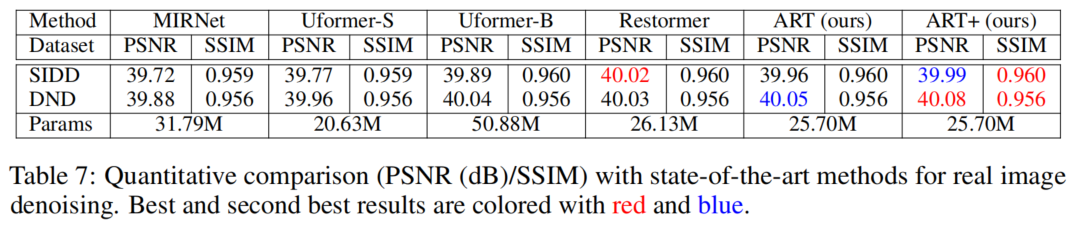

- real image denoising

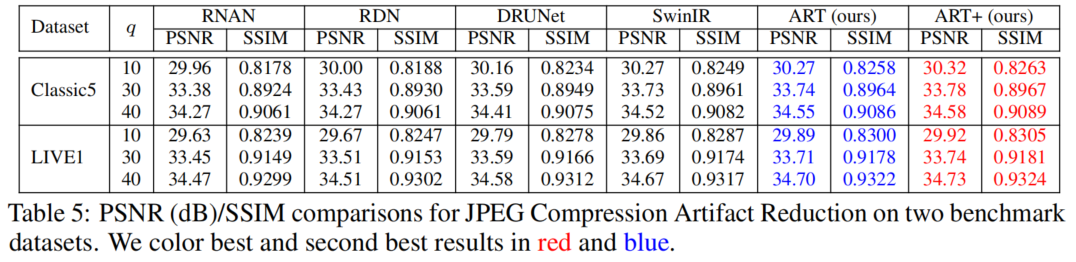

- JPEG compression artifact reduction

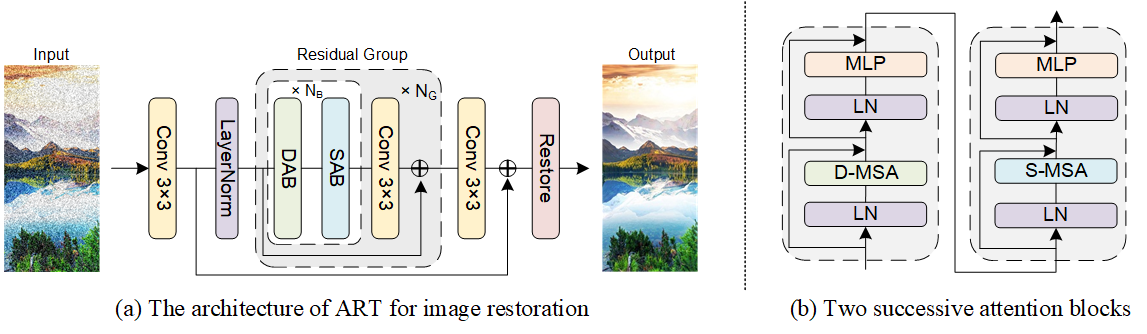

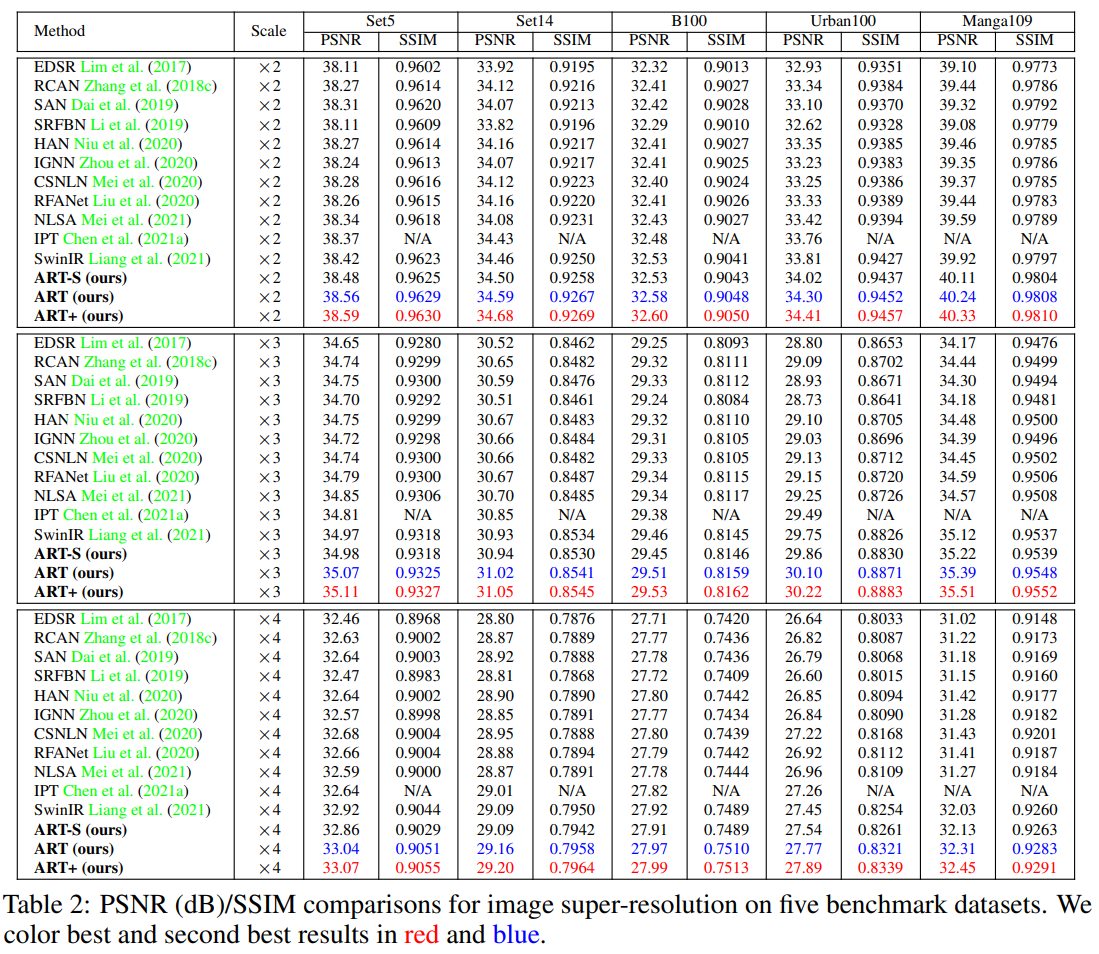

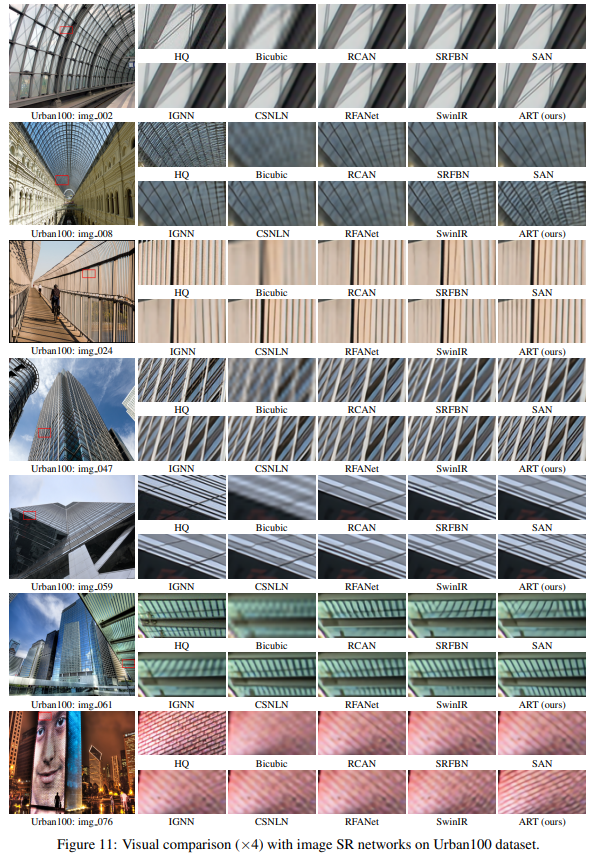

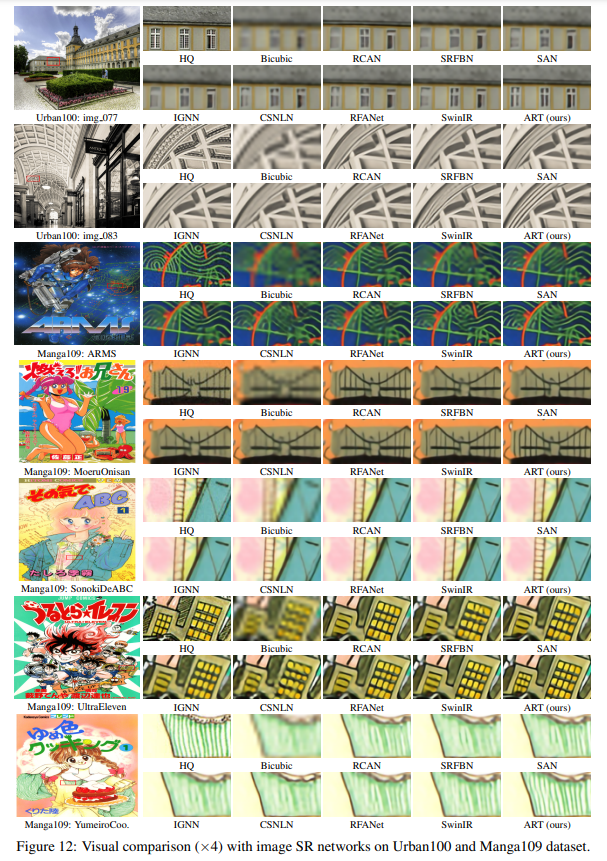

Abstract: Recently, Transformer-based image restoration networks have achieved promising improvements over convolutional neural networks due to parameter-independent global interactions. To lower computational cost, existing works generally limit self-attention computation within non-overlapping windows. However, each group of tokens are always from a dense area of the image. This is considered as a dense attention strategy since the interactions of tokens are restrained in dense regions. Obviously, this strategy could result in restricted receptive fields. To address this issue, we propose Attention Retractable Transformer (ART) for image restoration, which presents both dense and sparse attention modules in the network. The sparse attention module allows tokens from sparse areas to interact and thus provides a wider receptive field. Furthermore, the alternating application of dense and sparse attention modules greatly enhances representation ability of Transformer while providing retractable attention on the input image. We conduct extensive experiments on image super-resolution, denoising, and JPEG compression artifact reduction tasks. Experimental results validate that our proposed ART outperforms state-of-the-art methods on various benchmark datasets both quantitatively and visually. We also provide code and models at https://github.com/gladzhang/ART.
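To make the dense/sparse distinction in the abstract concrete, here is a minimal pure-Python sketch of the two token-grouping patterns. It assumes that sparse groups are formed by sampling tokens at a fixed interval, which is an illustrative choice and may not match the details of the released code. The function names `dense_groups`, `sparse_groups`, and `span` and the 8 × 8 grid are hypothetical.

```python
def dense_groups(height, width, window):
    """Group token coordinates into non-overlapping window x window blocks."""
    groups = {}
    for r in range(height):
        for c in range(width):
            groups.setdefault((r // window, c // window), []).append((r, c))
    return list(groups.values())


def sparse_groups(height, width, interval):
    """Group tokens that share the same offset modulo `interval` (assumed scheme)."""
    groups = {}
    for r in range(height):
        for c in range(width):
            groups.setdefault((r % interval, c % interval), []).append((r, c))
    return list(groups.values())


def span(group):
    """Return the (rows, cols) extent of the image area covered by one group."""
    rows = [r for r, _ in group]
    cols = [c for _, c in group]
    return (max(rows) - min(rows) + 1, max(cols) - min(cols) + 1)


if __name__ == "__main__":
    dense = dense_groups(8, 8, window=4)
    sparse = sparse_groups(8, 8, interval=2)
    # Both strategies attend over 16 tokens per group on this grid ...
    print(len(dense[0]), len(sparse[0]))
    # ... but a sparse group spreads over a much larger area of the image.
    print(span(dense[0]), span(sparse[0]))  # (4, 4) (7, 7)
```

With the same number of tokens per group, and hence a comparable attention cost per group, the sparse grouping covers almost the whole grid. This is the wider receptive field that the abstract refers to.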

Visual comparison on img_092 (x4) and img_098 (x4): HR, LR, SwinIR, and ART (ours).

- Python 3.8

- PyTorch >= 1.8.0

- NVIDIA GPU + CUDA

git clone https://github.com/gladzhang/ART.git

cd ART

pip install -r requirements.txt

python setup.py develop

- Testing on Image SR

- Testing on Color Image Denoising

- Testing on Real Image Denoising

- Testing on JPEG compression artifact reduction

- Training

- More tasks

| Task | Method | Params (M) | FLOPs (G) | Dataset | PSNR | SSIM | Model Zoo |
|---|---|---|---|---|---|---|---|
| SR | ART-S | 11.87 | 392 | Urban100 | 27.54 | 0.8261 | Google Drive |
| SR | ART | 16.55 | 782 | Urban100 | 27.77 | 0.8321 | Google Drive |
| Color-DN | ART | 16.15 | 465 | Urban100 | 30.19 | 0.8912 | Google Drive |
| Real-DN | ART | 25.70 | 73 | SIDD | 39.96 | 0.9600 | Google Drive |
| CAR | ART | 16.14 | 469 | LIVE1 | 29.89 | 0.8300 | Google Drive |

- We report the performance on Urban100 (x4, SR), Urban100 (level=50, Color-DN), LIVE1 (q=10, CAR), and SIDD (Real-DN). FLOPs are calculated with a 160 × 160 input.

- Download the models and put them into the folder experiments/pretrained_models. Go to the folder to find details of the directory structure.
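For readers unfamiliar with the PSNR column in the table above, the following is a minimal sketch of the standard definition, PSNR = 10 · log10(MAX² / MSE), on flat lists of pixel values. It is not the evaluation code used for the reported numbers: details such as color-space conversion or border cropping are deliberately left out, and the function name `psnr` and the default `max_value=255.0` are assumptions made for illustration.

```python
import math


def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio (in dB) between two equally sized pixel lists."""
    if len(reference) != len(restored) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixels.
    mse = sum((a - b) ** 2 for a, b in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    # An error of one gray level on every pixel gives an MSE of 1.
    print(round(psnr([10, 20, 30], [11, 21, 31]), 2))  # 48.13
```

Because the scale is logarithmic, a gain of a few tenths of a dB, such as the difference between ART-S and ART in the table, corresponds to a small but consistent reduction in mean squared error.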

The training and testing sets used in this work can be downloaded as follows:

| Task | Training Set | Testing Set | Visual Results |
|---|---|---|---|
| image SR | DIV2K (800 training images) + Flickr2K (2650 images) [complete dataset DF2K download] | Set5 + Set14 + BSD100 + Urban100 + Manga109 [download] | Google Drive |
| Gaussian color image denoising | DIV2K (800 training images) + Flickr2K (2650 images) + BSD500 (400 training & testing images) + WED (4744 images) [complete dataset DFWB_RGB download] | CBSD68 + Kodak24 + McMaster + Urban100 [download] | Google Drive |
| real image denoising | SIDD (320 training images) [complete dataset SIDD download] | SIDD + DND [download] | Google Drive |
| grayscale JPEG compression artifact reduction | DIV2K (800 training images) + Flickr2K (2650 images) + BSD500 (400 training & testing images) + WED (4744 images) [complete dataset DFWB_CAR download] | Classic5 + LIVE1 [download] | Google Drive |

Download training and testing datasets and put them into the folder datasets/. Go to the folder to find details of directory structure.
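Before launching a long job it can help to confirm that the folders are in place. The sketch below checks for the folder names mentioned in this README. Treating them all as relative to the repository root is an assumption, and the helper `missing_dirs` and the constant `EXPECTED_DIRS` are hypothetical names that are not part of the repository.

```python
from pathlib import Path

# Folder names as written in this README (assumed relative to the repo root).
EXPECTED_DIRS = [
    "datasets/DF2K",
    "datasets/SR",
    "datasets/DFWB_RGB",
    "datasets/ColorDN",
    "datasets/SIDD",
    "datasets/RealDN",
    "datasets/DFWB_CAR",
    "datasets/CAR",
    "experiments/pretrained_models",
]


def missing_dirs(repo_root, expected=EXPECTED_DIRS):
    """Return the expected folders that do not exist under repo_root."""
    root = Path(repo_root)
    return [rel for rel in expected if not (root / rel).is_dir()]


if __name__ == "__main__":
    for rel in missing_dirs("."):
        print(f"missing: {rel}")
```

Only the folders for the task you actually run are needed, so a non-empty result is not necessarily an error.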

- For image SR, please download the corresponding training datasets and put them in the folder datasets/DF2K. Download the testing datasets and put them in the folder datasets/SR.

- Follow the instructions below to begin training our ART model. After running a script, you can find the generated experiment logs in the folder experiments.

      # train ART for SR task, cropped input=64×64, 4 GPUs, batch size=8 per GPU
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_SR_x2.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_SR_x3.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_SR_x4.yml --launcher pytorch

      # train ART-S for SR task, cropped input=64×64, 4 GPUs, batch size=8 per GPU
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_S_SR_x2.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_S_SR_x3.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_S_SR_x4.yml --launcher pytorch
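The SR training commands differ only in the option file, so they can be generated rather than typed by hand. The sketch below builds the argument lists for the x2, x3, and x4 runs and computes the total batch size implied by the "4 GPUs, batch size=8 per GPU" note. The helper names `launch_command`, `sr_training_commands`, and `total_batch_size` are hypothetical. The code only constructs the commands and does not start any training.

```python
def launch_command(opt_file, num_gpus=4, master_port=2414):
    """Build the distributed launch command for one option file."""
    return [
        "python", "-m", "torch.distributed.launch",
        f"--nproc_per_node={num_gpus}",
        f"--master_port={master_port}",
        "basicsr/train.py", "-opt", opt_file,
        "--launcher", "pytorch",
    ]


def sr_training_commands(variant="ART", scales=(2, 3, 4)):
    """Commands for the SR option files; use variant="ART_S" for ART-S."""
    return [
        launch_command(f"options/train/train_{variant}_SR_x{scale}.yml")
        for scale in scales
    ]


def total_batch_size(num_gpus, per_gpu_batch):
    """Number of samples processed per optimization step across all GPUs."""
    return num_gpus * per_gpu_batch


if __name__ == "__main__":
    for cmd in sr_training_commands("ART_S"):
        print(" ".join(cmd))
    print(total_batch_size(4, 8))  # 32
```

Each list can be passed to `subprocess.run` from the repository root if you prefer to drive the runs from Python. If you train on a different number of GPUs, the total batch size changes accordingly unless the per-GPU batch size in the option file is adjusted.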

- For Gaussian color image denoising, please download the corresponding training datasets and put them in the folder datasets/DFWB_RGB. Download the testing datasets and put them in the folder datasets/ColorDN.

- Follow the instructions below to begin training our ART model. After running a script, you can find the generated experiment logs in the folder experiments.

      # train ART for ColorDN task, cropped input=128×128, 4 GPUs, batch size=2 per GPU
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_ColorDN_level15.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_ColorDN_level25.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_ColorDN_level50.yml --launcher pytorch
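The option files above are named after noise levels 15, 25, and 50. As a rough illustration of what such a level usually means in Gaussian denoising benchmarks, the sketch below adds zero-mean white Gaussian noise whose standard deviation equals the level on a 0 to 255 intensity scale. Both this interpretation and the helper name `add_gaussian_noise` are assumptions, and the noise synthesis in the actual training pipeline may differ (for example in value range or clipping).

```python
import random


def add_gaussian_noise(pixels, sigma, seed=None):
    """Add zero-mean Gaussian noise with standard deviation `sigma` to each pixel.

    `pixels` is a flat list of intensities on a 0-255 scale (assumed convention).
    Values are not clipped, so results may fall outside [0, 255].
    """
    rng = random.Random(seed)  # local generator keeps the example reproducible
    return [p + rng.gauss(0.0, sigma) for p in pixels]


if __name__ == "__main__":
    clean = [128.0] * 8
    for level in (15, 25, 50):
        noisy = add_gaussian_noise(clean, sigma=level, seed=0)
        print(level, [round(v, 1) for v in noisy[:3]])
```

A separate model is trained for each level in the commands above, which is why the level appears in the option file name.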

- For real image denoising, please download the corresponding training datasets and put them in the folder datasets/SIDD. Note that we provide both training and validation files, which are already processed.

- Go to the folder realDenoising and follow the instructions below to train our ART model. After running the script, you can find the generated experiment logs in the folder realDenoising/experiments.

      # go to the folder
      cd realDenoising
      # set up the new environment (BasicSRv1.2.0), which is the same as Restormer's, for training
      python setup.py develop --no_cuda_ext
      # train ART for RealDN task, 8 GPUs
      python -m torch.distributed.launch --nproc_per_node=8 --master_port=2414 basicsr/train.py -opt options/train_ART_RealDN.yml --launcher pytorch

- Remember to go back to the original environment once you have finished all training or testing for the real image denoising task. This is a friendly hint to prevent confusion in the training environment.

      # Tip: go back to the original environment (BasicSRv1.3.5) after finishing all training or testing for real image denoising
      cd ..
      python setup.py develop
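Because it is easy to forget the switch back to the original environment, one option is to wrap the two `python setup.py develop` steps in a context manager that always restores the original setup. This is only a sketch of that idea and not part of the repository. The name `real_denoising_env` and its `run` parameter are hypothetical, and it assumes the setup commands are run from the repository root and from realDenoising, as in the commands above.

```python
import contextlib
import os
import subprocess
import sys


@contextlib.contextmanager
def real_denoising_env(repo_root, run=subprocess.check_call):
    """Install the realDenoising environment, then restore the original one on exit."""
    repo_root = os.path.abspath(repo_root)
    previous_cwd = os.getcwd()
    os.chdir(os.path.join(repo_root, "realDenoising"))
    try:
        # Mirrors: python setup.py develop --no_cuda_ext
        run([sys.executable, "setup.py", "develop", "--no_cuda_ext"])
        yield
    finally:
        # Mirrors: cd .. && python setup.py develop
        os.chdir(repo_root)
        try:
            run([sys.executable, "setup.py", "develop"])
        finally:
            os.chdir(previous_cwd)


if __name__ == "__main__":
    # Dry run: print the setup commands instead of executing them.
    def show(cmd):
        print(os.getcwd(), " ".join(cmd[1:]))

    if os.path.isdir("realDenoising"):
        with real_denoising_env(".", run=show):
            print("run real-denoising training or testing here")
```

The `finally` block runs even if training raises an exception, so the original environment is reinstalled in either case.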

- For JPEG compression artifact reduction, please download the corresponding training datasets and put them in the folder datasets/DFWB_CAR. Download the testing datasets and put them in the folder datasets/CAR.

- Follow the instructions below to begin training our ART model. After running a script, you can find the generated experiment logs in the folder experiments.

      # train ART for CAR task, cropped input=126×126, 4 GPUs, batch size=2 per GPU
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_CAR_q10.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_CAR_q30.yml --launcher pytorch
      python -m torch.distributed.launch --nproc_per_node=4 --master_port=2414 basicsr/train.py -opt options/train/train_ART_CAR_q40.yml --launcher pytorch

- For image SR, please download the corresponding testing datasets and put them in the folder datasets/SR. Download the corresponding models and put them in the folder experiments/pretrained_models.

- Follow the instructions below to begin testing our ART model.

      # test ART model for image SR. You can find corresponding results in Table 2 of the main paper.
      python basicsr/test.py -opt options/test/test_ART_SR_x2.yml
      python basicsr/test.py -opt options/test/test_ART_SR_x3.yml
      python basicsr/test.py -opt options/test/test_ART_SR_x4.yml

      # test ART-S model for image SR. You can find corresponding results in Table 2 of the main paper.
      python basicsr/test.py -opt options/test/test_ART_S_SR_x2.yml
      python basicsr/test.py -opt options/test/test_ART_S_SR_x3.yml
      python basicsr/test.py -opt options/test/test_ART_S_SR_x4.yml

- To upscale your own images, put them in the folder datasets/example. Download the corresponding models and put them in the folder experiments/pretrained_models.

- Choose the upscale factor and follow the instructions below to apply our ART model to the provided images. After running a script, you can find the output visual results in the automatically generated folder results.

      # apply ART model for image SR
      python basicsr/test.py -opt options/apply/test_ART_SR_x2_without_groundTruth.yml
      python basicsr/test.py -opt options/apply/test_ART_SR_x3_without_groundTruth.yml
      python basicsr/test.py -opt options/apply/test_ART_SR_x4_without_groundTruth.yml
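When upscaling your own images, a small pre-flight check can catch an empty input folder or an unsupported scale before the model is loaded. The sketch below lists candidate images in datasets/example and maps a scale to the matching option file named above. The helper names `apply_option_file` and `list_input_images`, as well as the accepted file extensions, are assumptions for illustration only.

```python
from pathlib import Path

SUPPORTED_SCALES = (2, 3, 4)  # option files exist for x2, x3, and x4 in this README


def apply_option_file(scale):
    """Return the option file for applying ART SR at the given scale."""
    if scale not in SUPPORTED_SCALES:
        raise ValueError(f"unsupported scale {scale}; choose from {SUPPORTED_SCALES}")
    return f"options/apply/test_ART_SR_x{scale}_without_groundTruth.yml"


def list_input_images(folder="datasets/example",
                      extensions=(".png", ".jpg", ".jpeg", ".bmp")):
    """Return sorted image paths in `folder` (the extension list is an assumption)."""
    root = Path(folder)
    if not root.is_dir():
        return []
    return sorted(p for p in root.iterdir()
                  if p.is_file() and p.suffix.lower() in extensions)


if __name__ == "__main__":
    images = list_input_images()
    print(f"{len(images)} image(s) found")
    print("python basicsr/test.py -opt", apply_option_file(4))
```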

- For color image denoising, please download the corresponding testing datasets and put them in the folder datasets/ColorDN. Download the corresponding models and put them in the folder experiments/pretrained_models.

- Follow the instructions below to begin testing our ART model.

      # test ART model for Color Image Denoising. You can find corresponding results in Table 4 of the main paper.
      python basicsr/test.py -opt options/test/test_ART_ColorDN_level15.yml
      python basicsr/test.py -opt options/test/test_ART_ColorDN_level25.yml
      python basicsr/test.py -opt options/test/test_ART_ColorDN_level50.yml

- For real image denoising, download the SIDD test and DND test sets and place them in datasets/RealDN. Download the corresponding models and put them in the folder experiments/pretrained_models.

- Go to the folder realDenoising and follow the instructions below to test our ART model. The output is in realDenoising/results/Real_Denoising.

      # go to the folder
      cd realDenoising
      # set up the new environment (BasicSRv1.2.0), which is the same as Restormer's, for testing
      python setup.py develop --no_cuda_ext
      # test our ART (training total iterations = 300K) on SIDD
      python test_real_denoising_sidd.py
      # test our ART (training total iterations = 300K) on DND
      python test_real_denoising_dnd.py

- Run the script below in MATLAB to reproduce PSNR/SSIM on SIDD. You can find corresponding results in Table 7 of the main paper.

      run evaluate_sidd.m

- For PSNR/SSIM scores on DND, upload the generated DND mat files to the online server to get the results.

- Remember to go back to the original environment once you have finished all training or testing for the real image denoising task. This is a friendly hint to prevent confusion in the training environment.

      # Tip: go back to the original environment (BasicSRv1.3.5) after finishing all training or testing for real image denoising
      cd ..
      python setup.py develop

- For JPEG compression artifact reduction, please download the corresponding testing datasets and put them in the folder datasets/CAR. Download the corresponding models and put them in the folder experiments/pretrained_models.

- Follow the instructions below to begin testing our ART model.

      # test ART model for JPEG CAR. You can find corresponding results in Table 5 of the main paper.
      python basicsr/test.py -opt options/test/test_ART_CAR_q10.yml
      python basicsr/test.py -opt options/test/test_ART_CAR_q30.yml
      python basicsr/test.py -opt options/test/test_ART_CAR_q40.yml

We provide the results on image SR, color image denoising, real image denoising, and JPEG compression artifact reduction here. More results can be found in the main paper. The visual results of ART can be downloaded here.

If you find the code helpful in your research or work, please cite the following paper.

@inproceedings{zhang2023accurate,

title={Accurate Image Restoration with Attention Retractable Transformer},

author={Zhang, Jiale and Zhang, Yulun and Gu, Jinjin and Zhang, Yongbing and Kong, Linghe and Yuan, Xin},

booktitle={ICLR},

year={2023}

}

This work is released under the Apache 2.0 license. The code is based on BasicSR and Restormer. Please also follow their licenses. Thanks to the authors for their excellent work.