This repository contains the code and models for the following paper:

Peeking into the Future: Predicting Future Person Activities and Locations in Videos

Junwei Liang,

Lu Jiang,

Juan Carlos Niebles,

Alexander Hauptmann,

Li Fei-Fei

CVPR 2019

You can find more information at our Project Page.

Please note that this is not an officially supported Google product.

- [11/2022] CMU server is down. You can replace all `https://next.cs.cmu.edu` with `https://precognition.team/next/` to download necessary resources.

- [02/2020] New paper on multi-future trajectory prediction is accepted by CVPR 2020.
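The URL replacement from the 11/2022 note can be sketched in Python as below. This is only an illustration: the helper names `rewrite_url` and `rewrite_text` are hypothetical and not part of this repository, and it assumes the mirror keeps the same relative paths as the original server.

```python
# Prefixes taken from the 11/2022 note above.
OLD_PREFIX = "https://next.cs.cmu.edu"
NEW_PREFIX = "https://precognition.team/next/"


def rewrite_url(url):
    """Point a CMU-hosted resource URL at the mirror; leave other URLs alone."""
    if not url.startswith(OLD_PREFIX):
        return url
    # Join with exactly one slash so the mirror path does not contain "//".
    rest = url[len(OLD_PREFIX):].lstrip("/")
    return NEW_PREFIX + rest


def rewrite_text(text):
    """Rewrite every occurrence of the old prefix inside a script body."""
    # Handle "prefix/" first so the trailing slash is not duplicated.
    text = text.replace(OLD_PREFIX + "/", NEW_PREFIX)
    return text.replace(OLD_PREFIX, NEW_PREFIX)
```

For example, `rewrite_text(open(path).read())` could be applied to a download script before running it, where `path` is whichever script you want to patch.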

If you find this code useful in your research then please cite

@InProceedings{Liang_2019_CVPR,

author = {Liang, Junwei and Jiang, Lu and Carlos Niebles, Juan and Hauptmann, Alexander G. and Fei-Fei, Li},

title = {Peeking Into the Future: Predicting Future Person Activities and Locations in Videos},

booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2019}

}

In applications like self-driving cars and smart robot assistants, it is important for a system to be able to predict a person's future locations and activities. In this paper we present an end-to-end neural network model that deciphers human behaviors to predict their future paths/trajectories and their future activities jointly from videos.

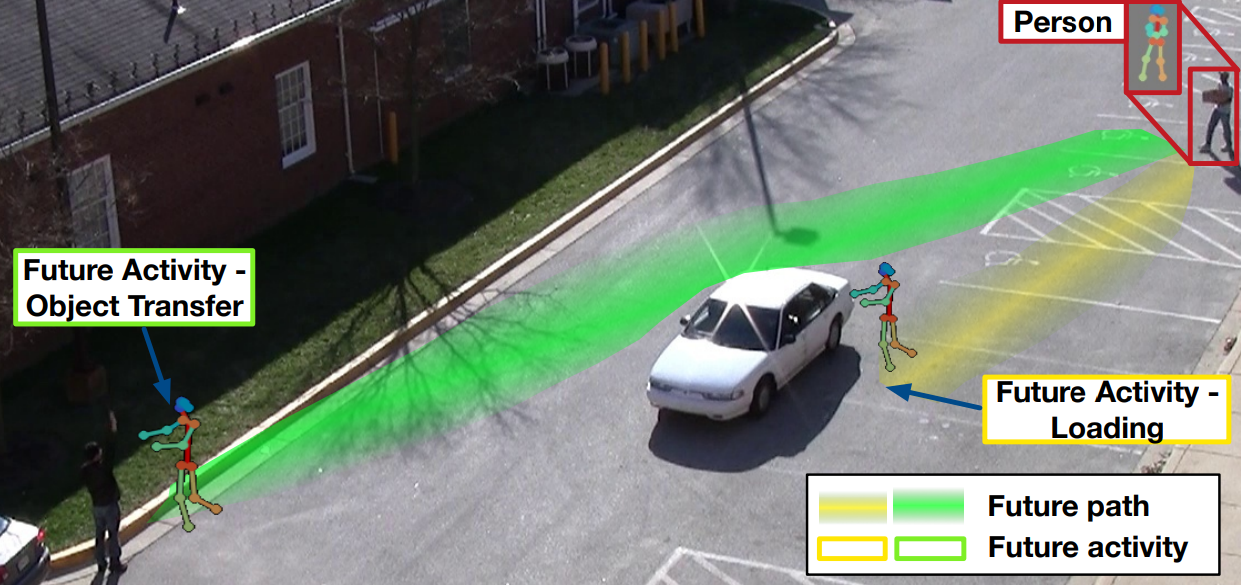

Below we show an example of the task. The green and yellow lines show two possible future trajectories, and two possible activities are shown in the green and yellow boxes. Depending on the future activity, the target person (top right) may take different paths, e.g. the yellow path for “loading” and the green path for “object transfer”.

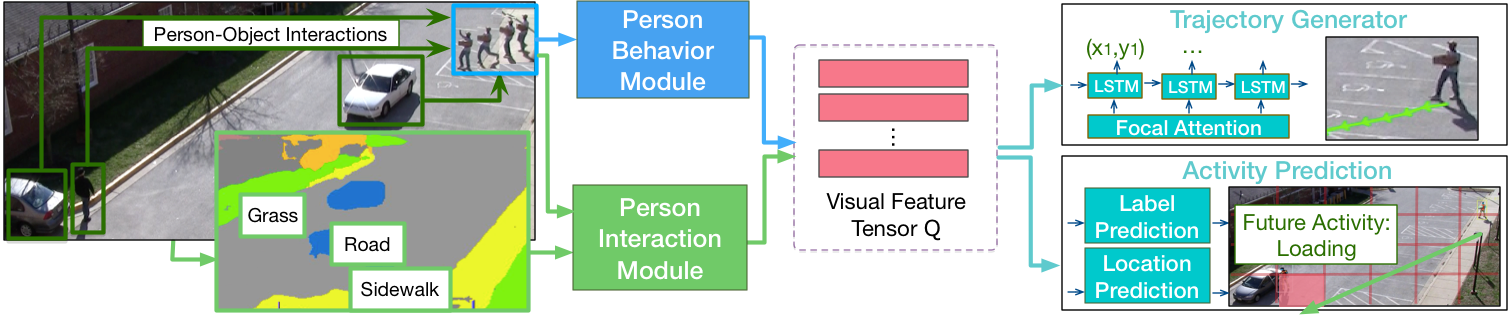

Given a sequence of video frames containing the person for prediction, our model utilizes a person behavior module and a person interaction module to encode rich visual semantics into a feature tensor. We propose a novel person interaction module that takes into account both person-scene and person-object relations for joint activity and location prediction.

- Python 2.7; TensorFlow == 1.10.0 (Should also work on 1.14+)

- [10/2020] The code is now compatible with Python 3.6 and TensorFlow 1.15
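To see how an installed TensorFlow version relates to the versions listed above, a small check like the following may help. It is a sketch, not part of this repository: `tf_status` and its three labels are hypothetical, and treating every other version as "not listed" is an assumption rather than a statement about what actually works.

```python
def major_minor(version):
    """Return (major, minor) as ints from a version string such as '1.15.0'."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def tf_status(version):
    """Classify a TensorFlow version against the versions named in this README."""
    major, minor = major_minor(version)
    if major == 1 and minor in (10, 15):
        return "listed"            # 1.10.0 and 1.15 are named explicitly
    if major == 1 and minor >= 14:
        return "expected to work"  # "Should also work on 1.14+"
    return "not listed"            # assumption: nothing is claimed for these
```

With TensorFlow installed, `tf_status(tf.__version__)` after `import tensorflow as tf` gives the label for the current environment.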

You can download pretrained models by running the script `bash scripts/download_single_models.sh`.

This will download the following models, and will require about 5.8 GB of disk space:

- `next-models/actev_single_model/`: This folder includes a single model for the ActEv experiment.

- `next-models/ethucy_single_model/`: This folder includes five single models for the ETH/UCY leave-one-scene-out experiment.
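Before running the download script, you may want to confirm that the target disk has room for the roughly 5.8 GB mentioned above. A minimal sketch, assuming Python 3 (`shutil.disk_usage` is not available in Python 2.7) and treating 1 GB as 10^9 bytes; the helper name `has_enough_space` is hypothetical:

```python
import shutil

REQUIRED_GB = 5.8  # disk space figure quoted in this README


def has_enough_space(path=".", required_gb=REQUIRED_GB):
    """Return True if the filesystem holding `path` has enough free space."""
    free_bytes = shutil.disk_usage(path).free
    # Assumption: 1 GB is counted as 10**9 bytes.
    return free_bytes >= required_gb * 10**9
```

Calling `has_enough_space(".")` from the repository root checks the disk the models would be downloaded to, assuming the script writes to the current directory.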

Instructions for testing pretrained models can be found here.

Instructions for training new models can be found here.

Instructions for extracting features can be found here.

The preprocessing code and evaluation code for trajectories were adapted from Social-GAN.