
BESS-KGE is a PyTorch library for knowledge graph embedding (KGE) models on IPUs, implementing the BESS distribution framework with embedding tables stored in IPU SRAM.

Shallow KGE models are typically memory-bound, as little compute needs to be performed to score (h,r,t) triples once the embeddings of entities and relation types used in the batch have been retrieved. BESS (Balanced Entity Sampling and Sharing) is a KGE distribution framework designed to maximize bandwidth for gathering embeddings, by:

- storing them in fast-access IPU on-chip memory;

- minimizing communication time for sharing embeddings between workers, leveraging balanced collective operators over high-bandwidth IPU-links.

This allows BESS-KGE to achieve high throughput for both training and inference.
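The NumPy sketch below makes the memory-bound argument concrete. It scores a batch of (h, r, t) triples with TransE, a common shallow KGE scoring function chosen here only for illustration. It does not use the BESS-KGE API. The table sizes and the `transe_scores` and `arithmetic_intensity` helpers are hypothetical. The gather step reads 3 × `DIM` values per triple, while scoring needs only a few operations per embedding dimension.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical table sizes, for illustration only (not BESS-KGE defaults).
NUM_ENTITIES, NUM_RELATIONS, DIM = 1_000, 10, 128
entity_table = rng.standard_normal((NUM_ENTITIES, DIM)).astype(np.float16)
relation_table = rng.standard_normal((NUM_RELATIONS, DIM)).astype(np.float16)


def transe_scores(triples: np.ndarray) -> np.ndarray:
    """Score an (N, 3) array of (head, relation, tail) ids with TransE."""
    # Gather: one embedding row per head, relation and tail.
    h = entity_table[triples[:, 0]].astype(np.float32)
    r = relation_table[triples[:, 1]].astype(np.float32)
    t = entity_table[triples[:, 2]].astype(np.float32)
    # Compute: score = -||h + r - t||_2, a few ops per embedding dimension.
    return -np.linalg.norm(h + r - t, axis=-1)


def arithmetic_intensity(dim: int, bytes_per_value: int = 2) -> float:
    """Rough FLOPs per byte gathered for one TransE triple score."""
    # ~4 ops per dimension: add, subtract, square, accumulate (sqrt ignored).
    flops = 4 * dim
    bytes_gathered = 3 * dim * bytes_per_value
    return flops / bytes_gathered


batch = np.stack(
    [
        rng.integers(0, NUM_ENTITIES, size=512),
        rng.integers(0, NUM_RELATIONS, size=512),
        rng.integers(0, NUM_ENTITIES, size=512),
    ],
    axis=1,
)
scores = transe_scores(batch)
print(scores.shape, arithmetic_intensity(DIM))
```

With FP16 embeddings this toy model performs less than one operation per byte gathered, whatever the value of `DIM`. This is why gather bandwidth, rather than compute, tends to bound the throughput of such models.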

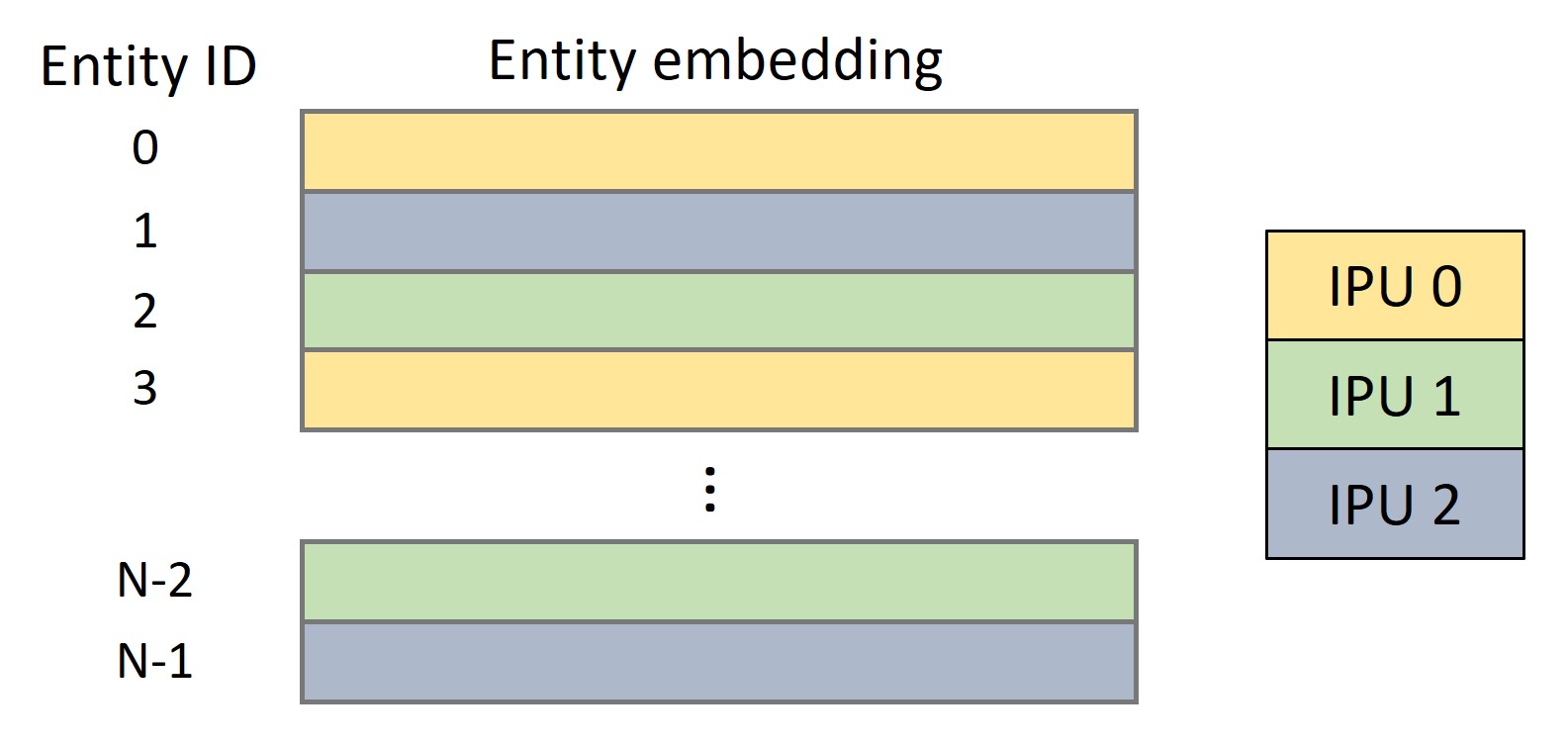

When distributing the workload over

The entity sharding induces a partitioning of the triples in the dataset, according to the shard-pair of the head entity and the tail entity. At execution time (for both training and inference), batches are constructed by sampling triples uniformly from each of the

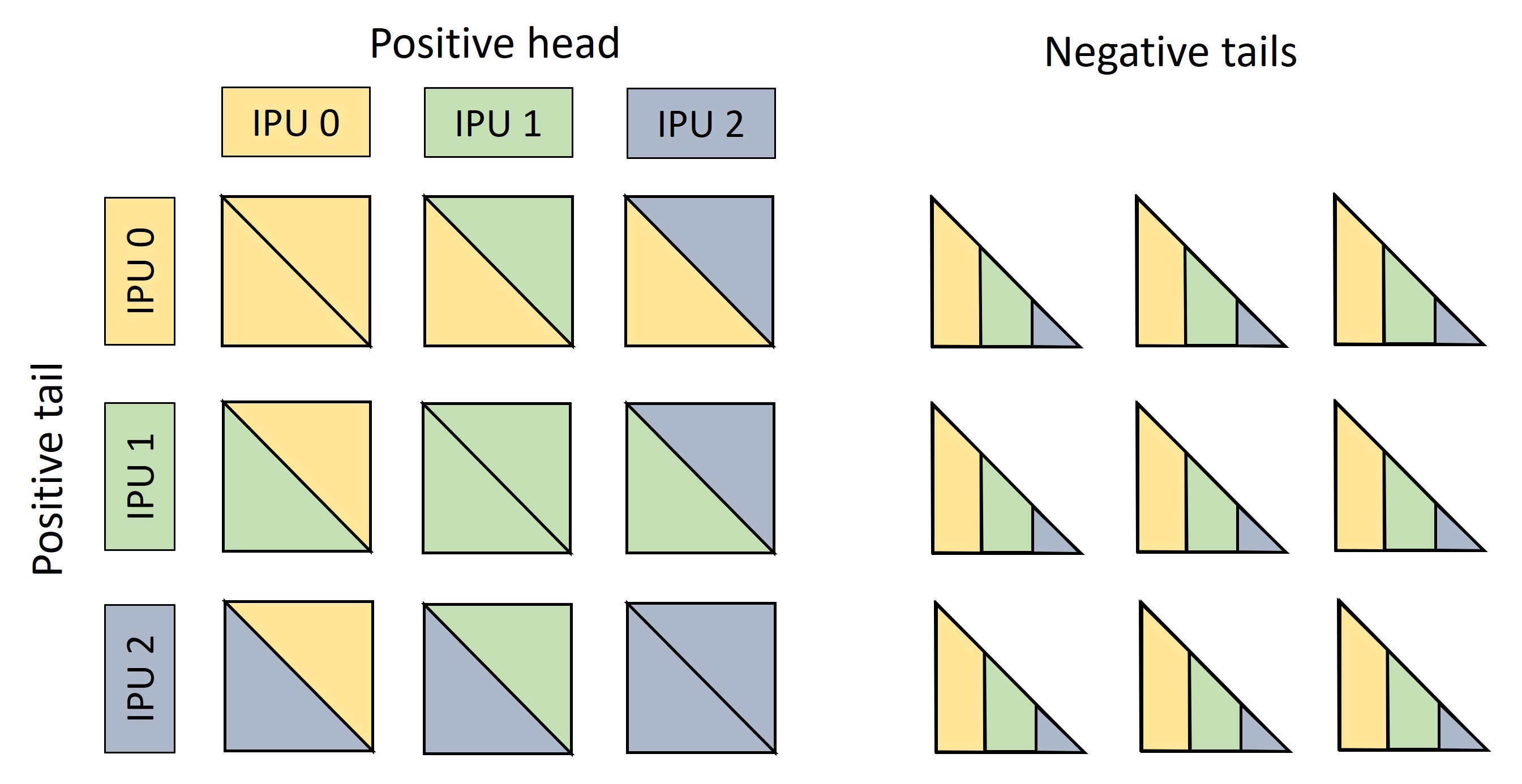

Figure 2. Left: A batch is made of

This batching scheme allows us to balance workload and communication across workers. First, each worker needs to gather the same number of embeddings from its on-chip memory, both for positive and negative samples. These include the embeddings needed by the worker itself, and the embeddings needed by its peers.
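The pure-Python simulation below illustrates the sharding and batching scheme for positive triples. For illustration only, it assumes that entities are split uniformly at random into `N_SHARDS` equal-size shards and that shard k lives on worker k. It also assumes that each batch draws the same number of triples from every shard-pair block. The names `shard_of`, `partition_triples` and `sample_balanced_batch` are hypothetical and not part of the BESS-KGE API. Triples in block (i, j) have their head stored on worker i and their tail on worker j. Counting embedding lookups per worker therefore shows that each worker serves the same number.

```python
import random
from collections import Counter, defaultdict

N_SHARDS = 3           # number of workers; shard k lives on worker k (assumption)
NUM_ENTITIES = 30
TRIPLES_PER_BLOCK = 2  # triples sampled from each of the n*n shard-pair blocks

rng = random.Random(0)

# Randomly split entities into N_SHARDS shards of equal size (assumption).
perm = list(range(NUM_ENTITIES))
rng.shuffle(perm)
shard_of = {e: i % N_SHARDS for i, e in enumerate(perm)}

# A toy dataset of (head, relation, tail) triples.
triples = [
    (rng.randrange(NUM_ENTITIES), rng.randrange(5), rng.randrange(NUM_ENTITIES))
    for _ in range(500)
]


def partition_triples(triples):
    """Group triples by the (head shard, tail shard) pair."""
    blocks = defaultdict(list)
    for h, r, t in triples:
        blocks[(shard_of[h], shard_of[t])].append((h, r, t))
    return blocks


def sample_balanced_batch(blocks, k):
    """Sample k triples uniformly from every shard-pair block."""
    return {
        (i, j): rng.sample(blocks[(i, j)], k)
        for i in range(N_SHARDS)
        for j in range(N_SHARDS)
    }


blocks = partition_triples(triples)
batch = sample_balanced_batch(blocks, TRIPLES_PER_BLOCK)

# Count positive-triple lookups served from each worker's SRAM.
lookups = Counter()
for (i, j), block in batch.items():
    lookups[i] += len(block)  # head embeddings of block (i, j) live on worker i
    lookups[j] += len(block)  # tail embeddings of block (i, j) live on worker j
print(dict(lookups))
```

Every worker serves `2 * N_SHARDS * TRIPLES_PER_BLOCK` lookups, however the dataset's triples are spread across shard pairs, as long as each block holds at least `TRIPLES_PER_BLOCK` triples.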

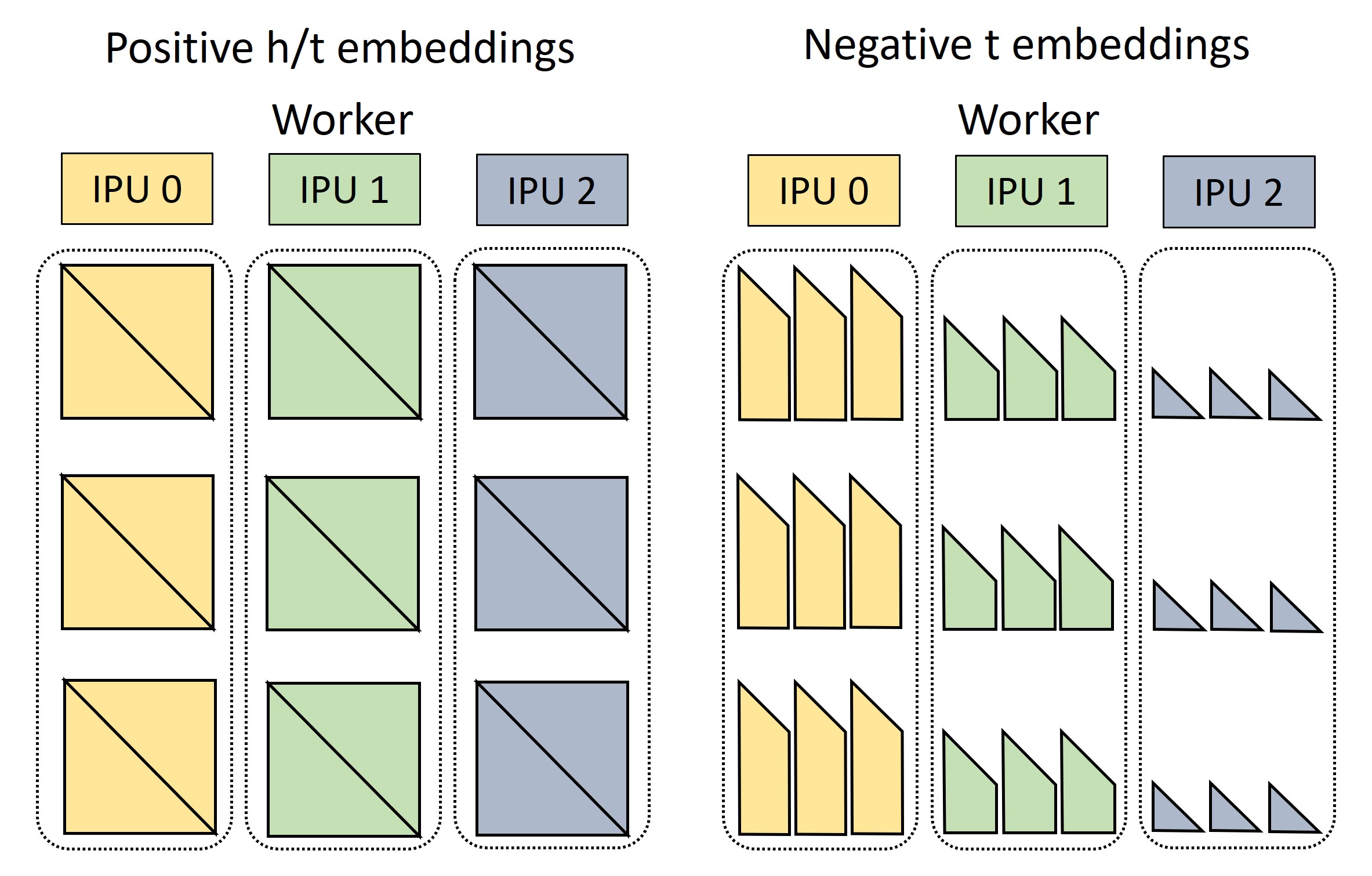

Figure 3. The required embeddings are gathered from the IPUs' SRAM. Each worker needs to retrieve the head embeddings for

The batch in Figure 2 can then be reconstructed by sharing the embeddings of positive tails and negative entities between workers through a balanced AllToAll collective operator. Head embeddings remain in place, as each triple block is then scored on the worker where the head embedding is stored.
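The exchange can be pictured as a transpose of an n × n grid of chunks. The minimal simulation below uses pure Python with no IPU collectives. `all_to_all` is a hypothetical stand-in for the real collective, and string labels stand in for embedding rows. Worker j starts with the positive-tail embeddings it gathered for blocks (i, j), i = 0, ..., n - 1, stored as chunk i of its send buffer. After the exchange, worker i holds the tails for all of its blocks (i, j), next to the head embeddings that stayed in place.

```python
N = 3  # number of workers


def all_to_all(send):
    """Simulate AllToAll: worker w sends send[w][d] to worker d.

    Afterwards recv[d][w] == send[w][d], i.e. the chunk grid is transposed.
    """
    n = len(send)
    return [[send[w][d] for w in range(n)] for d in range(n)]


# Before the exchange, worker j holds the tails of blocks (i, j),
# with chunk i destined for worker i.
send = [[f"tails of block ({i},{j})" for i in range(N)] for j in range(N)]

recv = all_to_all(send)
for i, chunks in enumerate(recv):
    print(f"worker {i}:", chunks)
```

Each worker sends and receives exactly one chunk per peer, so the communication volume is the same on every worker.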

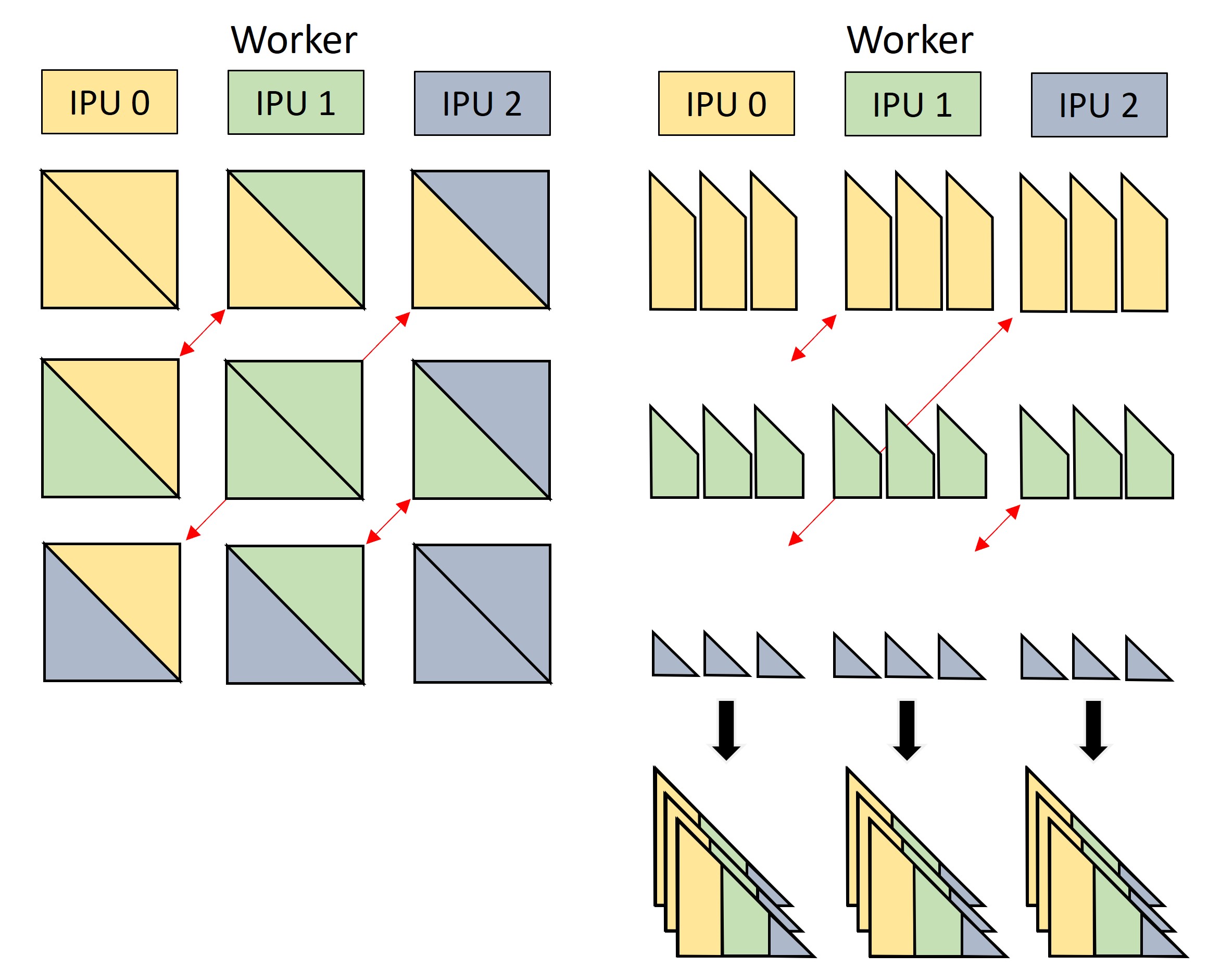

Figure 4. Embeddings of positive and negative tails are exchanged between workers with an AllToAll collective (red arrows), which effectively transposes rows and columns of the

Additional variations of the distribution scheme are detailed in the BESS-KGE documentation.

All APIs are documented in the BESS-KGE API documentation.

BESS-KGE provides built-in dataloaders for the following datasets. Note that you use these datasets at your own risk and Graphcore offers no warranties of any kind. It is the user's responsibility to comply with all license requirements for datasets downloaded with dataloaders in this repository.

| Dataset | Builder method | Entities | Entity types | Relation types | Triples | License |
|---|---|---|---|---|---|---|
| ogbl-biokg | `KGDataset.build_ogbl_biokg` | 93,773 | 5 | 51 | 5,088,434 | CC-0 |
| ogbl-wikikg2 | `KGDataset.build_ogbl_wikikg2` | 2,500,604 | 1 | 535 | 16,968,094 | CC-0 |
| YAGO3-10 | `KGDataset.build_yago310` | 123,182 | 1 | 37 | 1,089,040 | CC BY 3.0 |
| OpenBioLink2020 | `KGDataset.build_openbiolink` | 184,635 | 7 | 28 | 4,563,405 | link |

- BESS-KGE supports distribution over up to 16 IPUs.

- Storing embeddings in SRAM limits the size of the embedding tables, and therefore the number of entities in the knowledge graph. The table below gives some approximate estimates of these limits, assuming FP16 for weights and FP32 for gradient accumulation and second-order momentum. Note that the cap also depends on the batch size and the number of negative samples used.

| Embedding size | Embedding dtype | Optimizer | Gradient accumulation | Max number of entities (# embedding parameters) on IPU-POD4 | Max number of entities (# embedding parameters) on IPU-POD16 |
|---|---|---|---|---|---|
| 100 | float16 | SGDM | No | 3.2M (3.2e8) | 13M (1.3e9) |
| 128 | float16 | Adam | No | 2.4M (3.0e8) | 9.9M (1.3e9) |
| 256 | float16 | SGDM | Yes | 900K (2.3e8) | 3.5M (9.0e8) |
| 256 | float16 | Adam | No | 1.2M (3.0e8) | 4.8M (1.2e9) |
| 512 | float16 | Adam | Yes | 375K (1.9e8) | 1.5M (7.7e8) |
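The bracketed parameter counts in the table are the entity count multiplied by the embedding size. The hypothetical helpers below, which are not part of BESS-KGE, reproduce that arithmetic. They also divide by the number of IPUs in each system (4 for IPU-POD4, 16 for IPU-POD16), assuming the entity table is split evenly across all IPUs. This is an illustrative estimate only. It ignores optimizer state, gradient accumulation buffers, batch size and negative samples, which all affect the real cap.

```python
# Number of IPUs per system.
IPUS = {"IPU-POD4": 4, "IPU-POD16": 16}


def embedding_params(num_entities: int, embedding_size: int) -> int:
    """Total entity-embedding parameters."""
    return num_entities * embedding_size


def params_per_ipu(num_entities: int, embedding_size: int, system: str) -> float:
    """Share of the entity table per IPU, assuming an even split (assumption)."""
    return embedding_params(num_entities, embedding_size) / IPUS[system]


# (embedding size, max entities on IPU-POD4, max entities on IPU-POD16), from the table.
ROWS = [
    (100, 3_200_000, 13_000_000),
    (128, 2_400_000, 9_900_000),
    (256, 900_000, 3_500_000),
    (256, 1_200_000, 4_800_000),
    (512, 375_000, 1_500_000),
]

for size, pod4, pod16 in ROWS:
    print(
        f"size={size}: "
        f"POD4 {embedding_params(pod4, size):.1e} params "
        f"({params_per_ipu(pod4, size, 'IPU-POD4'):.1e}/IPU), "
        f"POD16 {embedding_params(pod16, size):.1e} params "
        f"({params_per_ipu(pod16, size, 'IPU-POD16'):.1e}/IPU)"
    )
```

For each configuration, the per-IPU share stays roughly the same on IPU-POD4 and IPU-POD16. This matches the roughly fourfold entity cap between the two systems.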

If compilation fails with an error about the ONNX protobuf exceeding the maximum size, we recommend saving the weights to a file using the `poptorch.Options` API: `options._Popart.set("saveInitializersToFile", "my_file.onnx")`.

Tested on Poplar SDK 3.3.0+1403, Ubuntu 20.04, Python 3.8

1. Install the Poplar SDK following the instructions in the Getting Started guide for your IPU system.

2. Enable the Poplar SDK, create and activate a Python virtualenv and install the PopTorch wheel:

   ```shell
   source <path to Poplar installation>/enable.sh
   source <path to PopART installation>/enable.sh
   python3.8 -m venv .venv
   source .venv/bin/activate
   pip install wheel
   pip install $POPLAR_SDK_ENABLED/../poptorch-*.whl
   ```

   More details are given in the PyTorch quick start guide.

3. Pip install BESS-KGE:

   ```shell
   pip install git+https://github.com/graphcore-research/bess-kge.git
   ```

4. Import and use:

   ```python
   import besskge
   ```

For a walkthrough of the `besskge` library's functionality, see our Jupyter notebooks. We recommend the following sequence:

- KGE training and inference on the OGBL-BioKG dataset

- Link prediction on the YAGO3-10 dataset

- FP16 weights and compute on the OGBL-WikiKG2 dataset

For pointers on how to run BESS-KGE on a custom knowledge graph dataset, see the notebook Using BESS-KGE with your own data.

You can contribute to the BESS-KGE project: PRs are welcome! For details, see How to contribute to the BESS-KGE project.

BESS: Balanced Entity Sampling and Sharing for Large-Scale Knowledge Graph Completion (arXiv)

Copyright (c) 2023 Graphcore Ltd. Licensed under the MIT License.

The included code is released under the MIT license (see details of the license).

See notices for dependencies, credits, derived work and further details.