# pix2pix-tensorflow

A TensorFlow implementation of Image-to-Image Translation with Conditional Adversarial Networks, which learns a mapping from input images to output images.

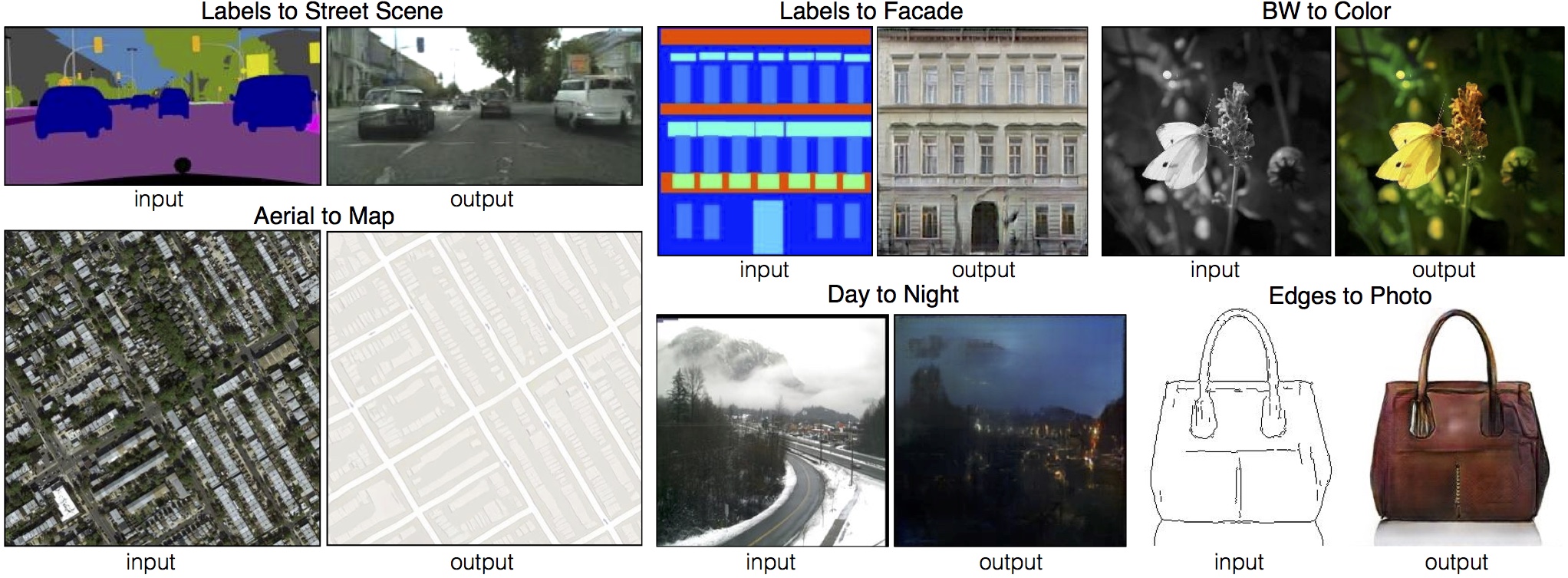

Here are some results generated by the authors of paper:

Setup

Prerequisites

- Linux

- Python with NumPy

- NVIDIA GPU + CUDA 8.0 + cuDNN v5.1

- TensorFlow 0.11

Getting Started

- Clone this repo:

git clone git@github.com:yenchenlin/pix2pix-tensorflow.git

cd pix2pix-tensorflow

- Download the dataset (script borrowed from torch code):

bash ./download_dataset.sh facades

- Train the model:

python main.py --phase train

- Test the model:

python main.py --phase test

Results

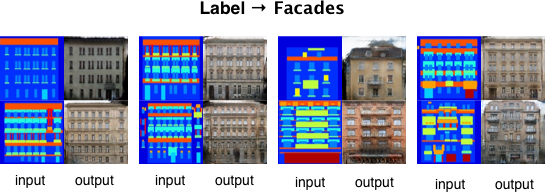

Here is the results generated from this implementation:

More results on other datasets coming soon!

Note: To keep the discriminator (D) network from converging too quickly, the generator (G) network is updated twice for each D update. This differs from the original paper but matches DCGAN-tensorflow, on which this project is based.
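The update schedule described in the note can be sketched roughly as follows. This is a minimal illustration, not the repository's training code: `train`, `d_step`, `g_step`, and `g_updates_per_d` are hypothetical names, and the step functions stand in for whatever applies one discriminator or generator optimizer update.

```python
def train(batches, d_step, g_step, g_updates_per_d=2):
    """Run one D update followed by `g_updates_per_d` G updates per batch.

    `d_step` and `g_step` are hypothetical callables that each apply a
    single optimizer update using the given batch.
    """
    for batch in batches:
        d_step(batch)
        for _ in range(g_updates_per_d):
            g_step(batch)


# Usage: record the order of updates with stand-in step functions.
log = []
train(
    batches=["batch0", "batch1"],
    d_step=lambda b: log.append(("D", b)),
    g_step=lambda b: log.append(("G", b)),
)
print(log)
```

Setting `g_updates_per_d=1` in this sketch would give the one-to-one schedule that the note contrasts with.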

Train

The code currently supports the CMP Facades dataset. Reproducing the results shown above takes 200 epochs of training. The exact training time depends on your hardware.

Test

Test the model on the validation set of the CMP Facades dataset. Given the corresponding label images, it writes the synthesized images to the ./test directory.
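After a test run, the generated files can be listed with a short script such as the one below. It is only a sketch: `list_generated_images` is a hypothetical helper, and the file extensions are an assumption, since the output format is not stated here.

```python
import glob
import os


def list_generated_images(directory="./test", extensions=("png", "jpg")):
    """Return the sorted paths of image files found directly in `directory`.

    The extensions are an assumption; adjust them to match the files
    that the test phase actually writes.
    """
    paths = []
    for ext in extensions:
        paths.extend(glob.glob(os.path.join(directory, "*." + ext)))
    return sorted(paths)


if __name__ == "__main__":
    for path in list_generated_images():
        print(path)
```

If the directory does not exist yet, the helper returns an empty list instead of raising an error.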

Acknowledgments

The code borrows heavily from pix2pix and DCGAN-tensorflow. Thanks to their authors for the excellent work!