This repository contains the code and results for the paper titled "Optimising Text2Image Generation via Search and Negative Prompts". The goal of this work is to enhance the text-to-image generation process through optimization techniques involving search and negative prompts.

Text-to-image generation has seen significant advancements in recent years, but improving the quality and relevance of generated images remains a challenging task. This repository presents an approach that leverages search algorithms and negative prompts to optimize the text-to-image generation process.

This notebook runs the optimization process: it fetches images from the DiffusionDB database and optimizes their prompts to improve image generation.
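
As a rough illustration of what "search over negative prompts" can look like, here is a minimal greedy-search sketch. It is not the algorithm from the paper: the function names, the candidate terms, and the toy scoring function are all hypothetical and only stand in for a real scorer, which would presumably generate an image with the negative prompt and measure how well it matches the original prompt.

```python
from typing import Callable, Iterable, List, Tuple


def greedy_negative_prompt_search(
    prompt: str,
    candidates: Iterable[str],
    score: Callable[[str, str], float],
    max_terms: int = 3,
) -> Tuple[str, float]:
    """Greedily add the candidate term that most improves score(prompt, negative).

    Hypothetical helper: a generic greedy search, not the paper's method.
    """
    remaining = list(candidates)
    chosen: List[str] = []
    best_score = score(prompt, "")  # baseline: no negative prompt

    while remaining and len(chosen) < max_terms:
        # Score every one-term extension of the current negative prompt.
        trials = [(score(prompt, ", ".join(chosen + [t])), t) for t in remaining]
        trial_score, term = max(trials)
        if trial_score <= best_score:
            break  # no candidate improves the score; stop searching
        chosen.append(term)
        remaining.remove(term)
        best_score = trial_score

    return ", ".join(chosen), best_score


def toy_score(prompt: str, negative: str) -> float:
    """Stand-in scorer (assumption): a fixed weight per negative term.

    A real scorer would generate an image and evaluate it against the prompt.
    """
    weights = {"blurry": 0.3, "text": 0.1, "low quality": 0.2, "cat": -0.5}
    terms = [t for t in negative.split(", ") if t]
    return sum(weights.get(t, 0.0) for t in terms)


negative, value = greedy_negative_prompt_search(
    "a photo of a cat on a sofa",
    ["blurry", "cat", "text", "low quality"],
    toy_score,
)
print(negative, value)
```

With the toy scorer, the search never selects "cat", because adding it lowers the score. Swapping `toy_score` for a function that calls an image generator and an evaluation model is the only change needed to try the idea on real data.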

The purpose of this notebook is to take the images generated in the previous notebook and produce the images in the format used for human evaluation.

This notebook processes the outcomes of the optimization process and the generated form images. It calculates the metrics used in the results section of the paper.

The results obtained from executing the notebooks demonstrate the effectiveness of the proposed approach in improving text-to-image generation quality.

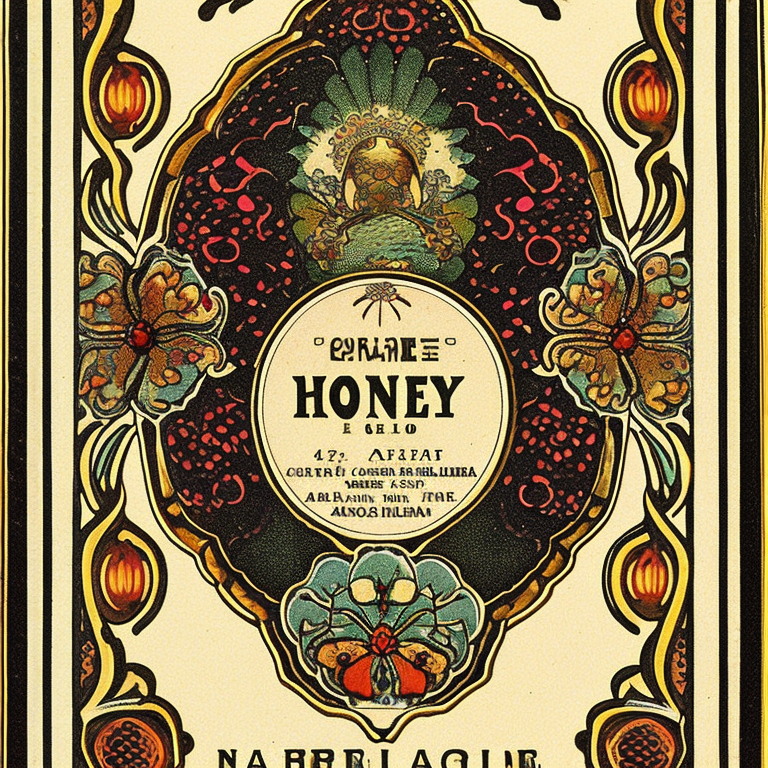

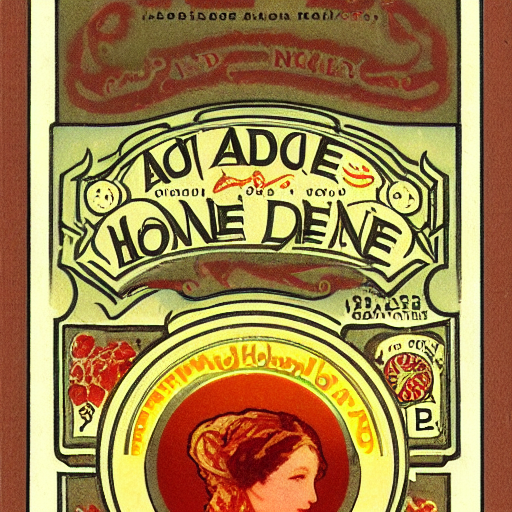

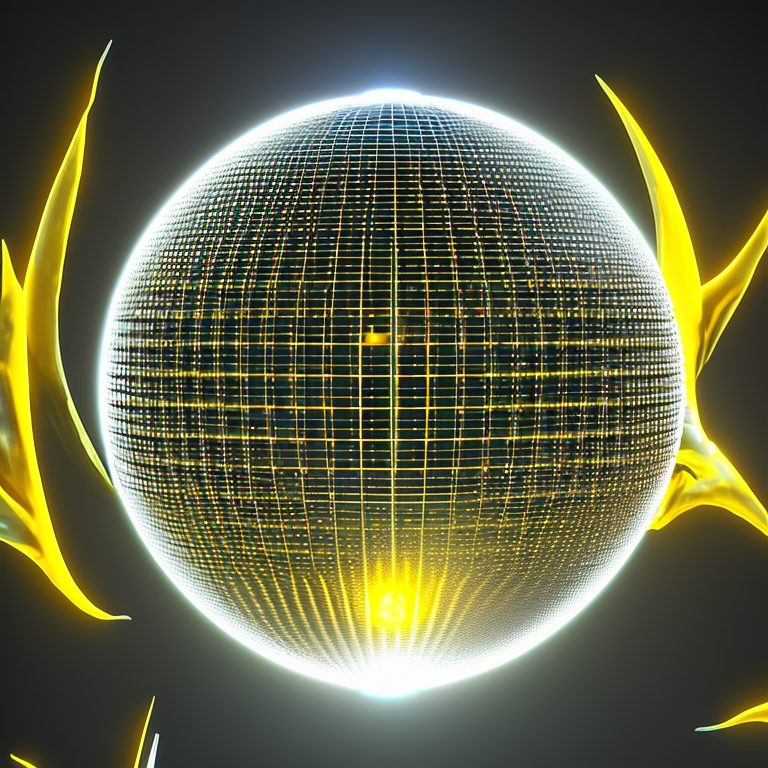

As the example images show, for different prompts the generation process proposed in our approach improves the adequacy of the image with respect to the prompt.

The rest of the images are stored in the results/ folder, while form_imgs/ contains the images used in the human evaluation form.

The results of the research are contained in the Generate_Results.ipynb notebook.

For our experiments we used Stable Diffusion v2 implemented by Stability AI, BLIP implemented by Salesforce and all-mpnet-base-v2 implemented by SBERT.
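
all-mpnet-base-v2 is a sentence-embedding model, and embeddings of this kind are usually compared with cosine similarity. This README does not say how the three models are combined, so the sketch below rests on an assumption: that a BLIP caption of a generated image is compared with the original prompt in embedding space. The vectors are toy values; in practice they would come from the embedding model.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_a * norm_b)


# Toy embeddings (assumption): stand-ins for prompt and caption vectors.
prompt_vec = [1.0, 0.0, 1.0]
matching_caption_vec = [2.0, 0.0, 2.0]   # same direction as the prompt
unrelated_caption_vec = [0.0, 1.0, 0.0]  # orthogonal to the prompt

high = cosine_similarity(prompt_vec, matching_caption_vec)
low = cosine_similarity(prompt_vec, unrelated_caption_vec)
print(high, low)
```

A caption whose embedding points in the same direction as the prompt scores close to 1, while an unrelated caption scores close to 0, which is what makes the measure usable as a prompt-adequacy signal.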

All experiments were conducted on two 48 GB Nvidia Quadro RTX 8000 GPUs and an Intel Xeon Bronze 3206R CPU @ 1.90GHz.

For any questions or feedback regarding this repository, feel free to contact guillermo.iglesias@upm.es.