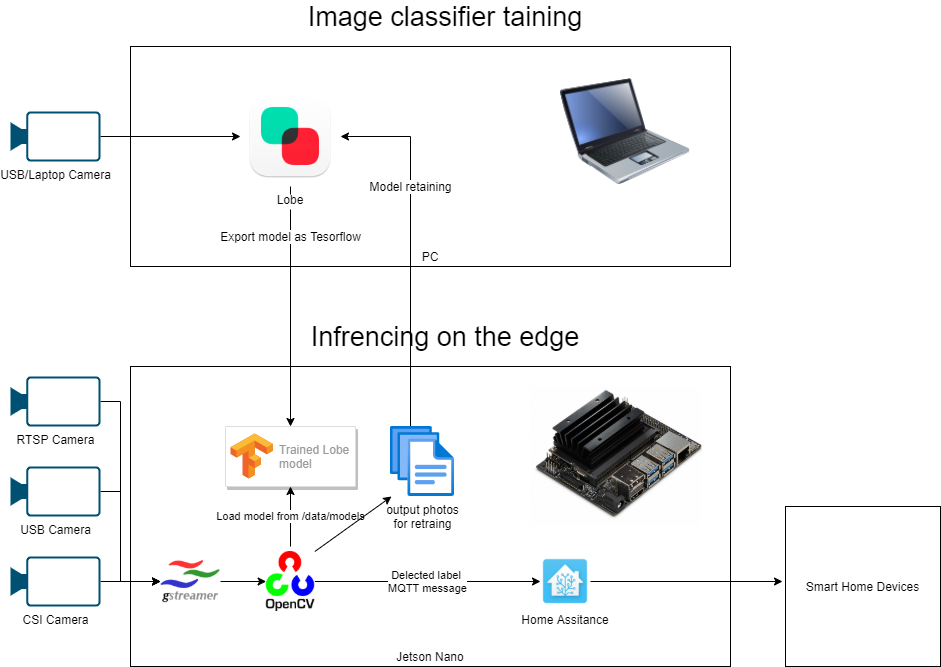

The goal of this project is to demonstrate AI capabilities on smart home platforms such as Home Assistant, and to provide a foundation for community contributions.

The Binha starter kit is made up of several hardware and software components. We chose to cooperate with Nvidia and deploy all software on the Nvidia Jetson Nano, a small-form-factor edge device with a GPU capable of accelerating deep learning inference and training.

We chose Microsoft Lobe for image classification because it is very easy to use and free of charge.

For video analytics we use GStreamer and OpenCV, because both come preinstalled on the Nvidia Jetson base image and are easy to use.
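A minimal sketch of how GStreamer and OpenCV fit together: OpenCV can open a GStreamer pipeline string as a video source. The helper names and default resolutions below are hypothetical (they are not taken from Binha's `classifier.py`), and the pipelines assume OpenCV was built with GStreamer support (check with `cv2.getBuildInformation()`) and that the Jetson elements `nvarguscamerasrc`, `nvvidconv` and `nvv4l2decoder` are available.

```python
"""Hypothetical helpers that build GStreamer pipeline strings for OpenCV.

Every pipeline ends in an appsink that delivers BGR frames, which is the
color order OpenCV expects. Names and defaults are illustrative only.
"""


def csi_pipeline(width=1280, height=720, fps=30, flip_method=0):
    """CSI camera through the Jetson Argus camera stack."""
    return (
        "nvarguscamerasrc ! "
        f"video/x-raw(memory:NVMM), width={width}, height={height}, "
        f"format=NV12, framerate={fps}/1 ! "
        f"nvvidconv flip-method={flip_method} ! "
        "video/x-raw, format=BGRx ! "
        "videoconvert ! video/x-raw, format=BGR ! appsink"
    )


def usb_pipeline(device="/dev/video0", width=640, height=480, fps=30):
    """USB (V4L2) camera; assumes it supports raw output at this mode."""
    return (
        f"v4l2src device={device} ! "
        f"video/x-raw, width={width}, height={height}, framerate={fps}/1 ! "
        "videoconvert ! video/x-raw, format=BGR ! appsink"
    )


def rtsp_pipeline(url, latency_ms=200):
    """H.264 RTSP stream decoded with the Jetson hardware decoder."""
    return (
        f"rtspsrc location={url} latency={latency_ms} ! "
        "rtph264depay ! h264parse ! nvv4l2decoder ! "
        "nvvidconv ! video/x-raw, format=BGRx ! "
        "videoconvert ! video/x-raw, format=BGR ! appsink"
    )
```

To read frames, pass one of these strings to `cv2.VideoCapture(pipeline, cv2.CAP_GSTREAMER)` and call `read()` in a loop. Doing the NV12 to BGRx conversion in `nvvidconv` keeps most of the color conversion off the CPU.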

More advanced video analytics frameworks exist, such as Microsoft Live Video Analytics (LVA) and the Nvidia DeepStream SDK; we are considering adding support for them in the future.

The device can also be managed remotely as an IoT Edge device, with all components deployed as Docker containers through Azure IoT Edge. However, to keep this solution simple and easy to learn, we decided to keep the technology stack small.

This project assumes you are starting with a Wi-Fi-connected, headless Jetson Nano and a USB camera. We provide a bill of materials (BOM) and full instructions on where to order the parts and how to assemble them.

- Jetson Nano

- Intel WiFi

- Fan

- TODO: complete the BOM with links to purchase from

- For the initial setup: an HDMI cable, keyboard, mouse and a PC

- Lobe

- A remote desktop and SSH file-transfer client; we used MobaXterm, whose free version supports both

TODO: add step by step instructions with photos

Follow Nvidia's Jetson Nano Developer Kit instructions to prepare the base image microSD card and complete the initial setup.

Complete the Ubuntu setup and connect to your home Wi-Fi. Write down the device's local IP address (for example, from the output of `hostname -I`) so you can connect over SSH and finish the software setup remotely from your PC.
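If you prefer to look the address up from Python while a monitor and keyboard are still attached, here is a minimal sketch. The function name and the probe address are illustrative assumptions; connecting a UDP socket sends no packets, it only makes the kernel choose the outbound interface, whose address is then read back.

```python
import socket


def local_ip(probe_host="8.8.8.8", probe_port=80):
    """Return the IPv4 address of the interface used for outbound traffic.

    connect() on a UDP socket only selects a route; nothing is sent.
    Falls back to 127.0.0.1 when no route exists (e.g. Wi-Fi is down).
    """
    try:
        with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
            s.connect((probe_host, probe_port))
            return s.getsockname()[0]
    except OSError:
        return "127.0.0.1"


if __name__ == "__main__":
    print(local_ip())
```

If this prints `127.0.0.1`, the device is not connected to your network yet.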

- Download and install Microsoft Lobe.

- Watch Lobe's tour to get a basic idea of how to use it and how to train a computer vision image classifier.

SSH into your Jetson device from your PC (we used MobaXterm). You will need to reboot during the installation.

The Jetson Nano image comes with OpenCV preinstalled. The only requirement you need to install is TensorFlow 1.15.3, which is needed to run the TensorFlow model exported from Lobe. This process can take about 40 minutes.

The following commands are based on Nvidia's installation guide; check it in case the instructions change in the future: https://docs.nvidia.com/deeplearning/frameworks/install-tf-jetson-platform/index.html

```bash
sudo apt-get update
sudo apt upgrade

# system libraries listed as prerequisites in the Nvidia guide
sudo apt-get install libhdf5-serial-dev hdf5-tools libhdf5-dev zlib1g-dev zip libjpeg8-dev liblapack-dev libblas-dev gfortran
sudo apt-get install python3-pip

# pinned Python packages listed as prerequisites in the Nvidia guide
pip3 install -U pip testresources setuptools==49.6.0
pip3 install -U numpy==1.16.1 future==0.18.2 mock==3.0.5 h5py==2.10.0 keras_preprocessing==1.1.1 keras_applications==1.0.8 gast==0.2.2 futures protobuf pybind11

# TensorFlow 1.15.3 from Nvidia's JetPack 4.4 (jp/v44) wheel index
sudo pip3 install --pre --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v44 'tensorflow==1.15.3'

sudo reboot
```
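After the reboot you may want to confirm that TensorFlow imports and sees the GPU. The sketch below is a hypothetical helper (not part of the Binha repository); it assumes TensorFlow 1.x, where `tf.test.is_gpu_available()` exists, and it ignores any local `+nv...` suffix in the version string.

```python
def check_tensorflow(expected_version="1.15.3"):
    """Return (ok, message) describing the local TensorFlow install."""
    try:
        import tensorflow as tf
    except ImportError as exc:
        return False, "TensorFlow is not importable: {}".format(exc)

    # Compare only the public part of the version (drop e.g. "+nv20.9").
    found = tf.__version__.split("+")[0]
    if found != expected_version:
        return False, "found TensorFlow {}, expected {}".format(found, expected_version)

    # Available in TF 1.x; it is deprecated in TF 2.x.
    if not tf.test.is_gpu_available():
        return False, "TensorFlow {} loaded, but no GPU is visible".format(found)
    return True, "TensorFlow {} with GPU support".format(found)


if __name__ == "__main__":
    ok, message = check_tensorflow()
    print(("OK: " if ok else "PROBLEM: ") + message)
```

Save it under any name (for example `check_tf.py`) and run it with `python3 check_tf.py`. The import is done inside the function so a missing install is reported instead of crashing.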

Install cURL:

```bash
sudo apt update
sudo apt upgrade
sudo apt install curl
```

Download docker-compose:

```bash
sudo curl -L --fail https://raw.githubusercontent.com/linuxserver/docker-docker-compose/master/run.sh -o /usr/local/bin/docker-compose
```

Make it executable:

```bash
sudo chmod +x /usr/local/bin/docker-compose
```

Verify that it is installed properly:

```bash
sudo /usr/local/bin/docker-compose --version
```

The first run pulls the docker-compose container image; after that you should see something like:

```
docker-compose version 1.28.0, build d02a7b1a
```

See the original thread here.
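To perform the same check from a script, a minimal sketch could parse the version line shown above. The function names are hypothetical, and the regular expression only matches the docker-compose v1 output format shown here; it returns `None` for anything else, such as image-pull progress.

```python
import re
import subprocess

# Matches the v1 format, e.g. "docker-compose version 1.28.0, build d02a7b1a"
_VERSION_RE = re.compile(r"docker-compose version (\d+)\.(\d+)\.(\d+)")


def parse_compose_version(output):
    """Extract (major, minor, patch) from `docker-compose --version` output."""
    match = _VERSION_RE.search(output)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups())


def installed_compose_version(binary="/usr/local/bin/docker-compose"):
    """Run the wrapper script (with sudo, as above) and parse its version."""
    result = subprocess.run(
        ["sudo", binary, "--version"],
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        universal_newlines=True,  # Python 3.6-compatible text mode
        check=False,
    )
    return parse_compose_version(result.stdout)
```

`installed_compose_version()` needs Docker and the wrapper script installed; `parse_compose_version()` can be used on any captured output.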

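```bash
cd ~
mkdir -p repos
cd repos
git clone https://github.com/guybartal/Binha.git
cd Binha
sudo pip3 install -r requirements.txt

# create a folder which will hold all our image classifiers
# (/data does not exist on a fresh image and needs root to create)
sudo mkdir -p /data/models

# create a folder which will hold the frames classified by the model,
# so we can review them and retrain to improve the model
sudo mkdir -p /data/output

# let your user write to both folders without sudo
sudo chown -R "$USER":"$USER" /data
```

Next, go to `ha/README.md`.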

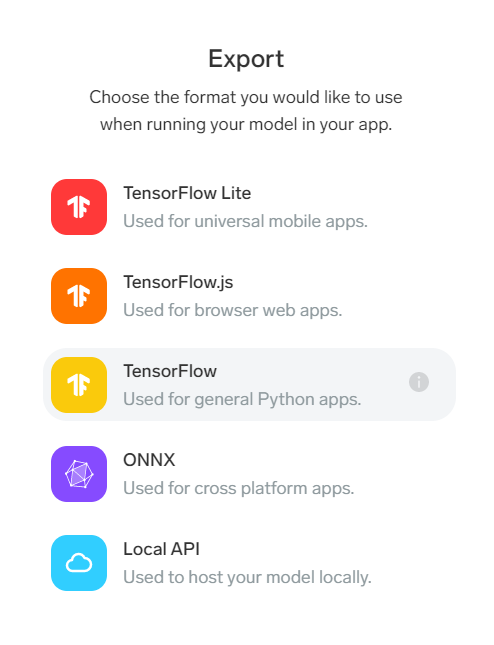

You can train your own image classifier in Lobe and export it in TensorFlow format, or use our pre-trained models.

Upload your exported Lobe TensorFlow model folder into /data/models on your Jetson device. Once running, the classifier performs inference on your CSI camera feed and saves one frame out of every 30 to the output folder, together with the predicted label, so you can test and retrain your model.
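The "one frame out of every 30, with its label" behavior can be sketched as two small helpers. This is a hypothetical illustration, not Binha's actual `classifier.py`; in particular the `<label>/<timestamp>_<frame>.jpg` file layout is an assumption chosen so that saved frames end up grouped by predicted label.

```python
import os
import time


def should_save(frame_index, every_n=30):
    """True for frames 0, 30, 60, ... when every_n is 30."""
    return frame_index % every_n == 0


def output_path(output_dir, label, frame_index, timestamp=None):
    """Build <output_dir>/<label>/<timestamp>_<frame>.jpg (hypothetical layout).

    Characters other than letters, digits, '-' and '_' in the label are
    replaced with '_' so the label is always a safe folder name.
    """
    if timestamp is None:
        timestamp = int(time.time())
    safe_label = "".join(c if c.isalnum() or c in "-_" else "_" for c in label)
    file_name = "{}_{:06d}.jpg".format(timestamp, frame_index)
    return os.path.join(output_dir, safe_label, file_name)
```

In a capture loop you would call `should_save(i)` for each frame and, when it returns `True`, create the folder with `os.makedirs(os.path.dirname(path), exist_ok=True)` and write the frame with `cv2.imwrite(path, frame)`. Grouping by label makes it easy to spot misclassified frames and pick images for retraining.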

SSH into your Jetson device and run the following command (change the path to match your trained model's path):

```bash
python3 classifier.py --model_dir /data/models/hands --output_dir /data/output
```

To use the RTSP variant of the classifier, run `classifier-rtsp.py` with the same arguments (again, change the path to match your trained model's path):

```bash
python3 classifier-rtsp.py --model_dir /data/models/hands --output_dir /data/output
```

If you want to run the classifier inside Docker, use this command:

```bash
# /data/output is mounted as well, so frames saved inside the
# container persist on the host
docker run -it --name lobe --net=host --runtime nvidia \
  -e DISPLAY=$DISPLAY \
  -w /opt/nvidia/deepstream/deepstream-5.0 \
  -v /tmp/argus_socket:/tmp/argus_socket \
  -v /usr/lib/aarch64-linux-gnu:/usr/lib/aarch64-linux-gnu \
  -v /usr/lib/python3.6/dist-packages/cv2:/usr/lib/python3.6/dist-packages/cv2 \
  -v /home/nvidia/ds_workspace:/home/mounted_workspace \
  -v /data/models:/data/models \
  -v /data/output:/data/output \
  --device /dev/video0:/dev/video0 \
  -v /tmp/.X11-unix/:/tmp/.X11-unix \
  guybartal/smarthomelobe:v0.3 /bin/bash
```

Then, inside the container:

```bash
cd /app
python3 classifier.py --model_dir /data/models/replace_with_your_model_dir --output_dir /data/output
```
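If you are unsure which folder name to pass as `--model_dir`, a small sketch can list the candidates. It assumes (not confirmed by this README) that each exported Lobe model is a folder directly under `/data/models` in TensorFlow SavedModel format, i.e. containing a `saved_model.pb` file; `find_models` is a hypothetical name.

```python
import os


def find_models(models_root="/data/models"):
    """Return sorted names of sub-folders that contain saved_model.pb.

    Assumption: every model is a SavedModel folder directly under models_root.
    Returns an empty list if models_root does not exist.
    """
    if not os.path.isdir(models_root):
        return []
    return sorted(
        name
        for name in os.listdir(models_root)
        if os.path.isfile(os.path.join(models_root, name, "saved_model.pb"))
    )


if __name__ == "__main__":
    for name in find_models():
        print("--model_dir /data/models/{}".format(name))
```

Run it on the host or inside the container (both see `/data/models` through the volume mount) and copy one of the printed arguments into the classifier command.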

We really appreciate any feedback related to Binha, and we also enjoy seeing what you're working on! There is a growing community of Binha users, and it's easy to get involved:

- Ask a question and discuss Binha related topics or share your project on the Binha GitHub Discussions

- Report a bug by creating an issue