[CVPR 2023] Improving Zero-shot Generalization and Robustness of Multi-modal Models


Yunhao Ge*, Jie Ren*, Andrew Gallagher, Yuxiao Wang, Ming-Hsuan Yang, Hartwig Adam, Laurent Itti, Balaji Lakshminarayanan, Jiaping Zhao (* equal contribution)

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023

Project Page | Video | Paper

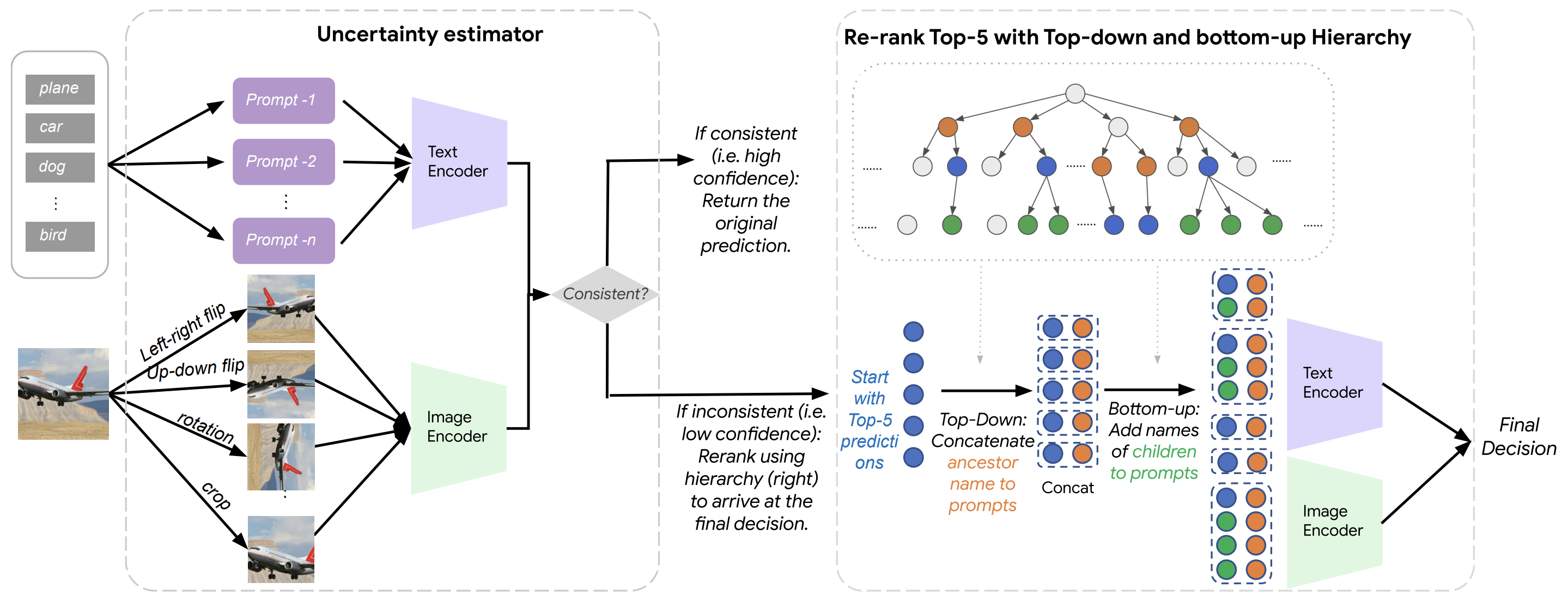

Figure: Our zero-shot classification pipeline consists of two steps: confidence estimation via self-consistency (left block) and top-down and bottom-up label augmentation using the WordNet hierarchy (right block).
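The first step of the pipeline flags predictions that are not self-consistent. Below is a minimal sketch assuming one simple form of self-consistency: a sample is treated as low-confidence when the top-1 class changes across prompt templates. The input layout (one score vector per prompt) and the names `top1_per_prompt`, `is_low_confidence`, and `min_agreement` are hypothetical; this is not the repo's implementation, and the paper's exact consistency checks may differ.

```python
from typing import List, Sequence


def argmax(scores: Sequence[float]) -> int:
    """Index of the largest score (the first one on ties)."""
    return max(range(len(scores)), key=lambda i: scores[i])


def top1_per_prompt(scores_per_prompt: List[Sequence[float]]) -> List[int]:
    """Top-1 class index under each prompt template.

    scores_per_prompt[p][c] is assumed to be the image-text similarity of
    class c under prompt template p (hypothetical layout).
    """
    return [argmax(scores) for scores in scores_per_prompt]


def is_low_confidence(scores_per_prompt: List[Sequence[float]],
                      min_agreement: float = 1.0) -> bool:
    """Flag a sample whose top-1 prediction is not self-consistent.

    The sample is low-confidence when the fraction of prompts agreeing
    with the most common top-1 label falls below min_agreement
    (1.0 means every prompt must agree).
    """
    preds = top1_per_prompt(scores_per_prompt)
    majority = max(set(preds), key=preds.count)
    agreement = preds.count(majority) / len(preds)
    return agreement < min_agreement


# Two prompt templates, three classes.
print(is_low_confidence([[0.2, 0.7, 0.1], [0.3, 0.6, 0.1]]))  # False
print(is_low_confidence([[0.2, 0.7, 0.1], [0.5, 0.4, 0.1]]))  # True
```

In this sketch, lowering `min_agreement` flags fewer samples; raising it to 1.0 flags any sample on which even one prompt disagrees.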

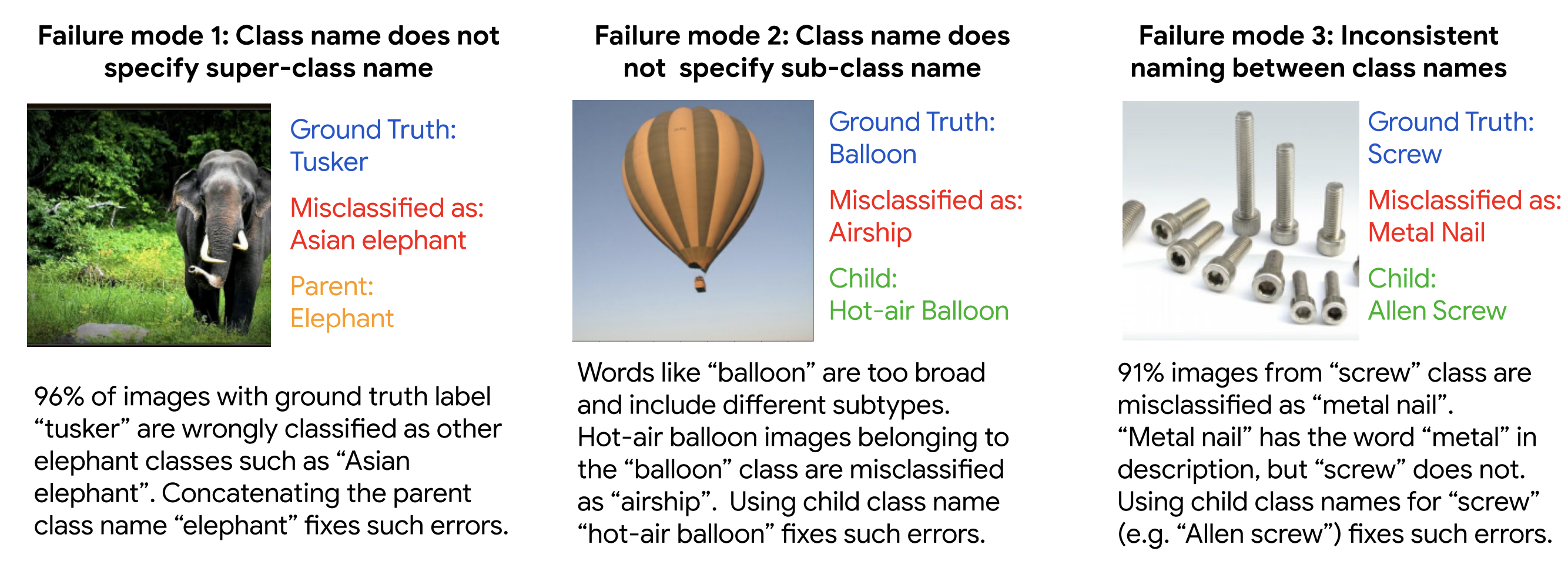

Figure: Typical failure modes in cases where the top-5 prediction was correct but the top-1 prediction was wrong.
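These failure cases can be picked out directly from per-class scores: the ground-truth label is among the five highest-scoring classes, yet a different class ranks first. The helper below is a hypothetical stdlib sketch, not part of the repo, assuming one score vector per image and integer class labels.

```python
from typing import List, Sequence


def top_k(scores: Sequence[float], k: int) -> List[int]:
    """Class indices of the k highest scores, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def top5_but_not_top1(scores: List[Sequence[float]], labels: List[int]) -> List[int]:
    """Indices of samples whose label is in the top-5 but is not the top-1."""
    hits = []
    for idx, (sample_scores, label) in enumerate(zip(scores, labels)):
        ranked = top_k(sample_scores, 5)
        if label in ranked and ranked[0] != label:
            hits.append(idx)
    return hits


scores = [
    [0.1, 0.9, 0.0, 0.0, 0.0, 0.0],     # top-1 correct
    [0.5, 0.3, 0.1, 0.05, 0.03, 0.02],  # label 1 ranks 2nd: top-5 but not top-1
    [0.5, 0.2, 0.15, 0.1, 0.05, 0.0],   # label 5 ranks 6th: wrong even at top-5
]
labels = [1, 1, 5]
print(top5_but_not_top1(scores, labels))  # [1]
```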

- Clone this repo:

  `git clone https://github.com/gyhandy/Hierarchy-CLIP.git`

  `cd Hierarchy-CLIP`

- Install the required library (Scenic):

  `git clone https://github.com/google-research/scenic.git`

  `cd scenic`

  `pip install .`

- Most of the datasets used in the paper can be loaded through tensorflow_datasets with our provided function. Note: please make sure you have a registered ImageNet account.

  `dset = load_dataset('imagenet2012')`

- Alternatively, you can first download ImageNet, process it with tensorflow_datasets, and then load it with:

  `dset = load_dataset_from(data_dir='YOUR/LOCAL/PATH/imagenet2012', dataset='imagenet2012', split='validation')`

- To use other datasets (paper Table 2), e.g., caltech101, Food-101, Flowers-102, CIFAR-100, please use or adapt our function load_dataset_info(). For example, for caltech101:

  `caltech101_dset, caltech101_dset_info = load_dataset_info('caltech101', split='test')`

- The WordNet hierarchy is based on the GitHub repo https://github.com/niharikajainn/imagenet-ancestors-descendants.

- We have already placed these files in imagenet-ancestors-descendants, and we also provide imagenet_label_to_wordnet_synset.txt.
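The second step of the pipeline augments class names with the WordNet hierarchy: top-down (adding a class's ancestor) and bottom-up (using its descendants). The sketch below illustrates that idea under loudly stated assumptions: the toy `PARENT`/`CHILDREN` dictionaries, the prompt wording, and taking the max over a class's prompt similarities are all hypothetical choices, not the repo's code, and it does not parse the actual files in imagenet-ancestors-descendants. The image-prompt similarities themselves would come from a CLIP-style encoder, which is not included here.

```python
from typing import Dict, List, Sequence

# Toy hierarchy for illustration only; the real parent/child relations come
# from the WordNet files in imagenet-ancestors-descendants (format not assumed).
PARENT: Dict[str, str] = {"tabby cat": "domestic cat", "tiger cat": "domestic cat"}
CHILDREN: Dict[str, List[str]] = {
    "domestic cat": ["tabby cat", "tiger cat", "Persian cat"],
}


def top_down_prompts(label: str, parent_of: Dict[str, str]) -> List[str]:
    """Top-down augmentation: attach the class's parent to its name."""
    parent = parent_of.get(label)
    if parent is None:
        return [f"a photo of a {label}."]
    return [f"a photo of a {label}, which is a kind of {parent}."]


def bottom_up_prompts(label: str, children_of: Dict[str, List[str]]) -> List[str]:
    """Bottom-up augmentation: one prompt for the class and one per child."""
    names = [label] + children_of.get(label, [])
    return [f"a photo of a {name}." for name in names]


def class_score(prompt_scores: Sequence[float]) -> float:
    """Combine a class's image-prompt similarities by taking the max (assumed rule)."""
    return max(prompt_scores)


print(top_down_prompts("tabby cat", PARENT))
# ['a photo of a tabby cat, which is a kind of domestic cat.']
print(len(bottom_up_prompts("domestic cat", CHILDREN)))  # 4
```

Taking the max in this sketch lets a coarse class score well when the image matches any one of its finer-grained children; averaging would be an equally plausible alternative.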

We provide a Colab notebook containing all the details:

`Hierarcy_Clip.ipynb`

Got questions? We would love to answer them! Please reach out by email.

You may cite us in your research as:

@inproceedings{ge2023improving,

title={Improving Zero-shot Generalization and Robustness of Multi-modal Models},

author={Ge, Yunhao and Ren, Jie and Gallagher, Andrew and Wang, Yuxiao and Yang, Ming-Hsuan and Adam, Hartwig and Itti, Laurent and Lakshminarayanan, Balaji and Zhao, Jiaping},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={11093--11101},

year={2023}

}