This is a sample service for audit logging. It provides two HTTP endpoints:

- POST `/write`
  - `event_type` is required
  - Use `event_fields` for saving any other data

- GET `/search`
  - `event_type` and `time_start` are required
  - `time_stop` is optional
  - `query_params` is optional and used for filtering by the `event_fields` saved while writing events
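The request rules above can be spelled out as plain validation functions. This is a stdlib-only sketch: a FastAPI service would normally express these rules with Pydantic models, and the function names here (`validate_write`, `validate_search`) are hypothetical, not the service's actual code.

```python
from datetime import datetime
from typing import Any, Optional


def _require_event_type(body: dict[str, Any]) -> str:
    # event_type is mandatory for both endpoints
    event_type = body.get("event_type")
    if not isinstance(event_type, str) or not event_type:
        raise ValueError("event_type is required")
    return event_type


def validate_write(body: dict[str, Any]) -> dict[str, Any]:
    """POST /write: event_type is required; event_fields carries any other data."""
    event_type = _require_event_type(body)
    event_fields = body.get("event_fields", {})
    if not isinstance(event_fields, dict):
        raise ValueError("event_fields must be a JSON object")
    return {"event_type": event_type, "event_fields": event_fields}


def validate_search(body: dict[str, Any]) -> dict[str, Any]:
    """GET /search: event_type and time_start are required; time_stop and query_params are optional."""
    event_type = _require_event_type(body)
    if "time_start" not in body:
        raise ValueError("time_start is required")
    # ISO 8601 timestamps with a UTC offset, as in the curl examples
    time_start = datetime.fromisoformat(body["time_start"])
    time_stop: Optional[datetime] = None
    if body.get("time_stop") is not None:
        time_stop = datetime.fromisoformat(body["time_stop"])
    query_params = body.get("query_params", {})
    if not isinstance(query_params, dict):
        raise ValueError("query_params must be a JSON object")
    return {"event_type": event_type, "time_start": time_start,
            "time_stop": time_stop, "query_params": query_params}
```

For example, `validate_search({"event_type": "create_account", "time_start": "2020-09-15T15:53:00+05:00"})` accepts the body and fills in `time_stop=None` and an empty `query_params`, while omitting `time_start` raises `ValueError`.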

Both endpoints are protected with HTTP Basic authentication. The username and password are hardcoded for now (duct-taped), so change them however you like.
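The `Authorization: Basic YWRtaW46cGFzc3dvcmQ=` header used in the curl examples is simply the base64 encoding of `admin:password`. The sketch below shows how such a header is built and how a server could check it. It is an illustration, not the service's code: the environment variable names `AUDIT_USERNAME` and `AUDIT_PASSWORD` are hypothetical (in the spirit of the TODO about reading secrets from the environment), with defaults matching the curl examples.

```python
import base64
import os
import secrets


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Basic Authorization header."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def check_basic_auth(header: str) -> bool:
    """Verify a Basic header against credentials from (hypothetical) env vars."""
    # AUDIT_USERNAME / AUDIT_PASSWORD are illustrative names, not the service's config
    expected_user = os.environ.get("AUDIT_USERNAME", "admin")
    expected_pass = os.environ.get("AUDIT_PASSWORD", "password")
    scheme, _, token = header.partition(" ")
    if scheme.lower() != "basic" or not token:
        return False
    try:
        decoded = base64.b64decode(token, validate=True).decode("utf-8")
    except (ValueError, UnicodeDecodeError):  # malformed base64 or non-UTF-8 bytes
        return False
    user, sep, password = decoded.partition(":")
    if not sep:
        return False
    # compare_digest avoids leaking how many characters matched through timing
    user_ok = secrets.compare_digest(user.encode("utf-8"), expected_user.encode("utf-8"))
    pass_ok = secrets.compare_digest(password.encode("utf-8"), expected_pass.encode("utf-8"))
    return user_ok and pass_ok
```

Splitting on the first `:` only matters for passwords: the Basic scheme forbids a colon in the username but allows one in the password.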

See below for example usage:

- Install and start MongoDB Community Edition. You can skip this step if you already have a MongoDB instance running locally.

- (Recommended) Instructions for Docker: https://www.mongodb.com/docs/manual/tutorial/install-mongodb-community-with-docker/

- Instructions for Linux (Ubuntu): https://www.mongodb.com/docs/manual/tutorial/install-mongodb-on-ubuntu/

- Install the required packages: `pip install -r requirements.txt`

- Start the uvicorn server from the root directory of the project: `uvicorn main:app --reload`

- Add events:

  `curl --location 'http://127.0.0.1:8000/write' --header 'Content-Type: application/json' --header 'Authorization: Basic YWRtaW46cGFzc3dvcmQ=' --data '{"event_type": "create_account", "event_fields": {"name": "alperen", "age": 50}}'`

- Search events:

  `curl --location --request GET 'http://127.0.0.1:8000/search' --header 'Content-Type: application/json' --header 'Authorization: Basic YWRtaW46cGFzc3dvcmQ=' --data '{"event_type": "create_account", "time_start": "2020-09-15T15:53:00+05:00", "query_params": {"name": "alperen"}}'`

  `curl --location --request GET 'http://127.0.0.1:8000/search' --header 'Content-Type: application/json' --header 'Authorization: Basic YWRtaW46cGFzc3dvcmQ=' --data '{"event_type": "create_account", "time_start": "2000-09-15T15:53:00+05:00", "query_params": {"age": 50}}'`
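If you prefer scripting over curl, the same requests can be built with Python's standard library. This is a sketch that mirrors the curl examples (local URL, `admin`/`password` credentials, JSON bodies); `build_request` is a hypothetical helper, and the requests are only built here, not sent.

```python
import base64
import json
import urllib.request

BASE_URL = "http://127.0.0.1:8000"  # local uvicorn address used in the curl examples


def _auth_header(username: str, password: str) -> str:
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def build_request(method: str, path: str, payload: dict, username: str = "admin",
                  password: str = "password") -> urllib.request.Request:
    """Build (but do not send) a JSON request mirroring the curl examples."""
    return urllib.request.Request(
        f"{BASE_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": _auth_header(username, password)},
        method=method,
    )


write_req = build_request("POST", "/write", {"event_type": "create_account",
                                              "event_fields": {"name": "alperen", "age": 50}})
# /search takes a JSON body even though it is a GET, just like the curl examples
search_req = build_request("GET", "/search", {"event_type": "create_account",
                                               "time_start": "2020-09-15T15:53:00+05:00",
                                               "query_params": {"name": "alperen"}})

# With the server running, send a request with:
# with urllib.request.urlopen(write_req) as resp:
#     print(resp.status, resp.read().decode("utf-8"))
```

Note that `urllib.request.Request` normalizes header names with `str.capitalize()`, so the content type is read back with `req.get_header("Content-type")`.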

- Since the data schema is not known in advance (event-specific fields), NoSQL is a better choice than SQL. For example, PostgreSQL supports a `jsonb` type for storing JSON data, but it is not as performant.

- MongoDB's write performance is impressive at first glance. However, indexes on many fields slow down writes, so I added indexes only on the common fields.

- Before MongoDB, I tried time series databases because of their performant writes, but the unknown data schema caused issues when searching. I tried InfluxDB, and it creates multiple documents for a single write if the document has multiple fields. That made writing queries complex; it did not even seem possible to do what we are trying to do here.

- So, one more +1 for MongoDB for its simplicity

- I chose FastAPI since it is easy to use

- I kept the directory structure flat because there are fewer than 10 files
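To make the MongoDB choice concrete, here is one plausible way a `/search` body could be translated into a MongoDB filter. It is a sketch under assumptions: the stored document layout (a `timestamp` field holding the write time, and the user data nested under `event_fields`) is hypothetical, not the service's confirmed schema. The resulting dictionary could be passed to a PyMongo collection's `find()`.

```python
from datetime import datetime
from typing import Any, Optional


def build_search_filter(event_type: str, time_start: str, time_stop: Optional[str] = None,
                        query_params: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """Translate a /search body into a MongoDB filter document (hypothetical schema)."""
    # Assumed stored layout: {"event_type": str, "timestamp": datetime, "event_fields": {...}}
    time_range: dict[str, datetime] = {"$gte": datetime.fromisoformat(time_start)}
    if time_stop is not None:
        time_range["$lte"] = datetime.fromisoformat(time_stop)
    mongo_filter: dict[str, Any] = {"event_type": event_type, "timestamp": time_range}
    # Each query param matches a nested event field via dot notation, e.g. "event_fields.name"
    for key, value in (query_params or {}).items():
        mongo_filter[f"event_fields.{key}"] = value
    return mongo_filter
```

Every filter built this way constrains `event_type` and the time range, which is the kind of query that indexes on a few common fields can serve without indexing every event-specific field.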

- Investigate the use of time series databases

- Unit/integration tests

- Implement better auth

- Send logs to an external service

- Read secrets from environment variables instead of hardcoding them

- Enhance search capabilities, e.g. range queries such as `age > 40`
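One possible direction for the search enhancement above, sketched under assumptions: a `query_params` value that is an object could carry comparison operators, e.g. `{"age": {"gt": 40}}`, translated into MongoDB's `$gt`/`$gte`/`$lt`/`$lte`/`$ne` query operators on the nested event fields. The short operator names and the `event_fields.<name>` document layout are hypothetical; the current API does not accept them.

```python
from typing import Any

# Hypothetical operator names for query_params, mapped to MongoDB query operators
OPERATORS = {"gt": "$gt", "gte": "$gte", "lt": "$lt", "lte": "$lte", "ne": "$ne"}


def translate_query_params(query_params: dict[str, Any]) -> dict[str, Any]:
    """Map query_params to MongoDB conditions on event_fields, supporting range operators."""
    conditions: dict[str, Any] = {}
    for field, value in query_params.items():
        if isinstance(value, dict):
            # e.g. {"age": {"gt": 40}} -> {"event_fields.age": {"$gt": 40}}
            unknown = set(value) - set(OPERATORS)
            if unknown:
                raise ValueError(f"unsupported operators for {field}: {sorted(unknown)}")
            conditions[f"event_fields.{field}"] = {OPERATORS[op]: operand
                                                   for op, operand in value.items()}
        else:
            # plain values are treated as exact matches
            conditions[f"event_fields.{field}"] = value
    return conditions
```

The trade-off of this design is that an object value can no longer mean "match this nested object exactly"; a separate key for operators would avoid that ambiguity at the cost of a more verbose request.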

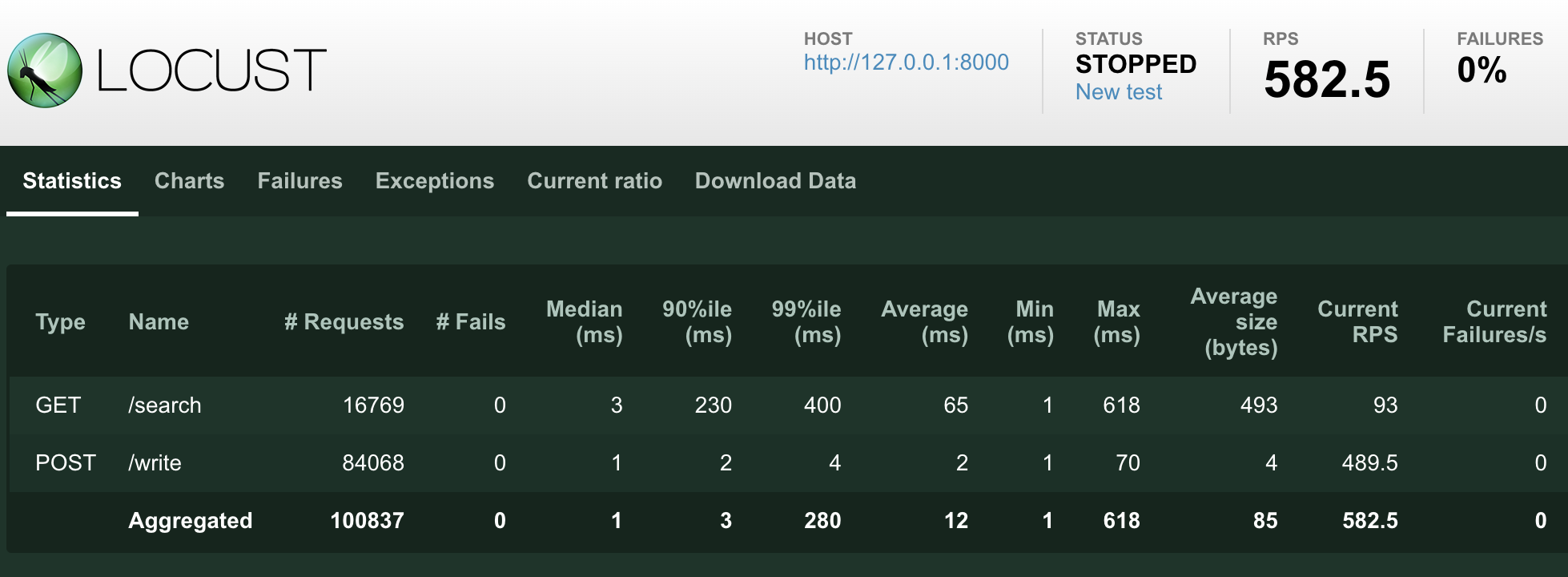

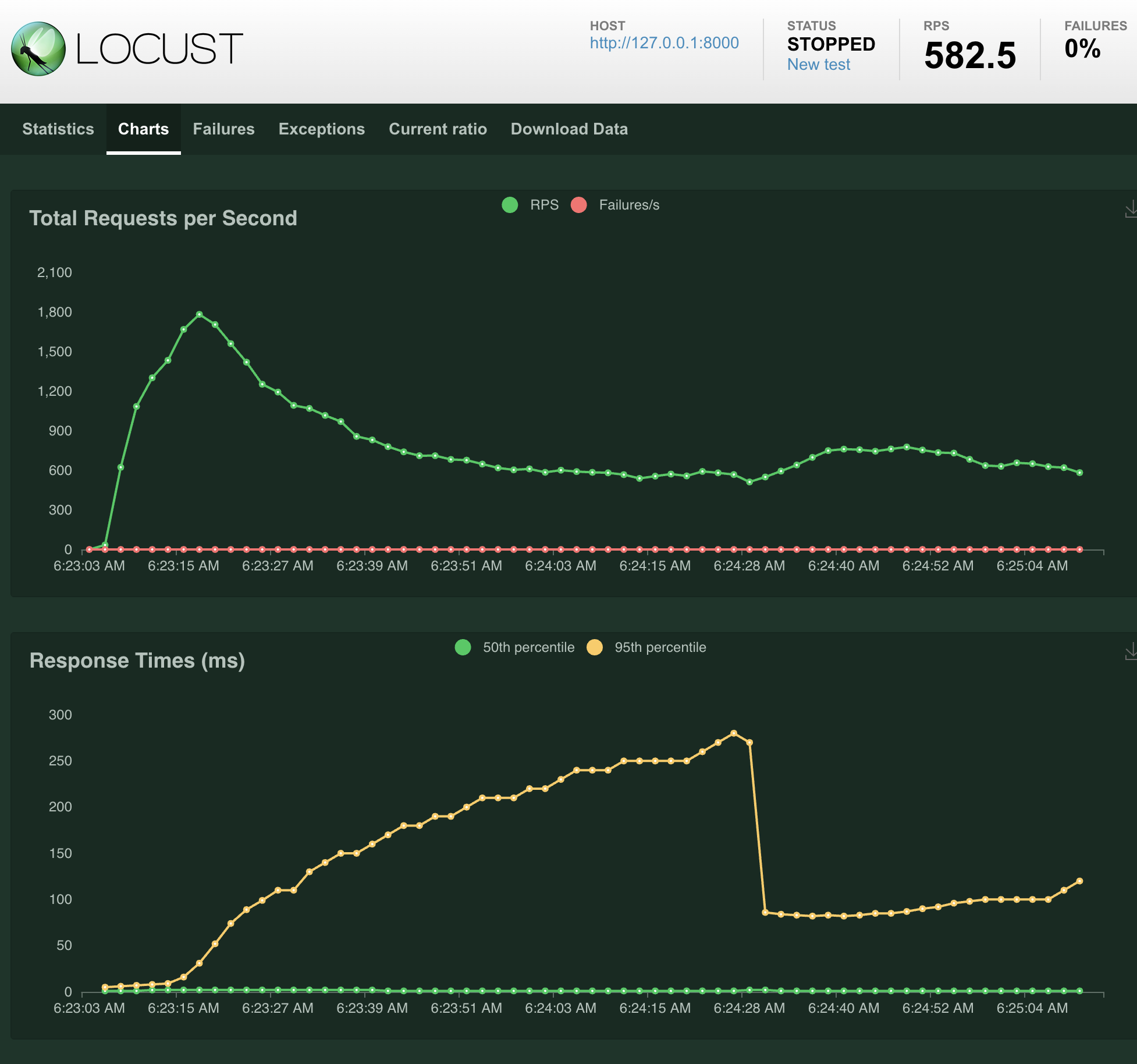

I have added only load tests for now: combined with manually checking the database and the logs afterwards, they act as end-to-end tests that show nothing goes wrong.

- Run the `locust` command in the `load_test` directory

- Click the URL printed in the terminal

- Enter the parameters and the local service URL (http://127.0.0.1:8000)

- Start the test

Here is an example result for 10 users and a write-heavy scenario: