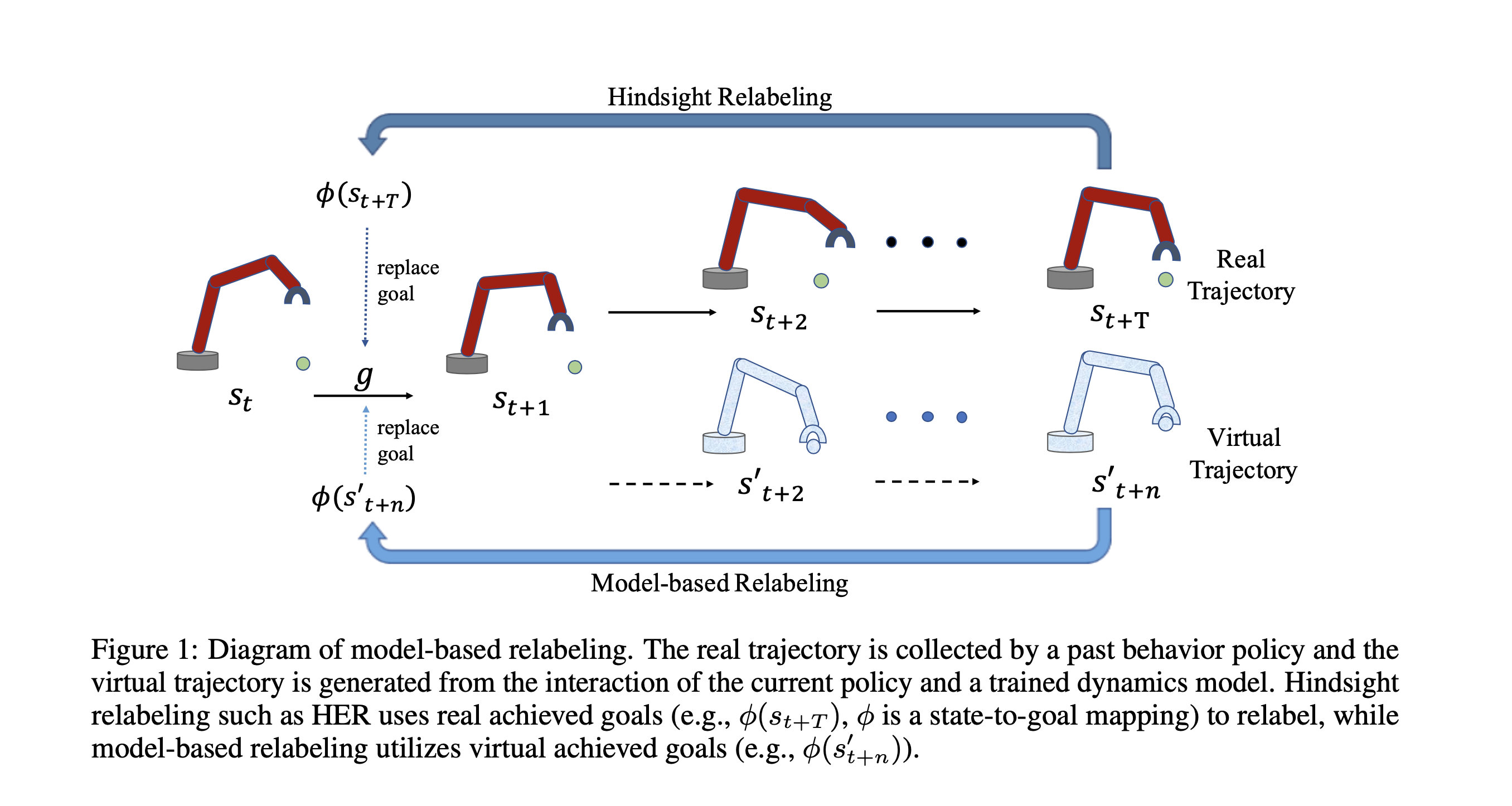

Code for Model-based Hindsight Experience Replay (MHER). MHER is a novel algorithm that leverages model-based achieved goals for both goal relabeling and policy improvement.

MHER can also be used for offline multi-goal RL. The code in the MHER_offline folder is adapted from WGCSL, and the folder also contains the offline datasets for all six environments used in our paper. To train offline MHER and other baselines, install the MHER_offline package and follow the README file in the MHER_offline folder.

Requirements: Python 3.6+, tensorflow, gym, mujoco, mpi4py
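Before installing, it can help to confirm that the listed dependencies are importable. The following is a minimal sketch and not part of the repository; the import names, in particular `mujoco_py` for MuJoCo, are assumptions and may differ for your setup.

```python
import importlib.util

# Assumed import names for the requirements listed above; `mujoco_py` in
# particular is a guess at the MuJoCo binding this code expects.
REQUIRED_MODULES = ["tensorflow", "gym", "mujoco_py", "mpi4py"]


def missing_modules(names):
    """Return the top-level module names that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All listed packages are importable.")
```

The check only looks for the modules on the import path; it does not verify versions or that MuJoCo itself is licensed and configured.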

- Clone the repo and cd into it:
- Install the baselines package:

pip install -e .

Environments: Point2DLargeEnv-v1, Point2D-FourRoom-v1, FetchReach-v1, SawyerReachXYZEnv-v1, Reacher-v2, SawyerDoor-v0.

MHER:

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1 --n_step 5 --mode dynamic --alpha 3 --mb_relabeling_ratio 0.8 --log_path=~/logs/point/ --save_path=~/logs/point/

MHER without MGSL (DDPG + MBR):

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1 --n_step 5 --mode dynamic --alpha 0 --mb_relabeling_ratio 0.8 --no_mgsl True

MHER without MBR (DDPG + SL):

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1 --n_step 5 --mode dynamic --mb_relabeling_ratio 0.8 --no_mb_relabel True

DDPG:

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1 --noher True

HER:

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1

GCSL:

python -m mher.run --env=Point2DLargeEnv-v1 --num_epoch 30 --num_env 1 --mode supervised

Model-based Policy Optimization (MBPO):

python -m mher.run --env=FetchReach-v1 --num_epoch 30 --num_env 1 --mode mbpo --n_step 5

Model-based Value Expansion (MVE):

python -m mher.run --env=FetchReach-v1 --num_epoch 30 --num_env 1 --mode mbpo --n_step 5
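The Point2DLargeEnv-v1 variants above differ only in a handful of flags, so a small helper can assemble them when scripting an ablation sweep. This is a sketch and not part of the repository: `COMMON_FLAGS`, `VARIANT_FLAGS`, and `build_command` are hypothetical names, the flag values are copied from the commands above, and the `--log_path`/`--save_path` options are left out for brevity.

```python
# Flags shared by every Point2DLargeEnv-v1 command listed above.
COMMON_FLAGS = ["--num_epoch", "30", "--num_env", "1"]

# Hypothetical lookup table: extra flags per variant, copied from the commands above.
VARIANT_FLAGS = {
    "mher": ["--n_step", "5", "--mode", "dynamic", "--alpha", "3",
             "--mb_relabeling_ratio", "0.8"],
    "mher_no_mgsl": ["--n_step", "5", "--mode", "dynamic", "--alpha", "0",
                     "--mb_relabeling_ratio", "0.8", "--no_mgsl", "True"],
    "mher_no_mbr": ["--n_step", "5", "--mode", "dynamic",
                    "--mb_relabeling_ratio", "0.8", "--no_mb_relabel", "True"],
    "ddpg": ["--noher", "True"],
    "her": [],
    "gcsl": ["--mode", "supervised"],
}


def build_command(variant, env="Point2DLargeEnv-v1"):
    """Return the argument list for one variant (raises KeyError if unknown)."""
    return (["python", "-m", "mher.run", "--env=" + env]
            + COMMON_FLAGS + VARIANT_FLAGS[variant])


if __name__ == "__main__":
    # Print each command instead of launching it, so the sweep can be reviewed first.
    for name in VARIANT_FLAGS:
        print(name + ":", " ".join(build_command(name)))
```

Each returned list can be passed to `subprocess.run(cmd, check=True)` to launch the run; keeping the arguments as a list avoids shell quoting issues.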