The purpose of this project is to construct a streaming event pipeline around Apache Kafka and its ecosystem. Using public data from the Chicago Transit Authority, we will build a pipeline that simulates and displays the status of train lines in real time.

The following are required to complete this project:

- Docker

- Python 3.7

- A computer with at least 16 GB of RAM and a 4-core CPU to run the simulation

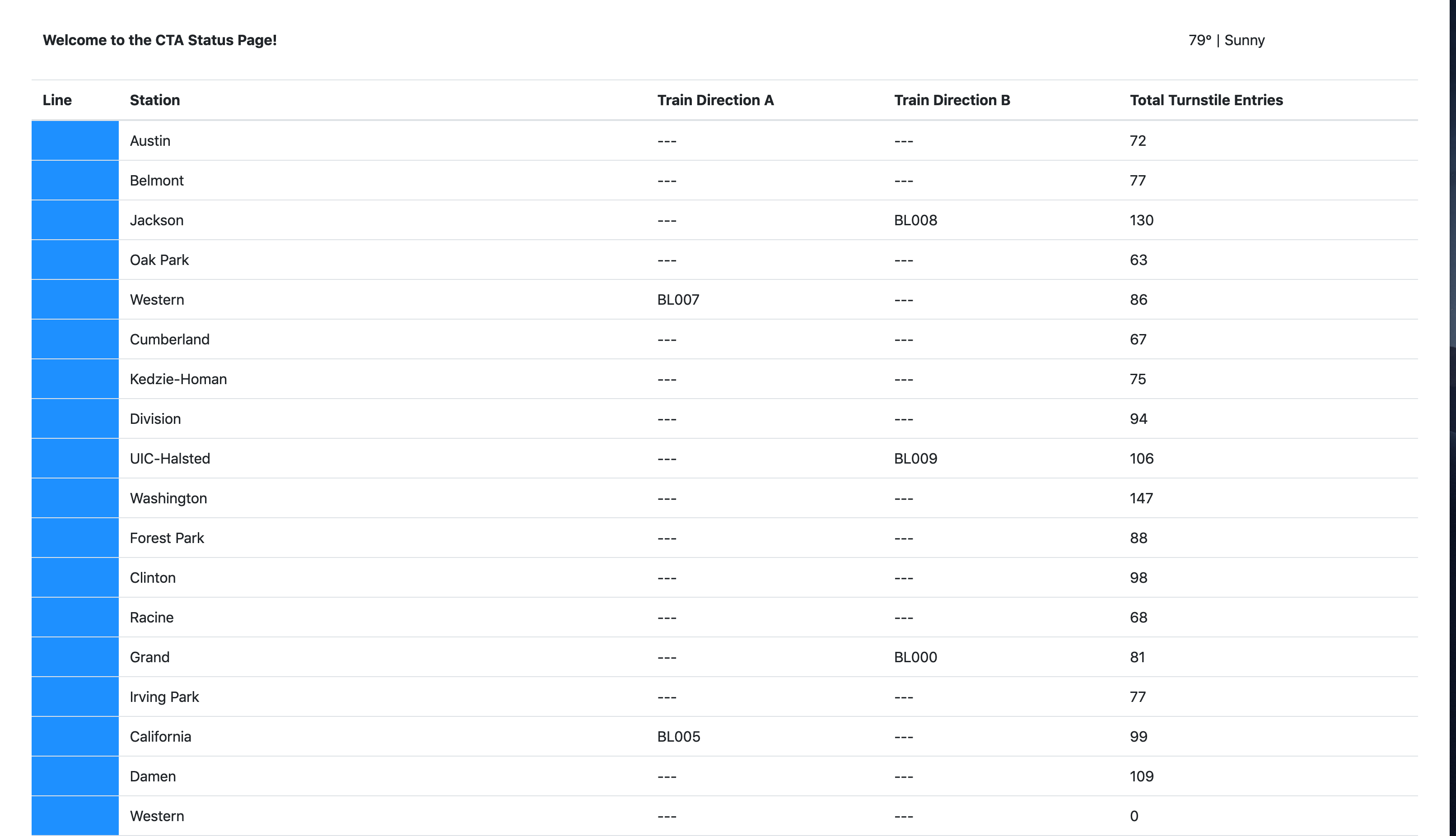

The Chicago Transit Authority (CTA) has asked us to develop a dashboard displaying system status for its commuters. We have decided to use Kafka and ecosystem tools like REST Proxy and Kafka Connect to accomplish this task.

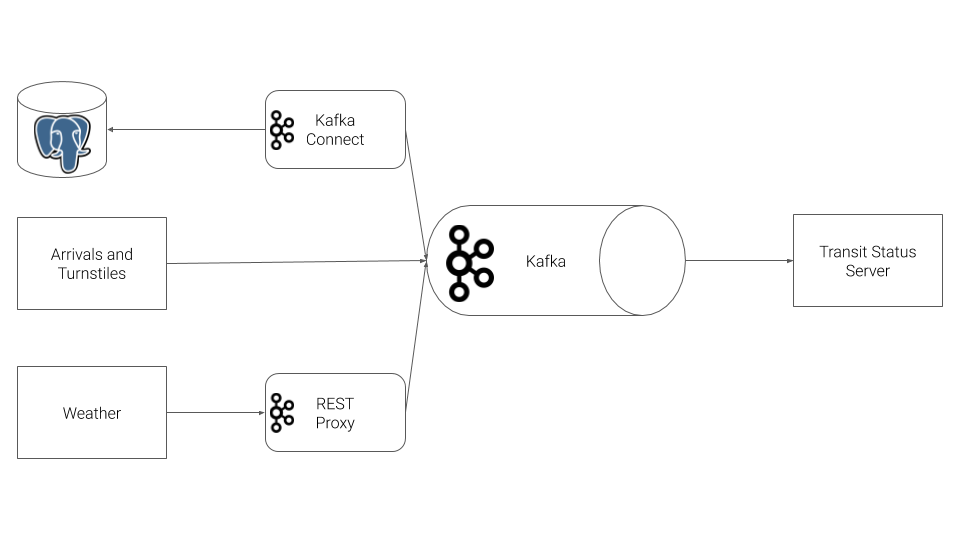

Our architecture will look like so:

This project explores and demonstrates the various components of the Kafka ecosystem.

Station data is streamed from PostgreSQL into a Kafka topic using the Kafka Connect JDBC Source connector and then processed with Faust.
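In Faust, an agent is an async function that iterates over a topic's stream (`@app.agent(topic)` with `async for value in stream`), so the per-record work can be written as plain Python. The sketch below is a hedged illustration, not the project's code: it assumes hypothetical station columns (`stop_id`, `station_name`, `order`, and boolean `red`/`blue`/`green` flags) and collapses the color flags into a single `line` field.

```python
from typing import Any, Dict, Optional

LINE_FLAGS = ("red", "blue", "green")  # hypothetical boolean columns, one per line color


def transform_station(row: Dict[str, Any]) -> Dict[str, Any]:
    """Collapse one raw station row into a simplified record.

    The first line flag that is set becomes the `line` field;
    if no flag is set, `line` is None.
    """
    line: Optional[str] = next((color for color in LINE_FLAGS if row.get(color)), None)
    return {
        "station_id": row["stop_id"],  # hypothetical source column name
        "station_name": row["station_name"],
        "order": row["order"],
        "line": line,
    }
```

Inside a Faust agent, the loop body would call `transform_station(value)` and forward the result to an output topic, for example with `await output_topic.send(value=...)`.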

Weather data is streamed into a Kafka topic using the Kafka REST proxy.
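REST Proxy accepts Avro records over plain HTTP: a `POST` to `/topics/<topic>` with content type `application/vnd.kafka.avro.v2+json` and a body holding the schema (as a JSON string) plus a list of records. The sketch below only builds that request with the standard library. The topic name `weather.example` and the two-field weather schema are hypothetical assumptions, not the project's actual definitions.

```python
import json
import urllib.request

REST_PROXY_URL = "http://localhost:8082"  # Host URL from the service table
TOPIC = "weather.example"  # hypothetical topic name

# Hypothetical Avro value schema for one weather reading.
VALUE_SCHEMA = {
    "type": "record",
    "name": "weather_value",
    "fields": [
        {"name": "temperature", "type": "float"},
        {"name": "status", "type": "string"},
    ],
}


def build_weather_request(temperature: float, status: str) -> urllib.request.Request:
    """Build (but do not send) a REST Proxy v2 Avro produce request."""
    body = {
        # REST Proxy expects the schema itself as a JSON-encoded string.
        "value_schema": json.dumps(VALUE_SCHEMA),
        "records": [{"value": {"temperature": temperature, "status": status}}],
    }
    return urllib.request.Request(
        f"{REST_PROXY_URL}/topics/{TOPIC}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/vnd.kafka.avro.v2+json"},
        method="POST",
    )
```

Once the Docker Compose stack is up, passing the request to `urllib.request.urlopen` would send it.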

Turnstile data is summarized for each station and placed into a table using KSQL.
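Conceptually, a per-station turnstile summary is a running `COUNT(*) ... GROUP BY station_id` over the stream of turnstile events. The plain-Python model below shows that aggregation with `collections.Counter`. It assumes each event carries a hypothetical `station_id` field, and unlike KSQL it processes a finite list rather than an unbounded, continuously updated stream.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Mapping


def summarize_turnstiles(events: Iterable[Mapping[str, Any]]) -> Dict[Any, int]:
    """Count turnstile events per station, like
    SELECT station_id, COUNT(*) ... GROUP BY station_id."""
    counts: Counter = Counter()
    for event in events:
        # Each event is assumed (hypothetically) to carry a station_id field.
        counts[event["station_id"]] += 1
    return dict(counts)


if __name__ == "__main__":
    sample = [{"station_id": 1}, {"station_id": 2}, {"station_id": 1}]
    print(summarize_turnstiles(sample))  # {1: 2, 2: 1}
```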

Arrival and turnstile data are streamed into Kafka topics using Kafka producers.
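Before a producer sends an event, it turns a key and a value into bytes. A client library then handles delivery. The sketch below covers only the serialization half, for a hypothetical arrival event whose field names are assumptions. JSON is used here for readability. A setup built around the Schema Registry would typically serialize with Avro instead.

```python
import json
import time
from typing import Optional, Tuple


def serialize_arrival(station_id: int, train_id: str, direction: str, line: str,
                      timestamp_ms: Optional[int] = None) -> Tuple[bytes, bytes]:
    """Return (key, value) bytes for a hypothetical arrival event.

    The key is the station id, so Kafka's default partitioner routes every
    event for a given station to the same partition, keeping them in order.
    """
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)  # event time in epoch milliseconds
    key = str(station_id).encode("utf-8")
    value = json.dumps({
        "station_id": station_id,
        "train_id": train_id,
        "direction": direction,
        "line": line,
        "timestamp": timestamp_ms,
    }).encode("utf-8")
    return key, value
```

With the `confluent-kafka` client, the pair would be handed over as `producer.produce(topic, key=key, value=value)`.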

To run the simulation, you must first start up the Kafka ecosystem on your machine using Docker Compose:

```bash
docker-compose up
```

Docker Compose can take 3-5 minutes to start, depending on your hardware. Please be patient and wait for the docker-compose logs to slow down or stop before starting the simulation.
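Instead of watching the logs, you could poll the HTTP services until each one answers. The sketch below is a hedged helper, not part of the project. It treats any HTTP response, even an error status, as "up", and the 600-second timeout and 10-second interval are arbitrary assumptions. The URLs are the Host URLs from the service table below.

```python
import http.client
import time
import urllib.error
import urllib.request
from typing import Callable, Iterable, List

# HTTP Host URLs from the service table.
DEFAULT_URLS = [
    "http://localhost:8081",  # Schema Registry
    "http://localhost:8082",  # REST Proxy
    "http://localhost:8083",  # Kafka Connect
    "http://localhost:8088",  # KSQL
]


def http_ok(url: str, timeout: float = 2.0) -> bool:
    """Return True if anything at `url` answers with an HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        return True  # the server answered, just with an error status
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        return False  # connection refused, timed out, or reset


def wait_for_services(urls: Iterable[str],
                      check: Callable[[str], bool] = http_ok,
                      timeout_s: float = 600.0,
                      interval_s: float = 10.0) -> List[str]:
    """Poll until every URL passes `check` or `timeout_s` elapses.

    Returns the URLs that never became ready (an empty list means all are up).
    """
    pending = list(urls)
    deadline = time.monotonic() + timeout_s
    while pending:
        pending = [url for url in pending if not check(url)]
        if not pending or time.monotonic() >= deadline:
            break
        time.sleep(interval_s)
    return pending
```

Calling `wait_for_services(DEFAULT_URLS)` returns an empty list once every listed service responds. The `check` parameter lets you swap in a different readiness test.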

Once docker-compose is ready, the following services will be available:

| Service | Host URL | Docker URL | Username | Password |
|---|---|---|---|---|
| Public Transit Status | http://localhost:8888 | n/a | | |
| Kafka Connect UI | http://localhost:8084 | http://connect-ui:8084 | | |
| Kafka Topics UI | http://localhost:8085 | http://topics-ui:8085 | | |
| Schema Registry UI | http://localhost:8086 | http://schema-registry-ui:8086 | | |
| Kafka | PLAINTEXT://localhost:9092,PLAINTEXT://localhost:9093,PLAINTEXT://localhost:9094 | PLAINTEXT://kafka0:9092,PLAINTEXT://kafka1:9093,PLAINTEXT://kafka2:9094 | | |
| REST Proxy | http://localhost:8082 | http://rest-proxy:8082/ | | |
| Schema Registry | http://localhost:8081 | http://schema-registry:8081/ | | |
| Kafka Connect | http://localhost:8083 | http://kafka-connect:8083 | | |
| KSQL | http://localhost:8088 | http://ksql:8088 | | |
| PostgreSQL | jdbc:postgresql://localhost:5432/cta | jdbc:postgresql://postgres:5432/cta | cta_admin | chicago |

Note that to access these services from your own machine, you will always use the Host URL column.

When configuring services that run within Docker Compose, such as Kafka Connect, you must use the Docker URL. For example, when you configure the JDBC Source Kafka Connector, use the value from the Docker URL column.
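Registering a JDBC source connector uses both columns. The request goes from your machine to Kafka Connect's Host URL (`POST /connectors`). The `connection.url` inside the config is the Docker URL, because Kafka Connect opens that connection from inside Docker Compose. The sketch below only builds the request. The connector name, the `stations` table, the `stop_id` column, and the topic prefix are hypothetical assumptions. The credentials come from the service table.

```python
import json
import urllib.request

CONNECT_URL = "http://localhost:8083/connectors"  # Host URL: the request leaves your machine
CONNECTOR_NAME = "stations-jdbc-source"  # hypothetical connector name


def build_jdbc_source_request() -> urllib.request.Request:
    """Build (but do not send) a Kafka Connect request that creates a JDBC source."""
    config = {
        "connector.class": "io.confluent.connect.jdbc.JdbcSourceConnector",
        # Docker URL: Kafka Connect itself runs inside Docker Compose.
        "connection.url": "jdbc:postgresql://postgres:5432/cta",
        "connection.user": "cta_admin",
        "connection.password": "chicago",
        "table.whitelist": "stations",          # hypothetical table name
        "mode": "incrementing",                 # only fetch rows with a new, higher id
        "incrementing.column.name": "stop_id",  # hypothetical id column
        "topic.prefix": "example.",             # hypothetical; topic becomes example.stations
        "value.converter": "org.apache.kafka.connect.json.JsonConverter",
        "value.converter.schemas.enable": "false",
    }
    body = {"name": CONNECTOR_NAME, "config": config}
    return urllib.request.Request(
        CONNECT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Sending the request with `urllib.request.urlopen` creates the connector. Kafka Connect responds with `409 Conflict` if a connector with the same name already exists.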

There are two pieces to the simulation: the producer and the consumer.

To run the producer:

```bash
cd producers
virtualenv venv
. venv/bin/activate
pip install -r requirements.txt
python simulation.py
```

Once the simulation is running, you may hit Ctrl+C at any time to exit.
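To confirm that the producer is writing data, you can list topics through REST Proxy, whose v2 `GET /topics` endpoint returns a JSON array of topic names. The sketch below is a hedged helper, not part of the project. The prefix you filter on is an assumption that depends on how the producers name their topics, and the fetch function is injectable so the filtering can be tried without a running cluster.

```python
import json
import urllib.request
from typing import Callable, List

REST_PROXY_TOPICS_URL = "http://localhost:8082/topics"  # Host URL from the service table


def fetch_topics(url: str = REST_PROXY_TOPICS_URL) -> List[str]:
    """GET the topic list from REST Proxy (the v2 API returns a JSON array of names)."""
    req = urllib.request.Request(url, headers={"Accept": "application/vnd.kafka.v2+json"})
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


def matching_topics(prefix: str, fetch: Callable[[], List[str]] = fetch_topics) -> List[str]:
    """Return the sorted topic names that start with `prefix`."""
    return sorted(name for name in fetch() if name.startswith(prefix))
```

With the stack running, `matching_topics("<your topic prefix>")` should return a non-empty list once the simulation has produced events.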

To run the Faust stream processing application:

```bash
cd consumers
virtualenv venv
. venv/bin/activate
pip install -r requirements.txt
faust -A faust_stream worker -l info
```

To run the KSQL script:

```bash
cd consumers
virtualenv venv
. venv/bin/activate
pip install -r requirements.txt
python ksql.py
```
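The contents of `ksql.py` are not shown here. One way to run KSQL statements from Python is to `POST` them to the KSQL server's `/ksql` endpoint, and the sketch below builds such a request. The topic name, column list, and Avro value format are hypothetical assumptions, and exact KSQL syntax can differ between KSQL versions. Declaring the raw turnstile topic as a `STREAM` and aggregating it into a `TABLE` yields one continuously updated count per station.

```python
import json
import urllib.request

KSQL_URL = "http://localhost:8088/ksql"  # Host URL from the service table

# Hypothetical topic and columns; adjust to the turnstile topic your producers write.
KSQL_STATEMENTS = """
CREATE STREAM turnstile (station_id INT, station_name VARCHAR, line VARCHAR)
    WITH (KAFKA_TOPIC='example.turnstile', VALUE_FORMAT='AVRO');

CREATE TABLE turnstile_summary
    WITH (VALUE_FORMAT='JSON') AS
    SELECT station_id, COUNT(*) AS entries
    FROM turnstile
    GROUP BY station_id;
"""


def build_ksql_request(statements: str = KSQL_STATEMENTS) -> urllib.request.Request:
    """Build (but do not send) a request that submits statements to KSQL."""
    body = {
        "ksql": statements,
        # Read the source topic from the beginning so earlier events are counted.
        "streamsProperties": {"ksql.streams.auto.offset.reset": "earliest"},
    }
    return urllib.request.Request(
        KSQL_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/vnd.ksql.v1+json; charset=utf-8",
            "Accept": "application/vnd.ksql.v1+json",
        },
        method="POST",
    )
```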

NOTE: Do not run the consumer until you have reached Step 6!

To run the consumer:

```bash
cd consumers
virtualenv venv
. venv/bin/activate
pip install -r requirements.txt
python server.py
```

Once the server is running, you may hit Ctrl+C at any time to exit.