👇 Why this guide can take your testing skills to the next level

📗 45+ best practices: Super-comprehensive and exhaustive

This is a guide for JavaScript & Node.js reliability from A-Z. It summarizes and curates for you dozens of the best blog posts, books and tools the market has to offer

🚢 Advanced: Goes 10,000 miles beyond the basics

Hop on a journey that travels way beyond the basics into advanced topics like testing in production, mutation testing, property-based testing and many other strategic & professional tools. Should you read every word in this guide, your testing skills are likely to go way above the average

🌐 Full-stack: front, backend, CI, anything

Start by understanding the ubiquitous testing practices that are the foundation for any application tier. Then, delve into your area of choice: frontend/UI, backend, CI or maybe all of them?

Written By Yoni Goldberg

- A JavaScript & Node.js consultant

- 👨🏫 My testing workshop - learn about my workshops in Europe & US

- Follow me on Twitter

- Come hear me speak at LA, Verona, Kharkiv, free webinar. Future events TBD

- My JavaScript Quality newsletter - insights and content only on strategic matters

Table of contents

- Section 0: The Golden Rule

  A single piece of advice that inspires all the others (1 special bullet)

- Section 1: The Test Anatomy

  The foundation - structuring clean tests (12 bullets)

- Section 2: Backend

  Writing backend and Microservices tests efficiently (8 bullets)

- Section 3: Frontend, UI, E2E

  Writing tests for web UI including component and E2E tests (11 bullets)

- Section 4: Measuring Tests Effectiveness

  Watching the watchman - measuring test quality (4 bullets)

- Section 5: Continuous Integration

  Guidelines for CI in the JS world (9 bullets)

Section 0️⃣ : The Golden Rule

⚪️ 0. The Golden Rule: Design for lean testing

Our minds are already full of the main production code; we don't have 'headspace' for additional complexity. Should we try to squeeze yet another challenging piece of code into our poor brain, it will slow the team down, which works against the reason we do testing. In practice, this is where many teams just abandon testing.

The tests are an opportunity for something else: a friendly and smiley assistant, one that is delightful to work with and delivers great value for such a small investment. Science tells us we have two brain systems: system 1, which is used for effortless activities like driving a car on an empty road, and system 2, which is meant for complex and conscious operations like solving a math equation. Design your tests for system 1: looking at test code should feel as easy as modifying an HTML document and not like solving 2X(17 × 24).

This can be achieved by selectively cherry-picking techniques, tools and test targets that are cost-effective and provide great ROI. Test only as much as needed and strive to keep it nimble; sometimes it's even worth dropping some tests and trading reliability for agility and simplicity.

Most of the advice below is derived from this principle.

Ready to start?

Section 1. The Test Anatomy

⚪ ️ 1.1 Include 3 parts in each test name

(1) What is being tested? For example, the ProductsService.addNewProduct method

(2) Under what circumstances and scenario? For example, no price is passed to the method

(3) What is the expected result? For example, the new product is not approved

✏ Code Examples

👏 Doing It Right Example: A test name that constitutes 3 parts

//1. unit under test

describe('Products Service', function() {

describe('Add new product', function() {

//2. scenario and 3. expectation

it('When no price is specified, then the product status is pending approval', ()=> {

const newProduct = new ProductService().add(...);

expect(newProduct.status).to.equal('pendingApproval');

});

});

});

⚪ ️ 1.2 Structure tests by the AAA pattern

✅ Do: Structure your tests with 3 well-separated sections Arrange, Act & Assert (AAA). Following this structure guarantees that the reader spends no brain CPU on understanding the test plan:

1st A - Arrange: All the setup code to bring the system to the scenario the test aims to simulate. This might include instantiating the unit under test constructor, adding DB records, mocking/stubbing on objects and any other preparation code

2nd A - Act: Execute the unit under test. Usually 1 line of code

3rd A - Assert: Ensure that the received value satisfies the expectation. Usually 1 line of code

❌ Otherwise: Not only do you spend long hours every day understanding the main code, but now what should have been the simple part of the day (testing) stretches your brain as well

✏ Code Examples

👏 Doing It Right Example: A test structured with the AAA pattern

describe('Customer classifier', () => {

test('When customer spent more than 500$, should be classified as premium', () => {

//Arrange

const customerToClassify = {spent:505, joined: new Date(), id:1}

const DBStub = sinon.stub(dataAccess, "getCustomer")

.returns({id:1, classification: 'regular'});

//Act

const receivedClassification = customerClassifier.classifyCustomer(customerToClassify);

//Assert

expect(receivedClassification).toMatch('premium');

});

});

👎 Anti Pattern Example: No separation, one bulk, harder to interpret

test('Should be classified as premium', () => {

const customerToClassify = {spent:505, joined: new Date(), id:1}

const DBStub = sinon.stub(dataAccess, "getCustomer")

.returns({id:1, classification: 'regular'});

const receivedClassification = customerClassifier.classifyCustomer(customerToClassify);

expect(receivedClassification).toMatch('premium');

});

⚪ ️1.3 Describe expectations in a product language: use BDD-style assertions

✅ Do: Coding your tests in a declarative style allows the reader to grasp the intent instantly without spending even a single brain-CPU cycle. When you write imperative code that is packed with conditional logic, the reader is forced into an effortful mental mode. In that sense, code the expectation in a human-like language, declarative BDD style, using expect or should and not custom code. If Chai & Jest don’t include the desired assertion and it’s highly repeatable, consider extending the Jest matchers (Jest) or writing a custom Chai plugin
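As a rough illustration of the "extend the matcher" option, here is a minimal sketch of a matcher function in the shape that Jest's expect.extend() accepts (a function that receives the actual value and returns an object with pass and message). The matcher name toContainOnlyAdmins and the sample data are hypothetical and not part of the original guide. The function is called directly at the end so the snippet also runs outside Jest.

```javascript
// Matcher body in the shape Jest's expect.extend() accepts:
// it receives the actual value first and returns { pass, message }.
// The name "toContainOnlyAdmins" is a hypothetical example.
function toContainOnlyAdmins(receivedUsers, expectedAdmins) {
  const unexpected = receivedUsers.filter((user) => !expectedAdmins.includes(user));
  const missing = expectedAdmins.filter((admin) => !receivedUsers.includes(admin));
  const pass = unexpected.length === 0 && missing.length === 0;
  return {
    pass,
    message: () =>
      pass
        ? `expected users not to be exactly the admins [${expectedAdmins.join(', ')}]`
        : `unexpected users: [${unexpected.join(', ')}], missing admins: [${missing.join(', ')}]`,
  };
}

// Register the matcher only when running under Jest, where `expect` is a global
if (typeof expect !== 'undefined' && typeof expect.extend === 'function') {
  expect.extend({ toContainOnlyAdmins });
}

// Called directly here to show the result object the matcher produces
const result = toContainOnlyAdmins(['admin1', 'user1'], ['admin1', 'admin2']);
console.log(result.pass, result.message());
```

Inside a Jest test, the repeated imperative loop could then shrink to a single line such as expect(allAdmins).toContainOnlyAdmins(['admin1', 'admin2']).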

✏ Code Examples

👎 Anti Pattern Example: The reader must skim through not so short, and imperative code just to get the test story

test("When asking for an admin, ensure only ordered admins in results", () => {

//assuming we've added here two admins "admin1", "admin2" and "user1"

const allAdmins = getUsers({adminOnly:true});

let admin1Found = false, admin2Found = false;

allAdmins.forEach(aSingleUser => {

if(aSingleUser === "user1"){

assert.notEqual(aSingleUser, "user1", "A user was found and not admin");

}

if(aSingleUser==="admin1"){

admin1Found = true;

}

if(aSingleUser==="admin2"){

admin2Found = true;

}

});

if(!admin1Found || !admin2Found ){

throw new Error("Not all admins were returned");

}

});

👏 Doing It Right Example: Skimming through the following declarative test is a breeze

it("When asking for an admin, ensure only ordered admins in results", () => {

//assuming we've added here two admins

const allAdmins = getUsers({adminOnly:true});

expect(allAdmins).to.include.ordered.members(["admin1" , "admin2"])

.but.not.include.ordered.members(["user1"]);

});

⚪ ️ 1.4 Stick to black-box testing: Test only public methods

✏ Code Examples

👎 Anti Pattern Example: A test case is testing the internals for no good reason

class ProductService{

//this method is only used internally

//Changing this name will make the tests fail

calculateVATAdd(priceWithoutVAT){

return {finalPrice: priceWithoutVAT * 1.2};

//Changing the result format or key name above will make the tests fail

}

//public method

getPrice(productId){

const desiredProduct= DB.getProduct(productId);

return this.calculateVATAdd(desiredProduct.price).finalPrice;

}

}

it("White-box test: When the internal method gets 0 VAT, it returns 0 response", async () => {

//There's no requirement to allow users to calculate the VAT, only show the final price. Nevertheless we falsely insist here to test the class internals

expect(new ProductService().calculateVATAdd(0).finalPrice).to.equal(0);

});

⚪ ️ ️1.5 Choose the right test doubles: Avoid mocks in favor of stubs and spies

✅ Do: Test doubles are a necessary evil because they are coupled to the application internals, yet some provide an immense value (Read here a reminder about test doubles: mocks vs stubs vs spies). However, the various techniques were not born equal: some of them, spies and stubs, are focused on testing the requirements but as an inevitable side-effect they also slightly touch the internals. Mocks, on the contrary side, are focused on testing the internals — this brings huge overhead as explained in the bullet “Stick to black box testing”.

Before using test doubles, ask a very simple question: Do I use it to test functionality that appears, or could appear, in the requirements document? If no, it’s a smell of white-box testing.

For example, if you want to test that your app behaves reasonably when the payment service is down, you might stub the payment service and trigger some ‘No Response’ return to ensure that the unit under test returns the right value. This checks our application behavior/response/outcome under certain scenarios. You might also use a spy to assert that an email was sent when that service is down. This is again a behavioral check which is likely to appear in a requirements doc (“Send an email if payment couldn’t be saved”). On the flip side, if you mock the Payment service and ensure that it was called with the right JavaScript types, then your test is focused on internal things that have nothing to do with the application functionality and are likely to change frequently

❌ Otherwise: Any refactoring of code mandates searching for all the mocks in the code and updating accordingly. Tests become a burden rather than a helpful friend

✏ Code Examples

👎 Anti-pattern example: Mocks focus on the internals

it("When a valid product is about to be deleted, ensure data access DAL was called once, with the right product and right config", async () => {

//Assume we already added a product

const dataAccessMock = sinon.mock(DAL);

//hmmm BAD: testing the internals is actually our main goal here, not just a side-effect

dataAccessMock.expects("deleteProduct").once().withArgs(DBConfig, theProductWeJustAdded, true, false);

new ProductService().deletePrice(theProductWeJustAdded);

dataAccessMock.verify();

});

👏 Doing It Right Example: spies are focused on testing the requirements but as a side-effect unavoidably touch the internals

it("When a valid product is about to be deleted, ensure an email is sent", async () => {

//Assume we already added here a product

const spy = sinon.spy(Emailer.prototype, "sendEmail");

new ProductService().deletePrice(theProductWeJustAdded);

//hmmm OK: we deal with internals? Yes, but as a side effect of testing the requirements (sending an email)

expect(spy.calledOnce).to.be.true;

});

⚪ ️1.6 Don’t “foo”, use realistic input data

✏ Code Examples

👎 Anti-Pattern Example: A test suite that passes due to non-realistic data

const addProduct = (name, price) =>{

const productNameRegexNoSpace = /^\S*$/;//no white-space allowed

if(!productNameRegexNoSpace.test(name))

return false;//this path never reached due to dull input

//some logic here

return true;

};

test("Wrong: When adding new product with valid properties, get successful confirmation", async () => {

//The string "Foo" which is used in all tests never triggers a false result

const addProductResult = addProduct("Foo", 5);

expect(addProductResult).toBe(true);

//False positive: the operation succeeded because we never tried with long

//product name including spaces

});

👏 Doing It Right Example: Randomizing realistic input

it("Better: When adding new valid product, get successful confirmation", async () => {

const addProductResult = addProduct(faker.commerce.productName(), faker.random.number());

//Generated random input: {'Sleek Cotton Computer', 85481}

expect(addProductResult).to.be.true;

//Test failed, the random input triggered some path we never planned for.

//We discovered a bug early!

});

⚪ ️ 1.7 Test many input combinations using Property-based testing

❌ Otherwise: Unconsciously, you choose the test inputs that cover only code paths that work well. Unfortunately, this decreases the efficiency of testing as a vehicle to expose bugs

✏ Code Examples

👏 Doing It Right Example: Testing many input permutations with “mocha-testcheck”

require('mocha-testcheck').install();

const {expect} = require('chai');

const faker = require('faker');

describe('Product service', () => {

describe('Adding new', () => {

//this will run 100 times with different random properties

check.it('Add new product with random yet valid properties, always successful',

gen.int, gen.string, (id, name) => {

expect(addNewProduct(id, name).status).to.equal('approved');

});

})

});

⚪ ️ 1.8 If needed, use only short & inline snapshots

On the other hand, ‘classic snapshot’ tutorials and tools encourage storing big files (e.g. component rendering markup, API JSON result) on some external medium and comparing the received result with the saved version on every test run. This can, for example, implicitly couple our test to 1000 lines with 3000 data values that the test writer never read or reasoned about. Why is this wrong? Because there are now 1000 reasons for your test to fail: it’s enough for a single line to change for the snapshot to become invalid, and this is likely to happen a lot. How frequently? For every space, comment or minor CSS/HTML change. On top of this, the test name doesn’t give a clue about the failure, as it just checks that 1000 lines didn’t change, and it encourages the test writer to accept as the desired truth a long document they couldn’t inspect and verify. All of these are symptoms of an obscure and eager test that is not focused and aims to achieve too much

It’s worth noting that there are few cases where long & external snapshots are acceptable - when asserting on schema and not data (extracting out values and focusing on fields) or when the received document rarely changes

❌ Otherwise: A UI test fails. The code seems right, the screen renders perfect pixels, so what happened? Your snapshot testing just found a difference between the original document and the currently received one: a single space character was added to the markup...

✏ Code Examples

👎 Anti-Pattern Example: Coupling our test to unseen 2000 lines of code

it('TestJavaScript.com is rendered correctly', () => {

//Arrange

//Act

const receivedPage = renderer

.create(<DisplayPage page="http://www.testjavascript.com">Test JavaScript</DisplayPage>)

.toJSON();

//Assert

expect(receivedPage).toMatchSnapshot();

//We now implicitly maintain a 2000 lines long document

//every additional line break or comment - will break this test

});

👏 Doing It Right Example: Expectations are visible and focused

it('When visiting TestJavaScript.com home page, a menu is displayed', () => {

//Arrange

//Act

const receivedPage = renderer

.create(<DisplayPage page="http://www.testjavascript.com">Test JavaScript</DisplayPage>)

.toJSON();

//Assert

const menu = receivedPage.content.menu;

expect(menu).toMatchInlineSnapshot(`

<ul>

<li>Home</li>

<li> About </li>

<li> Contact </li>

</ul>

`);

});

⚪ ️1.9 Avoid global test fixtures and seeds, add data per-test

❌ Otherwise: A few tests fail, a deployment is aborted, and our team is now going to spend precious time: do we have a bug? Let’s investigate. Oh no, it seems that two tests were mutating the same seed data

✏ Code Examples

👎 Anti Pattern Example: tests are not independent and rely on some global hook to feed global DB data

before(async () => {

//adding sites and admins data to our DB. Where is the data? outside. At some external json or migration framework

await DB.AddSeedDataFromJson('seed.json');

});

it("When updating site name, get successful confirmation", async () => {

//I know that site name "portal" exists - I saw it in the seed files

const siteToUpdate = await SiteService.getSiteByName("Portal");

const updateNameResult = await SiteService.changeName(siteToUpdate, "newName");

expect(updateNameResult).to.be.true;

});

it("When querying by site name, get the right site", async () => {

//I know that site name "portal" exists - I saw it in the seed files

const siteToCheck = await SiteService.getSiteByName("Portal");

expect(siteToCheck.name).to.be.equal("Portal"); //Failure! The previous test changed the name :[

});

👏 Doing It Right Example: We can stay within the test, each test acts on its own set of data

it("When updating site name, get successful confirmation", async () => {

//test is adding a fresh new records and acting on the records only

const siteUnderTest = await SiteService.addSite({

name: "siteForUpdateTest"

});

const updateNameResult = await SiteService.changeName(siteUnderTest, "newName");

expect(updateNameResult).to.be.true;

});

⚪ ️ 1.10 Don’t catch errors, expect them

✅ Do: When trying to assert that some input triggers an error, it might look right to use try-catch-finally and assert that the catch clause was entered. The result is an awkward and verbose test case (example below) that hides the simple test intent and the result expectations

A more elegant alternative is using the one-line dedicated Chai assertion: expect(method).to.throw (or in Jest: expect(method).toThrow()). It’s absolutely mandatory to also ensure the exception contains a property that tells the error type; otherwise, given just a generic error, the application won’t be able to do much other than show a disappointing message to the user

❌ Otherwise: It will be challenging to infer from the test reports (e.g. CI reports) what went wrong

✏ Code Examples

👎 Anti-pattern Example: A long test case that tries to assert the existence of error with try-catch

it("When no product name, it throws error 400", async() => {

let errorWeExceptFor = null;

try {

const result = await addNewProduct({name:'nest'});}

catch (error) {

expect(error.code).to.equal('InvalidInput');

errorWeExceptFor = error;

}

expect(errorWeExceptFor).not.to.be.null;

//if this assertion fails, the tests results/reports will only show

//that some value is null, there won't be a word about a missing Exception

});

👏 Doing It Right Example: A human-readable expectation that could be understood easily, maybe even by QA or technical PM

it("When no product name, it throws error 400", async() => {

expect(addNewProduct).to.eventually.throw(AppError).with.property('code', "InvalidInput");

});

⚪ ️ 1.11 Tag your tests

✏ Code Examples

👏 Doing It Right Example: Tagging tests as ‘#cold-test’ allows the test runner to execute only fast tests (Cold===quick tests that are doing no IO and can be executed frequently even as the developer is typing)

//this test is fast (no DB) and we're tagging it correspondingly

//now the user/CI can run it frequently

describe('Order service', function() {

describe('Add new order #cold-test #sanity', function() {

test('Scenario - no currency was supplied. Expectation - Use the default currency #sanity', function() {

//code logic here

});

});

});
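Tags placed in suite and test names only help if the runner can filter on them. With Mocha this is typically done with the --grep option (for example, mocha --grep "#cold-test"), which matches a pattern against each test's full title. The sketch below imitates that filtering on a list of titles to show which tests a tag would select; the titles and the selectByTag helper are hypothetical and only illustrate the matching, not how any runner is implemented.

```javascript
// Full titles roughly as a runner would build them: suite names followed by the test name
const fullTitles = [
  'Order service Add new order #cold-test #sanity Scenario - no currency was supplied #sanity',
  'Order service Add new order #cold-test Scenario - negative amount',
  'Order service Persist order #db Scenario - saved order can be read back',
];

// Keeps only the titles that contain the given tag
const selectByTag = (titles, tag) => titles.filter((title) => new RegExp(tag).test(title));

const coldTests = selectByTag(fullTitles, '#cold-test');
const sanityTests = selectByTag(fullTitles, '#sanity');

console.log(`cold: ${coldTests.length}, sanity: ${sanityTests.length}`);
```

Tagging the describe block, as in the example above, tags every test inside it, which is why both tests under 'Add new order' are selected by #cold-test.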

⚪ ️1.12 Other generic good testing hygiene

Learn and practice TDD principles: they are extremely valuable for many, but don’t get intimidated if they don’t fit your style, you’re not the only one. Consider writing the tests before the code in a red-green-refactor style, ensure each test checks exactly one thing, when you find a bug, write a test that will detect it in the future before fixing it, let each test fail at least once before turning green, start a module by writing quick and simplistic code that satisfies the test, then refactor gradually and take it to a production-grade level, and avoid any dependency on the environment (paths, OS, etc)

Section 2️⃣ : Backend Testing

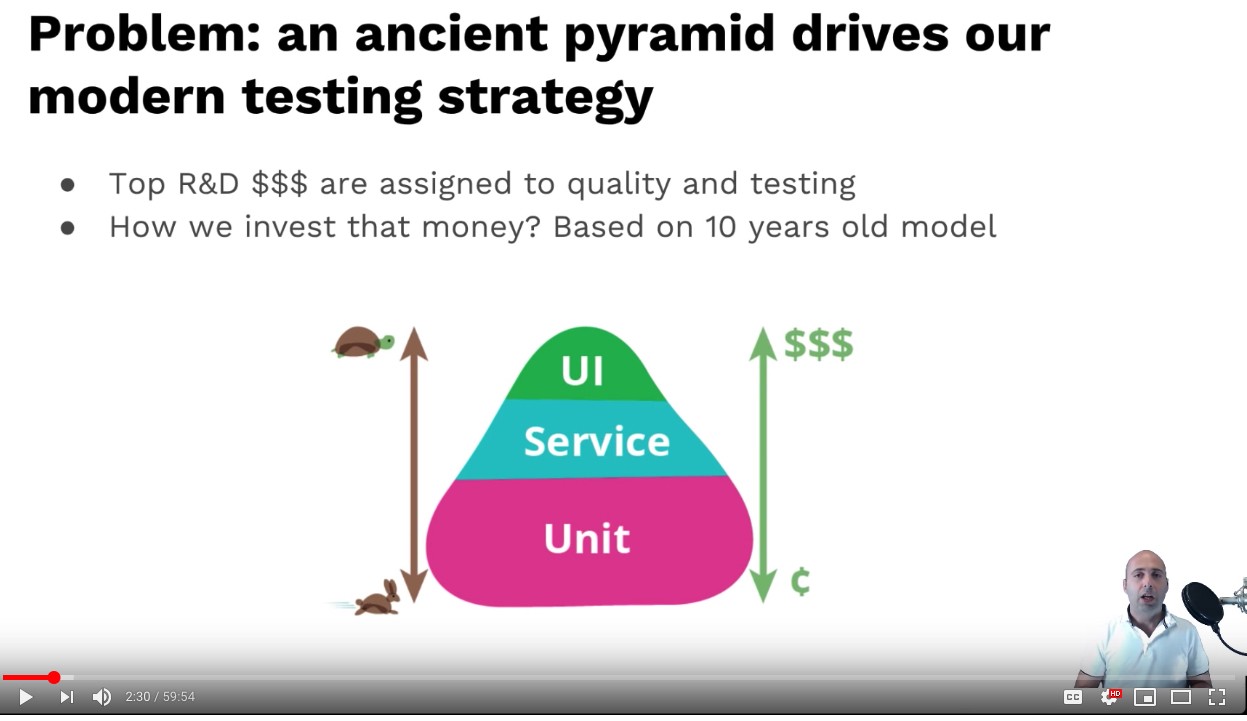

⚪ ️2.1 Enrich your testing portfolio: Look beyond unit tests and the pyramid

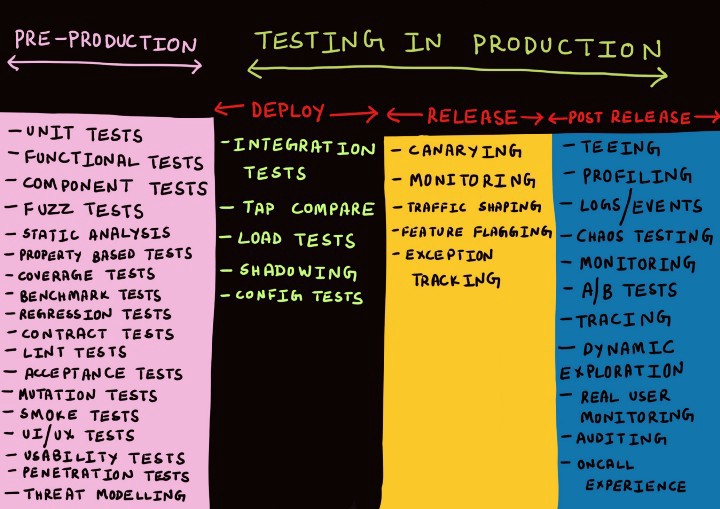

Don’t get me wrong, in 2019 the testing pyramid, TDD and unit tests are still powerful techniques and are probably the best match for many applications. Only, like any other model, despite its usefulness, it must be wrong sometimes. For example, consider an IoT application that ingests many events into a message bus like Kafka/RabbitMQ, which then flow into some data warehouse and are eventually queried by some analytics UI. Should we really spend 50% of our testing budget on writing unit tests for an application that is integration-centric and has almost no logic? As the diversity of application types increases (bots, crypto, Alexa skills), so do the chances of finding scenarios where the testing pyramid is not the best match.

It’s time to enrich your testing portfolio and become familiar with more testing types (the next bullets suggest a few ideas), mind models like the testing pyramid but also match testing types to real-world problems that you’re facing (‘Hey, our API is broken, let’s write consumer-driven contract testing!’), and diversify your tests like an investor who builds a portfolio based on risk analysis: assess where problems might arise and match some prevention measures to mitigate those potential risks

A word of caution: the TDD argument in the software world takes a typical false-dichotomy face, some preach to use it everywhere, others think it’s the devil. Everyone who speaks in absolutes is wrong :]

✏ Code Examples

👏 Doing It Right Example: Cindy Sridharan suggests a rich testing portfolio in her amazing post ‘Testing Microservices — the sane way’

⚪ ️2.2 Component testing might be your best affair

Component tests focus on the Microservice ‘unit’, they work against the API, don’t mock anything which belongs to the Microservice itself (e.g. real DB, or at least the in-memory version of that DB) but stub anything that is external like calls to other Microservices. By doing so, we test what we deploy, approach the app from outwards to inwards and gain great confidence in a reasonable amount of time.

❌ Otherwise: You may spend long days on writing unit tests to find out that you got only 20% system coverage

✏ Code Examples

👏 Doing It Right Example: Supertest allows approaching Express API in-process (fast and cover many layers)

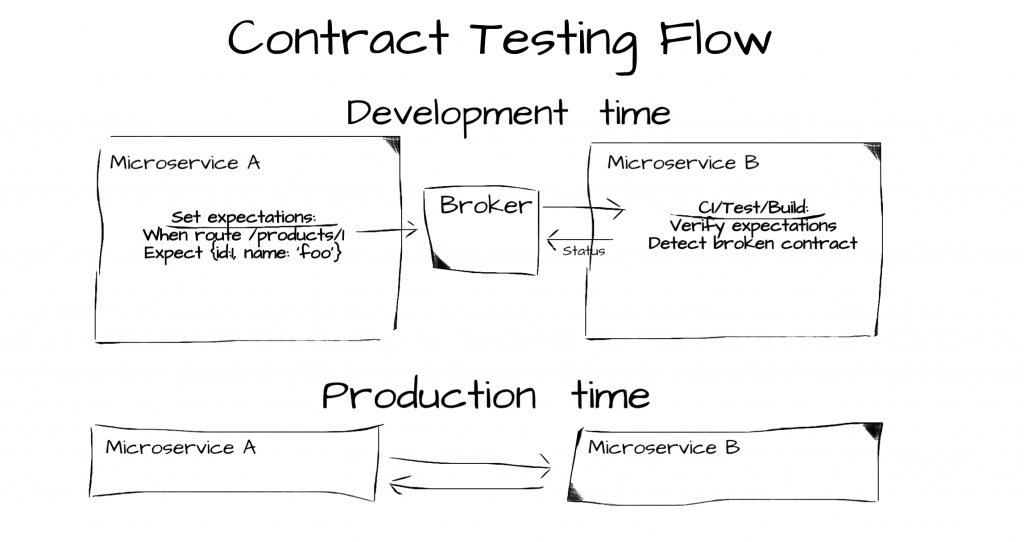

⚪ ️2.3 Ensure new releases don’t break the API using

⚪ ️ 2.4 Test your middlewares in isolation

❌ Otherwise: A bug in Express middleware === a bug in all or most requests

✏ Code Examples

👏 Doing It Right Example: Testing middleware in isolation without issuing network calls and waking-up the entire Express machine

//the middleware we want to test

const unitUnderTest = require('./middleware')

const httpMocks = require('node-mocks-http');

//Jest syntax, equivalent to describe() & it() in Mocha

test('A request without authentication header, should return http status 403', () => {

const request = httpMocks.createRequest({

method: 'GET',

url: '/user/42',

headers: {

authentication: ''

}

});

const response = httpMocks.createResponse();

unitUnderTest(request, response);

expect(response.statusCode).toBe(403);

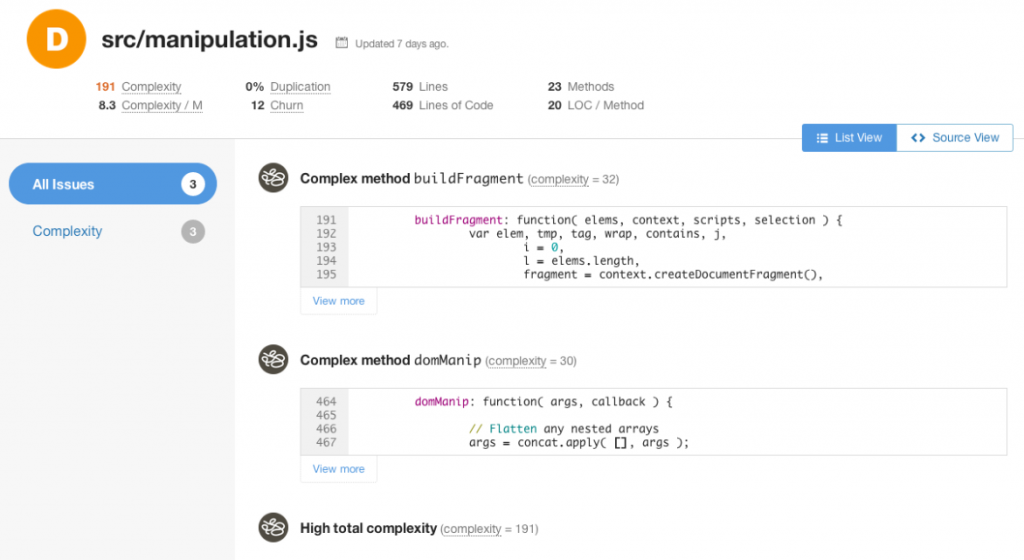

});

⚪ ️2.5 Measure and refactor using static analysis tools

Credit: Keith Holliday

❌ Otherwise: With poor code quality, bugs and performance will always be an issue that no shiny new library or state of the art features can fix

✏ Code Examples

👏 Doing It Right Example: CodeClimate, a commercial tool that can identify complex methods:

⚪ ️ 2.6 Check your readiness for Node-related chaos

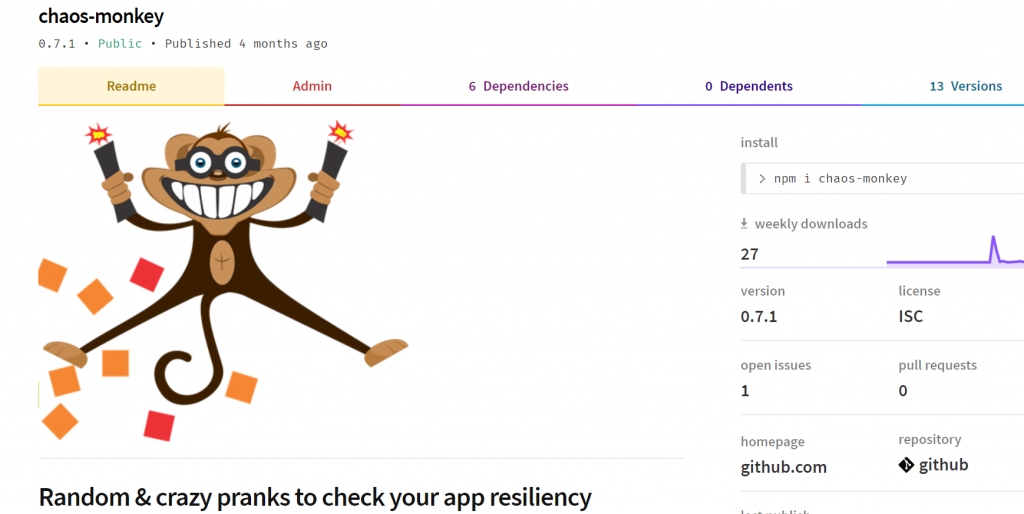

✅ Do: Weirdly, most software testing is about logic & data only, but some of the worst things that happen (and are really hard to mitigate) are infrastructural issues. For example, did you ever test what happens when your process memory is overloaded, or when the server/process dies, or whether your monitoring system notices when the API becomes 50% slower? To test and mitigate these types of bad things, chaos engineering was born at Netflix. It aims to provide awareness, frameworks and tools for testing our app’s resiliency to chaotic issues. For example, one of its famous tools, the chaos monkey, randomly kills servers to ensure that our service can still serve users and does not rely on a single server (there is also a Kubernetes version, kube-monkey, that kills pods). All these tools work on the hosting/platform level, but what if you wish to test and generate pure Node chaos, like checking how your Node process copes with uncaught errors, unhandled promise rejections, v8 memory overloaded with the max allowed of 1.7GB, or whether your UX stays satisfactory when the event loop gets blocked often? To address this I’ve written node-chaos (alpha), which provides all sorts of Node-related chaotic acts

✏ Code Examples

👏 Doing It Right Example: Node-chaos can generate all sorts of Node.js pranks so you can test how resilient your app is to chaos

⚪ ️2.7 Avoid global test fixtures and seeds, add data per-test

✏ Code Examples

👎 Anti Pattern Example: tests are not independent and rely on some global hook to feed global DB data

before(async () => {

//adding sites and admins data to our DB. Where is the data? outside. At some external json or migration framework

await DB.AddSeedDataFromJson('seed.json');

});

it("When updating site name, get successful confirmation", async () => {

//I know that site name "portal" exists - I saw it in the seed files

const siteToUpdate = await SiteService.getSiteByName("Portal");

const updateNameResult = await SiteService.changeName(siteToUpdate, "newName");

expect(updateNameResult).to.be.true;

});

it("When querying by site name, get the right site", async () => {

//I know that site name "portal" exists - I saw it in the seed files

const siteToCheck = await SiteService.getSiteByName("Portal");

expect(siteToCheck.name).to.be.equal("Portal"); //Failure! The previous test changed the name :[

});

👏 Doing It Right Example: We can stay within the test, each test acts on its own set of data

it("When updating site name, get successful confirmation", async () => {

//test is adding a fresh new records and acting on the records only

const siteUnderTest = await SiteService.addSite({

name: "siteForUpdateTest"

});

const updateNameResult = await SiteService.changeName(siteUnderTest, "newName");

expect(updateNameResult).to.be.true;

});

Section 3️⃣ : Frontend Testing

⚪ ️ 3.1. Separate UI from functionality

✏ Code Examples

👏 Doing It Right Example: Separating out the UI details

test('When users-list is flagged to show only VIP, should display only VIP members', () => {

// Arrange

const allUsers = [

{ id: 1, name: 'Yoni Goldberg', vip: false },

{ id: 2, name: 'John Doe', vip: true }

];

// Act

const { getAllByTestId } = render(<UsersList users={allUsers} showOnlyVIP={true}/>);

// Assert - Extract the data from the UI first

const allRenderedUsers = getAllByTestId('user').map(uiElement => uiElement.textContent);

const allRealVIPUsers = allUsers.filter((user) => user.vip).map((user) => user.name);

expect(allRenderedUsers).toEqual(allRealVIPUsers); //compare data with data, no UI here

});

👎 Anti Pattern Example: Assertions mix UI details and data

test('When flagging to show only VIP, should display only VIP members', () => {

// Arrange

const allUsers = [

{id: 1, name: 'Yoni Goldberg', vip: false },

{id: 2, name: 'John Doe', vip: true }

];

// Act

const { getAllByTestId } = render(<UsersList users={allUsers} showOnlyVIP={true}/>);

// Assert - Mix UI & data in assertion

expect(getAllByTestId('user')).toEqual('[<li data-testid="user">John Doe</li>]');

});

⚪ ️ 3.2 Query HTML elements based on attributes that are unlikely to change

❌ Otherwise: You want to test the login functionality that spans many components, logic and services, everything is set up perfectly - stubs, spies, Ajax calls are isolated. All seems perfect. Then the test fails because the designer changed the div CSS class from 'thick-border' to 'thin-border'

✏ Code Examples

👏 Doing It Right Example: Querying an element using a dedicated attribute for testing

// the markup code (part of React component)

<h3>

<Badge pill className="fixed_badge" variant="dark">

<span data-testid="errorsLabel">{value}</span> <!-- note the attribute data-testid -->

</Badge>

</h3>

// this example is using react-testing-library

test('Whenever no data is passed to metric, show 0 as default', () => {

// Arrange

const metricValue = undefined;

// Act

const { getByTestId } = render(<DashboardMetric value={metricValue}/>);

// Assert

expect(getByTestId('errorsLabel').textContent).toBe("0");

});

👎 Anti-Pattern Example: Relying on CSS attributes

<!-- the markup code (part of React component) -->

<span id="metric" className="d-flex-column">{value}</span> <!-- what if the designer changes the class? -->

// this example is using enzyme

test('Whenever no data is passed, error metric shows zero', () => {

// ...

expect(wrapper.find("[className='d-flex-column']").text()).toBe("0");

});

⚪ ️ 3.3 Whenever possible, test with a realistic and fully rendered component

✅ Do: Whenever reasonably sized, test your component from outside like your users do: fully render the UI, act on it and assert that the rendered UI behaves as expected. Avoid all sorts of mocking, partial and shallow rendering. This approach might result in untrapped bugs due to lack of details and makes maintenance harder as the tests mess with the internals (see bullet 'Favour blackbox testing'). If one of the child components is significantly slowing down (e.g. animation) or complicating the setup, consider explicitly replacing it with a fake

With all that said, a word of caution is in order: this technique works for small/medium components that pack a reasonable size of child components. Fully rendering a component with too many children will make it hard to reason about test failures (root cause analysis) and might get too slow. In such cases, write only a few tests against that fat parent component and more tests against its children

✏ Code Examples

👏 Doing It Right Example: Working realistically with a fully rendered component

class Calendar extends React.Component {

static defaultProps = {showFilters: false}

render() {

return (

<div>

A filters panel with a button to hide/show filters

<FiltersPanel showFilter={this.props.showFilters} title='Choose Filters'/>

</div>

)

}

}

//Examples use React & Enzyme

test('Realistic approach: When clicked to show filters, filters are displayed', () => {

// Arrange

const wrapper = mount(<Calendar showFilters={false} />)

// Act

wrapper.find('button').simulate('click');

// Assert

expect(wrapper.text().includes('Choose Filter')).toBe(true);

// This is how the user will approach this element: by text

})

👎 Anti-Pattern Example: Mocking the reality with shallow rendering

test('Shallow/mocked approach: When clicked to show filters, filters are displayed', () => {

// Arrange

const wrapper = shallow(<Calendar showFilters={false} title='Choose Filter'/>)

// Act

wrapper.find('filtersPanel').instance().showFilters();

// Tap into the internals, bypass the UI and invoke a method. White-box approach

// Assert

expect(wrapper.find('Filter').props()).toEqual({title: 'Choose Filter'});

// what if we change the prop name or don't pass anything relevant?

})

⚪ ️ 3.4 Don't sleep, use frameworks built-in support for async events. Also try to speed things up

✏ Code Examples

👏 Doing It Right Example: E2E API that resolves only when the async operation is done (Cypress)

// using Cypress

cy.get('#show-products').click()// navigate

cy.wait('@products')// wait for route to appear

// this line will get executed only when the route is ready

👏 Doing It Right Example: Testing library that waits for DOM elements

// @testing-library/dom

test('movie title appears', async () => {

// element is initially not present...

// wait for appearance

await wait(() => {

expect(getByText('the lion king')).toBeInTheDocument()

})

// wait for appearance and return the element

const movie = await waitForElement(() => getByText('the lion king'))

})

👎 Anti-Pattern Example: custom sleep code

test('movie title appears', async () => {

// element is initially not present...

// custom wait logic (caution: simplistic, no timeout)

const interval = setInterval(() => {

const found = getByText('the lion king');

if(found){

clearInterval(interval);

expect(getByText('the lion king')).toBeInTheDocument();

}

}, 100);

// wait for appearance and return the element

const movie = await waitForElement(() => getByText('the lion king'))

})

⚪ ️ 3.5. Watch how the content is served over the network

⚪ ️ 3.6 Stub flaky and slow resources like backend APIs

❌ Otherwise: The average test runs no longer than a few ms while a typical API call lasts 100ms or more, which makes each test ~20x slower

✏ Code Examples

👏 Doing It Right Example: Stubbing or intercepting API calls

// unit under test

export default function ProductsList() {

const [products, setProducts] = useState(false)

const fetchProducts = async() => {

const response = await axios.get('api/products')

setProducts(response.data);

}

useEffect(() => {

fetchProducts();

}, []);

return products ? <div>{products}</div> : <div data-testid='no-products-message'>No products</div>

}

// test

test('When no products exist, show the appropriate message', () => {

// Arrange

nock("api")

.get(`/products`)

.reply(404);

// Act

const {getByTestId} = render(<ProductsList/>);

// Assert

expect(getByTestId('no-products-message')).toBeTruthy();

});

⚪ ️ 3.7 Have very few end-to-end tests that span the whole system

⚪ ️ 3.8 Speed-up E2E tests by reusing login credentials

❌ Otherwise: Given 200 test cases and assuming each login takes 100ms, that is 20 seconds spent only on logging in again and again

✏ Code Examples

👏 Doing It Right Example: Logging-in before-all and not before-each

let authenticationToken;

// happens before ALL tests run

before(() => {

cy.request('POST', 'http://localhost:3000/login', {

username: Cypress.env('username'),

password: Cypress.env('password'),

})

.its('body')

.then((responseFromLogin) => {

authenticationToken = responseFromLogin.token;

})

})

// happens before EACH test

beforeEach(() => {

cy.visit('/home', {

onBeforeLoad (win) {

win.localStorage.setItem('token', JSON.stringify(authenticationToken))

},

})

})

⚪ ️ 3.9 Have one E2E smoke test that just travels across the site map

✅ Do: For production monitoring and development-time sanity checks, run a single E2E test that visits all/most of the site pages and ensures none of them breaks. This type of test brings a great return on investment as it's very easy to write and maintain, yet it can detect any kind of failure including functional, network and deployment issues. Other styles of smoke and sanity checking are not as reliable and exhaustive - some ops teams just ping the home page (production), and some developers run many integration tests which don't discover packaging and browser issues. It goes without saying that the smoke test doesn't replace functional tests; it just aims to serve as a quick smoke detector

✏ Code Examples

👏 Doing It Right Example: Smoke travelling across all pages

it('When doing smoke testing over all pages, should load them all successfully', () => {

// exemplified using Cypress but can be implemented easily

// using any E2E suite

cy.visit('https://mysite.com/home');

cy.contains('Home');

cy.visit('https://mysite.com/Login');

cy.contains('Login');

cy.visit('https://mysite.com/About');

cy.contains('About');

})

⚪ ️ 3.10 Expose the tests as a live collaborative document

✅ Do: Besides increasing app reliability, tests bring another attractive opportunity to the table - serving as live app documentation. Since tests inherently speak in a less technical, product/UX language, with the right tools they can serve as a communication artifact that greatly aligns all the peers - developers and their customers. For example, some frameworks allow expressing the flow and expectations (i.e. the test plan) using a human-readable language so any stakeholder, including product managers, can read, approve and collaborate on the tests, which then become the live requirements document. This technique is also referred to as 'acceptance test' as it allows the customers to define their acceptance criteria in plain language. This is BDD (behavior-driven development) in its purest form. One of the popular frameworks that enable this is Cucumber which has a JavaScript flavor, see example below. Another similar yet different opportunity, StoryBook, allows exposing UI components as a graphic catalog where one can walk through the various states of each component (e.g. render a grid w/o filters, render that grid with multiple rows or with none, etc), see what it looks like, and how to trigger that state - this can also appeal to product folks but mostly serves as a live doc for developers who consume those components.

❌ Otherwise: After investing top resources on testing, it's just a pity not to leverage this investment and win great value

✏ Code Examples

👏 Doing It Right Example: Describing tests in human-language using cucumber-js

// this is how one can describe tests using Cucumber: plain language that allows anyone to understand and collaborate

Feature: Twitter new tweet

I want to tweet something in Twitter

@focus

Scenario: Tweeting from the home page

Given I open Twitter home

Given I click on "New tweet" button

Given I type "Hello followers!" in the textbox

Given I click on "Submit" button

Then I see message "Tweet saved"

👏 Doing It Right Example: Visualizing our components, their various states and inputs using Storybook

⚪ ️ 3.11 Detect visual issues with automated tools

✏ Code Examples

👎 Anti Pattern Example: A typical visual regression - right content that is served badly

👏 Doing It Right Example: Configuring wraith to capture and compare UI snapshots

# Add as many domains as necessary. Key will act as a label

domains:

english: "http://www.mysite.com"

# Type screen widths below, here are a couple of examples

screen_widths:

- 600

- 768

- 1024

- 1280

# Type page URL paths below, here are a couple of examples

paths:

about:

path: /about

selector: '.about'

subscribe:

selector: '.subscribe'

path: /subscribe

👏 Doing It Right Example: Using Applitools to get snapshot comparison and other advanced features

import * as todoPage from '../page-objects/todo-page';

describe('visual validation', () => {

before(() => todoPage.navigate());

beforeEach(() => cy.eyesOpen({ appName: 'TAU TodoMVC' }));

afterEach(() => cy.eyesClose());

it('should look good', () => {

cy.eyesCheckWindow('empty todo list');

todoPage.addTodo('Clean room');

todoPage.addTodo('Learn javascript');

cy.eyesCheckWindow('two todos');

todoPage.toggleTodo(0);

cy.eyesCheckWindow('mark as completed');

});

});

Section 4️⃣ : Measuring Test Effectiveness

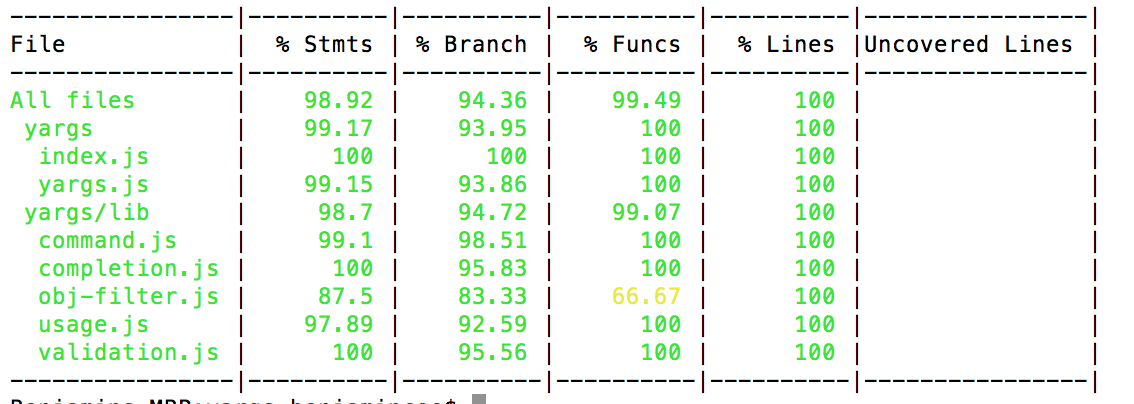

⚪ ️ 4.1 Get enough coverage for being confident, ~80% seems to be the lucky number

Implementation tips: You may want to configure your continuous integration (CI) to have a coverage threshold (Jest link) and stop a build that doesn’t meet this standard (it’s also possible to configure a threshold per component, see code example below). On top of this, consider detecting build coverage decrease (when newly committed code has less coverage) - this will push developers to raise or at least preserve the amount of tested code. All that said, coverage is only one measure, a quantitative one, that is not enough to tell the robustness of your testing. And it can also be fooled as illustrated in the next bullets
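The idea of detecting a coverage decrease can be sketched as a small script that compares two coverage summaries. This is only a minimal sketch: it assumes both summaries follow the shape of Istanbul's json-summary report (a `total` object holding a `pct` field per metric), and the helper name `findCoverageDrops` as well as the sample numbers are hypothetical.

```javascript
// Hypothetical helper: lists the metrics whose coverage percentage decreased
// between a baseline summary (e.g. the main branch) and the current build.
// Assumed input shape (Istanbul "json-summary"): { total: { lines: { pct }, ... } }
const METRICS = ['lines', 'statements', 'functions', 'branches'];

function findCoverageDrops(baseline, current) {
  return METRICS
    .filter((metric) => current.total[metric].pct < baseline.total[metric].pct)
    .map((metric) => ({
      metric,
      from: baseline.total[metric].pct,
      to: current.total[metric].pct,
    }));
}

// Illustrative numbers only
const baseline = {
  total: { lines: { pct: 82 }, statements: { pct: 81 }, functions: { pct: 90 }, branches: { pct: 75 } },
};
const current = {
  total: { lines: { pct: 79.5 }, statements: { pct: 81 }, functions: { pct: 91 }, branches: { pct: 75 } },
};

const drops = findCoverageDrops(baseline, current);
// A real CI script would typically exit with a non-zero code when drops is not empty
console.log(drops.length > 0 ? 'Coverage decreased:' : 'Coverage preserved', drops);
```

Comparing every metric rather than a single number keeps a drop in branch coverage from hiding behind a stable line coverage figure.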

✏ Code Examples

👏 Example: A typical coverage report

👏 Doing It Right Example: Setting up coverage per component (using Jest)

⚪ ️ 4.2 Inspect coverage reports to detect untested areas and other oddities

✏ Code Examples

👎 Anti-Pattern Example: What’s wrong with this coverage report? Based on a real-world scenario where we tracked our application usage in QA and found interesting login patterns (Hint: the amount of login failures is disproportionate, something is clearly wrong. Finally it turned out that some frontend bug kept hitting the backend login API)

⚪ ️ 4.3 Measure logical coverage using mutation testing

Mutation-based testing is here to help by measuring the amount of code that was actually TESTED not just VISITED. Stryker is a JavaScript library for mutation testing and the implementation is really neat:

(1) it intentionally changes the code and “plants bugs”. For example the code newOrder.price===0 becomes newOrder.price!=0. These “bugs” are called mutations

(2) it runs the tests, if all succeed then we have a problem - the tests didn’t serve their purpose of discovering bugs, and the mutations are said to have survived. If the tests failed, then great, the mutations were killed.

Knowing that all or most of the mutations were killed gives much higher confidence than traditional coverage and the setup time is similar
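The two steps can be illustrated by hand, without any tooling. The sketch below is not how Stryker is implemented; it only mimics the idea with one manually written mutant, and all names in it (`isFreeOrder`, `weakTest`, `strongTest`, `runMutation`) are hypothetical.

```javascript
// The original code and a hand-made mutant of it (=== flipped to !==)
const isFreeOrder = (newOrder) => newOrder.price === 0;
const isFreeOrderMutant = (newOrder) => newOrder.price !== 0;

// A weak test: it visits the code (full coverage) but asserts nothing
const weakTest = (implementation) => {
  implementation({ price: 0 });
  return true; // always "passes"
};

// A stronger test: it checks the actual outcome for two inputs
const strongTest = (implementation) =>
  implementation({ price: 0 }) === true && implementation({ price: 120 }) === false;

// Runs a test against the mutant and reports whether the mutant survived or was killed
function runMutation(test) {
  if (!test(isFreeOrder)) {
    throw new Error('The test must pass against the original code first');
  }
  return test(isFreeOrderMutant) ? 'survived' : 'killed';
}

console.log('weak test:', runMutation(weakTest));
console.log('strong test:', runMutation(strongTest));
```

The weak test lets the mutant survive because it never looks at the return value, while the stronger test kills it. A mutation testing tool automates exactly this loop over many generated mutants.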

✏ Code Examples

👎 Anti Pattern Example: 100% coverage, 0% testing

function addNewOrder(newOrder) {

logger.log(`Adding new order ${newOrder}`);

DB.save(newOrder);

Mailer.sendMail(newOrder.assignee, `A new order was placed ${newOrder}`);

return {approved: true};

}

it("Test addNewOrder, don't use such test names", () => {

addNewOrder({assignee: "John@mailer.com",price: 120});

});//Triggers 100% code coverage, but it doesn't check anything

👏 Doing It Right Example: Stryker reports, a tool for mutation testing, detects and counts the amount of code that is not tested (Mutations)

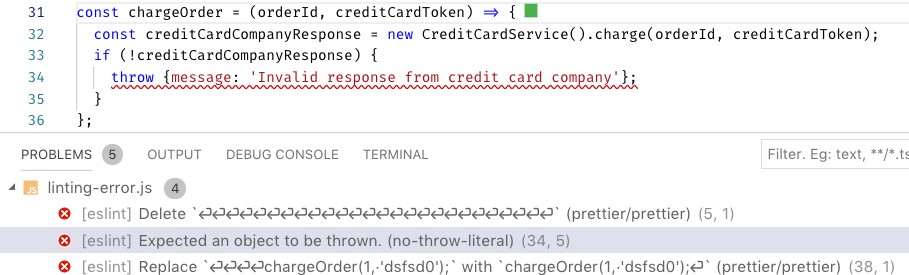

⚪ ️4.4 Preventing test code issues with Test linters

✏ Code Examples

👎 Anti Pattern Example: A test case full of errors, luckily all are caught by Linters

describe("Too short description", () => {

const userToken = userService.getDefaultToken() // *error:no-setup-in-describe, use hooks (sparingly) instead

it("Some description", () => {});// *error:valid-test-description. Must include the word "Should" + at least 5 words

});

it.skip("Test name", () => {// *error:no-skipped-tests, error:no-global-tests. Put tests only under describe or suite

expect("somevalue"); // error:no-assert

});

it("Test name", () => {// *error:no-identical-title. Assign unique titles to tests

});

Section 5️⃣ CI and Other Quality Measures

⚪ ️ 5.1 Enrich your linters and abort builds that have linting issues

✏ Code Examples

👎 Anti Pattern Example: The wrong Error object is thrown mistakenly, no stack-trace will appear for this error. Luckily, ESLint catches the next production bug

⚪ ️ 5.2 Shorten the feedback loop with local developer-CI

Practically, some CI vendors (Example: CircleCI local CLI) allow running the pipeline locally. Some commercial tools like wallaby provide highly valuable testing insights while the developer is still prototyping (no affiliation). Alternatively, you may just add an npm script to package.json that runs all the quality commands (e.g. test, lint, vulnerabilities) - use tools like concurrently for parallelization and a non-zero exit code if one of the tools failed. Now the developer should just invoke one command - e.g. ‘npm run quality’ - to get instant feedback. Consider also aborting a commit if the quality check failed using a githook (husky can help)

✏ Code Examples

👏 Doing It Right Example: npm scripts that perform code quality inspection, all are run in parallel on demand or when a developer is trying to push new code

"scripts": {

"inspect:sanity-testing": "mocha **/**--test.js --grep \"sanity\"",

"inspect:lint": "eslint .",

"inspect:vulnerabilities": "npm audit",

"inspect:license": "license-checker --failOn GPLv2",

"inspect:complexity": "plato .",

"inspect:all": "concurrently -c \"bgBlue.bold,bgMagenta.bold,yellow\" \"npm:inspect:sanity-testing\" \"npm:inspect:lint\" \"npm:inspect:vulnerabilities\" \"npm:inspect:license\""

},

"husky": {

"hooks": {

"pre-commit": "npm run inspect:all",

"pre-push": "npm run inspect:all"

}

}

⚪ ️5.3 Perform e2e testing over a true production-mirror

The huge Kubernetes ecosystem is yet to formalize a standard convenient tool for local and CI-mirroring though many new tools are launched frequently. One approach is running a ‘minimized-Kubernetes’ using tools like Minikube and MicroK8s which resemble the real thing but come with less overhead. Another approach is testing over a remote ‘real-Kubernetes’: some CI providers (e.g. Codefresh) have native integration with Kubernetes environments and make it easy to run the CI pipeline over the real thing, others allow custom scripting against a remote Kubernetes.

❌ Otherwise: Using different technologies for production and testing demands maintaining two deployment models and keeps the developers and the ops team separated

✏ Code Examples

👏 Example: a CI pipeline that generates Kubernetes cluster on the fly (Credit: Dynamic-environments Kubernetes)

deploy:

stage: deploy

image: registry.gitlab.com/gitlab-examples/kubernetes-deploy

script:

- ./configureCluster.sh $KUBE_CA_PEM_FILE $KUBE_URL $KUBE_TOKEN

- kubectl create ns $NAMESPACE

- kubectl create secret -n $NAMESPACE docker-registry gitlab-registry --docker-server="$CI_REGISTRY" --docker-username="$CI_REGISTRY_USER" --docker-password="$CI_REGISTRY_PASSWORD" --docker-email="$GITLAB_USER_EMAIL"

- mkdir .generated

- echo "$CI_BUILD_REF_NAME-$CI_BUILD_REF"

- sed -e "s/TAG/$CI_BUILD_REF_NAME-$CI_BUILD_REF/g" templates/deals.yaml | tee ".generated/deals.yaml"

- kubectl apply --namespace $NAMESPACE -f .generated/deals.yaml

- kubectl apply --namespace $NAMESPACE -f templates/my-sock-shop.yaml

environment:

name: test-for-ci

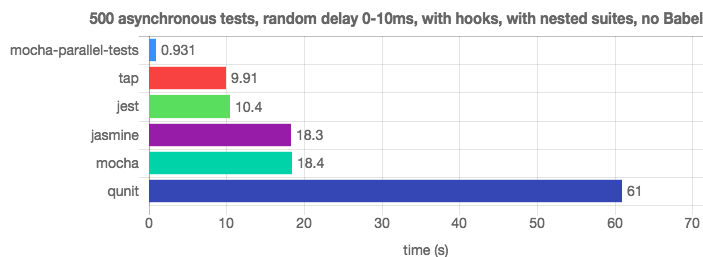

⚪ ️5.4 Parallelize test execution

✏ Code Examples

👏 Doing It Right Example: Mocha parallel & Jest easily outrun the traditional Mocha thanks to testing parallelization (Credit: JavaScript Test-Runners Benchmark)

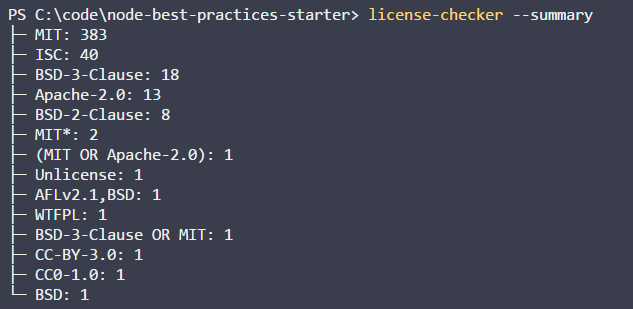

⚪ ️5.5 Stay away from legal issues using license and plagiarism check

❌ Otherwise: Unintentionally, developers might use packages with inappropriate licenses or copy paste commercial code and run into legal issues

✏ Code Examples

👏 Doing It Right Example:

//install license-checker in your CI environment or also locally

npm install -g license-checker

//ask it to scan all licenses and fail with exit code other than 0 if it found unauthorized license. The CI system should catch this failure and stop the build

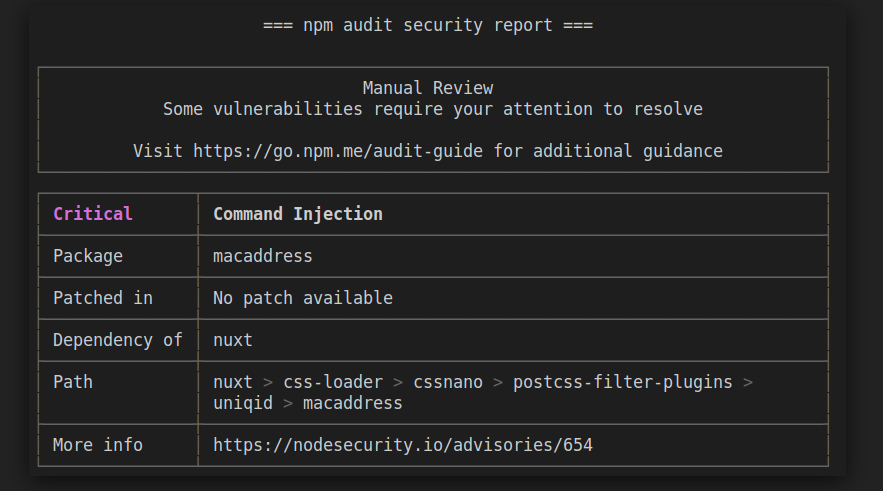

license-checker --summary --failOn BSD⚪ ️5.6 Constantly inspect for vulnerable dependencies

❌ Otherwise: Even the most reputable dependencies such as Express have known vulnerabilities. This can get easily tamed using community tools such as npm audit, or commercial tools like snyk (offer also a free community version). Both can be invoked from your CI on every build

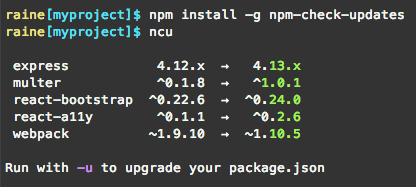

⚪ ️5.7 Automate dependency updates

✏ Code Examples

👏 Example: ncu can be used manually or within a CI pipeline to detect to which extent the code lags behind the latest versions

⚪ ️ 5.8 Other, non-Node related, CI tips

- Use a declarative syntax. This is the only option for most vendors but older versions of Jenkins allow using code or UI

- Opt for a vendor that has native Docker support

- Fail early, run your fastest tests first. Create a ‘Smoke testing’ step/milestone that groups multiple fast inspections (e.g. linting, unit tests) and provides snappy feedback to the code committer

- Make it easy to skim-through all build artifacts including test reports, coverage reports, mutation reports, logs, etc

- Create multiple pipelines/jobs for each event, reuse steps between them. For example, configure a job for feature branch commits and a different one for master PR. Let each reuse logic using shared steps (most vendors provide some mechanism for code reuse)

- Never embed secrets in a job declaration, grab them from a secret store or from the job’s configuration

- Explicitly bump version in a release build or at least ensure the developer did so

- Build only once and perform all the inspections over the single build artifact (e.g. Docker image)

- Test in an ephemeral environment that doesn’t drift state between builds. Caching node_modules might be the only exception
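As an illustration of the 'never embed secrets' tip above, a script invoked by the pipeline can read the secret from the environment that the CI vendor injects and fail fast when it is missing. This is a minimal sketch; the helper name `requireSecret` and the variable name `NPM_PUBLISH_TOKEN` are hypothetical.

```javascript
// Hypothetical helper: reads a secret that the CI job configuration or a secret store
// exposes as an environment variable, instead of hard-coding it in the job declaration
function requireSecret(name, env = process.env) {
  const value = env[name];
  if (!value) {
    // Fail early with a clear message, without ever printing a secret value
    throw new Error(`Missing required secret: ${name}`);
  }
  return value;
}

// Usage with an explicit env object (illustrative value only)
const token = requireSecret('NPM_PUBLISH_TOKEN', { NPM_PUBLISH_TOKEN: 'dummy-token-for-demo' });
console.log(`Token loaded, length: ${token.length}`);
```

Passing the environment as a parameter keeps the helper easy to test without touching the real `process.env`.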

⚪ ️ 5.9 Build matrix: Run the same CI steps using multiple Node versions

✏ Code Examples

👏 Example: Using Travis (CI vendor) build definition to run the same test over multiple Node versions

language: node_js

node_js:

- "7"

- "6"

- "5"

- "4"

install:

- npm install

script:

- npm run test

Team

Yoni Goldberg

Role: Writer

About: I'm an independent consultant who works with Fortune 500 companies and garage startups on polishing their JS & Node.js applications. More than any other topic I'm fascinated by and aim to master the art of testing. I'm also the author of 'Node.js Best Practices'

Bruno Scheufler

Role: Tech reviewer and advisor

Took care to revise, improve, lint and polish all the texts

About: full-stack web engineer, Node.js & GraphQL enthusiast

Ido Richter

Role: Concept, design and great advice

About: A savvy frontend developer, CSS expert and emojis freak
