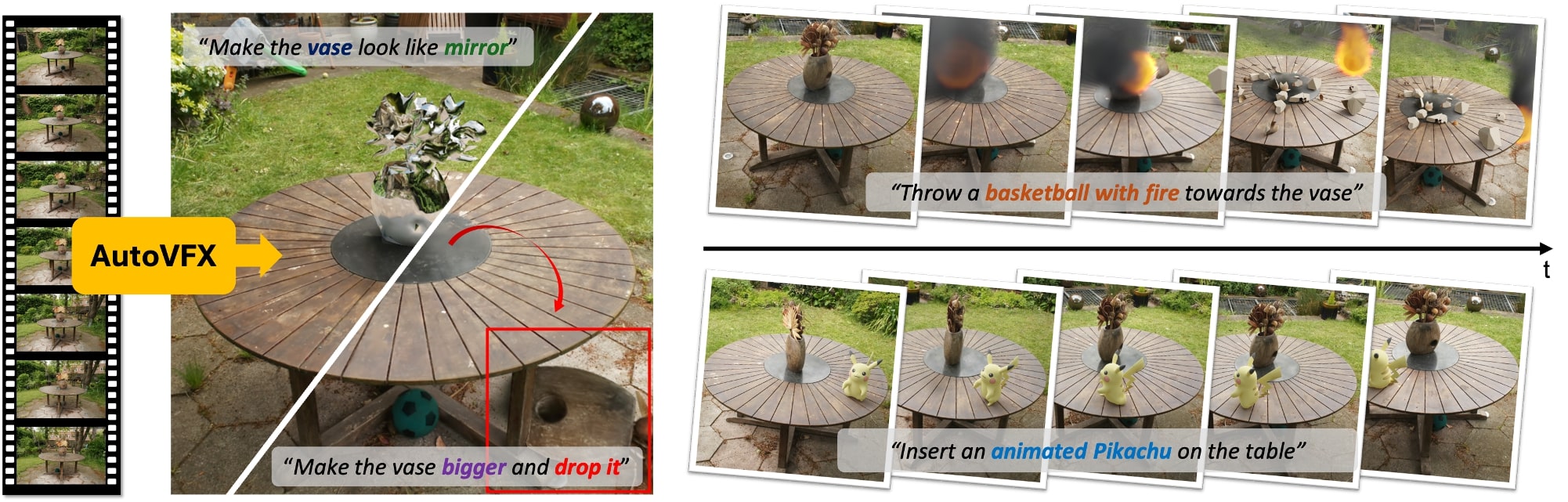

AutoVFX: Physically Realistic Video Editing from Natural Language Instructions.

Hao-Yu Hsu1, Zhi-Hao Lin1, Albert J. Zhai1, Hongchi Xia1, Shenlong Wang1

1University of Illinois at Urbana-Champaign

International Conference on 3D Vision (3DV), 2025

- Environment Setup

- Pretrained checkpoints, data, and software preparation

- Simulation example on Garden scene

- Details of pose extraction (SfM) and pose alignment

- Details of training 3DGS

- Details of surface reconstruction

- Details of estimating relative scene scale

- Code for sampling custom camera trajectory

- Details of light estimation for indoor scenes

- Demo edits on a single casually-captured video

- Local gradio app demo

The code has been tested on:

- OS: Ubuntu 22.04.5 LTS

- GPU: NVIDIA GeForce RTX 4090

- Driver Version: 550

- CUDA Version: 12.4

- nvcc: 11.8
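
The CUDA version reported alongside the driver and the version of `nvcc` can legitimately differ, as in the list above (12.4 versus 11.8), because the former reflects what the driver supports while the latter is the installed toolkit. The sketch below is one way to check the local `nvcc` release against the tested 11.8. The helper names are hypothetical and not part of this repository.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

# Tested toolkit version, taken from the list above.
TESTED_NVCC = (11, 8)


def parse_nvcc_release(version_text: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from the text printed by `nvcc --version`."""
    match = re.search(r"release (\d+)\.(\d+)", version_text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_nvcc_release() -> Optional[Tuple[int, int]]:
    """Return the local nvcc release, or None if nvcc is not on PATH."""
    if shutil.which("nvcc") is None:
        return None
    result = subprocess.run(
        ["nvcc", "--version"], capture_output=True, text=True, check=False
    )
    return parse_nvcc_release(result.stdout)


if __name__ == "__main__":
    release = installed_nvcc_release()
    if release is None:
        print("nvcc not found on PATH")
    elif release != TESTED_NVCC:
        print(f"nvcc {release[0]}.{release[1]} found; the tested version is 11.8")
    else:
        print("nvcc 11.8 found, matching the tested configuration")
```

A mismatch does not necessarily mean the build will fail; it only indicates that the setup differs from the tested one.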

- Create environment:

git clone https://github.com/haoyuhsu/autovfx.git

cd autovfx/

conda create -n autovfx python=3.10

conda activate autovfx

- Install PyTorch & cudatoolkit:

conda install pytorch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 pytorch-cuda=11.8 -c pytorch -c nvidia

# (Optional) To build the necessary CUDA extensions, cuda-toolkit is required.

conda install -c "nvidia/label/cuda-11.8.0" cuda-toolkit

- Install Gaussian Splatting submodules:

cd sugar/gaussian_splatting/

pip install submodules/diff-gaussian-rasterization

pip install submodules/simple-knn

- Install segmentation & tracking modules:

# Tracking-with-DEVA

cd ../../tracking

pip install -e .

# Grounded-SAM

git clone https://github.com/hkchengrex/Grounded-Segment-Anything.git

cd Grounded-Segment-Anything

export AM_I_DOCKER=False

export BUILD_WITH_CUDA=True

python -m pip install -e segment_anything

python -m pip install -e GroundingDINO

# RAM & Tag2Text

git submodule init

git submodule update

git clone https://github.com/xinyu1205/recognize-anything.git

pip install -r ./recognize-anything/requirements.txt

pip install -e ./recognize-anything/

- Install inpainting modules:

# LaMa

cd ../../inpaint/lama

pip install -r requirements.txt

- Install lighting estimation modules:

# DiffusionLight

cd ../../lighting/diffusionlight

pip install -r requirements.txt

- Install other required packages:

# Other packages

pip install openai objaverse kornia wandb open3d plyfile imageio-ffmpeg einops e3nn pygltflib lpips scann open_clip_torch sentence-transformers==2.7.0 geffnet mmcv

# PyTorch3D (try one of the commands)

pip install "git+https://github.com/facebookresearch/pytorch3d.git"

pip install "git+https://github.com/facebookresearch/pytorch3d.git@stable"

conda install pytorch3d -c pytorch3d

# Trimesh with speedup packages

pip install trimesh==4.3.2

pip install Rtree==1.2.0

conda install -c conda-forge embree=2.17.7

conda install -c conda-forge pyembree

# (Optional) COLMAP, if not built from source

conda install conda-forge::colmap

cd ../..

We use DEVA for open-vocabulary video segmentation.

cd tracking

bash download_models.sh

We use LaMa to inpaint the unseen region.

cd inpaint && mkdir ckpts

wget https://huggingface.co/smartywu/big-lama/resolve/main/big-lama.zip && unzip big-lama.zip -d ckpts

rm big-lama.zip

We use CLIP & SBERT features to annotate assets in Objaverse, and SBERT features to annotate PBR materials in PolyHaven. Download the preprocessed embeddings for both the Objaverse 3D assets and the PolyHaven PBR materials.

cd retrieval

# download processed embeddings

gdown --folder https://drive.google.com/drive/folders/1Lw87MstzbQgEX0iacTm9GpLYK2UE3gNm

# download PolyHaven PBR-materials

gdown https://drive.google.com/uc?id=1adZo_FPyLj7pFofNJfxSbnAv_EaJEV75

unzip polyhaven.zip && rm polyhaven.zip

We tested with Blender 3.6.11. Note that Blender 3+ requires Ubuntu version >= 20.04.

cd third_parties/Blender

wget https://download.blender.org/release//Blender3.6/blender-3.6.11-linux-x64.tar.xz

tar -xvf blender-3.6.11-linux-x64.tar.xz

rm blender-3.6.11-linux-x64.tar.xz

Please download the preprocessed dataset of the Garden scene from here for a quick demo. The expected folder structure of the dataset is:

├── datasets
│   ├── <your scene name>
│   │   ├── custom_camera_path    # optional for free-viewpoint rendering
│   │   │   ├── transforms_001.json
│   │   │   ├── ...
│   │   ├── images
│   │   │   ├── 00000.png
│   │   │   ├── 00001.png
│   │   │   ├── 00002.png
│   │   │   ├── ...
│   │   ├── mesh
│   │   │   ├── material_0.png
│   │   │   ├── mesh.mtl
│   │   │   ├── mesh.obj
│   │   ├── emitter_mesh.obj      # optional for indoor scenes
│   │   ├── normal
│   │   │   ├── 00000_normal.png
│   │   │   ├── 00001_normal.png
│   │   │   ├── 00002_normal.png
│   │   │   ├── ...
│   │   ├── sparse
│   │   │   ├── 0
│   │   │   │   ├── cameras.bin
│   │   │   │   ├── images.bin
│   │   │   │   ├── points3D.bin
│   │   ├── transforms.json
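
Before running the later steps, it can help to confirm that a scene folder matches the structure above. The following is a minimal sketch of such a check. The function name is hypothetical, the nesting of entries is read from the listing above, and the entries marked optional there are left out.

```python
from pathlib import Path
from typing import List

# Entries read from the folder structure above (optional ones omitted).
# The exact nesting is an assumption based on that listing.
REQUIRED_ENTRIES = [
    "images",
    "mesh/mesh.obj",
    "normal",
    "sparse/0/cameras.bin",
    "sparse/0/images.bin",
    "sparse/0/points3D.bin",
    "transforms.json",
]


def missing_entries(scene_dir: str) -> List[str]:
    """Return the required entries that do not exist under scene_dir."""
    root = Path(scene_dir)
    return [entry for entry in REQUIRED_ENTRIES if not (root / entry).exists()]


if __name__ == "__main__":
    import sys

    # Usage: python check_scene.py ./datasets/<your scene name>
    missing = missing_entries(sys.argv[1]) if len(sys.argv) > 1 else REQUIRED_ENTRIES
    print("missing:", missing if missing else "nothing")
```

An empty result only means the files are present; it does not validate their contents.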

For your custom dataset, please follow these steps:

- Create a folder and put your images under images. The folder will look like this:

├── datasets
│   ├── <your scene name>
│   │   ├── images
│   │   │   ├── 00000.png
│   │   │   ├── 00001.png
│   │   │   ├── 00002.png
│   │   │   ├── ...

- Estimate normal maps, which are used for both pose alignment and normal regularization during 3DGS and BakedSDF training. We currently support three methods for monocular normal estimation: Metric3D, DSINE, and Omnidata. Empirically, the quality of normal estimation ranks as Metric3D > DSINE > Omnidata.

python dataset_utils/get_mono_normal.py \

--dataset_dir ./datasets/<your scene name> \

--method metric3d # 'metric3d', 'dsine', 'omnidata'

- Perform pose extraction using COLMAP, followed by pose alignment to set the up direction of the scene to (0,0,1). Specify a text prompt for the most obvious flat surface in the scene, such as ground, floor, or table.

python dataset_utils/colmap_runner.py \

--dataset_dir ./datasets/<your scene name> \

--text_prompt ground

- For details on surface mesh extraction, please refer to the Estimate Scene Properties section.

- All camera poses are camera-to-world transforms in the OpenCV convention (x: right, y: down, z: front). Please refer to this tutorial on conversion between the OpenCV and OpenGL camera formats.
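
For readers who need to move poses between the two conventions mentioned in the last item, the usual conversion negates the camera's y and z axes, since OpenGL uses x: right, y: up, z: back. The sketch below applies that flip to a 4x4 camera-to-world matrix stored as nested lists. The function names are illustrative and are not taken from this repository.

```python
from typing import List

Matrix = List[List[float]]

# Right-multiplying a camera-to-world matrix by this matrix negates its
# second and third columns, i.e. the camera's y and z axes in world space.
FLIP_YZ: Matrix = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, -1.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]


def matmul4(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices given as nested lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
        for i in range(4)
    ]


def convert_c2w(c2w: Matrix) -> Matrix:
    """Convert a c2w pose between OpenCV and OpenGL conventions.

    The same flip works in both directions because applying it twice
    restores the original matrix. The camera position is unchanged.
    """
    return matmul4(c2w, FLIP_YZ)
```

Because the flip is its own inverse, `convert_c2w(convert_c2w(pose))` returns the original pose, which makes a convenient sanity check.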

Details of estimating scene properties (e.g., geometry, appearance, lighting, and semantics) will be updated soon.

We use BakedSDF as implemented in SDFStudio for surface reconstruction. Please make sure to use our custom SDFStudio for reproducibility. We recommend creating a separate environment for this part, since the SDFStudio code has been tested with CUDA 11.3.

# Example command

ns-train bakedsdf-mlp --vis wandb \

--output-dir outputs/<scene name> --experiment-name <experiment name> \

--trainer.steps-per-save 1000 \

--trainer.steps-per-eval-image 5000 --trainer.steps-per-eval-all-images 50000 \

--trainer.max-num-iterations 250001 --trainer.steps-per-eval-batch 5000 \

--pipeline.datamanager.train-num-rays-per-batch 2048 \

--pipeline.datamanager.eval-num-rays-per-batch 512 \

--pipeline.model.sdf-field.inside-outside False \

--pipeline.model.background-model none \

--pipeline.model.near-plane 0.001 --pipeline.model.far-plane 6.0 \

--machine.num-gpus 1 \

--pipeline.model.mono-normal-loss-mult 0.1 \

panoptic-data \

--data <path to your dataset> \

--panoptic_data False --mono_normal_data True --panoptic_segment False \

--orientation-method none --center-poses False --auto-scale-poses False

Generally, a decent surface mesh can be obtained with the command above. However, several hyperparameters need to be set with care:

- For fully captured indoor scenes, such as those in ScanNet++, set --pipeline.model.sdf-field.inside-outside to True.

- For outdoor scenes with distant backgrounds, such as those in Tanks & Temples, set --pipeline.model.background-model to mlp.

- Adjust --pipeline.datamanager.train-num-rays-per-batch, --pipeline.datamanager.eval-num-rays-per-batch, and --pipeline.model.num-neus-samples-per-ray if you encounter OOM (out-of-memory) errors during training.
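
The scene-dependent choices above can be collected in a small helper that produces the corresponding flag values. This is only a sketch: the function names are hypothetical, the defaults mirror the example command, and halving the ray batch sizes is one assumed way to react to OOM errors rather than a recommendation from the authors.

```python
from typing import Dict, List

TRAIN_RAYS_FLAG = "--pipeline.datamanager.train-num-rays-per-batch"
EVAL_RAYS_FLAG = "--pipeline.datamanager.eval-num-rays-per-batch"


def bakedsdf_overrides(
    fully_captured_indoor: bool = False,
    distant_background: bool = False,
    train_rays: int = 2048,
    eval_rays: int = 512,
) -> Dict[str, str]:
    """Map a coarse scene description to the ns-train flags discussed above."""
    return {
        "--pipeline.model.sdf-field.inside-outside": str(fully_captured_indoor),
        "--pipeline.model.background-model": "mlp" if distant_background else "none",
        TRAIN_RAYS_FLAG: str(train_rays),
        EVAL_RAYS_FLAG: str(eval_rays),
    }


def halve_ray_batches(overrides: Dict[str, str]) -> Dict[str, str]:
    """Return a copy with both ray batch sizes halved (never below 1)."""
    smaller = dict(overrides)
    for flag in (TRAIN_RAYS_FLAG, EVAL_RAYS_FLAG):
        smaller[flag] = str(max(1, int(smaller[flag]) // 2))
    return smaller


def to_cli_args(overrides: Dict[str, str]) -> List[str]:
    """Flatten {flag: value} into ["flag", "value", ...] for a command line."""
    args: List[str] = []
    for flag, value in overrides.items():
        args.extend([flag, value])
    return args


if __name__ == "__main__":
    # Example: an outdoor scene with a distant background, after one OOM retry.
    flags = halve_ray_batches(bakedsdf_overrides(distant_background=True))
    print(" ".join(to_cli_args(flags)))
```

The printed flags are meant to replace the matching ones in the example command, not to form a complete command on their own.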

scene=outputs/<scene name>/<experiment name>/bakedsdf-mlp/<timestamp>

# Extract mesh

python scripts/extract_mesh.py --load-config $scene/config.yml \

--output-path $scene/mesh.ply \

--bounding-box-min -2.0 -2.0 -2.0 --bounding-box-max 2.0 2.0 2.0 \

--resolution 2048 --marching_cube_threshold 0.001 --create_visibility_mask True --simplify-mesh True

mkdir $scene/textured

# Bake texture

python scripts/texture.py \

--load-config $scene/config.yml \

--input-mesh-filename $scene/mesh-simplify.ply \

--output-dir $scene/textured \

--target_num_faces None

It is best not to change bounding-box-min and bounding-box-max, since camera poses are already normalized to lie within a unit cube during the pose alignment step.

You can start training 3D Gaussian Splatting with a single command.

bash train_3dgs.sh <your scene name>

Explanation of several hyperparameters used in train_3dgs.sh:

- Optimization parameters:

  - lambda_normal: loss between rendered normal and monocular normal prediction

  - lambda_pseudo_normal: loss between rendered normal and pseudo normal derived from rendered depth

  - lambda_anisotropic: regularizes the shape of the 3D Gaussians to be isotropic

- Densification parameters:

  - Consider adjusting size_threshold and min_opacity if the Gaussians are floating excessively.

- Gaussians initialization parameters:

  - --init_strategy:

    - colmap: use a point cloud extracted from COLMAP for initialization

    - ray_mesh: use intersection points between camera rays from all training views and the scene mesh for initialization

    - hybrid: combine both colmap and ray_mesh for initialization

  - Ensure that --scene_sdf_mesh_path is specified when using ray_mesh or hybrid.
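
The dependency between --init_strategy and --scene_sdf_mesh_path can be enforced at argument-parsing time. The following argparse sketch shows one way to do so. It is not the parser used by the training script, and the default of colmap is an assumption made for the example.

```python
import argparse
from typing import List, Optional

# The three strategies described above.
INIT_STRATEGIES = ("colmap", "ray_mesh", "hybrid")
# Strategies that intersect camera rays with the scene mesh.
NEEDS_MESH = ("ray_mesh", "hybrid")


def parse_init_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse the initialization options and check that they are consistent."""
    parser = argparse.ArgumentParser(description="Sketch of 3DGS init options")
    parser.add_argument(
        "--init_strategy",
        choices=INIT_STRATEGIES,
        default="colmap",  # assumed default, for illustration only
    )
    parser.add_argument("--scene_sdf_mesh_path", default=None)
    args = parser.parse_args(argv)
    if args.init_strategy in NEEDS_MESH and not args.scene_sdf_mesh_path:
        # parser.error prints a usage message and exits with status 2.
        parser.error("--scene_sdf_mesh_path is required for ray_mesh and hybrid")
    return args


if __name__ == "__main__":
    print(parse_init_args())
```

Failing early in this way avoids discovering a missing mesh path only after the scene has been loaded.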

Use the following script to determine the relative scale between the current scene and a real-world scenario. Then, set the --scene_scale parameter to the estimated value during simulation.

python dataset_utils/estimate_scene_scale.py \

--dataset_dir ./datasets/<your scene name> \

--scene_mesh_path ./datasets/<your scene name>/mesh/mesh.obj \

--anchor_frame_idx 0

Pending.

Please download the preprocessed Garden scene from here, and the pretrained 3DGS checkpoints and estimated scene properties from here.

# If you encounter an error with gdown, please use the Google Drive link above to download the files.

mkdir datasets && cd datasets

gdown --folder https://drive.google.com/drive/folders/1eRdSAqDloGXk04JK60v3io6GHWdomy2N

cd ../

mkdir output && cd output

gdown --folder https://drive.google.com/drive/folders/1KE8LSA_r-3f2LVlTLJ5k4SHENvbwdAfN

- Text Prompt: "Drop 5 basketballs on the table."

export OPENAI_API_KEY=/your/openai_api_key/

export MESHY_API_KEY=/your/meshy_api_key/ # if you want to retrieve generated 3D assets

SCENE_NAME=garden_large

CUSTOM_TRAJ_NAME=transforms_001

SCENE_SCALE=2.67

BLENDER_CONFIG_NAME=blender_cfg_rigid_body_simulation

python edit_scene.py \

--source_path datasets/${SCENE_NAME} \

--model_path output/${SCENE_NAME}/ \

--gaussians_ckpt_path output/${SCENE_NAME}/coarse/sugarcoarse_3Dgs15000_densityestim02_sdfnorm02/22000.pt \

--custom_traj_name ${CUSTOM_TRAJ_NAME} \

--anchor_frame_idx 0 \

--scene_scale ${SCENE_SCALE} \

--edit_text "Drop 5 basketballs on the table." \

--scene_mesh_path datasets/${SCENE_NAME}/mesh/mesh.obj \

--blender_config_name ${BLENDER_CONFIG_NAME}.json \

--blender_output_dir_name ${BLENDER_CONFIG_NAME} \

--render_type MULTI_VIEW \

--deva_dino_threshold 0.45 \

--is_uv_mesh

All parameters are listed in opt.py. Details of those parameters will be updated soon.
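
Since the command above depends on an API key and on several downloaded files, a quick pre-flight check can save a failed run. The sketch below reports a missing OPENAI_API_KEY and any path argument that does not exist. The function name and the way paths are passed in are hypothetical and are not part of edit_scene.py.

```python
import os
from pathlib import Path
from typing import List, Mapping


def preflight_problems(
    paths: Mapping[str, str],
    env: Mapping[str, str] = os.environ,
) -> List[str]:
    """Return human-readable problems; an empty list means nothing was found.

    paths maps a flag name (e.g. "--source_path") to the value that would be
    passed on the command line.
    """
    problems: List[str] = []
    if not env.get("OPENAI_API_KEY"):
        problems.append("OPENAI_API_KEY is not set")
    for flag, value in paths.items():
        if not Path(value).exists():
            problems.append(f"{flag} does not exist: {value}")
    return problems


if __name__ == "__main__":
    scene = "garden_large"  # scene name used in the example above
    issues = preflight_problems(
        {
            "--source_path": f"datasets/{scene}",
            "--model_path": f"output/{scene}/",
            "--scene_mesh_path": f"datasets/{scene}/mesh/mesh.obj",
        }
    )
    print("\n".join(issues) if issues else "all checks passed")
```

MESHY_API_KEY is deliberately not checked, because it is only needed when retrieving generated 3D assets.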

If you find this paper and repository useful for your research, please consider citing:

@article{hsu2024autovfx,

title={AutoVFX: Physically Realistic Video Editing from Natural Language Instructions},

author={Hsu, Hao-Yu and Lin, Zhi-Hao and Zhai, Albert and Xia, Hongchi and Wang, Shenlong},

journal={arXiv preprint arXiv:2411.02394},

year={2024}

}

This project is supported by the Intel AI SRS gift, Meta research grant, the IBM IIDAI Grant, and NSF Awards #2331878, #2340254, #2312102, #2414227, and #2404385. Hao-Yu Hsu is supported by the Siebel Scholarship. We greatly appreciate the NCSA for providing computing resources. We thank Derek Hoiem, Sarita Adve, Benjamin Ummenhofer, Kai Yuan, Micheal Paulitsch, Katelyn Gao, and Quentin Leboutet for helpful discussions.

Our codebase is built on gaussian-splatting, SuGaR, SDFStudio, DiffusionLight, DEVA, Objaverse, and, most importantly, Blender. Thanks for open-sourcing!