This repository is the official implementation of "Contextual Transformer Networks for Visual Recognition" (CoTNet).

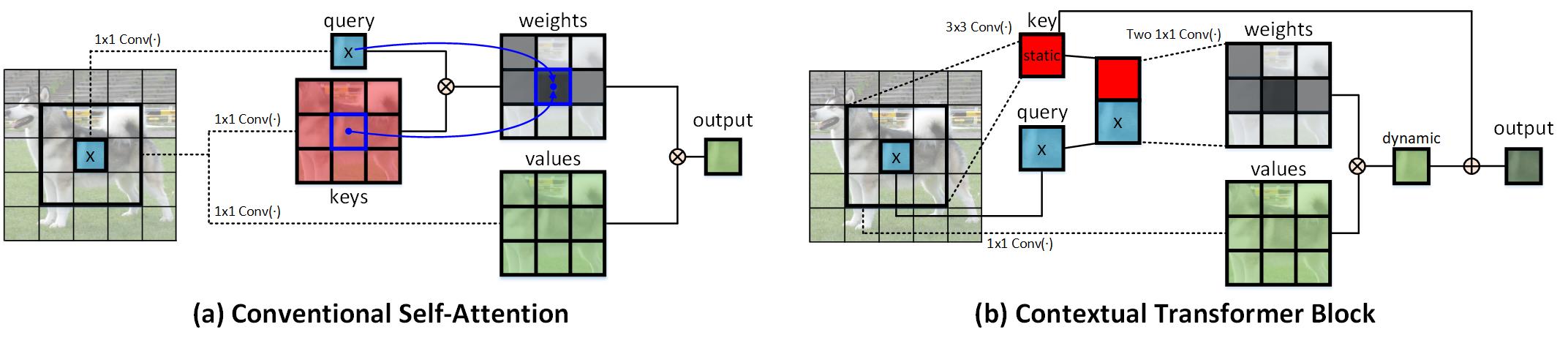

Contextual Transformer (CoT) is a unified self-attention building block that acts as an alternative to standard convolutions in ConvNets. As a result, convolutions can be replaced with their CoT counterparts to strengthen vision backbones with contextualized self-attention.
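To make the building-block idea concrete, here is a minimal NumPy sketch of a single-head CoT-style layer, written from our reading of the paper's description: the keys are encoded by a k×k convolution into a static context K1, K1 is concatenated with the queries and passed through two consecutive 1×1 convolutions to produce local attention weights, and those weights aggregate the values into a dynamic context K2. It is not the repository's PyTorch/CuPy implementation. Assumptions: a depthwise convolution stands in for the group convolution, normalization layers are dropped, one attention head is shared across all channels, K1 and K2 are fused by a plain sum, and the weight names in `init_params` are hypothetical.

```python
import numpy as np


def local_neighbors(x, k):
    """Stack the k*k zero-padded neighbours of every position.

    x: (C, H, W) feature map -> (k*k, C, H, W); index n = di * k + dj.
    """
    _, h, w = x.shape
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))  # zero padding keeps H x W
    return np.stack([xp[:, di:di + h, dj:dj + w]
                     for di in range(k) for dj in range(k)])


def conv1x1(weight, x):
    """1x1 convolution: mix channels independently at every position."""
    return np.einsum("oc,chw->ohw", weight, x)


def softmax(z, axis):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def cot_block(x, params, k=3):
    """Simplified single-head CoT block on a (C, H, W) input (keys = queries = x)."""
    # Static context K1: k x k depthwise conv over the keys, then ReLU
    # (assumed stand-in for a k x k group convolution; normalization omitted).
    k1 = np.maximum(0.0, np.einsum("nchw,cn->chw", local_neighbors(x, k), params["w_key"]))
    # Values V: 1x1 convolution of the input.
    v = conv1x1(params["w_val"], x)
    # Attention logits from [K1, Q] via two consecutive 1x1 convs (ReLU between),
    # giving k*k weights per position, normalised over the neighbourhood.
    hidden = np.maximum(0.0, conv1x1(params["w_theta"], np.concatenate([k1, x], axis=0)))
    attn = softmax(conv1x1(params["w_delta"], hidden), axis=0)  # (k*k, H, W)
    # Dynamic context K2: attention-weighted sum of the neighbouring values.
    k2 = np.einsum("nhw,nchw->chw", attn, local_neighbors(v, k))
    # Fusion: plain sum here (simplification); the paper describes an attention-based fusion.
    return k1 + k2


def init_params(c, k=3, mid=8, seed=0):
    """Random weights with hypothetical names and shapes, for illustration only."""
    rng = np.random.default_rng(seed)
    return {
        "w_key": 0.1 * rng.standard_normal((c, k * k)),
        "w_val": 0.1 * rng.standard_normal((c, c)),
        "w_theta": 0.1 * rng.standard_normal((mid, 2 * c)),
        "w_delta": 0.1 * rng.standard_normal((k * k, mid)),
    }


x = np.random.default_rng(1).standard_normal((4, 6, 6))
y = cot_block(x, init_params(4))
print(y.shape)  # (4, 6, 6)
```

Because the output keeps the (C, H, W) shape of the input, a layer like this can in principle take the place of a stride-1 3×3 convolution inside a residual bottleneck, which is the kind of substitution described above.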

Ranked 1st in the Open World Image Classification Challenge @ CVPR 2021 (team name: VARMS).

The code is mainly based on timm.

Requirements:

- PyTorch 1.8.0+
- Python 3.7
- CUDA 10.1+
- CuPy
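Before training, it can help to confirm that the environment matches the requirements. The following is a hypothetical helper (not part of the repository) that uses only the standard library plus `torch` if it is installed. It treats the listed versions as minimums, which is an assumption for Python since the list gives "3.7" without a "+".

```python
import importlib.util
import re
import sys


def parse_version(text):
    """Return (major, minor) from strings like '1.8.0+cu101' or '10.1'."""
    match = re.match(r"(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError("unrecognised version string: %r" % text)
    return int(match.group(1)), int(match.group(2))


def check_environment():
    """Return a list of problems found; an empty list means all checks passed."""
    problems = []
    # Python 3.7 is treated as a minimum here (assumption).
    if sys.version_info[:2] < (3, 7):
        problems.append("Python >= 3.7 expected, found %d.%d" % sys.version_info[:2])

    if importlib.util.find_spec("torch") is None:
        problems.append("PyTorch (torch) is not installed")
    else:
        import torch  # imported lazily so the check also runs without PyTorch

        if parse_version(torch.__version__) < (1, 8):
            problems.append("PyTorch >= 1.8.0 expected, found " + torch.__version__)
        # torch.version.cuda is the CUDA version PyTorch was built with (None on CPU-only builds).
        if torch.version.cuda is None:
            problems.append("PyTorch was built without CUDA")
        elif parse_version(torch.version.cuda) < (10, 1):
            problems.append("CUDA >= 10.1 expected, found " + torch.version.cuda)

    if importlib.util.find_spec("cupy") is None:
        problems.append("CuPy (cupy) is not installed")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("WARNING:", problem)
```

Note that this checks the CUDA version PyTorch was built against, which is not necessarily the CUDA toolkit your CuPy build targets; the two should be kept compatible.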

Clone the repository:

```shell
git clone https://github.com/JDAI-CV/CoTNet.git
cd CoTNet
```

First, download the ImageNet dataset. To train CoTNet-50 on ImageNet for 350 epochs on a single node with 8 GPUs, run:

```shell
python -m torch.distributed.launch --nproc_per_node=8 train.py --folder ./experiments/cot_experiments/CoTNet-50-350epoch
```
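To launch the same run from a Python script, or to vary the GPU count, the command can be assembled as an argument list. `build_train_command` and `launch` are hypothetical helpers, not part of the repository; they reuse only the flags shown in the command and use `sys.executable` so the launcher runs under the current interpreter. Running fewer than 8 processes likely changes the effective global batch size, so results may differ from the reported ones.

```python
import subprocess
import sys


def build_train_command(folder, num_gpus=8):
    """Build the single-node launch command as an argument list.

    num_gpus is passed as --nproc_per_node (one process per GPU).
    """
    return [
        sys.executable, "-m", "torch.distributed.launch",
        "--nproc_per_node=%d" % num_gpus,
        "train.py",
        "--folder", folder,
    ]


def launch(cmd):
    """Run the command from the repository root; raises CalledProcessError on failure."""
    subprocess.run(cmd, check=True)


cmd = build_train_command("./experiments/cot_experiments/CoTNet-50-350epoch")
print(" ".join(cmd))
```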

The training scripts for CoTNet models (e.g., CoTNet-50) can be found in the `experiments/cot_experiments` folder.

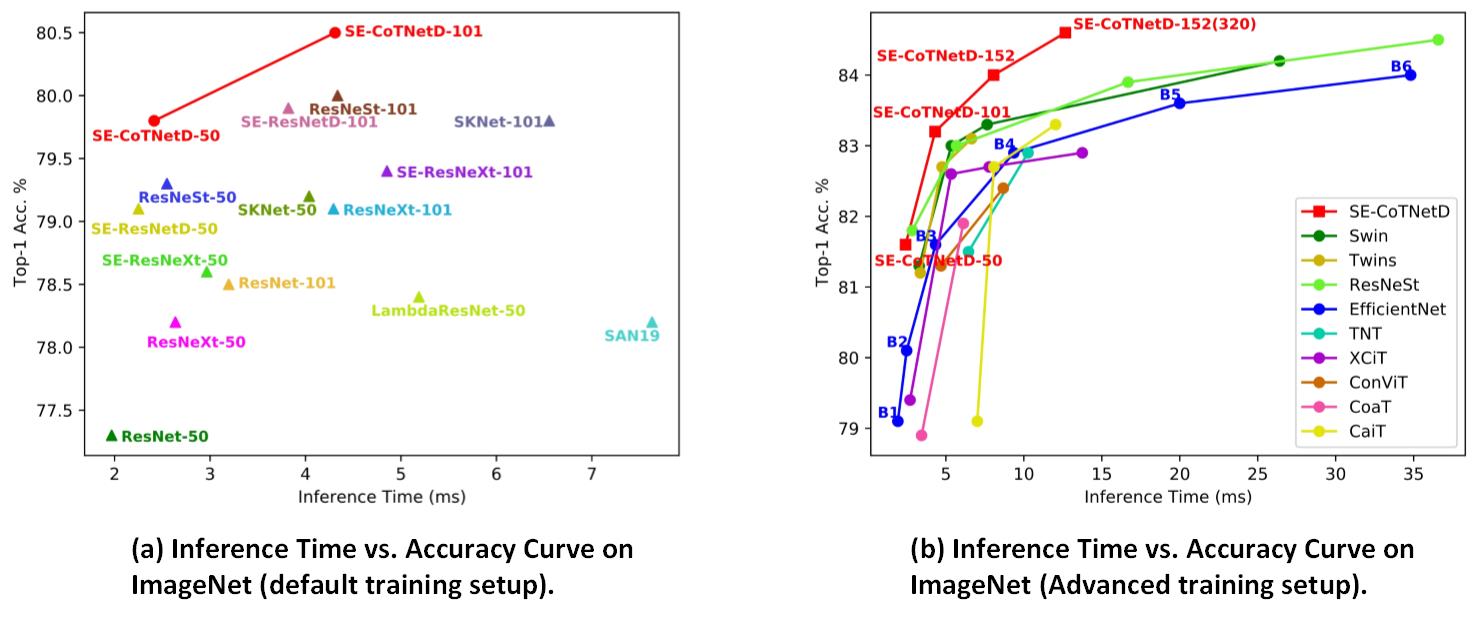

CoTNet models consistently obtain better top-1 accuracy with less inference time than other vision backbones under both default and advanced training setups. In short, CoTNet models achieve better inference time-accuracy trade-offs than existing vision backbones.

| Name | Resolution | #Params | FLOPs (G) | Top-1 Acc. (%) | Top-5 Acc. (%) | Model |
|---|---|---|---|---|---|---|
| CoTNet-50 | 224 | 22.2M | 3.3 | 81.3 | 95.6 | GoogleDrive |
| CoTNeXt-50 | 224 | 30.1M | 4.3 | 82.1 | 95.9 | GoogleDrive |
| SE-CoTNetD-50 | 224 | 23.1M | 4.1 | 81.6 | 95.8 | GoogleDrive |
| CoTNet-101 | 224 | 38.3M | 6.1 | 82.8 | 96.2 | GoogleDrive |
| CoTNeXt-101 | 224 | 53.4M | 8.2 | 83.2 | 96.4 | GoogleDrive |
| SE-CoTNetD-101 | 224 | 40.9M | 8.5 | 83.2 | 96.5 | GoogleDrive |
| SE-CoTNetD-152 | 224 | 55.8M | 17.0 | 84.0 | 97.0 | GoogleDrive |
| SE-CoTNetD-152 | 320 | 55.8M | 26.5 | 84.6 | 97.1 | GoogleDrive |
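The table reports FLOPs rather than measured inference time, so the trade-off claim can only be partially checked from it. As a rough illustration, the snippet below copies the GFLOPs and top-1 columns from the table and lists the models that no other listed model beats on both (a Pareto front). It compares the CoTNet variants only with each other, not with other backbones.

```python
# (name, resolution, #params in M, GFLOPs, top-1 %) copied from the table above.
MODELS = [
    ("CoTNet-50", 224, 22.2, 3.3, 81.3),
    ("CoTNeXt-50", 224, 30.1, 4.3, 82.1),
    ("SE-CoTNetD-50", 224, 23.1, 4.1, 81.6),
    ("CoTNet-101", 224, 38.3, 6.1, 82.8),
    ("CoTNeXt-101", 224, 53.4, 8.2, 83.2),
    ("SE-CoTNetD-101", 224, 40.9, 8.5, 83.2),
    ("SE-CoTNetD-152", 224, 55.8, 17.0, 84.0),
    ("SE-CoTNetD-152", 320, 55.8, 26.5, 84.6),
]


def pareto_front(rows):
    """Return 'name@resolution' for rows not dominated on (GFLOPs, top-1).

    A row is dominated if another row needs no more GFLOPs, reaches at least
    the same top-1, and is strictly better on one of the two.
    """
    front = []
    for name, res, _params, flops, top1 in rows:
        dominated = any(
            f <= flops and acc >= top1 and (f < flops or acc > top1)
            for _, _, _, f, acc in rows
        )
        if not dominated:
            front.append("%s@%d" % (name, res))
    return front


# Prints every model except SE-CoTNetD-101@224, in table order.
for entry in pareto_front(MODELS):
    print(entry)
```

SE-CoTNetD-101 drops out only because CoTNeXt-101 reaches the same 83.2% top-1 with fewer GFLOPs (8.2 vs 8.5); it has a slightly higher top-5 (96.5 vs 96.4), and FLOPs do not necessarily track measured inference time.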

If you use this code in your research, please cite:

```bibtex
@article{cotnet,
  title={Contextual Transformer Networks for Visual Recognition},
  author={Li, Yehao and Yao, Ting and Pan, Yingwei and Mei, Tao},
  journal={arXiv preprint arXiv:2107.12292},
  year={2021}
}
```

Thanks to the contributors of timm and the awesome PyTorch team.