
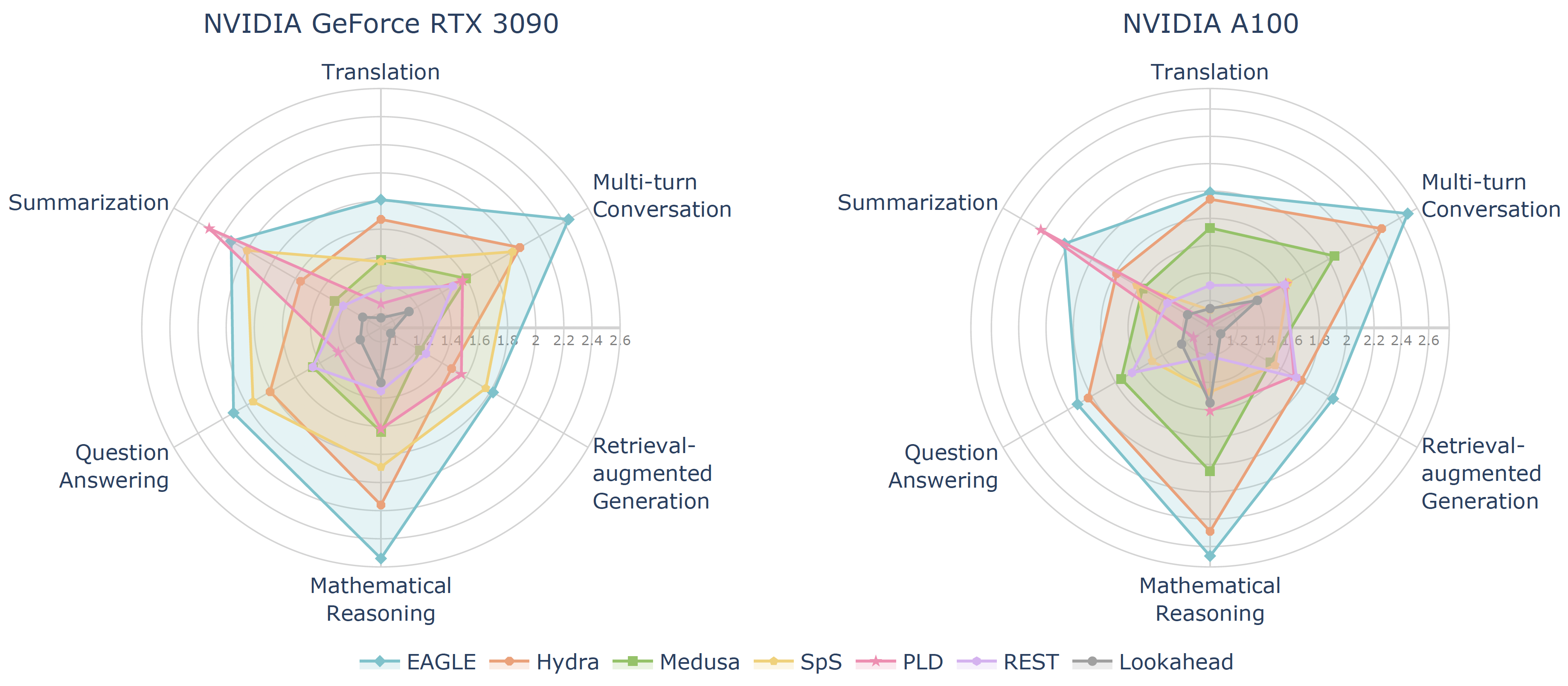

Spec-Bench is a comprehensive benchmark for assessing Speculative Decoding methods across diverse scenarios. Based on Spec-Bench, we aim to establish and maintain a unified evaluation platform for open-source Speculative Decoding approaches. This platform enables systematic assessment of existing methods on the same device and in the same testing environment, ensuring fair comparisons.

Currently, Spec-Bench supports the evaluation of the following open-source models:

2024.5.29: We have integrated SPACE into Spec-Bench.

2024.5.16: Our paper has been accepted by ACL 2024 Findings 🎉 !

2024.3.12: We now support statistics for the mean number of accepted tokens (#Mean accepted tokens).

2024.3.11: We have integrated Hydra into Spec-Bench, check it out!

```bash
conda create -n specbench python=3.9
conda activate specbench
cd Spec-Bench
pip install -r requirements.txt
```

Download the corresponding model weights (if required) and modify the checkpoint paths in `eval.sh`.

For REST, build and install DraftRetriever:

```bash
cd model/rest/DraftRetriever
# Install the Rust toolchain needed to build DraftRetriever
curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh
maturin build --release --strip -i python3.9  # produces a .whl file
pip3 install ./target/wheels/draftretriever-0.1.0-cp39-cp39-linux_x86_64.whl
```

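Then, from the Spec-Bench root directory, build the REST datastore (edit the paths in `datastore.sh` to match your setup first):

```bash
cd model/rest/datastore
./datastore.sh
```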

Select the command lines in `eval.sh` for the methods you want to evaluate and run the script. The results will be stored in `data/spec_bench/model_answer/`.

```bash
./eval.sh
```

Compute the speedup of a method relative to vanilla autoregressive decoding:

```bash
python evaluation/speed.py --file-path /your_own_path/eagle.jsonl --base-path /your_own_path/vicuna.jsonl
```
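As a rough illustration of what the speedup figure represents, the sketch below computes it as the ratio of pooled throughput (generated tokens per second of wall-clock time) between a method and the autoregressive baseline. This is a minimal sketch, not the logic of `evaluation/speed.py`: the `(new_tokens, wall_time)` record format and the `throughput`/`speedup` helpers are hypothetical, and the actual answer files may store timing data differently.

```python
from typing import Iterable, Tuple

# Hypothetical record: (number of newly generated tokens, wall-clock seconds)
Record = Tuple[int, float]


def throughput(records: Iterable[Record]) -> float:
    """Tokens per second, pooled over all responses (hypothetical format)."""
    total_tokens = 0
    total_time = 0.0
    for new_tokens, wall_time in records:
        total_tokens += new_tokens
        total_time += wall_time
    if total_time <= 0:
        raise ValueError("total wall time must be positive")
    return total_tokens / total_time


def speedup(method: Iterable[Record], baseline: Iterable[Record]) -> float:
    """Throughput of the method divided by throughput of the AR baseline."""
    return throughput(method) / throughput(baseline)


if __name__ == "__main__":
    # Toy numbers for illustration only, not real Spec-Bench measurements.
    baseline = [(200, 8.0), (100, 4.0)]  # 300 tokens in 12 s -> 25 tok/s
    method = [(200, 3.0), (100, 2.0)]    # 300 tokens in 5 s  -> 60 tok/s
    print(f"speedup: {speedup(method, baseline):.2f}x")  # speedup: 2.40x
```

Pooling tokens and time across all responses weights long responses more heavily than averaging per-response ratios would; check `evaluation/speed.py` for the convention it actually uses.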

Check whether the generated outputs are identical to those of vanilla autoregressive decoding:

```bash
python evaluation/equal.py --file-path /your_own_path/model_answer/ --jsonfile1 vicuna.jsonl --jsonfile2 eagle.jsonl
```
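Under greedy decoding, a lossless Speculative Decoding method should in principle reproduce the autoregressive output exactly, although floating-point differences between batched verification and token-by-token decoding can occasionally cause divergences. The sketch below measures how often two sets of answers match exactly. It is a hypothetical stand-in for `evaluation/equal.py`, assuming each JSONL line carries `question_id` and `output` fields; the real answer files may use a different schema.

```python
import json
from typing import Dict, Iterable


def load_outputs(lines: Iterable[str]) -> Dict[str, str]:
    """Map question id -> generated text from JSONL lines.

    The field names "question_id" and "output" are hypothetical; adjust them
    to whatever the files in data/spec_bench/model_answer/ actually contain.
    """
    outputs = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        outputs[str(record["question_id"])] = record["output"]
    return outputs


def match_rate(baseline: Dict[str, str], method: Dict[str, str]) -> float:
    """Fraction of shared question ids whose outputs are identical."""
    shared = baseline.keys() & method.keys()
    if not shared:
        raise ValueError("no question ids in common")
    same = sum(baseline[q] == method[q] for q in shared)
    return same / len(shared)


if __name__ == "__main__":
    # Toy answers for illustration only.
    vicuna = load_outputs(['{"question_id": 1, "output": "Paris."}',
                           '{"question_id": 2, "output": "42"}'])
    eagle = load_outputs(['{"question_id": 1, "output": "Paris."}',
                          '{"question_id": 2, "output": "41"}'])
    print(match_rate(vicuna, eagle))  # 0.5
```

In practice you would pass an open file handle to `load_outputs`, since iterating over a file yields its lines.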

We warmly welcome contributions and discussions related to Spec-Bench! If you have suggestions for improvements or ideas you'd like to discuss, please open an issue so that we can collaborate and discuss them in detail.

More methods are welcome! If you know of an open-source Speculative Decoding method that Spec-Bench does not yet include, please contribute it by submitting a pull request. This helps keep Spec-Bench a comprehensive and fair benchmarking platform for comparing existing methods. Please make sure your changes are well tested before submission.

This codebase is built on Medusa and EAGLE. We integrated the implementations of multiple open-source Speculative Decoding methods to enable unified evaluation.

If you find the resources in this repository useful, please cite our paper:

```bibtex
@misc{xia2024unlocking,
  title={Unlocking Efficiency in Large Language Model Inference: A Comprehensive Survey of Speculative Decoding},
  author={Heming Xia and Zhe Yang and Qingxiu Dong and Peiyi Wang and Yongqi Li and Tao Ge and Tianyu Liu and Wenjie Li and Zhifang Sui},
  year={2024},
  eprint={2401.07851},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```