🚧 pipegoose: Decentralized large-scale 4D parallelism multi-modal pre-training for 🤗 transformers in Mixture of Experts

We're building an end-to-end library for training multi-modal MoE models in a decentralized way, as proposed in the DiLoCo paper. The core papers we are replicating are:

- DiLoCo: Distributed Low-Communication Training of Language Models [link]

- Pipeline MoE: A Flexible MoE Implementation with Pipeline Parallelism [link]

- Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity [link]

- Flamingo: a Visual Language Model for Few-Shot Learning [link]

- Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism [link]

bloom-560m is supported. Support for hybrid 3D parallelism and the distributed optimizer for 🤗 transformers will be available in the upcoming weeks (it's basically done, but it doesn't support 🤗 transformers yet).

from torch.utils.data import DataLoader
+ from torch.utils.data.distributed import DistributedSampler
from torch.optim import Adam
from transformers import AutoModelForCausalLM, AutoTokenizer
from datasets import load_dataset
+ from pipegoose.distributed import ParallelContext, ParallelMode
+ from pipegoose.nn import DataParallel, TensorParallel
+ from pipegoose.optim import DistributedOptimizer

model = AutoModelForCausalLM.from_pretrained("bigscience/bloom-560m")
tokenizer = AutoTokenizer.from_pretrained("bigscience/bloom-560m")
tokenizer.pad_token = tokenizer.eos_token

BATCH_SIZE = 4
+ DATA_PARALLEL_SIZE = 2
+ parallel_context = ParallelContext.from_torch(
+     tensor_parallel_size=2,
+     data_parallel_size=2,
+     pipeline_parallel_size=1
+ )
+ model = TensorParallel(model, parallel_context).parallelize()
+ model = DataParallel(model, parallel_context).parallelize()
model.to("cuda")
+ device = next(model.parameters()).device

optim = Adam(model.parameters(), lr=1e-3)
+ optim = DistributedOptimizer(optim, parallel_context)

dataset = load_dataset("imdb", split="train")
+ dp_rank = parallel_context.get_local_rank(ParallelMode.DATA)
+ sampler = DistributedSampler(dataset, num_replicas=DATA_PARALLEL_SIZE, rank=dp_rank, seed=42)
+ dataloader = DataLoader(dataset, batch_size=BATCH_SIZE // DATA_PARALLEL_SIZE, shuffle=False, sampler=sampler)

for epoch in range(100):
+     sampler.set_epoch(epoch)

    for batch in dataloader:
        inputs = tokenizer(batch["text"], padding=True, truncation=True, max_length=1024, return_tensors="pt")
        inputs = {name: tensor.to(device) for name, tensor in inputs.items()}
        labels = inputs["input_ids"]

        outputs = model(**inputs, labels=labels)

        optim.zero_grad()
        outputs.loss.backward()
        optim.step()

Installation and try it out

You can install the package with the following commands:

git clone https://github.com/xrsrke/pipegoose.git

cd pipegoose && pip install -e .

Then try out a hybrid tensor and data parallelism training script (you must have at least 4 GPUs to try hybrid 2D parallelism):

cd pipegoose/examples

torchrun --standalone --nnodes=1 --nproc-per-node=4 hybrid_parallelism.py

We did a small-scale correctness test by comparing the validation losses of a parallelized transformer and a non-parallelized one, starting from identical checkpoints and training data. We will conduct rigorous large-scale convergence and weak scaling law benchmarks against Megatron and DeepSpeed in the near future if we manage to make it.

- Data Parallelism [link]

- Tensor Parallelism [link] (We've found a bug in convergence, and we are fixing it)

- Hybrid 2D Parallelism (TP+DP) [link]

- Distributed Optimizer ZeRO-1 Convergence: [sgd link] [adam link]
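A correctness test of this kind can be sketched roughly as follows. This is a minimal illustration, not the project's actual test code: the function name `losses_match`, the tolerance defaults, and the loss values are all hypothetical. It compares per-step validation losses logged by a reference (non-parallelized) run and a parallelized run that started from the same checkpoint and data, and reports whether they agree within a tolerance.

```python
import math
from typing import Sequence


def losses_match(
    reference: Sequence[float],
    parallel: Sequence[float],
    rel_tol: float = 1e-3,  # hypothetical tolerance; tune per model and dtype
    abs_tol: float = 1e-5,
) -> bool:
    """Return True if both runs logged the same number of losses
    and every pair of losses agrees within the given tolerances."""
    if len(reference) != len(parallel):
        return False
    return all(
        math.isclose(ref, par, rel_tol=rel_tol, abs_tol=abs_tol)
        for ref, par in zip(reference, parallel)
    )


# Made-up numbers for illustration only, not measured results.
reference_losses = [3.912, 3.541, 3.207]
parallel_losses = [3.9121, 3.5409, 3.2072]
diverged_losses = [3.912, 3.700, 3.650]

print(losses_match(reference_losses, parallel_losses))  # small numeric drift
print(losses_match(reference_losses, diverged_losses))  # clear divergence
```

Small differences between the two runs are expected because parallel reductions sum floating-point values in a different order, so an exact equality check would likely be too strict.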

Features

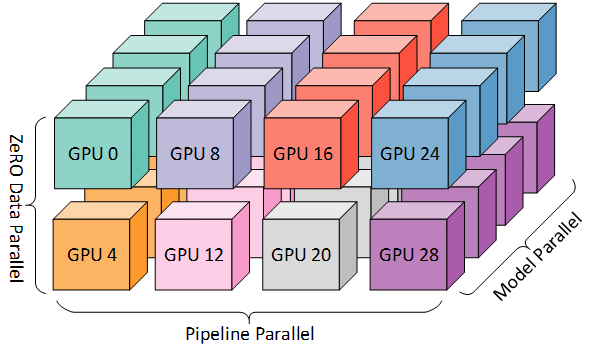

- End-to-end multi-modal training in 3D parallelism, including distributed CLIP

- Sequence parallelism and Mixture of Experts that work in 3D parallelism

- ZeRO-1: Distributed Optimizer

- Kernel fusion

- ...
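To make the ZeRO-1 item above more concrete: ZeRO stage 1 keeps full parameters and gradients on every data-parallel rank but shards the optimizer state, so each rank updates only its own share of the parameters. The sketch below shows one possible way to assign parameters to ranks, a greedy balance by element count. It is an illustration only; the function name `partition_params`, the parameter names, and the greedy strategy are assumptions, not pipegoose's implementation.

```python
from typing import Dict, List


def partition_params(param_sizes: Dict[str, int], num_ranks: int) -> List[List[str]]:
    """Assign each parameter to the currently least-loaded rank, largest first."""
    if num_ranks < 1:
        raise ValueError("num_ranks must be at least 1")
    shards: List[List[str]] = [[] for _ in range(num_ranks)]
    loads = [0] * num_ranks
    # Place large parameters first so the small ones can even out the loads.
    for name, size in sorted(param_sizes.items(), key=lambda kv: kv[1], reverse=True):
        rank = loads.index(min(loads))  # first rank with the smallest load
        shards[rank].append(name)
        loads[rank] += size
    return shards


# Hypothetical parameter names and element counts.
sizes = {"embed": 1000, "attn.qkv": 600, "attn.out": 200, "mlp.up": 800, "mlp.down": 800}
shards = partition_params(sizes, num_ranks=2)
for rank, names in enumerate(shards):
    print(rank, names, sum(sizes[n] for n in names))
```

Each rank would then hold optimizer state (for Adam, the two moment buffers) only for the parameters in its shard, and the updated parameters would be shared with the other ranks after the step.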

Appreciation

- Big thanks to 🤗 Hugging Face for sponsoring this project with GPUs for testing!

- The library's APIs are inspired by OSLO's and ColossalAI's APIs.

Citation

@software{pipegoose,
    title = {{pipegoose: Large-scale 4D parallelism pre-training for `transformers`}},
    author = {},
    url = {https://github.com/xrsrke/pipegoose},
    doi = {},
    month = {},
    year = {2024},
    version = {},
}