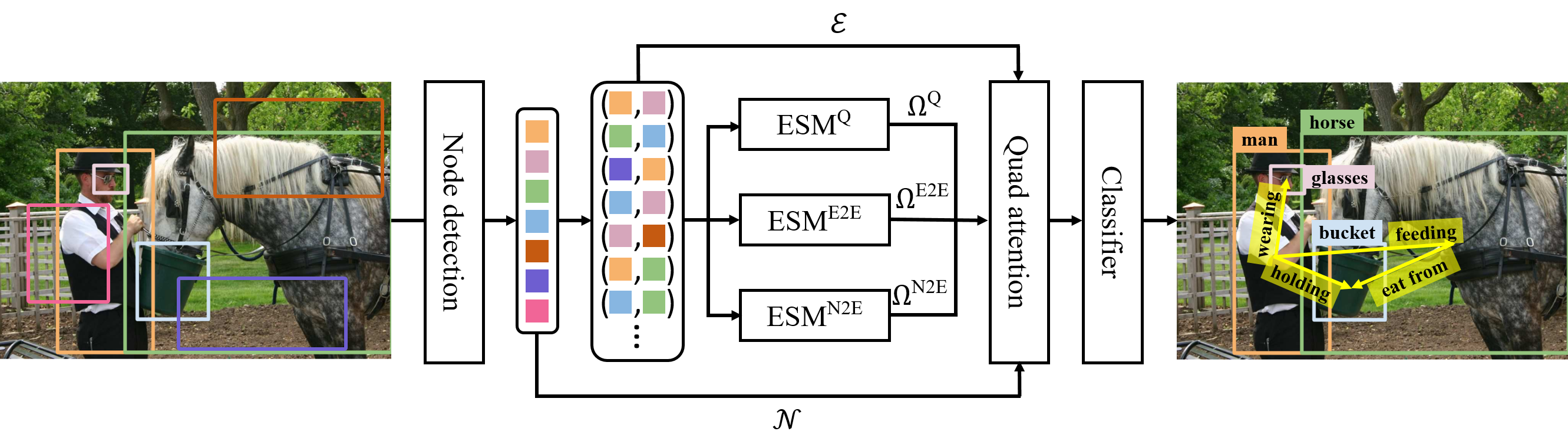

This repo is the official implementation of the CVPR 2023 paper: Devil's on the Edges: Selective Quad Attention for Scene Graph Generation.

Check INSTALL.md for installation instructions.

Check DATASET.md for dataset preprocessing instructions.

- You can download the pretrained Faster R-CNN we used in the paper:

- Put the checkpoint into the folder:

      mkdir -p checkpoints/detection/pretrained_faster_rcnn/
      # for VG
      mv /path/vg_faster_det.pth checkpoints/detection/pretrained_faster_rcnn/

Then set MODEL.PRETRAINED_DETECTOR_CKPT in the config file configs/e2e_relSQUAT_[vg, oiv6].yaml to the path of the corresponding pretrained Faster R-CNN weights, so that the detector weights are loaded correctly.
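Before editing the config, it can help to confirm that the checkpoint is actually where you expect it. The following is a minimal sketch, not part of the repository: `check_detector_ckpt` is a hypothetical helper, and the path simply mirrors the `mv` command above.

```shell
#!/bin/sh
# Hypothetical helper (not part of the repo): verify that the detector
# checkpoint exists before pointing MODEL.PRETRAINED_DETECTOR_CKPT at it.
check_detector_ckpt() {
    ckpt_path="$1"
    if [ -f "$ckpt_path" ]; then
        echo "found detector checkpoint: $ckpt_path"
    else
        echo "missing detector checkpoint: $ckpt_path" >&2
        return 1
    fi
}

# Path taken from the mv command above; adjust it for your own setup.
check_detector_ckpt "checkpoints/detection/pretrained_faster_rcnn/vg_faster_det.pth" \
    || echo "Fix the path before setting MODEL.PRETRAINED_DETECTOR_CKPT."
```

If the helper reports a missing file, correct the path first; the value written into the yaml should be the same path that passes this check.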

Follow the instructions below to train your own models. Training each SGG model takes 4 GPUs, and the results should be very close to those reported in the paper.

We provide a one-click script for training our SQUAT model in scripts/train.sh.

To reproduce our model, replace the --config-file option with the config file given below.

Alternatively, copy the following command to train:

    gpu_num=4 && python -m torch.distributed.launch --master_port 10028 --nproc_per_node=$gpu_num \
        tools/relation_train_net.py \
        --config-file "configs/e2e_relSQUAT_vg.yaml" \
        EXPERIMENT_NAME "SQUAT-3-3" \
        SOLVER.IMS_PER_BATCH $((3 * gpu_num)) \
        TEST.IMS_PER_BATCH $((gpu_num)) \
        SOLVER.VAL_PERIOD 1000 \
        SOLVER.CHECKPOINT_PERIOD 1000 \
        MODEL.ROI_RELATION_HEAD.PREDICTOR SquatPredictor \
        MODEL.ROI_RELATION_HEAD.SQUAT_MODULE.RHO 0.7 \
        MODEL.ROI_RELATION_HEAD.SQUAT_MODULE.BETA 0.7
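The two batch-size overrides in the command above are plain shell arithmetic on `gpu_num`. The sketch below evaluates them in isolation so you can see the values that reach the trainer; `train_batch` and `test_batch` are illustrative variable names, and `$((...))` is the POSIX arithmetic form (the older bash-only `$[...]` evaluates the same way).

```shell
#!/bin/sh
gpu_num=4
train_batch=$((3 * gpu_num))   # value passed as SOLVER.IMS_PER_BATCH
test_batch=$((gpu_num))        # value passed as TEST.IMS_PER_BATCH
echo "SOLVER.IMS_PER_BATCH ${train_batch}"
echo "TEST.IMS_PER_BATCH ${test_batch}"
```

With 4 GPUs this prints 12 and 4. Assuming the framework splits each batch evenly across processes, that corresponds to 3 training images and 1 test image per GPU, so changing `gpu_num` keeps the per-GPU load fixed while the total batch size scales.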

Similarly, we provide scripts/test.sh, which directly produces results from the checkpoints we provide.

Set archive_dir and model_name to the directory and file name of the trained model weights, and select the dataset name in DATASETS.TEST, to evaluate the model directly on the validation or test set.
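As a rough illustration of the two parameters, the sketch below joins a directory and a file name into a single checkpoint path. The values are hypothetical, and how scripts/test.sh actually consumes `archive_dir` and `model_name` is an assumption here; check the script itself for the exact usage.

```shell
#!/bin/sh
# Hypothetical values; substitute your own directory and file name.
archive_dir="checkpoints/my_experiment"
model_name="model_final.pth"

# Strip a trailing slash, if any, so the joined path has exactly one separator.
ckpt_path="${archive_dir%/}/${model_name}"
echo "checkpoint to evaluate: ${ckpt_path}"
```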

We provide the pre-trained model weights and the config files that were used to train each model. When evaluating the pre-trained models, use the RHO and BETA values listed in the tables.

| Task | RHO | BETA | mR@20 | mR@50 | mR@100 | R@20 | R@50 | R@100 | Link (Google Drive) |
|---|---|---|---|---|---|---|---|---|---|
| PredCls | 0.5 | 1.0 | 25.64 | 30.87 | 33.41 | 48.01 | 55.67 | 57.94 | Link |
| SGCls | 0.5 | 1.0 | 14.35 | 17.47 | 18.87 | 28.87 | 32.92 | 34.26 | Link |
| SGDet | 0.35 | 0.7 | 10.57 | 14.12 | 16.47 | 17.85 | 24.51 | 28.93 | Link |

| RHO | BETA | R@50 | rel | phr | final_score | Link (Google Drive) |
|---|---|---|---|---|---|---|
| 0.6 | 0.7 | 75.8 | 34.9 | 35.9 | 43.5 | Link |
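To avoid mixing up the per-model values, the table entries can be turned into command-line overrides. The sketch below is a hypothetical helper, not part of the repository: `squat_eval_opts` and the `OIv6` key are illustrative names, and treating the second table as the oiv6 model is an assumption based on its rel/phr/final_score columns. The option names are the ones used in the training command.

```shell
#!/bin/sh
# Hypothetical helper: print the RHO/BETA overrides for a pre-trained model.
squat_eval_opts() {
    case "$1" in
        PredCls|SGCls) rho=0.5;  beta=1.0 ;;
        SGDet)         rho=0.35; beta=0.7 ;;
        # Assumption: the second table (rel/phr/final_score) is the oiv6 model.
        OIv6)          rho=0.6;  beta=0.7 ;;
        *) echo "unknown task: $1" >&2; return 1 ;;
    esac
    echo "MODEL.ROI_RELATION_HEAD.SQUAT_MODULE.RHO ${rho}" \
         "MODEL.ROI_RELATION_HEAD.SQUAT_MODULE.BETA ${beta}"
}

squat_eval_opts SGDet
```

The printed pair can be appended to an evaluation command in the same key-value style as the other overrides.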

If you find this project helpful for your research, please consider citing our paper in your publications.

    @inproceedings{jung2023devil,
      title={Devil's on the Edges: Selective Quad Attention for Scene Graph Generation},
      author={Jung, Deunsol and Kim, Sanghyun and Kim, Won Hwa and Cho, Minsu},
      booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
      year={2023}
    }

This repository is developed on top of the scene graph benchmarking framework developed by SHTUPLUS.