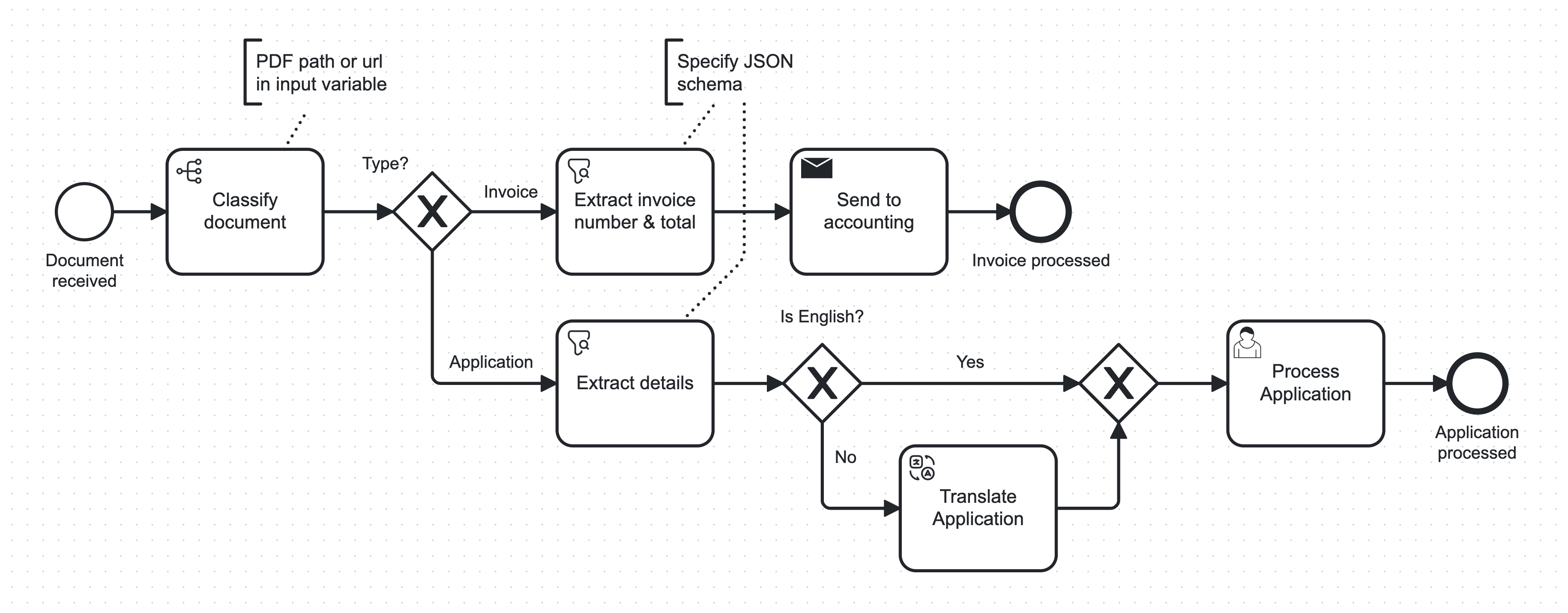

Boost automation in your Camunda BPMN processes using pre-configured, task-specific AI solutions - wrapped in easy-to-use connectors 🚀

The connectors automate activities in business processes that previously required user tasks or specialized AI models, including:

- 🔍 Information Extraction from unstructured data such as emails, letters, documents, etc.

- ⚖ Decision-Making based on process variables

- ✍🏼 Text Generation for emails, letters, etc.

- 🌍 Translation

Use API-based LLMs and AI services like OpenAI GPT-4, or go 100% local with open-access LLMs and AI models from HuggingFace Hub, local OCR with Tesseract, and local audio transcription with Whisper, all running CPU-only with no GPU required.

- Use 100% local LLMs with zero configuration!

- Anthropic Claude 3 model options (all support images/PDFs)

- New GPT-4 Turbo (supports images/PDFs)

- Use any OpenAI compatible LLM API

- Option to use small AI models running 100% locally on the CPU: no API key or GPU needed!

  - Curated models known to work well, just select one from the dropdown

  - Or use any compatible model from HuggingFace Hub

- Multimodal input:

  - Audio (voice messages, call recordings, ...) using local or API-based transcription

  - Images / Documents (document scans, PDFs, ...) using local or API-based OCR or multimodal AI models

- Use files from Amazon S3 or Azure Blob Storage

- Logging & Tracing support with Langfuse

- Higher quality local OCR

Launch everything you need with a single command (using Camunda Cloud or an automatically started local cluster):

```bash
bash <(curl -s https://raw.githubusercontent.com/holunda-io/bpm-ai-connectors-camunda-8/main/wizard.sh)
```

On Windows, use WSL2.

The wizard guides you through your preferences, creates an `.env` file, and downloads and starts the `docker-compose.yml`.

After starting the connector workers in their runtime, you also need to make the connectors known to the Modeler so that you can model processes with them:

- Upload the element templates from `/bpmn/.camunda/element-templates` to your project in Camunda Cloud Modeler

  - Click `publish` on each one

- Or, if you're working locally:

  - Place them in a `.camunda/element-templates` folder next to your .bpmn file

  - Or add them to the `resources/element-templates` directory of your Modeler (details).

  - Or let the wizard copy them automatically, if your Modeler is found in a typical location
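If you prefer not to rely on the wizard, the local option of placing the templates next to a `.bpmn` file can be scripted. The following is a minimal sketch, not part of the project: `install_templates` is a hypothetical helper, and it assumes you run it from a checkout of this repository (so the templates sit under `bpmn/.camunda/element-templates`) and that the templates are `.json` files.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of the project): copies the connector element
# templates into a .camunda/element-templates folder next to a .bpmn file.
install_templates() {
  local src_dir="$1"    # assumed: bpmn/.camunda/element-templates in a repo checkout
  local bpmn_file="$2"  # the process model that should use the connectors
  local target_dir
  target_dir="$(dirname "$bpmn_file")/.camunda/element-templates"

  # Element templates are JSON files; fail early if none are found.
  local templates=("$src_dir"/*.json)
  if [[ ! -e "${templates[0]}" ]]; then
    echo "no element templates found in $src_dir" >&2
    return 1
  fi

  mkdir -p "$target_dir" || return 1
  cp "${templates[@]}" "$target_dir"/ || return 1
  # Print the folder the templates were copied to.
  echo "$target_dir"
}
```

For example, `install_templates bpmn/.camunda/element-templates ~/processes/order.bpmn` would copy the templates next to a (hypothetical) `order.bpmn` and print the target folder.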

To set things up manually instead, create an `.env` file (use `env.sample` as a template) and fill in your cluster information and your OpenAI API key:

```bash
OPENAI_API_KEY=<put your key here>

ZEEBE_CLIENT_CLOUD_CLUSTER_ID=<cluster-id>
ZEEBE_CLIENT_CLOUD_CLIENT_ID=<client-id>
ZEEBE_CLIENT_CLOUD_CLIENT_SECRET=<client-secret>
ZEEBE_CLIENT_CLOUD_REGION=<cluster-region>
# OR
ZEEBE_CLIENT_BROKER_GATEWAY_ADDRESS=zeebe:26500
```
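Before launching, it can help to check that the `.env` file actually configures one of the two connection modes. This is a minimal sketch under stated assumptions: `check_env` and its helpers are hypothetical, they rely only on the variable names shown above, and they check for non-placeholder values, not whether the credentials work.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of the project): reports whether an .env file
# configures Camunda Cloud or a self-managed Zeebe gateway.

# Prints the value of KEY from FILE (last assignment wins); comment lines never match.
env_value() {
  local file="$1" key="$2"
  grep -E "^${key}=" "$file" | tail -n 1 | cut -d= -f2-
}

# A value counts as set if it is non-empty and not a <placeholder>.
is_set() {
  [[ -n "$1" && "$1" != "<"*">" ]]
}

check_env() {
  local file="$1" key cloud=1
  # This helper checks the four cloud variables first.
  for key in ZEEBE_CLIENT_CLOUD_CLUSTER_ID ZEEBE_CLIENT_CLOUD_CLIENT_ID \
             ZEEBE_CLIENT_CLOUD_CLIENT_SECRET ZEEBE_CLIENT_CLOUD_REGION; do
    is_set "$(env_value "$file" "$key")" || cloud=0
  done
  if (( cloud )); then
    echo "cloud"
  elif is_set "$(env_value "$file" ZEEBE_CLIENT_BROKER_GATEWAY_ADDRESS)"; then
    echo "self-managed"
  else
    echo "no Zeebe connection settings found in $file" >&2
    return 1
  fi
}
```

Running `check_env .env` prints `cloud` or `self-managed`, or fails while the template placeholders are still in place.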

Create `data` and `.cache` directories for the connector volume:

```bash
mkdir ./data ./.cache
```

Then launch the connector runtime with a local Zeebe cluster:

```bash
docker compose --profile platform up -d
```

For Camunda Cloud, omit `--profile platform`.

To use the inference extension container, which adds local AI model inference for the decide, extract and translate connectors as well as local OCR, also enable the `inference` profile:

```bash
docker compose --profile inference --profile platform up -d
```
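The profile combinations described above can be captured in a small helper. This is a sketch, not part of the project: `compose_cmd` is a hypothetical function that only prints the `docker compose` command for a given setup, so you can review it before running it from the directory that holds `docker-compose.yml` and `.env`.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of the project): prints the docker compose
# command for the setups described above.
#   $1: "local" adds the platform profile (local Zeebe cluster), "cloud" omits it
#   $2: "yes" adds the inference profile (local models and OCR), default "no"
compose_cmd() {
  local target="$1" inference="${2:-no}"
  case "$target" in
    local|cloud) ;;
    *) echo "usage: compose_cmd local|cloud [yes|no]" >&2; return 1 ;;
  esac

  local args=(docker compose)
  if [[ "$inference" == "yes" ]]; then
    args+=(--profile inference)
  fi
  if [[ "$target" == "local" ]]; then
    args+=(--profile platform)
  fi
  args+=(up -d)
  echo "${args[*]}"
}

# Example: create the volume directories, review the command, then run it.
#   mkdir -p ./data ./.cache
#   compose_cmd local yes
#   $(compose_cmd local yes)
```

For example, `compose_cmd local yes` prints the same command as above, while `compose_cmd cloud` prints `docker compose up -d`.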

Two types of Docker images are available on Docker Hub:

- The main image, suitable for users who only need the Anthropic/OpenAI and Azure/Amazon APIs (and other API-based services added in the future)

- An optional inference image that contains all dependencies to run LLMs and other AI models locally on the CPU

Learn how to use the connectors effectively in your processes, run fully local AI, work with input modalities such as PDFs, images, or audio files, and more.

- Getting Started

- Connectors

- Use Local LLMs

- Use Local Specialized Models

- Use Images & Audio

- Example Usecases & HowTos

Our connectors support logging traces of all task runs to Langfuse.

This allows for easy debugging, latency and cost monitoring, task performance analysis, and even curation and export of datasets from your past runs.

To configure tracing, add your keys to the .env file:

```bash
LANGFUSE_SECRET_KEY=<put your secret key here>
LANGFUSE_PUBLIC_KEY=<put your public key here>
# only if self-hosted:
#LANGFUSE_HOST=host:port
```

Read more here.
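Adding or rotating the Langfuse keys can be scripted so that repeated runs don't leave duplicate entries. This is a minimal sketch, assuming a simple `KEY=value` line format; `set_env_var` and `configure_langfuse` are hypothetical helpers, not part of the project.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of the project): sets KEY=VALUE in an .env
# file, replacing an existing assignment or appending a new one.
set_env_var() {
  local file="$1" key="$2" value="$3" tmp
  tmp="$(mktemp)" || return 1
  # Drop any existing assignment of KEY (commented-out lines are kept) ...
  grep -v -E "^${key}=" "$file" > "$tmp" || true
  # ... then append the new value.
  printf '%s=%s\n' "$key" "$value" >> "$tmp"
  mv "$tmp" "$file"
}

# Adds the Langfuse keys; LANGFUSE_HOST is written only when a host is given
# (i.e. for a self-hosted Langfuse instance).
configure_langfuse() {
  local file="$1" secret="$2" public="$3" host="${4:-}"
  set_env_var "$file" LANGFUSE_SECRET_KEY "$secret"
  set_env_var "$file" LANGFUSE_PUBLIC_KEY "$public"
  if [[ -n "$host" ]]; then
    set_env_var "$file" LANGFUSE_HOST "$host"
  fi
}
```

For example, `configure_langfuse .env <secret-key> <public-key>` updates the two keys in place, and passing a fourth `host:port` argument also sets `LANGFUSE_HOST`.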

This project is developed under