This is a PyTorch implementation of our technical report (arXiv).

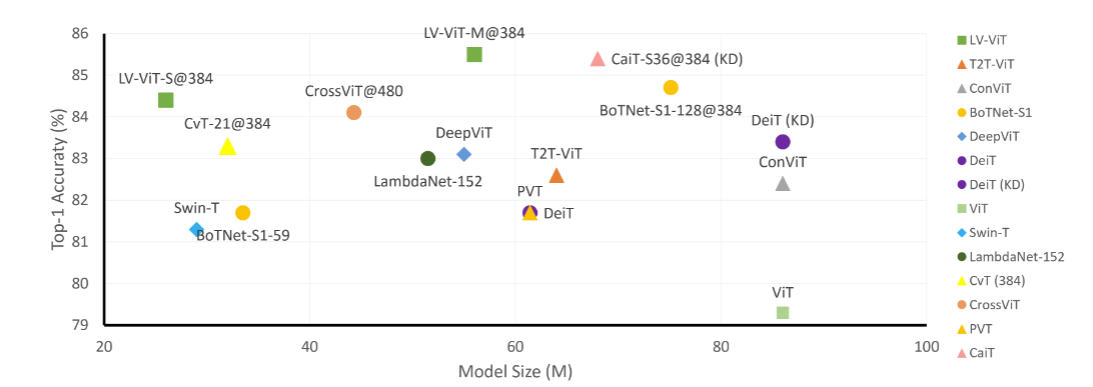

Comparison between the proposed LV-ViT and other recent works based on transformers. Note that we only show models whose model sizes are under 100M.

Our code is based on pytorch-image-models by Ross Wightman.

| Model | Layers | Dim | Image resolution | Params | Top-1 (%) | Download |
|---|---|---|---|---|---|---|
| LV-ViT-S | 16 | 384 | 224 | 26.15M | 83.3 | link |
| LV-ViT-S | 16 | 384 | 384 | 26.30M | 84.4 | link |
| LV-ViT-M | 20 | 512 | 224 | 55.83M | 84.0 | link |
| LV-ViT-M | 20 | 512 | 384 | 56.03M | 85.4 | link |
| LV-ViT-L | 24 | 768 | 448 | 150.47M | 86.2 | link |

Requirements: torch>=1.4.0, torchvision>=0.5.0, pyyaml, timm==0.4.5
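
Before running anything, it can help to confirm that the installed packages meet the version constraints listed above. The following is a minimal sketch using only the standard library; the helper names (`parse_version`, `satisfies`, `check_requirements`) are hypothetical and not part of this repo, and the version parser is deliberately simple (it compares only the first three numeric components).

```python
from importlib import metadata

# Constraints copied from the requirements line above.
# (package, operator, version); operator None means "any version".
REQUIREMENTS = [
    ("torch", ">=", "1.4.0"),
    ("torchvision", ">=", "0.5.0"),
    ("pyyaml", None, None),
    ("timm", "==", "0.4.5"),
]


def parse_version(text):
    """Turn '1.13.1+cu117' into (1, 13, 1), ignoring local and pre-release suffixes."""
    core = text.split("+")[0]
    parts = []
    for piece in core.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def satisfies(installed, op, required):
    """Check one constraint; only '>=' and '==' are needed for this list."""
    if op is None:
        return True
    a, b = parse_version(installed), parse_version(required)
    return a >= b if op == ">=" else a == b


def check_requirements(requirements=REQUIREMENTS):
    """Return a list of human-readable problems; empty when everything is met."""
    problems = []
    for name, op, required in requirements:
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            problems.append(f"{name} is not installed")
            continue
        if not satisfies(installed, op, required):
            problems.append(f"{name} {installed} does not satisfy {op}{required}")
    return problems


if __name__ == "__main__":
    for line in check_requirements() or ["all requirements satisfied"]:
        print(line)
```

Note that `timm` is pinned to an exact version, so a newer `timm` will be reported as a mismatch by this check.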

Data preparation: organize ImageNet with the following folder structure. You can extract ImageNet with this script.

    │imagenet/
    ├──train/
    │  ├── n01440764
    │  │   ├── n01440764_10026.JPEG
    │  │   ├── n01440764_10027.JPEG
    │  │   ├── ......
    │  ├── ......
    ├──val/
    │  ├── n01440764
    │  │   ├── ILSVRC2012_val_00000293.JPEG
    │  │   ├── ILSVRC2012_val_00002138.JPEG
    │  │   ├── ......
    │  ├── ......
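
A common mistake is leaving the validation images in a single flat folder instead of one folder per class. The sketch below checks a dataset root against the layout shown above using only the standard library. The function names are hypothetical helpers, not part of this repo, and the check assumes images use the `.JPEG` extension as in the listing.

```python
from pathlib import Path


def summarize_split(split_dir):
    """Map each class folder name to the number of .JPEG files it contains."""
    counts = {}
    for class_dir in sorted(Path(split_dir).iterdir()):
        if class_dir.is_dir():
            counts[class_dir.name] = sum(
                1 for f in class_dir.iterdir() if f.suffix.upper() == ".JPEG"
            )
    return counts


def check_layout(root):
    """Return a list of problems found under root/train and root/val."""
    root = Path(root)
    problems = []
    summaries = {}
    for split in ("train", "val"):
        split_dir = root / split
        if not split_dir.is_dir():
            problems.append(f"missing directory: {split_dir}")
            continue
        counts = summarize_split(split_dir)
        summaries[split] = counts
        if not counts:
            problems.append(f"{split}/ has no class folders (images may be flat)")
        for name, n in counts.items():
            if n == 0:
                problems.append(f"{split}/{name} contains no .JPEG files")
    # Both splits should expose the same set of class folders (e.g. n01440764).
    if len(summaries) == 2 and set(summaries["train"]) != set(summaries["val"]):
        problems.append("train/ and val/ contain different class folders")
    return problems


if __name__ == "__main__":
    import sys

    if len(sys.argv) == 2:
        for line in check_layout(sys.argv[1]) or ["layout looks consistent"]:
            print(line)
```

Run it as `python check_layout.py /path/to/imagenet` (the file name is arbitrary); an empty problem list means both splits exist and share the same class folders.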

To evaluate a model, replace DATA_DIR (`/path/to/imagenet/val`) with the path to your ImageNet validation set and MODEL_DIR (`/path/to/checkpoint`) with the checkpoint path:

CUDA_VISIBLE_DEVICES=0 bash eval.sh /path/to/imagenet/val /path/to/checkpoint
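
The Top-1 numbers in the table are the percentage of validation images whose highest-scoring class matches the ground-truth label. The contents of `eval.sh` are not shown here, so the following is only a sketch of the metric itself in plain Python, not the script's actual code; `top1_accuracy` is a hypothetical helper.

```python
def top1_accuracy(logits, labels):
    """Percentage of samples whose arg-max class equals the label.

    logits: list of per-sample score lists (one score per class).
    labels: list of integer class indices, same length as logits.
    """
    if len(logits) != len(labels):
        raise ValueError("logits and labels must have the same length")
    if not labels:
        raise ValueError("need at least one sample")
    correct = 0
    for scores, label in zip(logits, labels):
        # Index of the highest score is the predicted class.
        predicted = max(range(len(scores)), key=scores.__getitem__)
        correct += int(predicted == label)
    return 100.0 * correct / len(labels)


if __name__ == "__main__":
    example_logits = [
        [0.1, 2.0, -1.0],  # predicts class 1
        [1.5, 0.2, 0.3],   # predicts class 0
        [0.0, 0.1, 0.9],   # predicts class 2
        [0.4, 0.3, 0.2],   # predicts class 0
    ]
    example_labels = [1, 0, 2, 1]
    print(top1_accuracy(example_logits, example_labels))  # 75.0
```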

We provide the dense label map generated by NFNet-F6 here. Because NFNet-F6 is trained on ImageNet data only, no extra training data is involved.
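
This README does not spell out how the dense label map enters training. As a rough illustration, and assuming an objective of the form "image-level cross-entropy on the class token plus a weighted average of per-token cross-entropies against the dense labels", the loss can be sketched in plain Python as below. The function names, the soft-target format, and the weight `beta=0.5` are assumptions made for this example and are not taken from the repo's code.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over a list of scores."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def soft_cross_entropy(logits, target):
    """Cross-entropy between a target distribution and softmax(logits)."""
    return -sum(t * lp for t, lp in zip(target, log_softmax(logits)))


def token_labeling_loss(cls_logits, cls_target, token_logits, token_targets, beta=0.5):
    """Class-token loss plus beta times the mean per-token loss.

    cls_logits / cls_target: scores and target distribution for the whole image.
    token_logits / token_targets: one score list and one dense-label
    distribution per patch token (assumed format for this sketch).
    """
    cls_loss = soft_cross_entropy(cls_logits, cls_target)
    token_loss = sum(
        soft_cross_entropy(l, t) for l, t in zip(token_logits, token_targets)
    ) / len(token_logits)
    return cls_loss + beta * token_loss


if __name__ == "__main__":
    # Two classes, two patch tokens; uniform predictions everywhere.
    loss = token_labeling_loss(
        cls_logits=[0.0, 0.0],
        cls_target=[1.0, 0.0],
        token_logits=[[0.0, 0.0], [0.0, 0.0]],
        token_targets=[[1.0, 0.0], [0.0, 1.0]],
    )
    print(loss)
```

With uniform predictions over two classes, every cross-entropy term equals ln 2, so the example evaluates to 1.5 · ln 2.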

Coming soon

If you use this repo or find it useful, please consider citing:

    @misc{jiang2021token,
          title={Token Labeling: Training a 85.4% Top-1 Accuracy Vision Transformer with 56M Parameters on ImageNet},
          author={Zihang Jiang and Qibin Hou and Li Yuan and Daquan Zhou and Xiaojie Jin and Anran Wang and Jiashi Feng},
          year={2021},
          eprint={2104.10858},
          archivePrefix={arXiv},
          primaryClass={cs.CV}
    }