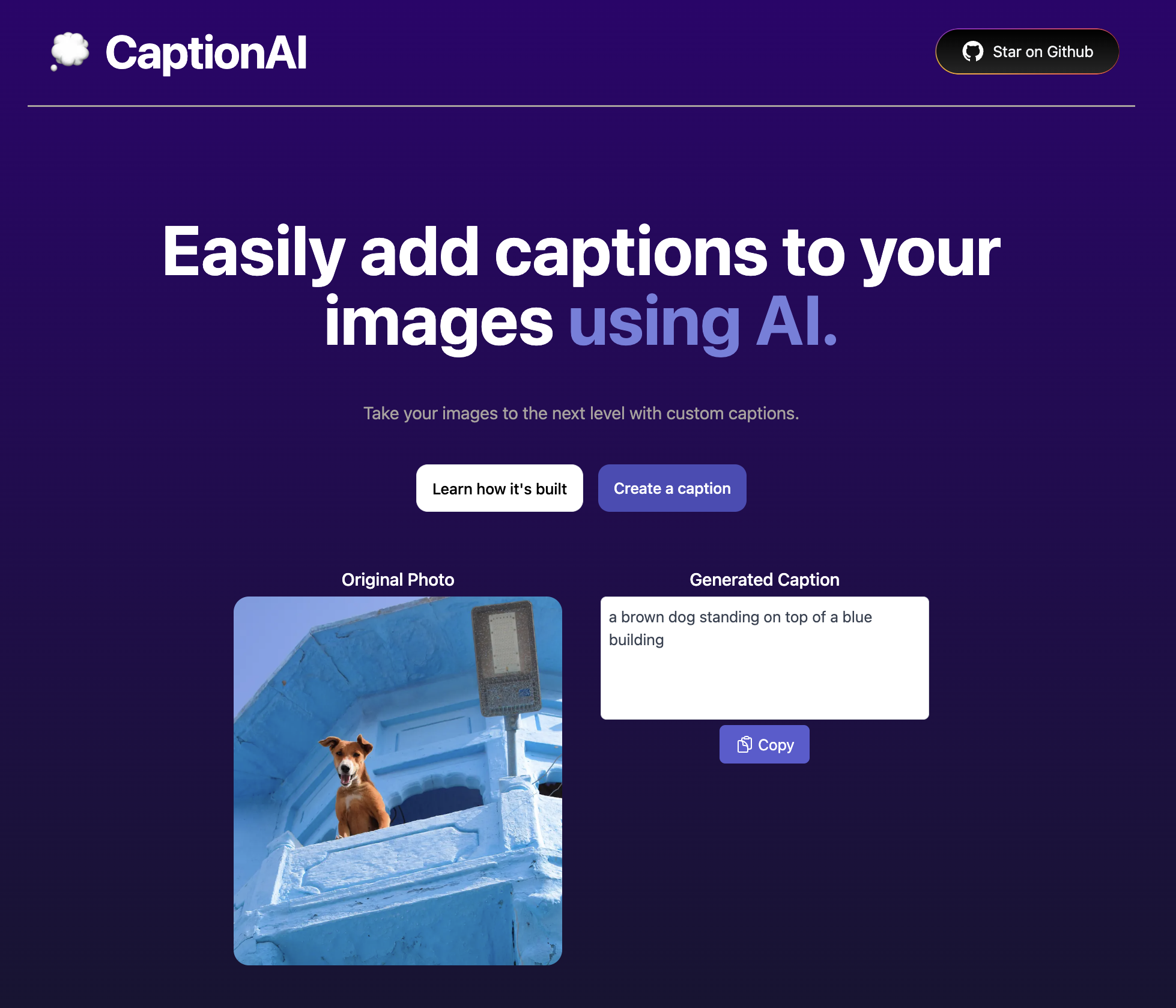

CaptionAI

This project creates captions for photos using AI.

How it works

This project was developed using this template.

It uses BLIP, an ML model from Salesforce hosted on Replicate, to convert images into text. You can upload any photo; the application sends it to the model through a Next.js API route and returns the caption.
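The flow above can be sketched roughly as follows. This is a minimal illustration, not the project's actual route code: the function names, the `modelVersion` parameter, and the polling limits are hypothetical, and the `image` input name is an assumption about the BLIP model's schema. It relies on Replicate's HTTP predictions endpoint, which creates a prediction and then exposes a URL to poll until the prediction finishes.

```typescript
// Minimal fetch-like type so the sketch can be exercised with a fake in tests.
type FetchLike = (
  url: string,
  init?: { method?: string; headers?: Record<string, string>; body?: string }
) => Promise<{ json(): Promise<any> }>;

// Subset of the prediction object this sketch reads.
interface Prediction {
  status: string;
  output?: unknown;
  error?: unknown;
  urls?: { get?: string };
}

// Builds the request that creates a prediction on Replicate.
// modelVersion is a placeholder: copy the real version id from the model page.
function buildPredictionRequest(apiToken: string, modelVersion: string, imageUrl: string) {
  return {
    url: "https://api.replicate.com/v1/predictions",
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiToken}`,
        "Content-Type": "application/json",
      },
      // Assumption: the model takes the picture as an input named "image".
      body: JSON.stringify({ version: modelVersion, input: { image: imageUrl } }),
    },
  };
}

// Creates a prediction, then polls its status URL until it succeeds or fails.
async function captionImage(
  fetchFn: FetchLike,
  apiToken: string,
  modelVersion: string,
  imageUrl: string,
  pollMs = 1000,
  maxPolls = 60
): Promise<string> {
  const req = buildPredictionRequest(apiToken, modelVersion, imageUrl);
  let prediction: Prediction = await (await fetchFn(req.url, req.init)).json();

  for (let i = 0; i < maxPolls; i++) {
    if (prediction.status === "succeeded") return String(prediction.output);
    if (prediction.status === "failed" || prediction.status === "canceled") {
      throw new Error(`Prediction ${prediction.status}: ${String(prediction.error)}`);
    }
    const pollUrl = prediction.urls?.get;
    if (!pollUrl) throw new Error("Prediction response has no polling URL");
    await new Promise<void>((resolve) => setTimeout(resolve, pollMs));
    prediction = await (
      await fetchFn(pollUrl, { headers: { Authorization: `Bearer ${apiToken}` } })
    ).json();
  }
  throw new Error("Timed out waiting for the caption");
}
```

Inside a Next.js API route, a handler along these lines would call `captionImage(fetch, token, version, imageUrl)` and send the result back as JSON. Passing the fetch function in as an argument is only a convenience for testing the sketch without network access.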

Running Locally

Cloning the repository to the local machine.

git clone

Creating an account on Replicate to get an API key.

- Go to Replicate to make an account.

- Click on your profile picture in the top right corner, and click on "Dashboard".

- Click on "Account" in the navbar. Here you can find your API token; copy it.

Storing the API key in a .env file.

Create a .env file in the root directory of the project and store your API key in it, as shown in the .example.env file.

If you'd also like to enable rate limiting, create an account on Upstash, create a Redis database, and populate the two corresponding environment variables in .env as well. If you don't want rate limiting, you don't need to make any changes.
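To illustrate how the optional rate-limiting variables might be handled, here is a small sketch of a config loader. The names are assumptions: `REPLICATE_API_KEY` is a hypothetical variable name, and `UPSTASH_REDIS_REST_URL` / `UPSTASH_REDIS_REST_TOKEN` follow the naming Upstash's Redis SDK conventionally reads. Check the .example.env file for the names this project actually uses.

```typescript
interface AppConfig {
  replicateApiKey: string;
  // Present only when both Upstash variables are set.
  rateLimit?: { redisUrl: string; redisToken: string };
}

// Reads settings from an environment object such as process.env.
// The variable names here are assumptions, not taken from the project.
function loadConfig(env: Record<string, string | undefined>): AppConfig {
  const replicateApiKey = env["REPLICATE_API_KEY"];
  if (!replicateApiKey) {
    throw new Error("REPLICATE_API_KEY is not set; add it to your .env file");
  }

  const redisUrl = env["UPSTASH_REDIS_REST_URL"];
  const redisToken = env["UPSTASH_REDIS_REST_TOKEN"];

  // Rate limiting is switched on only if both Redis values are provided.
  if (redisUrl && redisToken) {
    return { replicateApiKey, rateLimit: { redisUrl, redisToken } };
  }
  return { replicateApiKey };
}
```

With this shape, calling code can test `config.rateLimit` and skip the rate-limit check entirely when it is undefined, which matches the behavior described above of needing no changes when rate limiting is not wanted.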

Installing the dependencies.

npm install

Running the application.

Then, run the application in the command line and it will be available at http://localhost:3000.

npm run dev

Deploy

When deploying on Vercel, also include the environment variables.

Powered by

This example is powered by the following 4 services:

- Replicate (AI API)

- Upload (storage)

- Upstash Redis (Rate Limiting)

- Vercel (hosting, serverless functions, analytics)