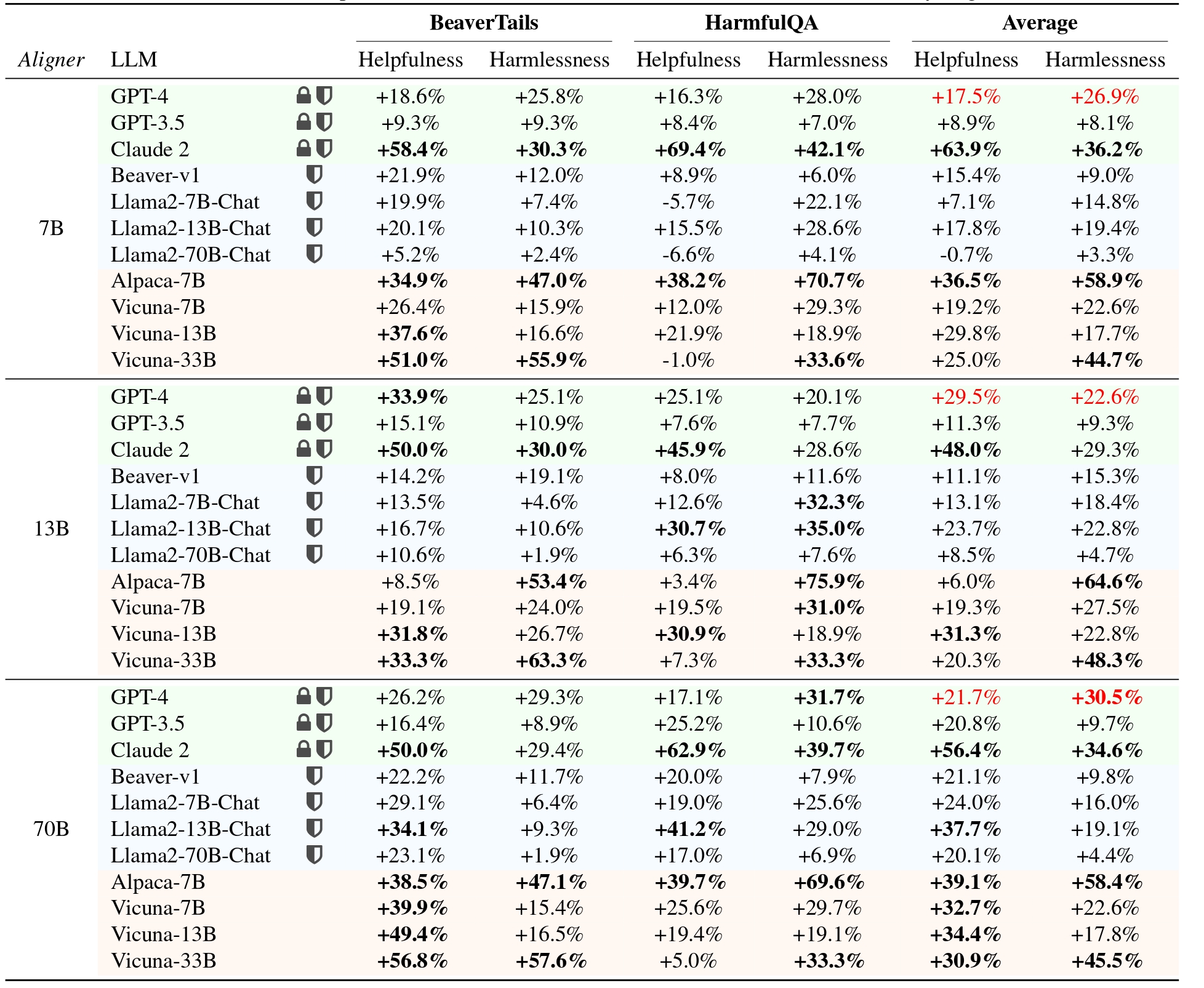

Efforts to align Large Language Models (LLMs) are mainly conducted via Reinforcement Learning from Human Feedback (RLHF) methods. However, RLHF faces major challenges, including training reward models and actor-critic engineering, and, importantly, it requires access to LLM parameters. Here we introduce Aligner, a new, efficient alignment paradigm that bypasses the whole RLHF process by learning the correctional residuals between aligned and unaligned answers. Aligner offers several key advantages. First, it is an autoregressive seq2seq model trained on a query-answer-correction dataset via supervised learning, which offers a parameter-efficient alignment solution with minimal resources. Second, Aligner facilitates weak-to-strong generalization: finetuning large pretrained models with Aligner's supervisory signals yields a strong performance boost. Third, Aligner functions as a model-agnostic plug-and-play module that can be applied directly to different open-source and API-based models. Remarkably, Aligner-7B improves 11 different LLMs by 21.9% in helpfulness and 23.8% in harmlessness on average (GPT-4 by 17.5% and 26.9%). When finetuning (strong) Llama2-70B with (weak) Aligner-13B's supervision, we can improve Llama2 by 8.2% in helpfulness and 61.6% in harmlessness. See our dataset and code at https://aligner2024.github.io.

- Aligner: Achieving Efficient Alignment through Weak-to-Strong Correction

- Installation

- Training

- Dataset & Models

- Acknowledgment

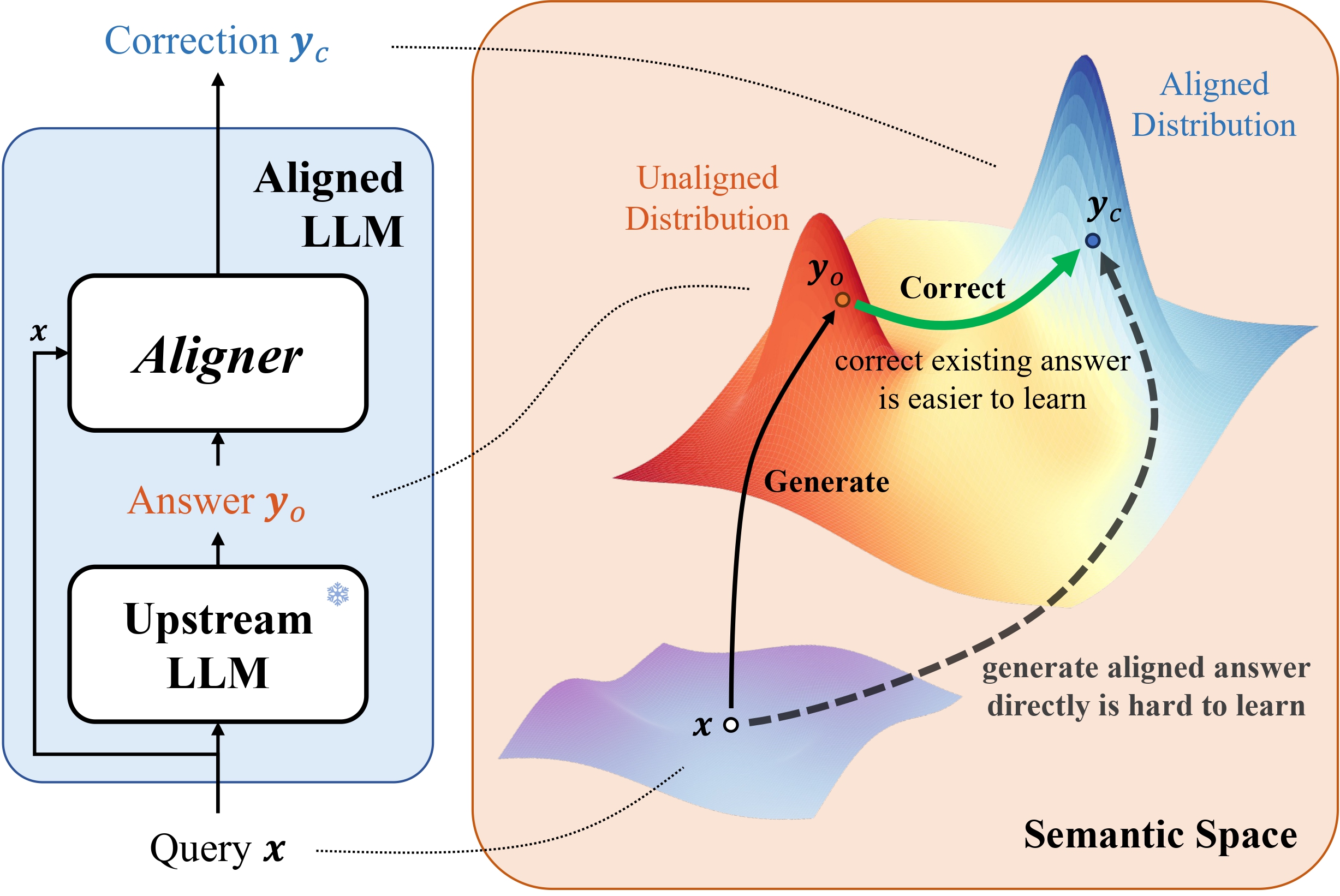

The Aligner, a plug-and-play model, is stacked on top of an upstream LLM (aligned or unaligned). It redistributes the initial answers from the upstream model into more helpful and harmless answers, thus aligning the composed LLM's responses with human intentions. Learning a direct mapping from queries to aligned answers is challenging; correcting answers based on the upstream model's output is a more tractable learning task.
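The stacking described above can be pictured as plain function composition. The following is a minimal sketch, not the repository's inference code: `TextModel`, `build_correction_prompt`, `aligned_answer`, and the prompt wording are all hypothetical names and text chosen for illustration, and both models are treated as opaque string-to-string functions.

```python
from typing import Callable

# Hypothetical type: any function that maps an input string to generated text.
TextModel = Callable[[str], str]


def build_correction_prompt(query: str, answer: str) -> str:
    """Pack the query and the upstream answer into a single Aligner input.

    The wording is an illustrative assumption, not the template used by
    the repository.
    """
    return (
        "Edit the following answer to make it more helpful and harmless.\n"
        f"Question: {query}\n"
        f"Answer: {answer}\n"
        "Correction:"
    )


def aligned_answer(query: str, upstream: TextModel, aligner: TextModel) -> str:
    """Run the upstream model first, then let the Aligner correct its answer."""
    initial = upstream(query)  # upstream LLM, aligned or unaligned
    return aligner(build_correction_prompt(query, initial))


# Stub models standing in for real LLM calls (local or API-based).
def stub_upstream(query: str) -> str:
    return "draft answer to: " + query


def stub_aligner(prompt: str) -> str:
    return "[corrected] " + prompt.splitlines()[2]


result = aligned_answer("example query", stub_upstream, stub_aligner)
```

Because the Aligner only sees text, the upstream model can be swapped for any open-source or API-based model without touching its parameters, which is the plug-and-play property the paragraph describes.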

Aligner achieves significant improvements in all settings. Every assessment in this table integrates a model with an Aligner and compares the result against the original model to quantify the percentage increase in helpfulness and harmlessness. The background color represents the type of target language model: green represents API-based models, orange represents open-source models without safety alignment, and blue represents safety-aligned open-source models.

For more details, please refer to our website (https://aligner2024.github.io).

Clone the source code from GitHub:

    git clone https://github.com/Aligner2024/aligner.git
    cd aligner

Native Runner: Set up a conda environment using conda / mamba:

    conda env create --file conda-recipe.yaml  # or `mamba env create --file conda-recipe.yaml`

aligner supports a complete pipeline for Aligner residual correction training.

- Follow the instructions in the Installation section to set up the training environment properly.

      conda activate aligner
      export WANDB_API_KEY="..."  # your W&B API key here

- Supervised Fine-Tuning (SFT)

      bash scripts/sft-correction.sh \
          --train_datasets <your-correction-dataset> \
          --model_name_or_path <your-model-name-or-checkpoint-path> \
          --output_dir output/sft

NOTE:

- You may need to update some of the parameters in the script according to your machine setup, such as the number of GPUs for training, the training batch size, etc.

- Your dataset format should be consistent with `aligner/template-dataset.json`.
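One way to check the second note before launching training is to compare the keys of your records against the template. This is a rough sketch under a stated assumption: it supposes that both the template and your dataset are JSON arrays of objects, which has not been verified against the repository. The helper names `load_records` and `find_mismatched_records` are hypothetical, and no field names are assumed; the expected keys are read from the template's first record.

```python
import json
from pathlib import Path


def load_records(path):
    """Load a file assumed to contain a JSON array of objects."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    if not isinstance(data, list) or not all(isinstance(r, dict) for r in data):
        raise ValueError(f"{path}: expected a JSON list of objects")
    return data


def find_mismatched_records(template_path, dataset_path):
    """Return (index, missing_keys, extra_keys) for records that differ from the template."""
    template = load_records(template_path)
    if not template:
        raise ValueError(f"{template_path}: template contains no records")
    expected = set(template[0])  # keys of the first template record

    problems = []
    for index, record in enumerate(load_records(dataset_path)):
        keys = set(record)
        if keys != expected:
            problems.append((index, sorted(expected - keys), sorted(keys - expected)))
    return problems
```

Calling `find_mismatched_records("aligner/template-dataset.json", "<your-correction-dataset>")` would return an empty list when every record carries exactly the template's keys. It checks key names only, not value types or content.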

We have open-sourced a 20K training dataset and a 7B Aligner model. More datasets and models are coming soon.

This repository benefits from LLaMA, Stanford Alpaca, DeepSpeed, DeepSpeed-Chat and Safe-RLHF.

We thank their authors for their wonderful work and their efforts to further promote LLM research. Aligner and its related assets are built and open-sourced with love and respect.