This repository shows the code to deploy a serialized deep learning model running in a C++ backend.

For the sake of comparison, the same model was loaded using PyTorch JIT and ONNX Runtime.

The C++ server can be used as a code template to deploy other models.

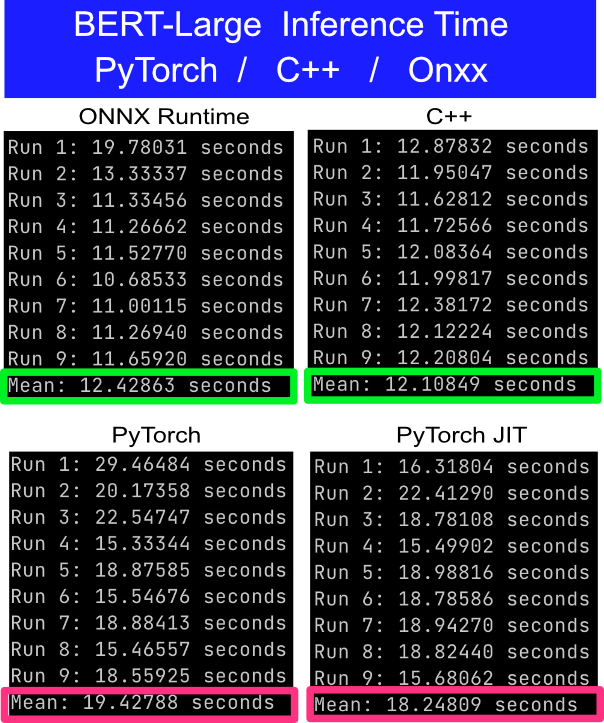

The test consists of making 100 async calls to the server. The client measures the time taken to receive the responses to all 100 requests. To reduce variability, the test is performed 10 times, i.e., 1000 requests in total.
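The protocol above can be sketched as a small timing harness. This is a minimal illustration, not the repository's client: `fake_request` is a hypothetical stand-in that sleeps instead of calling the server, and the function names are made up for this example.

```python
import asyncio
import statistics
import time


async def fake_request(i):
    """Hypothetical stand-in for one HTTP call to the server."""
    await asyncio.sleep(0.001)
    return i


async def timed_batch(request_fn, n_requests=100):
    """Fire n_requests concurrently; return wall-clock seconds until all finish."""
    start = time.perf_counter()
    await asyncio.gather(*(request_fn(i) for i in range(n_requests)))
    return time.perf_counter() - start


async def run_benchmark(request_fn, n_requests=100, n_rounds=10):
    """Repeat the batch n_rounds times, one round after another."""
    return [await timed_batch(request_fn, n_requests) for _ in range(n_rounds)]


def summarize(durations):
    """Aggregate the per-round timings."""
    return {
        "rounds": len(durations),
        "mean_s": statistics.mean(durations),
        "stdev_s": statistics.stdev(durations),
    }


if __name__ == "__main__":
    print(summarize(asyncio.run(run_benchmark(fake_request))))
```

Replacing `fake_request` with a coroutine that performs a real HTTP request would turn this into an actual measurement; reporting the spread across rounds alongside the mean makes the variability visible.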

- The results were obtained on a MacBook Pro with an i7 CPU (6 cores, 12 threads).

- The CPU fans were set to maximum speed to reduce any possible thermal throttling.

- Before starting each test, the CPU temperature was lowered to around 50 °C.

- Model: BERT Large uncased, pretrained, from Hugging Face.

- Input: Text with [MASK] token to be predicted

- Output: Tensor of shape (seq_len, 30522), i.e. one score per vocabulary entry for each token position
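To illustrate how an output of this shape is typically turned into a prediction, here is a toy sketch in plain Python. It assumes the row at the `[MASK]` position holds one score per vocabulary entry and that the highest score wins; the 5-entry vocabulary, the tokens, and the scores are invented for the example (the real vocabulary has 30522 entries), and the response format of the servers in this repository may differ.

```python
def predict_masked_token(logits, tokens, vocab, mask_token="[MASK]"):
    """Return the vocabulary entry with the highest score at the mask position.

    logits: seq_len rows, each holding one score per vocabulary entry.
    tokens: the seq_len input tokens, one of which is mask_token.
    vocab:  list mapping token id -> token string.
    """
    mask_pos = tokens.index(mask_token)
    row = logits[mask_pos]
    best_id = max(range(len(row)), key=row.__getitem__)  # argmax over the vocabulary
    return vocab[best_id]


# Toy data: 4 token positions, vocabulary of 5 entries (all hypothetical).
vocab = ["paris", "london", "cat", "is", "nice"]
tokens = ["[CLS]", "[MASK]", "is", "nice"]
logits = [
    [0.1, 0.0, 0.2, 0.3, 0.1],
    [2.5, 1.9, -0.7, 0.0, 0.3],  # scores at the [MASK] position
    [0.0, 0.1, 0.0, 3.0, 0.2],
    [0.2, 0.0, 0.1, 0.1, 2.8],
]

print(predict_masked_token(logits, tokens, vocab))  # prints "paris"
```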

FastAPI web app served by hypercorn with 6 workers (adjust this to the number of threads you have).

- server-python/app_pt.py: Loads the model using PyTorch load_model(), the standard way of loading a PyTorch model.

- server-python/app_jit.py: Loads the serialized model using the PyTorch JIT runtime.

- server-python/app_onnx.py: Loads the serialized model using ONNX Runtime.

The C++ deployment uses the same serialized model as the JIT runtime. The model is loaded using the libtorch C++ API, and crow is used to serve it over HTTP. The server is started in multithreaded mode.

- server-cpp/server.cpp: Code to build the C++ application that loads the model and starts the HTTP server on port 8000.

Deploying a model in C++ is not as straightforward as it is in Python. Check the libs folder for more details about the code used.

The client uses async and multithreading to perform as many requests as possible in parallel. See test_api.py.
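One way to combine the two mechanisms is to give each thread its own event loop and a slice of the requests. The sketch below assumes that design and is not taken from test_api.py: `fake_request`, `run_chunk`, and `run_parallel` are hypothetical names, and the request is simulated with a short sleep.

```python
import asyncio
from concurrent.futures import ThreadPoolExecutor


async def fake_request(i):
    """Hypothetical stand-in for one HTTP call to the server."""
    await asyncio.sleep(0.001)
    return i


def run_chunk(request_ids):
    """Run one chunk of requests concurrently on this thread's own event loop."""
    async def _gather():
        return await asyncio.gather(*(fake_request(i) for i in request_ids))

    return asyncio.run(_gather())


def run_parallel(n_requests=100, n_threads=4):
    """Split the requests across threads; each thread awaits its chunk with asyncio."""
    ids = list(range(n_requests))
    chunks = [ids[t::n_threads] for t in range(n_threads)]
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        results = list(pool.map(run_chunk, chunks))
    # Flatten and sort so the caller sees every request id exactly once.
    return sorted(r for chunk in results for r in chunk)


if __name__ == "__main__":
    print(len(run_parallel()))  # prints 100
```

For I/O-bound requests a single event loop is often enough; adding threads mainly helps when per-request client-side work (for example serialization) would otherwise hold up the loop.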

- Experiment on a CUDA device.

- Experiment with other models like BERT.

- Experiment with quantized models.