Lianghui Zhu1 *, Yingyue Li1 *, Jiemin Fang1, Yan Liu2, Hao Xin2, Wenyu Liu1, Xinggang Wang1 📧

1 School of EIC, Huazhong University of Science & Technology, 2 Ant Group

(*) equal contribution, (📧) corresponding author.

ArXiv Preprint (arXiv 2304.01184)

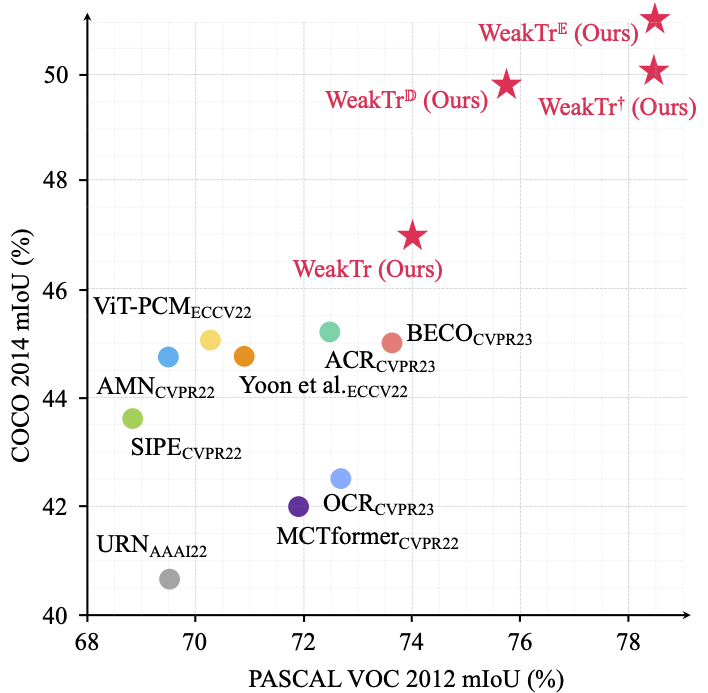

- The proposed WeakTr fully explores the potential of plain ViT in the WSSS domain. It achieves state-of-the-art results on both challenging WSSS benchmarks, with 74.0% mIoU on the VOC12 and 46.9% mIoU on the COCO14 validation sets, respectively, significantly surpassing previous methods.

- The proposed WeakTr based on DINOv2, which is pretrained on ImageNet-1k and the extra LVD-142M dataset, performs better, with 75.8% mIoU on the VOC12 and 48.9% mIoU on the COCO14 validation sets, respectively.

- The proposed WeakTr based on the improved ViT, which is pretrained on ImageNet-21k and fine-tuned on ImageNet-1k, performs better, with 78.4% mIoU on the VOC12 and 50.3% mIoU on the COCO14 validation sets, respectively.

- The proposed WeakTr based on EVA-02, which uses EVA-CLIP as its masked image modeling (MIM) teacher and is pretrained on ImageNet-1k, performs better, with 78.5% mIoU on the VOC12 and 51.1% mIoU on the COCO14 validation sets, respectively.

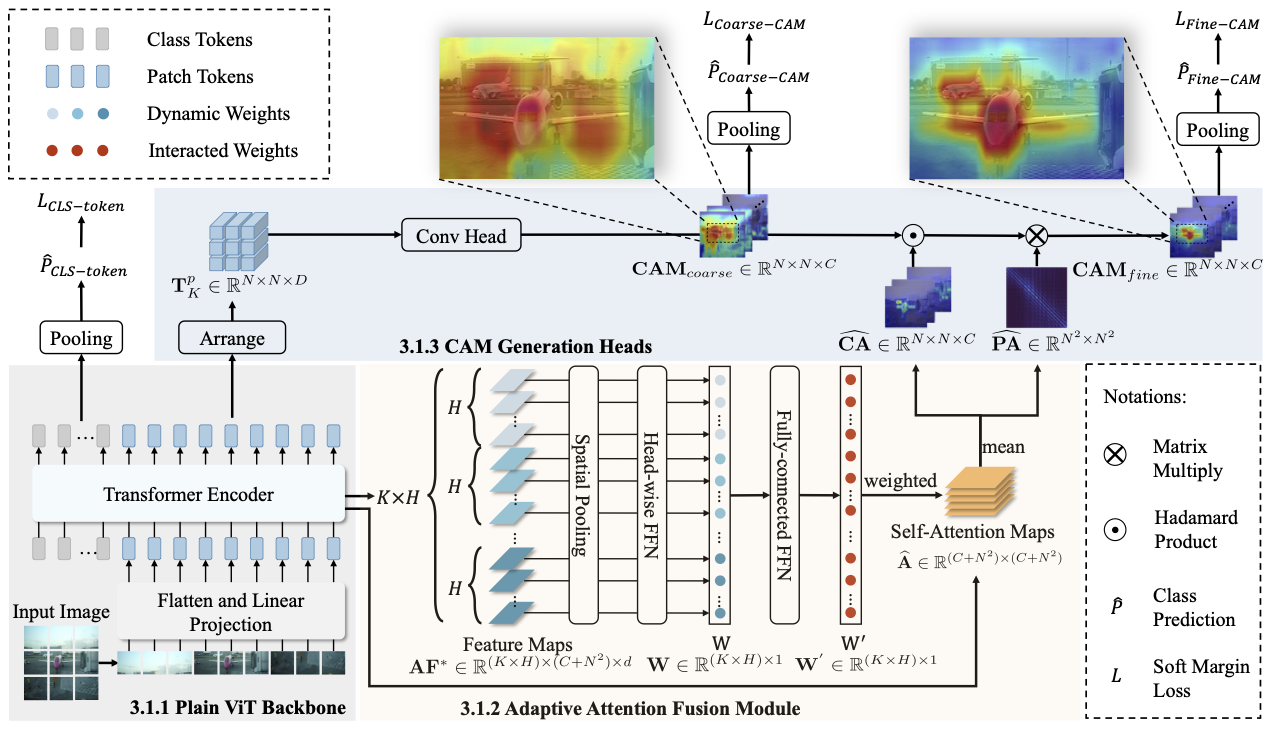

This paper explores the properties of the plain Vision Transformer (ViT) for Weakly-supervised Semantic Segmentation (WSSS). The class activation map (CAM) is critical both for understanding a classification network and as the starting point of WSSS. We observe that different attention heads of ViT focus on different image areas. We therefore propose a novel weight-based method that estimates the importance of each attention head end-to-end and adaptively fuses the self-attention maps, producing high-quality CAM results that tend to cover objects more completely.

Step 1: End-to-End CAM Generation

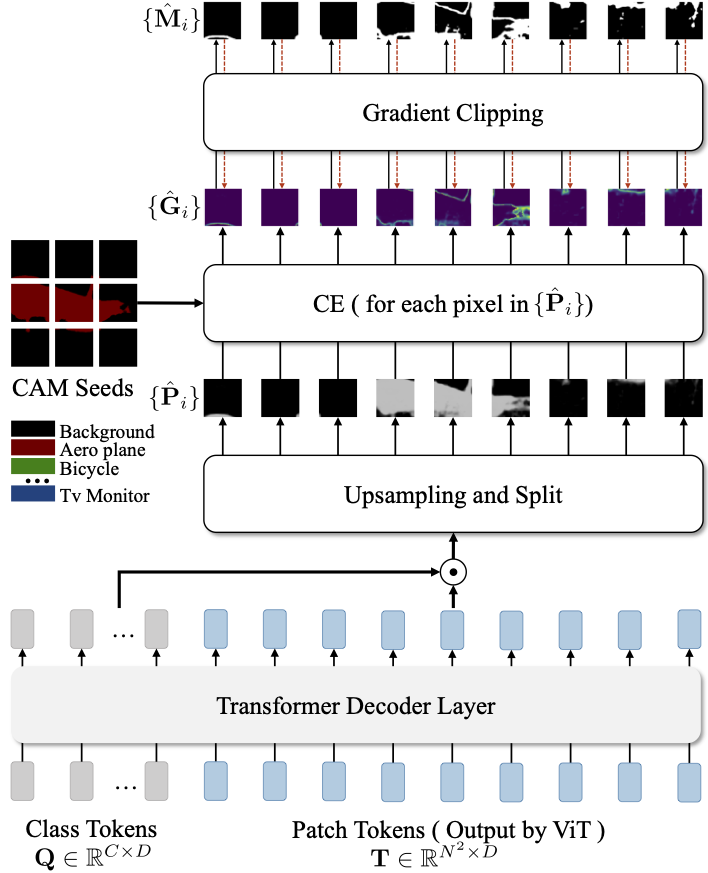
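The README does not give the exact form of the head weighting, so the following is only a minimal NumPy sketch of the idea of adaptive attention-head fusion, under stated assumptions: K = layers × heads row-stochastic patch-to-patch attention maps, per-head scores normalized with a softmax, and a coarse CAM propagated along the fused map. The function names (`fuse_attention_heads`, `refine_cam`) and the toy sizes are hypothetical; in the actual model the head scores would be learned end-to-end rather than sampled at random.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def fuse_attention_heads(attn: np.ndarray, head_logits: np.ndarray) -> np.ndarray:
    """Weighted sum of K = (layers x heads) patch-to-patch attention maps.

    attn:        (K, N, N), each row of each map sums to 1
    head_logits: (K,) per-head scores; ASSUMPTION: given here, learned in training
    returns:     (N, N) fused map; rows still sum to 1 (convex combination)
    """
    weights = softmax(head_logits)                  # (K,), non-negative, sums to 1
    return np.einsum("k,kij->ij", weights, attn)    # (N, N)


def refine_cam(coarse_cam: np.ndarray, fused_attn: np.ndarray) -> np.ndarray:
    """Propagate a coarse CAM (C, N) along the fused patch affinities.

    refined[c, i] = sum_j fused_attn[i, j] * coarse_cam[c, j],
    then each class map is min-max normalized to [0, 1].
    """
    refined = coarse_cam @ fused_attn.T             # (C, N)
    lo = refined.min(axis=1, keepdims=True)
    hi = refined.max(axis=1, keepdims=True)
    return (refined - lo) / np.maximum(hi - lo, 1e-8)


# Toy sizes: 12 layers x 6 heads (DeiT-S-like), 14 x 14 patches, 20 classes.
rng = np.random.default_rng(0)
K, N, C = 12 * 6, 14 * 14, 20
attn = softmax(rng.normal(size=(K, N, N)))          # row-stochastic attention maps
head_logits = rng.normal(size=K)                    # stand-in for learned head weights
coarse_cam = np.maximum(rng.normal(size=(C, N)), 0.0)

fused = fuse_attention_heads(attn, head_logits)
cam = refine_cam(coarse_cam, fused)
print(fused.shape, cam.shape)  # (196, 196) (20, 196)
```

Normalizing the head scores with a softmax keeps the fused map row-stochastic, so it can be read as an affinity matrix in the same way as a single attention map.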

In addition, we propose a ViT-based gradient clipping decoder for online retraining with the CAM results to complete the WSSS task. We name this plain Transformer-based Weakly-supervised learning framework WeakTr. It achieves state-of-the-art WSSS performance on standard benchmarks, i.e., 78.5% mIoU on the VOC12 val set and 51.1% mIoU on the COCO14 val set.

Step 2: Online Retraining with Gradient Clipping Decoder
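The README does not specify the clipping criterion used by the gradient clipping decoder. As an illustrative sketch only, the NumPy code below assumes a simple loss-based rule: pixels whose cross-entropy against the CAM pseudo-mask exceeds a margin times a running mean of the loss are treated as noisy labels, and their gradients are set to zero. `LossClipper`, `margin`, and `momentum` are hypothetical names and parameters, not WeakTr's API.

```python
import numpy as np


def pixel_cross_entropy(logits, labels):
    """Per-pixel softmax cross-entropy and its gradient w.r.t. the logits.

    logits: (C, H, W) decoder outputs; labels: (H, W) integer pseudo-mask.
    Returns loss (H, W) and grad (C, H, W) = softmax - one_hot.
    """
    z = logits - logits.max(axis=0, keepdims=True)
    prob = np.exp(z) / np.exp(z).sum(axis=0, keepdims=True)
    rows, cols = np.indices(labels.shape)
    loss = -np.log(prob[labels, rows, cols] + 1e-12)
    onehot = np.zeros_like(prob)
    onehot[labels, rows, cols] = 1.0
    return loss, prob - onehot


class LossClipper:
    """Hypothetical helper: ignore pixels whose loss is far above a running mean.

    ASSUMPTION: threshold = margin * EMA of the mean pixel loss. This is an
    illustrative rule, not WeakTr's exact clipping criterion.
    """

    def __init__(self, margin=2.0, momentum=0.9):
        self.margin = margin
        self.momentum = momentum
        self.running = None

    def __call__(self, loss, grad):
        batch_mean = float(loss.mean())
        if self.running is None:
            self.running = batch_mean
        else:
            self.running = self.momentum * self.running + (1 - self.momentum) * batch_mean
        keep = loss <= self.margin * self.running     # (H, W) mask of trusted pixels
        n_keep = max(int(keep.sum()), 1)
        clipped_loss = float((loss * keep).sum() / n_keep)
        clipped_grad = grad * keep[None] / n_keep     # zero gradient on noisy pixels
        return clipped_loss, clipped_grad, keep


rng = np.random.default_rng(0)
C, H, W = 21, 8, 8
logits = rng.normal(size=(C, H, W))
labels = rng.integers(0, C, size=(H, W))
# Simulate a noisy 2 x 2 pseudo-label region where the model strongly disagrees.
logits[labels[:2, :2], np.arange(2)[:, None], np.arange(2)[None, :]] -= 20.0

loss, grad = pixel_cross_entropy(logits, labels)
clipper = LossClipper()
clipped_loss, clipped_grad, keep = clipper(loss, grad)
print(int(keep.sum()), clipped_loss < float(loss.mean()))
```

The gradient of the mean loss over the kept pixels is `grad / n_keep` at those pixels and zero elsewhere, which is exactly what `clipped_grad` holds; a real training loop would apply the same mask inside an autograd framework instead.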

2023/08/31: 🔥 We have updated the experiments with the EVA-02 and DINOv2 pretrained weights, which require an updated environment.

| Dataset | Method | Backbone | Checkpoint | CAM Label | Train mIoU |
|---|---|---|---|---|---|
| VOC12 | WeakTr | DeiT-S | Google Drive | Google Drive | 69.4% |
| COCO14 | WeakTr | DeiT-S | Google Drive | Google Drive | 42.6% |

| Dataset | Method | Backbone | Checkpoint | Val mIoU | Pseudo-mask | Train mIoU |
|---|---|---|---|---|---|---|
| VOC12 | WeakTr | DeiT-S | Google Drive | 74.0% | Google Drive | 76.5% |
| VOC12 | WeakTr | DINOv2-S | Google Drive | 75.8% | Google Drive | 78.1% |
| VOC12 | WeakTr | ViT-S | Google Drive | 78.4% | Google Drive | 80.3% |
| VOC12 | WeakTr | EVA-02-S | Google Drive | 78.5% | Google Drive | 80.0% |
| COCO14 | WeakTr | DeiT-S | Google Drive | 46.9% | Google Drive | 48.9% |
| COCO14 | WeakTr | DINOv2-S | Google Drive | 48.9% | Google Drive | 50.7% |
| COCO14 | WeakTr | ViT-S | Google Drive | 50.3% | Google Drive | 51.3% |
| COCO14 | WeakTr | EVA-02-S | Google Drive | 51.1% | Google Drive | 52.2% |
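The Val mIoU and Train mIoU columns report mean Intersection-over-Union. As a minimal NumPy sketch of the standard computation (not the repository's evaluation script), assuming integer label maps with predictions in `[0, num_classes)` and the common VOC convention of marking ignored pixels with 255:

```python
import numpy as np


def confusion_matrix(pred, gt, num_classes, ignore_index=255):
    """Accumulate a (num_classes x num_classes) confusion matrix; rows = ground truth."""
    valid = gt != ignore_index
    idx = num_classes * gt[valid].astype(np.int64) + pred[valid].astype(np.int64)
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)


def mean_iou(conf):
    """IoU_c = TP / (TP + FP + FN); mIoU averages over classes that occur."""
    tp = np.diag(conf).astype(np.float64)
    fp = conf.sum(axis=0) - tp
    fn = conf.sum(axis=1) - tp
    denom = tp + fp + fn
    iou = np.divide(tp, denom, out=np.full_like(tp, np.nan), where=denom > 0)
    return iou, float(np.nanmean(iou))


gt = np.array([[0, 0, 1], [1, 1, 255]])    # 255 = ignored pixel
pred = np.array([[0, 1, 1], [1, 0, 0]])
conf = confusion_matrix(pred, gt, num_classes=2)
iou, miou = mean_iou(conf)
print(conf.tolist(), round(miou, 4))  # [[1, 1], [1, 2]] 0.4167
```

In practice the confusion matrix is summed over the whole split before computing IoU, so mIoU is a dataset-level score rather than an average of per-image scores.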

If you find this repository or work helpful in your research, please consider citing the paper and giving it a ⭐.

    @article{zhu2023weaktr,
      title={WeakTr: Exploring Plain Vision Transformer for Weakly-supervised Semantic Segmentation},
      author={Lianghui Zhu and Yingyue Li and Jiemin Fang and Yan Liu and Hao Xin and Wenyu Liu and Xinggang Wang},
      year={2023},
      journal={arxiv:2304.01184},
    }