By Peng Tang, Xinggang Wang, Song Bai, Wei Shen, Xiang Bai, Wenyu Liu, and Alan Yuille.

This is a PyTorch implementation of our PCL/OICR. The original Caffe implementation of PCL/OICR is available here.

The final performance of this implementation on PASCAL VOC 2007, using a single VGG16 model, is mAP 52.9% and CorLoc 67.2% with vgg16_voc2007.yaml (an earlier version of this implementation reported mAP 49.2% and CorLoc 65.0%), and mAP 54.1% and CorLoc 69.5% with vgg16_voc2007_more.yaml. These results are comparable with recent state-of-the-art methods.
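CorLoc (correct localization) is measured on the training images. For each class, it is the fraction of images containing that class in which the top-scoring box for the class overlaps a ground-truth box of that class with IoU of at least 0.5. The per-class values are then averaged. The sketch below computes CorLoc under that standard definition. The names (`iou`, `corloc`) and the inclusive-pixel box convention are assumptions. This is not the code in `tools/reeval.py`, whose boundary and box conventions may differ.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (x1, y1, x2, y2) in pixels, inclusive, following the VOC devkit "+1" convention (assumed)
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes."""
    iw = min(a[2], b[2]) - max(a[0], b[0]) + 1
    ih = min(a[3], b[3]) - max(a[1], b[1]) + 1
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    area_a = (a[2] - a[0] + 1) * (a[3] - a[1] + 1)
    area_b = (b[2] - b[0] + 1) * (b[3] - b[1] + 1)
    return inter / (area_a + area_b - inter)


def corloc(top_boxes: Dict[Tuple[str, str], Box],
           gt_boxes: Dict[Tuple[str, str], List[Box]],
           iou_thresh: float = 0.5) -> float:
    """Mean CorLoc over classes.

    gt_boxes maps (image_id, class) to the ground-truth boxes of that class; only
    these pairs (images whose image-level label contains the class) are counted.
    top_boxes maps the same keys to the single highest-scoring predicted box.
    """
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for (image_id, cls), gts in gt_boxes.items():
        totals[cls] += 1
        pred = top_boxes.get((image_id, cls))
        if pred is not None and any(iou(pred, g) >= iou_thresh for g in gts):
            hits[cls] += 1
    per_class = [hits[c] / totals[c] for c in totals]
    return sum(per_class) / len(per_class)


gt = {("img1", "dog"): [(0, 0, 99, 99)],
      ("img2", "dog"): [(10, 10, 49, 49)],
      ("img2", "cat"): [(50, 50, 149, 149)]}
top = {("img1", "dog"): (0, 0, 99, 99),        # correct localization
       ("img2", "dog"): (100, 100, 150, 150),  # misses the dog
       ("img2", "cat"): (50, 50, 149, 149)}    # correct localization
print(corloc(top, gt))  # dog: 1/2, cat: 1/1 -> 0.75
```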

A small trick to obtain better results on COCO: change this line of code to return `4.0 * loss.mean()`.
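The line the trick refers to is not reproduced here. The hypothetical function below shows where the 4.0 factor enters. It assumes that line returns the mean of a per-proposal weighted cross-entropy, which is the form of the refinement loss in the OICR paper. The function name and arguments are illustrative and are not the repository's API.

```python
import math
from typing import List, Sequence


def weighted_softmax_loss(probs: Sequence[Sequence[float]],
                          labels: Sequence[int],
                          weights: Sequence[float],
                          scale: float = 1.0,
                          eps: float = 1e-12) -> float:
    """Mean over proposals of -w_r * log p_r[label_r], multiplied by `scale`.

    probs[r] is the class-probability vector of proposal r, labels[r] its
    (pseudo) class index and weights[r] its loss weight. scale=4.0 corresponds
    to the "return 4.0 * loss.mean()" trick (assumed loss form).
    """
    per_proposal: List[float] = [
        -w * math.log(max(p[y], eps)) for p, y, w in zip(probs, labels, weights)
    ]
    return scale * sum(per_proposal) / len(per_proposal)


probs = [[0.5, 0.5], [0.25, 0.75]]
labels = [0, 1]
weights = [1.0, 0.5]
base = weighted_softmax_loss(probs, labels, weights)
boosted = weighted_softmax_loss(probs, labels, weights, scale=4.0)
print(base, boosted)  # boosted is 4x base
```

Scaling the loss by 4 also scales the gradients this term contributes by 4. In effect, this term gets a larger step size. If weight decay is applied by the optimizer, the decay is not scaled.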

- Use the trick proposed in our ECCV paper.

- Use OICR and train for more iterations.

- Add bounding box regression / Fast R-CNN branch following paper1 and paper2.

- Support PyTorch 1.6.0 by rewriting the losses in pure PyTorch and using RoI pooling from mmcv. Please check the 0.4.0 branch for the older version of the code.

- Make the loss of the first refinement branch 3x bigger, following paper3; please check here.

- For vgg16_voc2007_more.yaml, use one more image scale (1000) and train for more iterations, following paper3.
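The loss-related updates above amount to a weighted sum of per-branch losses. The minimal sketch below assumes an OICR-style model with one MIL branch and K = 3 refinement branches (the number used in the OICR paper). It also assumes unit weights everywhere except on the first refinement branch, and it leaves out the Fast R-CNN branch losses. The function name and the loss values are hypothetical.

```python
from typing import Sequence


def total_loss(mil_loss: float,
               refine_losses: Sequence[float],
               first_refine_weight: float = 3.0) -> float:
    """MIL loss plus refinement losses, with the first refinement branch up-weighted.

    Fast R-CNN / box-regression branch losses are omitted for brevity (assumption).
    """
    weights = [first_refine_weight] + [1.0] * max(len(refine_losses) - 1, 0)
    return mil_loss + sum(w * loss for w, loss in zip(weights, refine_losses))


# Hypothetical per-branch loss values for one iteration, with K = 3 refinement branches.
print(total_loss(0.7, [0.5, 0.2, 0.1]))  # 0.7 + 3*0.5 + 0.2 + 0.1
```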

Proposal Cluster Learning (PCL) is a framework for weakly supervised object detection with deep ConvNets.

- It achieves state-of-the-art performance on weakly supervised object detection (PASCAL VOC 2007 and 2012, ImageNet DET, COCO).

- Our code is based on PyTorch, Detectron.pytorch, and faster-rcnn.pytorch.
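Both OICR and PCL turn image-level labels into proposal-level supervision by grouping proposals around high-scoring cluster centers. The sketch below shows the simpler single-center assignment from OICR that PCL builds on. PCL itself generates cluster centers with a graph-based method and treats each cluster as a bag, which this sketch does not do. The IoU threshold of 0.5, background label 0, the inclusive-pixel box convention, and all names are assumptions for illustration. This is not the repository's code.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), inclusive pixel coordinates


def iou(a: Box, b: Box) -> float:
    iw = min(a[2], b[2]) - max(a[0], b[0]) + 1
    ih = min(a[3], b[3]) - max(a[1], b[1]) + 1
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    union = ((a[2] - a[0] + 1) * (a[3] - a[1] + 1)
             + (b[2] - b[0] + 1) * (b[3] - b[1] + 1) - inter)
    return inter / union


def assign_pseudo_labels(boxes: Sequence[Box],
                         scores: Sequence[Sequence[float]],
                         image_classes: Sequence[int],
                         iou_thresh: float = 0.5) -> Tuple[List[int], List[float]]:
    """Single-center proposal clustering for one image.

    scores[r][c] is the score of proposal r for class c (c = 0..C-1).
    For every class c in the image-level label, the top-scoring proposal becomes
    a cluster center. Each proposal follows the center it overlaps most: it gets
    label c + 1 if that IoU >= iou_thresh, else background label 0. Its loss
    weight is the score of that center.
    """
    n = len(boxes)
    labels = [0] * n
    weights = [0.0] * n
    best_overlap = [-1.0] * n  # -1 so every proposal is attached to some center
    for c in image_classes:
        center = max(range(n), key=lambda r: scores[r][c])
        for r in range(n):
            overlap = iou(boxes[r], boxes[center])
            if overlap > best_overlap[r]:
                best_overlap[r] = overlap
                labels[r] = c + 1 if overlap >= iou_thresh else 0
                weights[r] = scores[center][c]
    return labels, weights


boxes = [(0, 0, 99, 99), (5, 5, 104, 104), (200, 200, 299, 299)]
scores = [[0.9], [0.1], [0.2]]  # one class (index 0) present in the image
print(assign_pseudo_labels(boxes, scores, image_classes=[0]))
# ([1, 1, 0], [0.9, 0.9, 0.9])
```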

The original paper was accepted by CVPR 2017; this is an extended version. For more details, please refer to here and here.

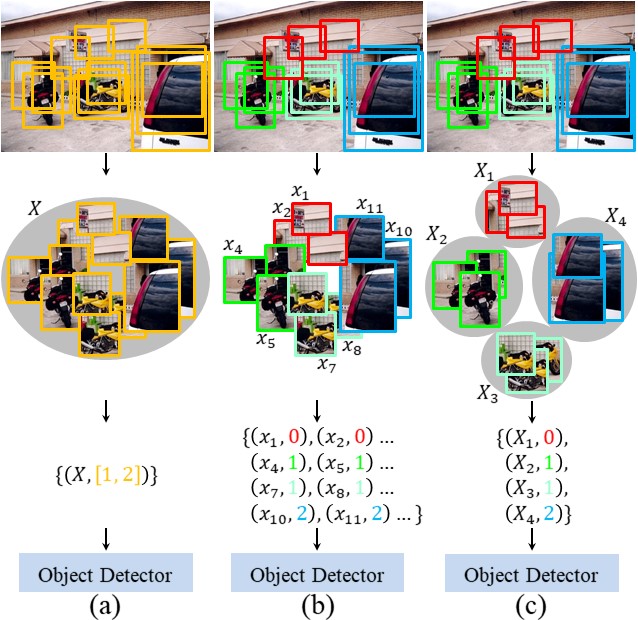

(a) Conventional MIL method; (b) our original OICR method with the newly proposed proposal cluster generation method; (c) our PCL method.

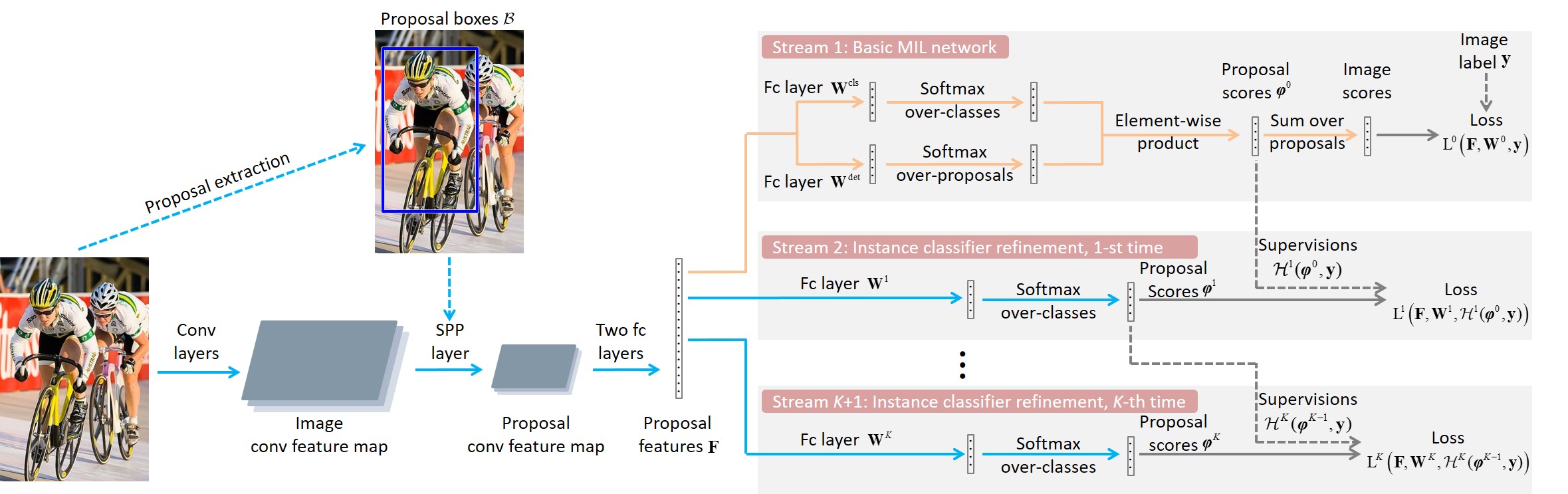

Some PCL visualization results.

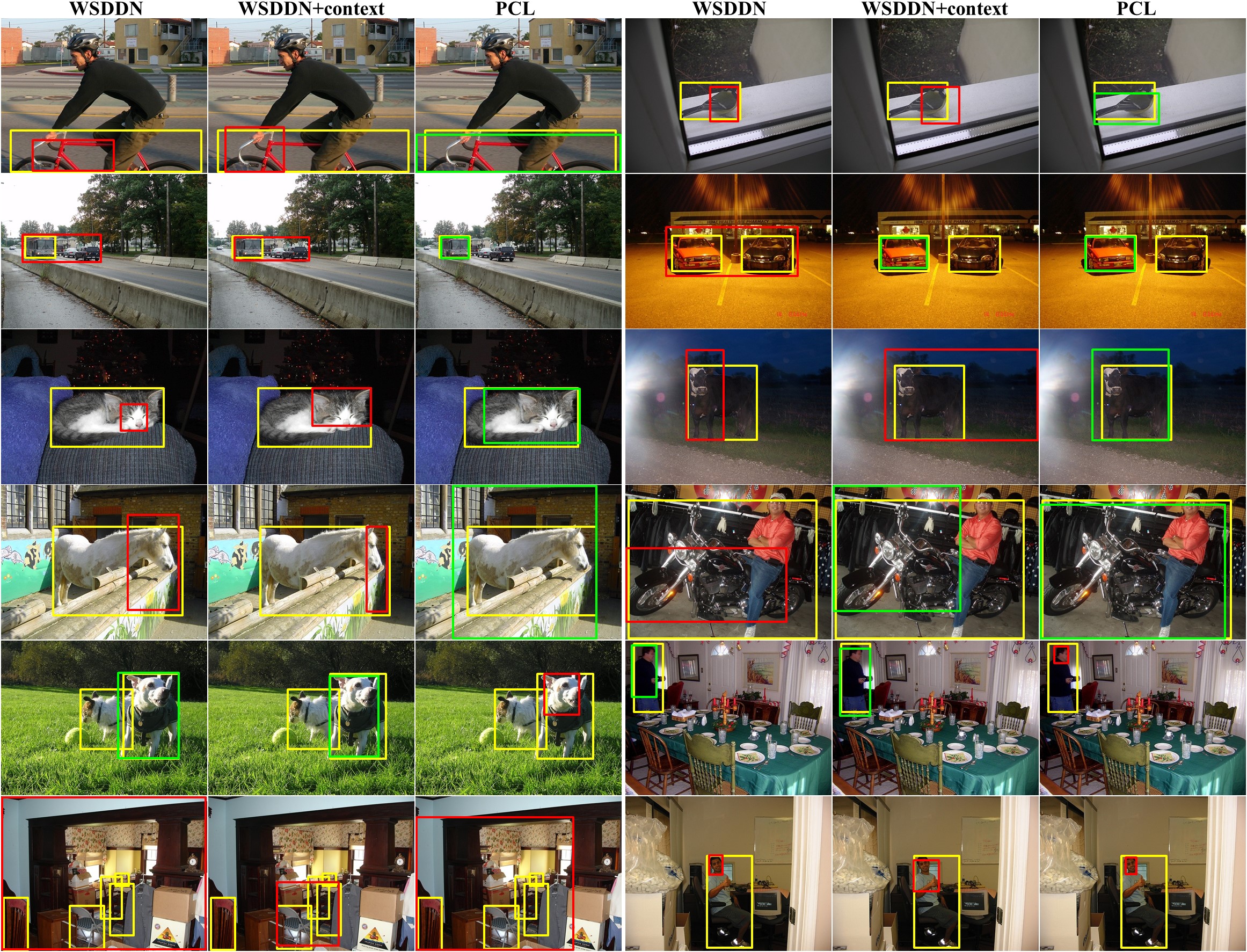

Some visualization comparisons among WSDDN, WSDDN+context, and PCL.

PCL is released under the MIT License (refer to the LICENSE file for details).

If you find PCL useful in your research, please consider citing:

```bibtex
@article{tang2018pcl,
  author = {Tang, Peng and Wang, Xinggang and Bai, Song and Shen, Wei and Bai, Xiang and Liu, Wenyu and Yuille, Alan},
  title = {{PCL}: Proposal Cluster Learning for Weakly Supervised Object Detection},
  journal = {IEEE Transactions on Pattern Analysis and Machine Intelligence},
  volume = {},
  number = {},
  pages = {1--1},
  year = {2018}
}

@inproceedings{tang2017multiple,
  author = {Tang, Peng and Wang, Xinggang and Bai, Xiang and Liu, Wenyu},
  title = {Multiple Instance Detection Network with Online Instance Classifier Refinement},
  booktitle = {IEEE Conference on Computer Vision and Pattern Recognition},
  pages = {3059--3067},
  year = {2017}
}
```

- NVIDIA GTX 1080Ti (~11 GB of memory)

- Clone the PCL repository

```shell
git clone https://github.com/ppengtang/pcl.pytorch.git && cd pcl.pytorch
```

- Install libraries

```shell
sh install.sh
```

- Download the training, validation, and test data and the VOCdevkit

```shell
wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtrainval_06-Nov-2007.tar
wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtest_06-Nov-2007.tar
wget http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCdevkit_18-May-2011.tar
```

- Extract all of these tars into one directory named `VOCdevkit`

```shell
tar xvf VOCtrainval_06-Nov-2007.tar
tar xvf VOCtest_06-Nov-2007.tar
tar xvf VOCdevkit_18-May-2011.tar
```

- Download the COCO-format PASCAL annotations from here and put them into the `VOC2007/annotations` directory

- It should have this basic structure:

```
$VOC2007/
$VOC2007/annotations
$VOC2007/JPEGImages
$VOC2007/VOCdevkit
# ... and several other directories ...
```

- Create symlinks for the PASCAL VOC dataset

```shell
cd $PCL_ROOT/data
ln -s $VOC2007 VOC2007
```

Using symlinks is a good idea because you will likely want to share the same PASCAL dataset installation between multiple projects.

- [Optional] Follow similar steps to get PASCAL VOC 2012.

- Put the generated proposal data under the folder `$PCL_ROOT/data/selective_search_data`, with the names `voc_2007_trainval.pkl` and `voc_2007_test.pkl`. You can download the Selective Search proposals here.

- The pre-trained models are available at Dropbox and VT Server. Put them under the folder `$PCL_ROOT/data/pretrained_model`.
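Before training, it can help to confirm that the files from the steps above are in place. The sketch below checks the paths named in those steps, relative to `$PCL_ROOT`. It assumes the symlink `data/VOC2007` points at the `$VOC2007` layout shown earlier. The helper `missing_paths` and the `REQUIRED` list are hypothetical and not part of the repository. The file names inside `data/pretrained_model` are not checked because they are not given here.

```python
import os
from pathlib import Path
from typing import List

# Paths relative to $PCL_ROOT, taken from the setup steps; data/VOC2007 is the symlink.
REQUIRED = [
    "data/VOC2007/annotations",
    "data/VOC2007/JPEGImages",
    "data/VOC2007/VOCdevkit",
    "data/selective_search_data/voc_2007_trainval.pkl",
    "data/selective_search_data/voc_2007_test.pkl",
    "data/pretrained_model",
]


def missing_paths(pcl_root: str) -> List[str]:
    """Return the required paths that do not exist under pcl_root (symlinks are followed)."""
    root = Path(pcl_root)
    return [rel for rel in REQUIRED if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_paths(os.environ.get("PCL_ROOT", "."))
    if missing:
        print("Missing:", *missing, sep="\n  ")
    else:
        print("All expected data paths are present.")
```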

Train a PCL network. For example, train a VGG16 network on VOC 2007 trainval:

```shell
CUDA_VISIBLE_DEVICES=0 python tools/train_net_step.py --dataset voc2007 \
  --cfg configs/baselines/vgg16_voc2007.yaml --bs 1 --nw 4 --iter_size 4
```

or

```shell
CUDA_VISIBLE_DEVICES=0 python tools/train_net_step.py --dataset voc2007 \
  --cfg configs/baselines/vgg16_voc2007_more.yaml --bs 1 --nw 4 --iter_size 4
```

Note: The current implementation has a bug in multi-GPU training, so multi-GPU training is not supported.
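With `--bs 1 --iter_size 4`, we read `--iter_size` as in the Detectron.pytorch training script this code is based on. There, weights are updated once every `iter_size` steps, so each update sees bs × iter_size = 4 images. That reading is an assumption. The toy sketch below shows gradient accumulation on a 1-D linear model. It averages the mini-batch gradients, and whether the real script averages or sums them is not stated here. With equal-size mini-batches, the accumulated update matches one step on a single batch of four samples.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[float, float]  # (x, y) for a toy 1-D linear model y ≈ w * x


def grad_mse(w: float, batch: Sequence[Sample]) -> float:
    """Gradient of mean((w * x - y)^2) with respect to w over one mini-batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_step(w: float, samples: Sequence[Sample],
                     bs: int, iter_size: int, lr: float) -> float:
    """One SGD update built from iter_size mini-batches of bs samples each.

    Gradients are averaged over the iter_size mini-batches (assumed scaling),
    so the update equals a single step on one batch of bs * iter_size samples.
    """
    grad = 0.0
    for k in range(iter_size):
        mini_batch = samples[k * bs:(k + 1) * bs]
        grad += grad_mse(w, mini_batch) / iter_size
    return w - lr * grad


data: List[Sample] = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
print(accumulated_step(0.0, data, bs=1, iter_size=4, lr=0.01))  # like --bs 1 --iter_size 4
print(accumulated_step(0.0, data, bs=4, iter_size=1, lr=0.01))  # one batch of 4
```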

Test a PCL network. For example, test the VGG16 network on VOC 2007:

```shell
# trainval split: produces the results used for CorLoc
python tools/test_net.py --cfg configs/baselines/vgg16_voc2007.yaml \
  --load_ckpt Outputs/vgg16_voc2007/$MODEL_PATH \
  --dataset voc2007trainval

# test split: produces the results used for mAP
python tools/test_net.py --cfg configs/baselines/vgg16_voc2007.yaml \
  --load_ckpt Outputs/vgg16_voc2007/$MODEL_PATH \
  --dataset voc2007test
```

Test output is written underneath `$PCL_ROOT/Outputs`.

Note: Add `--multi-gpu-testing` if multiple GPUs are available.
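mAP on the VOC 2007 test set is the mean over classes of per-class average precision (AP). For VOC 2007, the standard devkit uses 11-point interpolated AP. The sketch below assumes that metric. It also assumes detections have already been matched to ground truth (IoU above 0.5, each ground-truth box matched at most once, "difficult" boxes ignored). The function names are hypothetical, and this is not the code in `tools/reeval.py`.

```python
from typing import List, Sequence, Tuple


def precision_recall(is_tp: Sequence[bool], num_gt: int) -> Tuple[List[float], List[float]]:
    """Cumulative recall and precision for one class.

    is_tp lists detections in descending score order; True means the detection
    was matched to a previously unmatched ground-truth box.
    """
    tp = fp = 0
    recall, precision = [], []
    for hit in is_tp:
        if hit:
            tp += 1
        else:
            fp += 1
        recall.append(tp / num_gt)
        precision.append(tp / (tp + fp))
    return recall, precision


def voc07_ap(recall: Sequence[float], precision: Sequence[float]) -> float:
    """11-point interpolated AP: mean of max precision at recall >= t, t = 0.0, 0.1, ..., 1.0."""
    ap = 0.0
    for i in range(11):
        t = i / 10
        best = max((prec for rec, prec in zip(recall, precision) if rec >= t), default=0.0)
        ap += best / 11
    return ap


rec, prec = precision_recall([True, False, True], num_gt=2)
print(voc07_ap(rec, prec))  # (6 * 1.0 + 5 * 2/3) / 11 ≈ 0.848
```

mAP is then the mean of `voc07_ap` over the 20 VOC classes.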

For mAP, run the Python script `tools/reeval.py`:

```shell
python tools/reeval.py --result_path $output_dir/detections.pkl \
  --dataset voc2007test --cfg configs/baselines/vgg16_voc2007.yaml
```

For CorLoc, run the Python script `tools/reeval.py`:

```shell
python tools/reeval.py --result_path $output_dir/discovery.pkl \
  --dataset voc2007trainval --cfg configs/baselines/vgg16_voc2007.yaml
```

TODO:

- Add PASCAL VOC 2012 configurations.

- Upload trained models.

- Support multi-GPU training.

- Clean up the code.

- Fix bugs in multi-GPU testing.

We thank Mingfei Gao, Yufei Yun, and Ke Yang for their help in improving this repo.