- 🔥 [08.25] MMBench: Our model has been selected for MMBench by OpenCompass and achieves Rank 1 on MMBench (especially among 7B models). MMBench is the first large-scale multimodal evaluation dataset covering so many ability dimensions.

- 🔥 [07.19] The LLaMA-2 version of mPLUG-Owl will be released soon. It achieves new state-of-the-art performance on various benchmarks compared with the previous version. Please stay tuned!

- 🔥 [06.30] The video version code and checkpoint are released. The checkpoint will also be available on Huggingface Model Hub soon.

- 🔥 [05.30] The multilingual version checkpoint is available on Huggingface Model Hub now.

- [05.27] We provide a multilingual version of mPLUG-Owl (supports Chinese, English, Japanese, French, Korean and German) on ModelScope!

- [05.24] The Pokémon Arena: Our model has been selected for Multi-Modal Arena, a competition arena for multi-modal foundation models that lets you see how different models react to the same question.

- [05.19] mPLUG-Owl now natively supports Huggingface-style usage with the Huggingface Trainer. Users can train customized models with only a single V100 GPU! We also refactored the project code, which is now much easier to use. The offline demo can run inference on a single 16GB T4 GPU with 8-bit support!

- [05.16] We retrained our model on video-image-text data. The online demo has been updated, and new checkpoints and improved code are coming soon.

- [05.16] Online demo on HuggingFace now supports 8 bits!

- [05.12] Online demo and API available on Replicate!

- [05.05] We released code and dataset for instruction tuning.

- [05.05] Online demo on HuggingFace is available. Thanks to Huggingface for providing us with free computing resources!

- [05.05] Online demo on HuggingFace now accepts video input! The demo on ModelScope will support it soon.

- [05.05] We uploaded our visually-related evaluation set OwlEval.

- [04.26] We provide an online demo on ModelScope for the public to try.

- [04.26] We released code of mPLUG-Owl🦉 with its pre-trained and instruction tuning checkpoints.

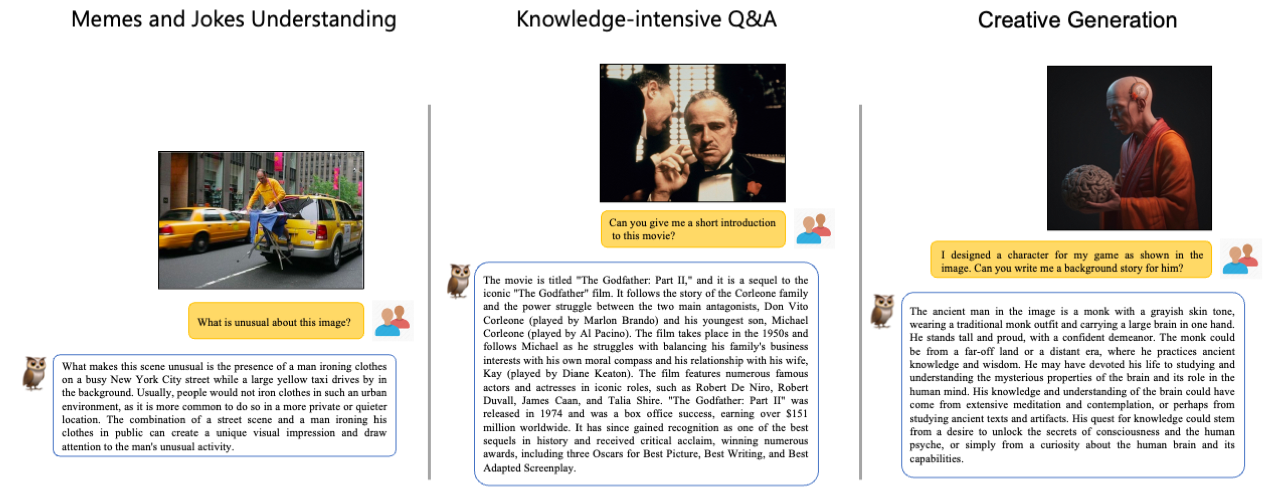

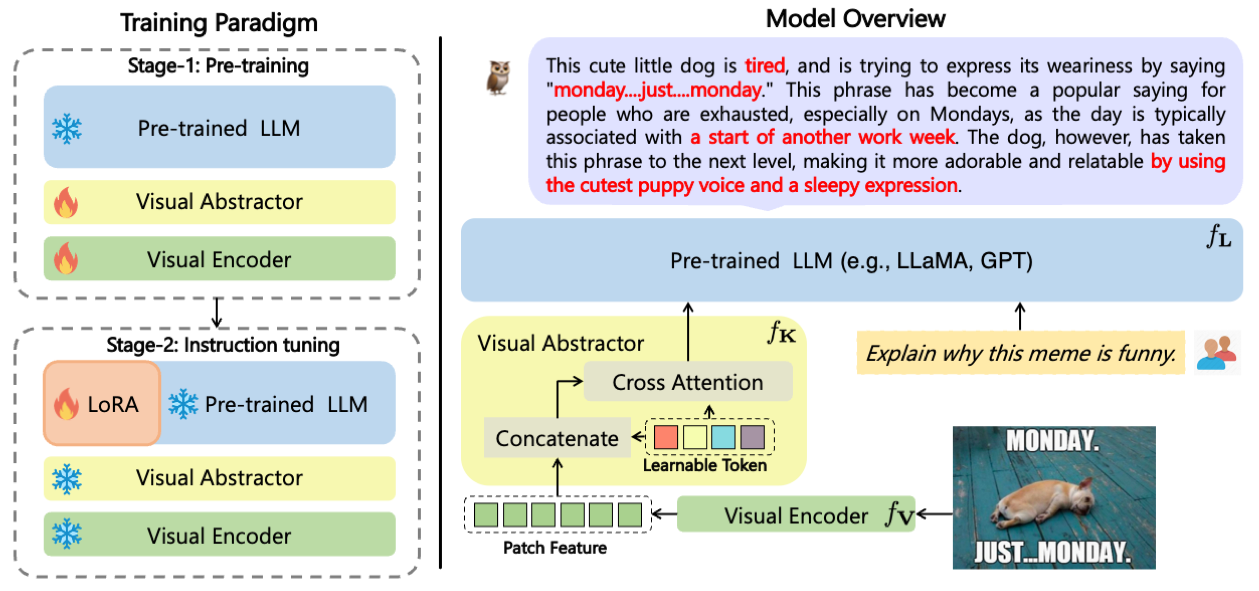

- A new training paradigm with a modularized design for large multi-modal language models.

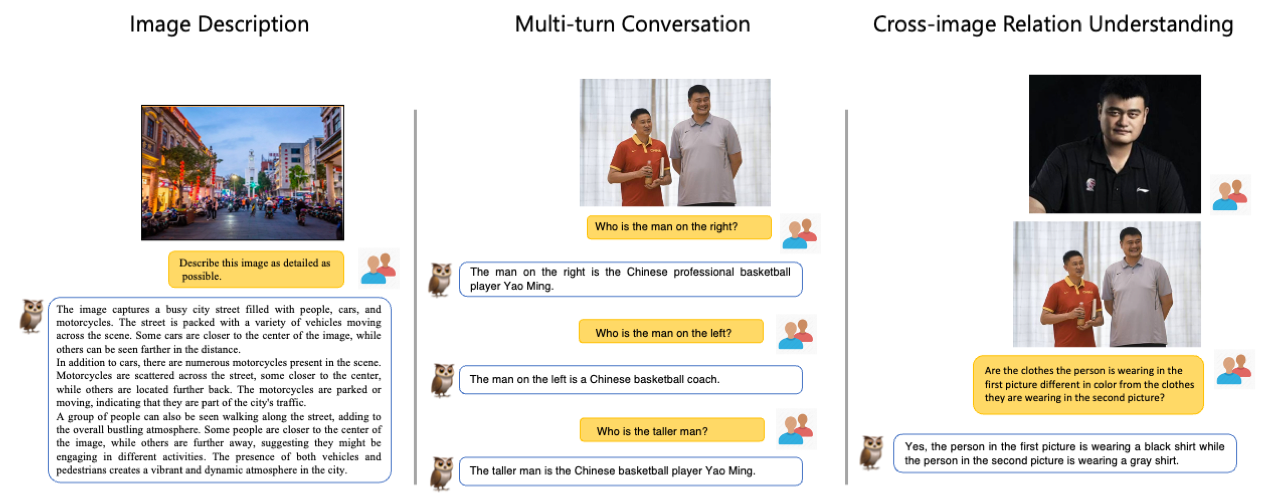

- Learns visual knowledge while supporting multi-turn conversations consisting of different modalities (images/videos/texts).

- Shows observed abilities such as multi-image correlation, scene text understanding, and vision-based document comprehension.

- Releases a visually-related instruction evaluation set, OwlEval.

- Our outstanding works on modularization:

  - E2E-VLP, mPLUG and mPLUG-2 were accepted by ACL 2021, EMNLP 2022 and ICML 2023, respectively.

  - mPLUG was the first to achieve human parity on the VQA Challenge.

- Coming soon

  - Video support.

  - Multilingual support.

  - Publish on Huggingface Hub / Model Hub

  - Huggingface space demo.

  - Instruction tuning code and pre-training code.

  - A visually-related evaluation set OwlEval to comprehensively evaluate various models.

The code in the current main branch has been refactored in Huggingface style, and several issues with the model have been fixed. We have re-trained the models and released new checkpoints on Huggingface Hub. As a result, the old code is incompatible with the new checkpoints. The old code has been moved to the v0 branch.

| Model | Phase | Download link |
|---|---|---|
| mPLUG-Owl LLaMA-2 7B | Pre-training | Download link |
| mPLUG-Owl LLaMA-2 7B | Instruction tuning | Download link |
| mPLUG-Owl 7B | Pre-training | Download link |
| mPLUG-Owl 7B | Instruction tuning (LoRA) | Download link |
| mPLUG-Owl 7B | Instruction tuning (FT) | Download link |
| mPLUG-Owl 7B (Multilingual) | Instruction tuning (LoRA) | Download link |
| mPLUG-Owl 7B (Video) | Instruction tuning (LoRA) | Download link |

The evaluation dataset OwlEval can be found in ./OwlEval.

- Create conda environment

conda create -n mplug_owl python=3.10

conda activate mplug_owl

- Install PyTorch

conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.7 -c pytorch -c nvidia

- Install other dependencies

pip install -r requirements.txt

We provide a script to deploy a simple demo on your local machine.

python -m serve.web_server --base-model 'your checkpoint directory' --bf16

For example, to load the checkpoint MAGAer13/mplug-owl-llama-7b from the Huggingface Model Hub, run:

python -m serve.web_server --base-model MAGAer13/mplug-owl-llama-7b --bf16

To load the model (e.g. MAGAer13/mplug-owl-llama-7b) from the Huggingface Model Hub or from a local directory, you can use the following code snippet.

# Load via Huggingface Style

import torch

from transformers import AutoTokenizer

from mplug_owl.modeling_mplug_owl import MplugOwlForConditionalGeneration

from mplug_owl.processing_mplug_owl import MplugOwlImageProcessor, MplugOwlProcessor

pretrained_ckpt = 'MAGAer13/mplug-owl-llama-7b'

model = MplugOwlForConditionalGeneration.from_pretrained(

    pretrained_ckpt,

    torch_dtype=torch.bfloat16,

)

image_processor = MplugOwlImageProcessor.from_pretrained(pretrained_ckpt)

tokenizer = AutoTokenizer.from_pretrained(pretrained_ckpt)

processor = MplugOwlProcessor(image_processor, tokenizer)

Prepare model inputs.

# We use a human/AI template to organize the context as a multi-turn conversation.

# <image> denotes an image placeholder.

prompts = [

'''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.

Human: <image>

Human: Explain why this meme is funny.

AI: ''']

# The image paths should be placed in the image_list and kept in the same order as in the prompts.

# We support URLs, local file paths and base64 strings. You can customize the image pre-processing by modifying the mplug_owl.modeling_mplug_owl.ImageProcessor

image_list = ['https://xxx.com/image.jpg']

Get response.

# generate kwargs (the same in transformers) can be passed in the do_generate()

generate_kwargs = {

    'do_sample': True,

    'top_k': 5,

    'max_length': 512

}

from PIL import Image

# Image.open expects local file paths or file objects; download remote images first.

images = [Image.open(path) for path in image_list]

inputs = processor(text=prompts, images=images, return_tensors='pt')

inputs = {k: v.bfloat16() if v.dtype == torch.float else v for k, v in inputs.items()}

inputs = {k: v.to(model.device) for k, v in inputs.items()}

with torch.no_grad():

    res = model.generate(**inputs, **generate_kwargs)

sentence = tokenizer.decode(res.tolist()[0], skip_special_tokens=True)

print(sentence)

Build the model, tokenizer and processor.

from pipeline.interface import get_model

model, tokenizer, processor = get_model(pretrained_ckpt='your checkpoint directory', use_bf16=True)  # set use_bf16=False to disable bf16

Prepare model inputs.

# We use a human/AI template to organize the context as a multi-turn conversation.

# <image> denotes an image placeholder.

prompts = [

'''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.

Human: <image>

Human: Explain why this meme is funny.

AI: ''']

# The image paths should be placed in the image_list and kept in the same order as in the prompts.

# We support URLs, local file paths and base64 strings. You can customize the image pre-processing by modifying the mplug_owl.modeling_mplug_owl.ImageProcessor

image_list = ['https://xxx.com/image.jpg',]

For multi-image inputs, which are an emergent ability of the models, we do not know which format works best. Below is an example format we have tried in our experiments. Exploring formats that help models better understand multiple images is worth further investigation.

prompts = [

'''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.

Human: <image>

Human: <image>

Human: Do the shirts worn by the individuals in the first and second pictures vary in color? If so, what is the specific color of each shirt?

AI: ''']

image_list = ['https://xxx.com/image_1.jpg', 'https://xxx.com/image_2.jpg']

Get response.

# generate kwargs (the same in transformers) can be passed in the do_generate()

from pipeline.interface import do_generate

sentence = do_generate(prompts, image_list, model, tokenizer, processor,

                       use_bf16=True, max_length=512, top_k=5, do_sample=True)

To perform video inference, you can use the following code:

import torch

from mplug_owl_video.modeling_mplug_owl import MplugOwlForConditionalGeneration

from transformers import AutoTokenizer

from mplug_owl_video.processing_mplug_owl import MplugOwlImageProcessor, MplugOwlProcessor

pretrained_ckpt = 'MAGAer13/mplug-owl-llama-7b-video'

model = MplugOwlForConditionalGeneration.from_pretrained(

    pretrained_ckpt,

    torch_dtype=torch.bfloat16,

)

image_processor = MplugOwlImageProcessor.from_pretrained(pretrained_ckpt)

tokenizer = AutoTokenizer.from_pretrained(pretrained_ckpt)

processor = MplugOwlProcessor(image_processor, tokenizer)

# We use a human/AI template to organize the context as a multi-turn conversation.

# <|video|> denotes a video placeholder.

prompts = [

'''The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.

Human: <|video|>

Human: What is the woman doing in the video?

AI: ''']

video_list = ['yoga.mp4']

# generate kwargs (the same in transformers) can be passed in the do_generate()

generate_kwargs = {

    'do_sample': True,

    'top_k': 5,

    'max_length': 512

}

inputs = processor(text=prompts, videos=video_list, num_frames=4, return_tensors='pt')

inputs = {k: v.bfloat16() if v.dtype == torch.float else v for k, v in inputs.items()}

inputs = {k: v.to(model.device) for k, v in inputs.items()}

with torch.no_grad():

    res = model.generate(**inputs, **generate_kwargs)

sentence = tokenizer.decode(res.tolist()[0], skip_special_tokens=True)

print(sentence)

The training samples are stored in xxx.jsonl and organized in the following format:

{"text": "The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.\nHuman: Construct a sentence using the given verb in the past tense\nshudder\nAI: She shuddered at the thought of being alone in the dark.", "task_type": "gpt4instruct_sft"}

{"image": ["xxx.jpg"], "text": "The following is a conversation between a curious human and AI assistant. The assistant gives helpful, detailed, and polite answers to the user's questions.\nHuman: <image>\nHuman: Where is the man located in the image?\nAI: The man is located in a small restaurant, sitting and enjoying his meal.\nHuman: What type of food is the man eating in the image?\nAI: The man is eating a burrito in the image.\nHuman: What can be seen next to the man in the restaurant?\nAI: Next to the man in the restaurant, there is a fridge filled with cans of soda.\nHuman: How does the man feel as he is about to eat his meal?\nAI: The man looks happy and is smiling as he is about to take a bite of his meal.\nHuman: What unique aspect of the photo makes the scene appear distorted or unusual?\nAI: The unique aspect of this photo is that it was taken using a fish-eye lens, which creates a distorted, wide-angle view of the scene, making it appear different from a standard perspective.", "task_type": "llava_sft"}

The task_type must be one of {'quora_chat_sft', 'sharegpt_chat_sft', 'llava_sft', 'gpt4instruct_sft'}.
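The record format above can also be assembled programmatically. The following is a minimal sketch and not part of the repository: the helper name `build_record` and the `(speaker, utterance)` turn representation are assumptions made for illustration. Only the system prompt, the `Human:`/`AI:` template, the `<image>` placeholder and the four task types are taken from the samples above. Placing one `Human: <image>` line per image before the dialogue mirrors the sample record, but it is not a documented requirement.

```python
import json

# System prompt copied from the sample records above.
SYSTEM_PROMPT = (
    "The following is a conversation between a curious human and AI assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)

# Task types listed in this README.
TASK_TYPES = {"quora_chat_sft", "sharegpt_chat_sft", "llava_sft", "gpt4instruct_sft"}


def build_record(turns, task_type, images=None):
    """Hypothetical helper: build one JSONL record from (speaker, utterance) turns."""
    if task_type not in TASK_TYPES:
        raise ValueError(f"unknown task_type: {task_type!r}")
    images = list(images or [])
    lines = [SYSTEM_PROMPT]
    # One "Human: <image>" line per image, placed before the dialogue (assumption).
    lines += ["Human: <image>"] * len(images)
    for speaker, utterance in turns:
        if speaker not in ("Human", "AI"):
            raise ValueError(f"unknown speaker: {speaker!r}")
        lines.append(f"{speaker}: {utterance}")
    record = {"text": "\n".join(lines), "task_type": task_type}
    if images:
        record["image"] = images
    return record


record = build_record(
    [("Human", "Where is the man located in the image?"),
     ("AI", "The man is located in a small restaurant.")],
    task_type="llava_sft",
    images=["xxx.jpg"],
)
# One line of the .jsonl file.
print(json.dumps(record, ensure_ascii=False))
```

Rejecting unknown task types at construction time is a design choice of this sketch; it surfaces typos before training starts rather than during data loading.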

Prepare your own train.jsonl and dev.jsonl and modify data_files in configs/v0.yaml.
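As a rough illustration of preparing the two files, the sketch below shuffles a list of records and writes a train/dev split as JSON Lines. The helper names, the split ratio and the toy records are assumptions for illustration only; the repository does not prescribe how the split is made. The resulting paths would then be entered under data_files in configs/v0.yaml.

```python
import json
import random
import tempfile
from pathlib import Path


def write_jsonl(records, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def split_train_dev(records, dev_ratio=0.1, seed=0):
    """Shuffle deterministically and hold out a fraction (at least one record) for dev."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_dev = max(1, int(len(shuffled) * dev_ratio))
    return shuffled[n_dev:], shuffled[:n_dev]


# Toy records for illustration only; real records follow the format shown above.
records = [
    {"text": f"Human: question {i}\nAI: answer {i}", "task_type": "gpt4instruct_sft"}
    for i in range(20)
]
train, dev = split_train_dev(records, dev_ratio=0.25)

# A temporary directory keeps the example from touching the working tree.
out_dir = Path(tempfile.mkdtemp())
write_jsonl(train, out_dir / "train.jsonl")
write_jsonl(dev, out_dir / "dev.jsonl")
print(len(train), len(dev))
```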

Execute the training script.

PYTHONPATH=./ bash train_it.sh # If you want to finetune LLM, replace it with train_it_wo_lora.sh

You can also now run the model and demo locally with cog, an open source ML tool maintained by Replicate. To get started, follow the instructions in this cog fork of mPLUG-Owl.

We have noticed that some users encounter a NaN loss during training.

The most likely cause is that the prompt is too long, so the completion (the response part) is truncated during preprocessing. As a result, the label_mask is all -100 and CrossEntropy returns a loss of NaN. There are three potential solutions to this issue:

- Prevent feeding input_ids that do not include any completion.

- Set `reduction='none'` in the CrossEntropyLoss of Llama and reduce the loss with an epsilon value, like `outputs.loss = (outputs.loss * loss_mask.view(-1)).sum()/(loss_mask.sum()+1e-5)`.

- Simply set `outputs.loss = outputs.loss * 0` if you get a loss of NaN.
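The first two options can be illustrated with a small sketch. It uses plain Python lists instead of tensors so that it runs without PyTorch, and the function names are hypothetical; in the training code the same arithmetic would be applied to the per-token loss tensor and `loss_mask`. The ignore value -100 is the one mentioned above.

```python
IGNORE_INDEX = -100  # label value for positions excluded from the loss


def has_supervised_tokens(labels):
    """Option 1: True if at least one label is not ignored."""
    return any(label != IGNORE_INDEX for label in labels)


def masked_mean_loss(token_losses, loss_mask, eps=1e-5):
    """Option 2: masked mean with an epsilon in the denominator.

    With an all-zero mask this returns 0.0 instead of dividing 0 by 0.
    """
    total = sum(loss * mask for loss, mask in zip(token_losses, loss_mask))
    return total / (sum(loss_mask) + eps)


# A sample whose completion was truncated away: every label is ignored.
truncated_labels = [IGNORE_INDEX] * 4
print(has_supervised_tokens(truncated_labels))               # False
print(masked_mean_loss([0.7, 1.2, 0.3, 0.9], [0, 0, 0, 0]))  # 0.0
```

Filtering with a check like `has_supervised_tokens` before batching avoids wasting a step on samples that carry no training signal, while the epsilon keeps the reduction finite if such a sample slips through.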

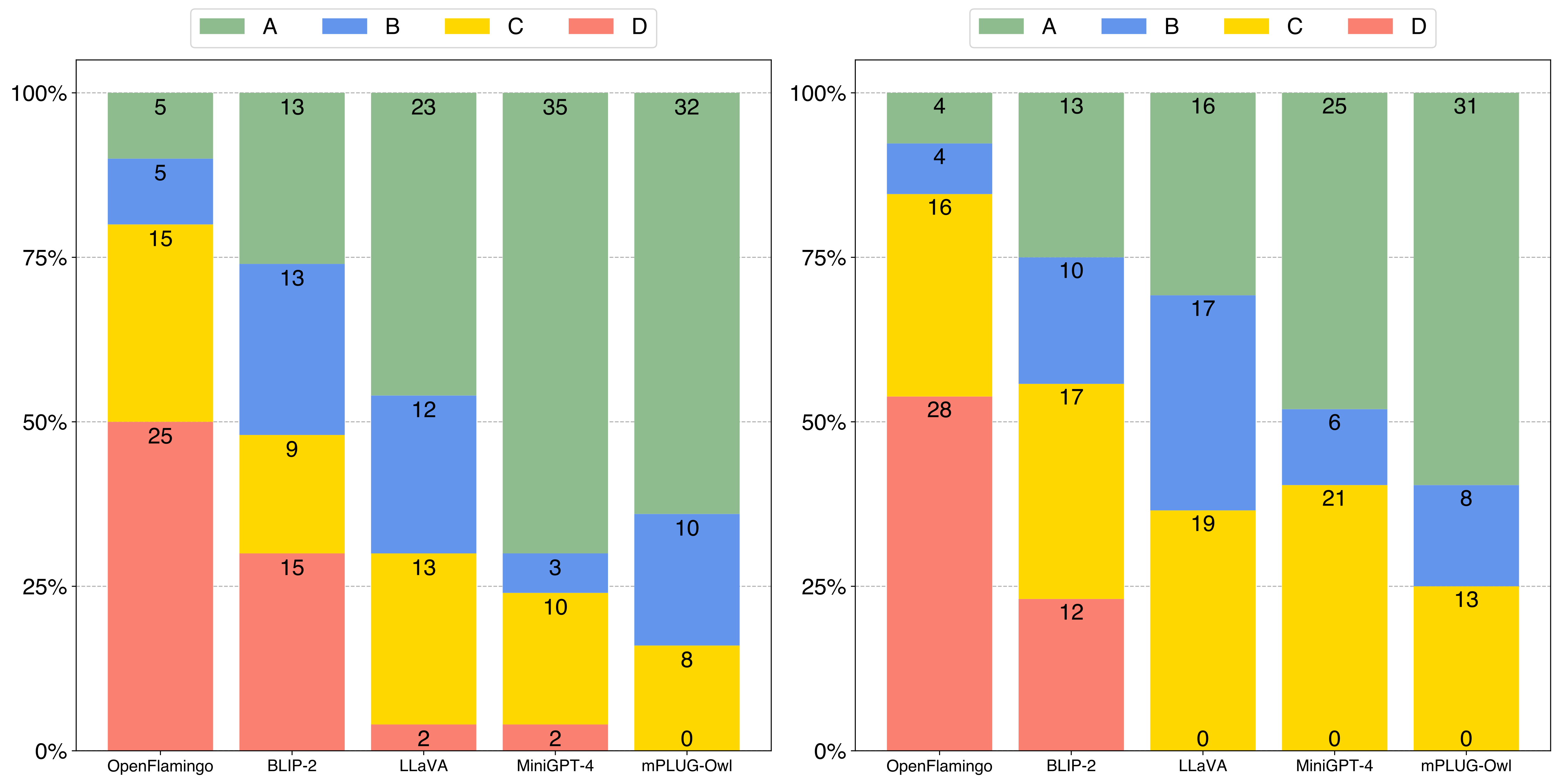

Comparison of 50 single-turn responses (left) and 52 multi-turn responses (right) between mPLUG-Owl and baselines under manual evaluation. A/B/C/D denote the rating of each response.

- LLaMA. An open-source collection of state-of-the-art large pre-trained language models.

- Baize. An open-source chat model trained with LoRA on 100k dialogs generated by letting ChatGPT chat with itself.

- Alpaca. A fine-tuned model trained from a 7B LLaMA model on 52K instruction-following data.

- LoRA. A plug-and-play module that can greatly reduce the number of trainable parameters for downstream tasks.

- MiniGPT-4. A multi-modal language model that aligns a frozen visual encoder with a frozen LLM using just one projection layer.

- LLaVA. A visual instruction tuned vision language model which achieves GPT4 level capabilities.

- mPLUG. A vision-language foundation model for both cross-modal understanding and generation.

- mPLUG-2. A multimodal model with a modular design, which inspired our project.

If you find this work useful, consider giving this repository a star and citing our paper as follows:

@misc{ye2023mplugowl,

title={mPLUG-Owl: Modularization Empowers Large Language Models with Multimodality},

author={Qinghao Ye and Haiyang Xu and Guohai Xu and Jiabo Ye and Ming Yan and Yiyang Zhou and Junyang Wang and Anwen Hu and Pengcheng Shi and Yaya Shi and Chaoya Jiang and Chenliang Li and Yuanhong Xu and Hehong Chen and Junfeng Tian and Qian Qi and Ji Zhang and Fei Huang},

year={2023},

eprint={2304.14178},

archivePrefix={arXiv},

primaryClass={cs.CL}

}