TF2DeepFloorplan

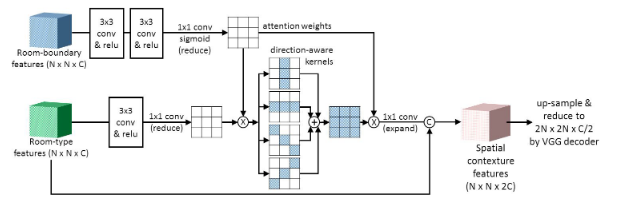

This repo contains a basic procedure to train and deploy the DNN model proposed in the paper 'Deep Floor Plan Recognition using a Multi-task Network with Room-boundary-Guided Attention'. It ports the original code from zlzeng/DeepFloorplan to newer versions of TensorFlow and Python.

Network Architectures from the paper,

Requirements

Install the packages listed in requirements.txt, including matplotlib, numpy, opencv-python, pdbpp, tensorflow-gpu and tensorboard.

The code has been tested with Python 3.7.4, tensorflow-gpu==2.3.0, cudnn==7.6.5 and cuda10.1_0. On an Nvidia RTX2080-Ti eGPU, 60 epochs take approximately 1 hour to complete.

How to run?

- Install packages via pip and requirements.txt.

pip install -r requirements.txt

- According to the original repo, please download the r3d dataset and transform it to tfrecords, r3d.tfrecords. Friendly reminder: the original repo's model was also trained on another dataset, r2v, which I did not use here because of limited access. Please see zlzeng/DeepFloorplan#17.

- Run the train.py file to start the training. The model checkpoint is stored as log/store/G and the weights are in model/store,

python train.py [--batchsize 2][--lr 1e-4][--epochs 1000]

[--logdir 'log/store'][--modeldir 'model/store']

[--saveTensorInterval 10][--saveModelInterval 20]

- for example,

python train.py --batchsize=8 --lr=1e-4 --epochs=60 --logdir=log/store --modeldir=model/store
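When training is launched from another script, for instance to try several batch sizes, the same invocation can be assembled programmatically. The following is a minimal sketch that assumes only the flags shown above; `build_train_command` is a hypothetical helper and is not part of this repo.

```python
import shlex
import sys


def build_train_command(batchsize=8, lr=1e-4, epochs=60,
                        logdir="log/store", modeldir="model/store"):
    """Return the argv list for train.py (hypothetical helper, not in the repo)."""
    return [
        sys.executable, "train.py",
        f"--batchsize={batchsize}",
        f"--lr={lr}",
        f"--epochs={epochs}",
        f"--logdir={logdir}",
        f"--modeldir={modeldir}",
    ]


if __name__ == "__main__":
    cmd = build_train_command()
    # Print a shell-quoted version of the command for inspection.
    # To actually start training, run subprocess.run(cmd, check=True)
    # from the repo root.
    print(" ".join(shlex.quote(arg) for arg in cmd))
```

Passing the arguments as a list avoids shell quoting problems when paths contain spaces.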

- Run Tensorboard to view the progress of loss and images via,

tensorboard --logdir=log/store

- Convert model to tflite via convert2tflite.py.

python convert2tflite.py [--modeldir model/store]

[--tflitedir model/store/model.tflite]

[--quantize]
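For readers who want to see what such a conversion typically involves, here is a minimal sketch using the public TensorFlow Lite converter API. It assumes that model/store holds a TensorFlow SavedModel and that quantization means post-training dynamic-range quantization; convert2tflite.py in this repo may do it differently, and `convert_saved_model` is a hypothetical name.

```python
def convert_saved_model(modeldir="model/store",
                        tflite_path="model/store/model.tflite",
                        quantize=False):
    """Convert a SavedModel directory to a .tflite file (illustrative sketch)."""
    # Imported lazily so that this module can be loaded without TensorFlow.
    import tensorflow as tf

    # Assumption: `modeldir` contains a SavedModel (saved_model.pb + variables/).
    converter = tf.lite.TFLiteConverter.from_saved_model(modeldir)
    if quantize:
        # Without a representative dataset, the default optimization applies
        # post-training dynamic-range quantization.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]

    tflite_bytes = converter.convert()
    with open(tflite_path, "wb") as f:
        f.write(tflite_bytes)
    # Return the size in bytes so callers can compare quantized vs. float models.
    return len(tflite_bytes)
```

Calling `convert_saved_model(quantize=True)` after training would be expected to produce a smaller file than the float model, at some possible cost in accuracy.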

- Download and unzip model from google drive,

gdown https://drive.google.com/uc?id=1czUSFvk6Z49H-zRikTc67g2HUUz4imON # log files 112.5mb

unzip log.zip

gdown https://drive.google.com/uc?id=1tuqUPbiZnuubPFHMQqCo1_kFNKq4hU8i # pb files 107.3mb

unzip model.zip

gdown https://drive.google.com/uc?id=1B-Fw-zgufEqiLm00ec2WCMUo5E6RY2eO # tflite file 37.1mb

unzip tflite.zip

- Deploy the model via deploy.py. Please make sure that the --loadmethod argument matches the kind of weight passed to --weight.

python deploy.py [--image 'path/to/image']

[--postprocess][--colorize][--save 'path/to/output_image']

[--loadmethod 'log'/'pb'/'tflite']

[--weight 'log/store/G'/'model/store'/'model/store/model.tflite']

- for example,

python deploy.py --image floorplan.jpg --weight log/store/G --postprocess --colorize --save output.jpg --loadmethod log
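Because a mismatched load method and weight path is an easy mistake to make, a small check before calling deploy.py can help. The sketch below encodes the three pairings from the usage line above. The path-based heuristic (a `.tflite` suffix, or a checkpoint prefix named `G`) is an assumption drawn from the default paths, and both helper names are hypothetical.

```python
# Pairings taken from the deploy.py usage line: load method -> default weight path.
DEFAULT_WEIGHTS = {
    "log": "log/store/G",
    "pb": "model/store",
    "tflite": "model/store/model.tflite",
}


def infer_loadmethod(weight):
    """Guess the load method from a weight path (heuristic, hypothetical helper)."""
    path = weight.rstrip("/")
    if path.endswith(".tflite"):
        return "tflite"
    # Assumption: checkpoints use the prefix "G", as in the default log/store/G.
    if path == "G" or path.endswith("/G"):
        return "log"
    # Anything else is treated as a model directory holding pb files.
    return "pb"


def check_deploy_args(loadmethod, weight):
    """Raise ValueError if the load method and weight path look inconsistent."""
    if loadmethod not in DEFAULT_WEIGHTS:
        raise ValueError(f"unknown loadmethod: {loadmethod!r}")
    guessed = infer_loadmethod(weight)
    if guessed != loadmethod:
        raise ValueError(
            f"weight {weight!r} looks like a {guessed!r} weight, "
            f"but loadmethod is {loadmethod!r}"
        )
    return True
```

For example, `check_deploy_args("log", "log/store/G")` passes, while `check_deploy_args("pb", "model/store/model.tflite")` raises a `ValueError`.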

Docker for API

- Build and run docker container.

docker build -t tf_docker -f Dockerfile .

docker run -d -p 1111:1111 tf_docker:latest

docker run --gpus all -d -p 1111:1111 tf_docker:latest

- Call the api for output.

curl -H "Content-Type: application/json" --request POST \
-d '{"uri":"https://cdn.cnn.com/cnnnext/dam/assets/200212132008-04-london-rental-market-intl-exlarge-169.jpg","colorize":1,"postprocess":0}' \
http://0.0.0.0:1111/process --output out.jpg

curl --request POST -F "file=@/home/yui/Pictures/4plan/tmp.jpeg;type=image/jpeg" \
-F "postprocess=0" -F "colorize=0" http://0.0.0.0:1111/process --output out.jpg
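The JSON variant of the call above can also be made from Python with only the standard library. This is a minimal sketch that mirrors the first curl command; it assumes the endpoint responds with raw image bytes (as the `--output out.jpg` option suggests), and the function names are hypothetical.

```python
import json
import urllib.request


def build_process_request(uri, colorize=1, postprocess=0,
                          endpoint="http://0.0.0.0:1111/process"):
    """Build the JSON POST request that the first curl command sends."""
    payload = {"uri": uri, "colorize": colorize, "postprocess": postprocess}
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_result(request, out_path="out.jpg", timeout=60):
    """Send the request and save the response body to disk.

    Assumption: the API returns the processed image as raw bytes.
    """
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        data = resp.read()
    with open(out_path, "wb") as f:
        f.write(data)
    return len(data)
```

With the container running, `fetch_result(build_process_request(image_url))` would write the response to out.jpg, where `image_url` is the address of a floor plan image.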

Google Colab

- Click on the Open in Colab badge and authorize access.

- Run the first code cell for installation.

- Go to the Runtime tab and click on Restart runtime. This ensures that the newly installed packages are loaded.

- Run the rest of the notebook.

Results

- From train.py and tensorboard.

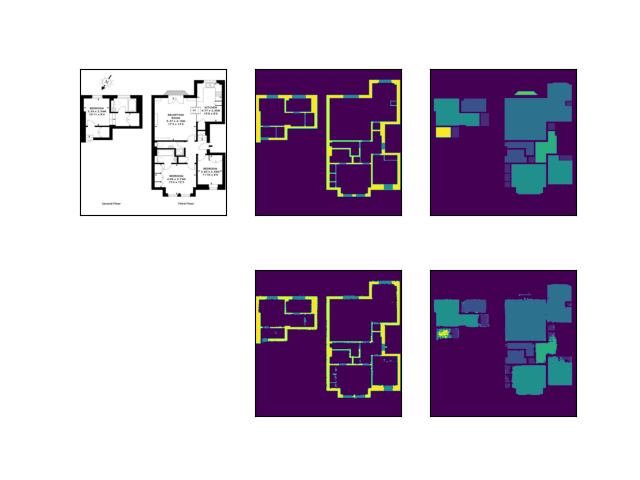

| Compare Ground Truth (top) against Outputs (bottom) |

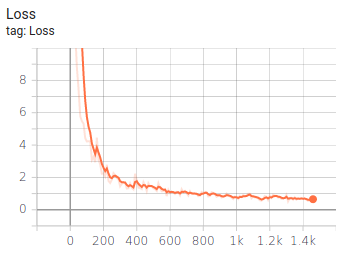

Total Loss |

|---|---|

|

|

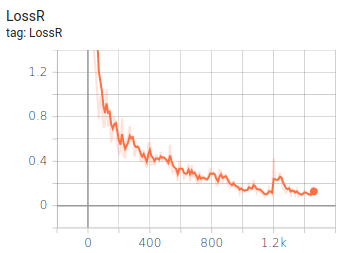

| Boundary Loss | Room Loss |

|

|

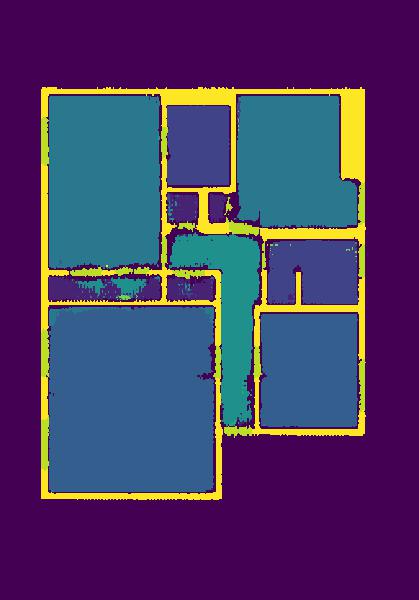

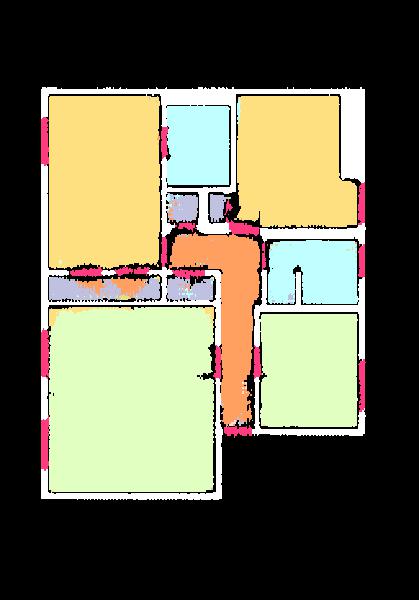

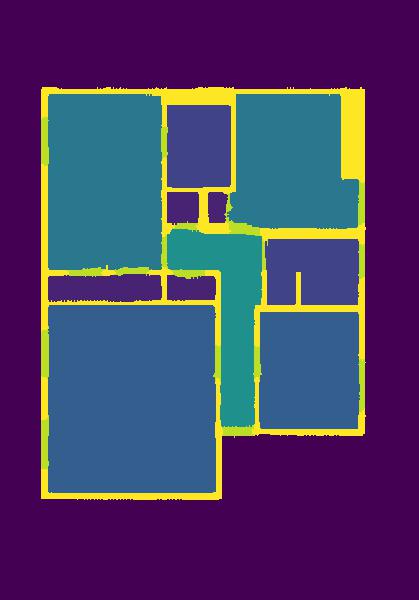

- From deploy.py and utils/legend.py.

| Input | Legend | Output |
|---|---|---|
| | | |
| --colorize | --postprocess | --colorize --postprocess |
| | | |