Makemore is a tiny character-level language model that learns from a list of names and generates new, name-like sequences of characters.

The data used for this lies here.

Makemore operates character by character: it takes names as input and produces new sequences one character at a time. The language model learns the patterns found in names and uses them to generate plausible sequences.

This project implements several different approaches to the same problem.

- The first approach builds a bigram model by counting how often each pair of adjacent characters occurs and using the most frequent pairs to generate two-letter sequences.
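The counting step can be sketched in a few lines of plain Python. This is only a minimal illustration, not the project's code: the toy `names` list, the `.` start/end marker, and the choice to sample the next character in proportion to its count are all assumptions made for the example.

```python
import random
from collections import Counter, defaultdict

def train_bigram(names):
    """Count how often each character follows another; '.' marks start and end."""
    counts = defaultdict(Counter)
    for name in names:
        chars = ["."] + list(name) + ["."]
        for prev, nxt in zip(chars, chars[1:]):
            counts[prev][nxt] += 1
    return counts

def sample_name(counts, rng, max_len=20):
    """Generate one name, drawing each next character in proportion to its bigram count."""
    out, prev = [], "."
    while len(out) < max_len:
        options = counts[prev]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        if nxt == ".":  # end marker reached
            break
        out.append(nxt)
        prev = nxt
    return "".join(out)

names = ["emma", "olivia", "ava", "mia"]  # toy stand-in for the real dataset
counts = train_bigram(names)
rng = random.Random(0)  # fixed seed so runs are repeatable
samples = [sample_name(counts, rng) for _ in range(5)]
print(samples)
```

With a real list of names, the same table of counts is what a bigram model normalizes into next-character probabilities.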

-

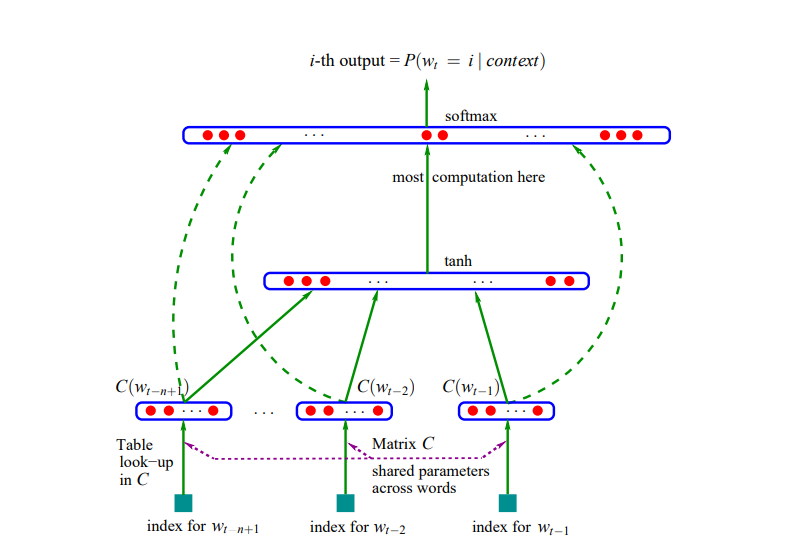

In the second method, we implement an MLP architecture introduced to us through this paper : A Neural Probabilistic Language Model.
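An MLP of this kind predicts the next character from a fixed-size window of previous characters. The sketch below shows one way the training examples could be prepared; the helper name `build_dataset`, the window length `block_size=3`, and the `.` padding/end token are assumptions for illustration, not necessarily what the notebook uses.

```python
def build_dataset(names, block_size=3):
    """Turn names into (context, target) pairs of integer character indices."""
    chars = sorted(set("".join(names)))
    stoi = {ch: i + 1 for i, ch in enumerate(chars)}
    stoi["."] = 0  # index 0 is reserved for the padding/end token
    X, Y = [], []
    for name in names:
        context = [0] * block_size  # start with an all-padding window
        for ch in name + ".":
            ix = stoi[ch]
            X.append(list(context))  # the previous block_size characters
            Y.append(ix)             # the character to predict
            context = context[1:] + [ix]  # slide the window forward
    return X, Y, stoi

X, Y, stoi = build_dataset(["ava"])
for ctx, target in zip(X, Y):
    print(ctx, "->", target)
```

Each row of `X` would then be looked up in an embedding table, concatenated, and passed through the hidden layer to produce a distribution over the next character.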

- The third approach introduces the ideas of:

  - Adjusting the weights and biases of the neural network. Research Paper

  - Batch normalization of the inputs to the network's layers. Research Paper
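As a rough illustration of the batch normalization idea, the sketch below normalizes each feature of a small batch to zero mean and unit variance, then applies a scale and shift. It covers only the training-time computation with the biased batch variance; the function name `batch_norm`, the default `gamma`/`beta`, and the toy batch are assumptions, and running statistics for inference are left out.

```python
import math

def batch_norm(batch, gamma=None, beta=None, eps=1e-5):
    """Normalize each feature column across the batch, then scale by gamma and shift by beta."""
    n, d = len(batch), len(batch[0])
    if gamma is None:
        gamma = [1.0] * d  # no rescaling by default
    if beta is None:
        beta = [0.0] * d   # no shift by default
    means = [sum(row[j] for row in batch) / n for j in range(d)]
    variances = [sum((row[j] - means[j]) ** 2 for row in batch) / n for j in range(d)]
    return [
        [gamma[j] * (row[j] - means[j]) / math.sqrt(variances[j] + eps) + beta[j]
         for j in range(d)]
        for row in batch
    ]

batch = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]  # 3 examples, 2 features
normed = batch_norm(batch)
print(normed)
```

Although the two input features differ in scale by a factor of ten, both columns of `normed` end up on the same scale.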

- The fourth approach consists of exercises that make you a backpropagation ninja by having you implement the backward pass manually.

This shows how backpropagation was done in the early days. It also has the side effect of giving you a sense of superiority over other deep learning practitioners.
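To give a flavor of what manual backpropagation involves, here is a minimal sketch for a single tanh neuron with a squared-error loss. The gradients are derived by hand with the chain rule and then checked against finite differences. The model, the values of `w`, `b`, `x`, `y`, and the function names are illustrative assumptions, far smaller than the exercises themselves.

```python
import math

def forward(w, b, x, y):
    """Loss (tanh(w*x + b) - y)^2 for one example; also returns the activation."""
    h = math.tanh(w * x + b)
    return (h - y) ** 2, h

def manual_grads(w, b, x, y):
    """Backpropagate by hand through loss -> h -> z = w*x + b."""
    _, h = forward(w, b, x, y)
    dloss_dh = 2.0 * (h - y)   # derivative of (h - y)^2 with respect to h
    dh_dz = 1.0 - h ** 2       # derivative of tanh(z) with respect to z
    dloss_dz = dloss_dh * dh_dz
    return dloss_dz * x, dloss_dz  # dz/dw = x, dz/db = 1

def numeric_grad(f, v, eps=1e-6):
    """Central finite-difference estimate of df/dv."""
    return (f(v + eps) - f(v - eps)) / (2 * eps)

w, b, x, y = 0.5, -0.1, 2.0, 1.0
dw, db = manual_grads(w, b, x, y)
dw_num = numeric_grad(lambda v: forward(v, b, x, y)[0], w)
db_num = numeric_grad(lambda v: forward(w, v, x, y)[0], b)
print(dw, dw_num)
print(db, db_num)
```

Comparing hand-derived gradients against a numerical estimate (or against an autograd result) is a common way to confirm that each backward step is correct.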

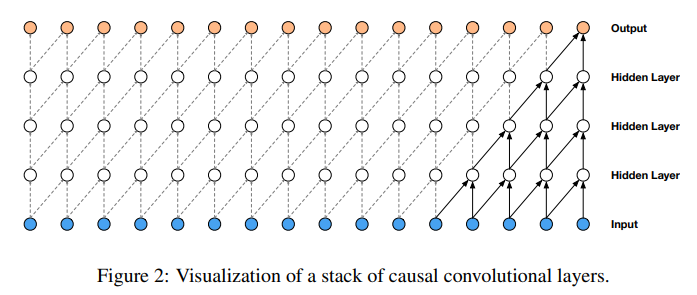

- The fifth notebook implements the WaveNet architecture from the paper WaveNet: A Generative Model for Raw Audio.
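A central idea in the WaveNet paper is the stack of dilated causal convolutions, where each layer looks further back in time so the receptive field grows quickly with depth. The sketch below illustrates only that idea in plain Python; the kernel weights, dilations, and function names are assumptions, and the notebook's own implementation may be structured differently.

```python
def causal_dilated_conv(x, weights, dilation):
    """out[t] = sum_k weights[k] * x[t - k*dilation], treating positions before the start as zero."""
    out = []
    for t in range(len(x)):
        total = 0.0
        for k, w in enumerate(weights):
            idx = t - k * dilation
            if idx >= 0:  # causal: only the current and past positions are used
                total += w * x[idx]
        out.append(total)
    return out

def receptive_field(kernel_size, dilations):
    """Number of input positions that can influence one output of the stacked layers."""
    return 1 + (kernel_size - 1) * sum(dilations)

signal = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
h = signal
for d in (1, 2, 4):  # dilation doubles at each layer
    h = causal_dilated_conv(h, [1.0, 1.0], d)

print(h)
print(receptive_field(2, [1, 2, 4]))
```

With all-ones kernels, the last output equals the sum of all eight inputs, which shows that three layers with kernel size 2 are enough to cover a window of eight positions.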

    git clone https://github.com/iamharshvardhan/makemore-from-scratch.git

This project was made possible by these lectures by Andrej Karpathy (Lecture 2 to 6) and Stanford's cs231n course.

This project is licensed under the MIT License. See the LICENSE file for details.