Demo code for the paper "Vehicle Re-identification: exploring feature fusion using multi-stream convolutional networks" (https://arxiv.org/abs/1911.05541).

A demo video for the paper is available here.

If you find our work useful in your research, please cite our paper:

@article{oliveira2019vehicle,
  author = {I. O. {de Oliveira} and R. {Laroca} and D. {Menotti} and K. V. O. {Fonseca} and R. {Minetto}},
  title = {Vehicle Re-identification: exploring feature fusion using multi-stream convolutional networks},
  journal = {arXiv preprint},
  volume = {arXiv:1911.05541},
  number = {},
  pages = {1-11},
  year = {2019},
}

- Ícaro Oliveira de Oliveira (UTFPR)
- Rayson Laroca (UFPR)
- David Menotti (UFPR)
- Keiko Veronica Ono Fonseca (UTFPR)
- Rodrigo Minetto (UTFPR)

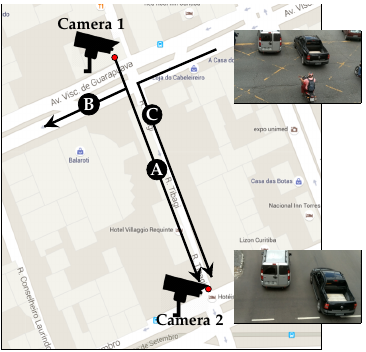

This work addresses the problem of vehicle re-identification through a network of non-overlapping cameras.

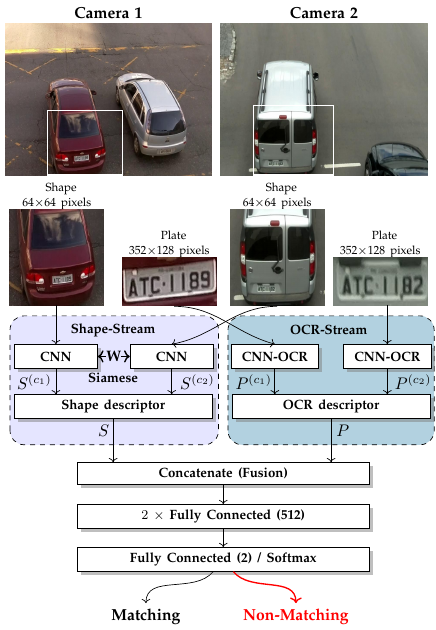

As our main contribution, we propose a novel two-stream convolutional neural network (CNN) that simultaneously uses two of the most distinctive and persistent features available: the vehicle appearance and its license plate. This design targets a major source of errors: false alarms caused by vehicles with a similar design or by license plates with very similar identifiers. In the first network stream, shape similarities are identified by a Siamese CNN that takes a pair of low-resolution vehicle patches recorded by two different cameras.
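The defining property of the Siamese shape stream is that both vehicle patches pass through the same network with the same weights. The toy sketch below illustrates only that idea, under stated assumptions: a single linear layer with ReLU stands in for the CNN branch, patches are already flattened to lists of floats, and combining the two embeddings by absolute difference is a common choice we assume here, not necessarily the one used in this repository.

```python
# Toy illustration of weight sharing in a Siamese stream (not the authors' code).
# Assumptions: patches are flattened lists of floats, and one linear layer + ReLU
# stands in for the CNN branch.


def embed(patch, weights):
    """Map a flattened patch to a feature vector with one linear layer + ReLU."""
    return [max(0.0, sum(w * x for w, x in zip(row, patch))) for row in weights]


def siamese_features(patch_a, patch_b, weights):
    """Embed both patches with the SAME weights, then compare them element-wise."""
    feat_a = embed(patch_a, weights)
    feat_b = embed(patch_b, weights)  # identical weights: the defining Siamese property
    # Absolute difference is one common way to combine the embeddings (assumption).
    return [abs(a - b) for a, b in zip(feat_a, feat_b)]


if __name__ == "__main__":
    shared_weights = [[0.5, -0.2, 0.1], [0.3, 0.3, 0.3]]
    cam1_patch = [0.9, 0.1, 0.4]  # hypothetical patch from camera 1
    cam2_patch = [0.8, 0.2, 0.4]  # hypothetical patch from camera 2
    print(siamese_features(cam1_patch, cam2_patch, shared_weights))
```

Because the weights are shared, two identical patches always produce an all-zero difference vector, whichever camera recorded them.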

In the second stream, we use a CNN for optical character recognition (OCR) to extract textual information, confidence scores, and string similarities from a pair of high-resolution license plate patches.
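The OCR stream compares the two plate strings read by the CNN-OCR. This README does not specify the string-similarity measure, so the sketch below assumes a normalized Levenshtein (edit-distance) similarity and packs it with the two OCR confidence scores into a hypothetical feature vector, `ocr_pair_features`, for one plate pair.

```python
def levenshtein(a: str, b: str) -> int:
    """Dynamic-programming edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def plate_similarity(a: str, b: str) -> float:
    """Normalized similarity in [0, 1]; 1.0 means identical strings (assumed measure)."""
    if not a and not b:
        return 1.0
    return 1.0 - levenshtein(a, b) / max(len(a), len(b))


def ocr_pair_features(text_a, conf_a, text_b, conf_b):
    """Hypothetical per-pair features: string similarity plus both OCR confidences."""
    return [plate_similarity(text_a, text_b), conf_a, conf_b]


if __name__ == "__main__":
    print(round(plate_similarity("ABC1234", "ABC1284"), 3))  # 0.857
```

With this measure, "ABC1234" and "ABC1284" differ by one substitution and score 6/7 ≈ 0.857: the kind of near-identical pair that motivates combining plate text with vehicle appearance.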

Then, the features from both streams are merged by a sequence of fully connected layers that make the final decision. As part of this work, we created a dataset for vehicle re-identification with more than three hours of video covering almost 3,000 vehicles. In our experiments, we achieved precision, recall, and F-score values of 99.6%, 99.2%, and 99.4%, respectively.
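The F-score is the harmonic mean of precision and recall, F = 2PR / (P + R). As a sanity check, the reported 99.6% precision and 99.2% recall give an F-score of about 99.4%. The sketch below shows the standard definitions; treating a correctly matched vehicle pair as a true positive is our reading of the task, not a detail taken from the repository code.

```python
def f_score(precision, recall):
    """Harmonic mean of precision and recall (F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 from true positives, false positives and false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f_score(precision, recall)


if __name__ == "__main__":
    # Consistency check with the values reported above (precision 99.6%, recall 99.2%).
    print(round(f_score(0.996, 0.992), 3))  # 0.994
```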

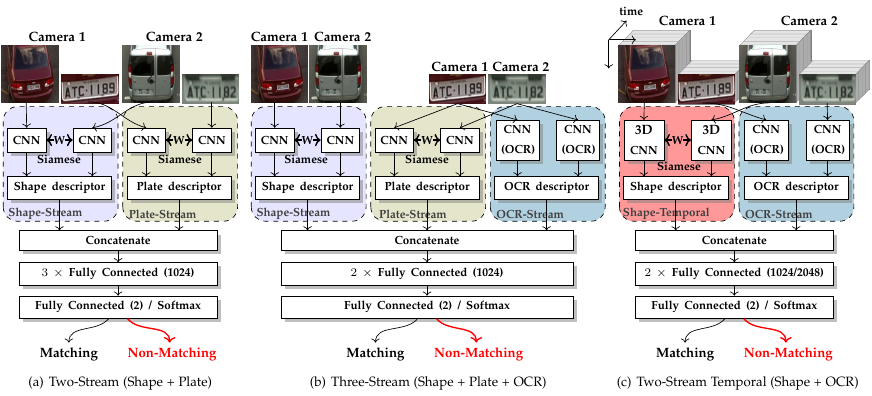

As another contribution, we discuss and compare three alternative architectures that explore the same features but use additional streams and temporal information.

The code requires:

- Python 3.6.x

- Keras

- TensorFlow

- imgaug

To install all the required Python packages, run:

pip3 install -r requirements.txt

The default settings are in config.py.

To run a model on the second GPU, use config_1.py instead of config.py in the Python code: replace the line "from config import *" with "from config_1 import *". See siamese_shape_stream1.py for an example.

Note: if you do not decompress data.tgz into the vehicle-ReId folder, set the path parameter in config.py and config_1.py to the new location of the data.
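The contents of config_1.py are not reproduced here. A minimal sketch of what a GPU-selecting config module could contain, assuming it pins the process to the second GPU through the CUDA_VISIBLE_DEVICES environment variable (which CUDA, and therefore TensorFlow, reads when it initializes) and exposes the data location as path. Both the use of this variable and the "data" default are assumptions.

```python
# Hypothetical contents of a GPU-selecting config module (e.g. config_1.py).
# Assumption: the real config files likely define more parameters than shown here.
import os

# Must be set before TensorFlow/Keras initializes CUDA; "1" is the second GPU (0-based).
os.environ["CUDA_VISIBLE_DEVICES"] = "1"

# Location of the decompressed data.tgz, relative to where the scripts are run.
# Change it if the data lives elsewhere.
path = "data"
```

Because the environment variable only takes effect if it is set before TensorFlow touches the GPU, the star import of the config module should stay at the top of each script.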

To train the models, run the commands below. The data are loaded from the JSON file generated in step 6, and then the training and validation process is run. For the plate stream:

python3 siamese_plate_stream.py train

The Siamese shape stream can be trained with any of the following architectures: resnet50, resnet6, resnet8, mccnn, vgg16, googlenet, lenet5, matchnet, or smallvgg.

Example:

python3 siamese_shape_stream.py train smallvgg

The remaining models are trained in the same way:

python3 siamese_two_stream.py train

python3 siamese_three_stream.py train

python3 siamese_two_stream_ocr.py train

python3 siamese_temporal2.py train

python3 siamese_temporal3.py train

To test the models, run the commands below. As in training, the data are loaded from the JSON file generated in step 6. For the plate stream:

python3 siamese_plate_stream.py test models/Plate

The Siamese shape stream can be tested with any of the following architectures: resnet50, resnet6, resnet8, mccnn, vgg16, googlenet, lenet5, matchnet, or smallvgg.

Example:

python3 siamese_shape_stream.py test smallvgg models/Shape/Smallvgg

The remaining models are tested with their corresponding model folders:

python3 siamese_two_stream.py test models/Two-Stream-Shape-Plate

python3 siamese_three_stream.py test models/Three-Stream

python3 siamese_two_stream_ocr.py test models/Two-Stream-Shape-OCR

python3 siamese_temporal2.py test models/Temporal2

python3 siamese_temporal3.py test models/Temporal3

To run predictions, each script loads its trained model and a JSON file containing the samples. For the plate stream:

python3 siamese_plate_stream.py predict sample_plate.json models/Plate

Predictions with the Siamese shape stream can use any of the following architectures: resnet50, resnet6, resnet8, mccnn, vgg16, googlenet, lenet5, matchnet, or smallvgg.

Example:

python3 siamese_shape_stream.py predict smallvgg sample_shape.json models/Shape/Smallvgg

For the remaining models, pass the corresponding sample file and model folder:

python3 siamese_two_stream.py predict sample_two.json models/Two-Stream-Shape-Plate

python3 siamese_three_stream.py predict sample_three.json models/Three-Stream

python3 siamese_two_stream_ocr.py predict sample_two_ocr.json models/Two-Stream-Shape-OCR

python3 siamese_temporal2.py predict sample_temporal2.json models/Temporal2

python3 siamese_temporal3.py predict sample_temporal3.json models/Temporal3
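The train, test, and predict commands above share one argument order: script, mode, the architecture (shape stream only), the sample JSON file (predict only), and the model folder (test and predict). The sketch below encodes that pattern in a hypothetical helper, build_command, with the model folders copied from the examples above. This README only shows the smallvgg shape folder, so for the other architectures the caller must pass model_dir. The run helper defaults to a dry run that only prints the command.

```python
import shlex
import subprocess

# Default model folders, taken from the test/predict examples in this README.
MODEL_DIRS = {
    "siamese_plate_stream.py": "models/Plate",
    "siamese_two_stream.py": "models/Two-Stream-Shape-Plate",
    "siamese_three_stream.py": "models/Three-Stream",
    "siamese_two_stream_ocr.py": "models/Two-Stream-Shape-OCR",
    "siamese_temporal2.py": "models/Temporal2",
    "siamese_temporal3.py": "models/Temporal3",
}
# Only the smallvgg shape folder appears in this README; pass model_dir for the others.
SHAPE_MODEL_DIRS = {"smallvgg": "models/Shape/Smallvgg"}
SHAPE_ALGORITHMS = ("resnet50", "resnet6", "resnet8", "mccnn", "vgg16",
                    "googlenet", "lenet5", "matchnet", "smallvgg")
SHAPE_SCRIPT = "siamese_shape_stream.py"


def build_command(script, mode, algorithm=None, sample_json=None, model_dir=None):
    """Return the argv list for one train/test/predict call, in the README's order."""
    if mode not in ("train", "test", "predict"):
        raise ValueError("mode must be train, test or predict, got %r" % mode)
    cmd = ["python3", script, mode]
    if script == SHAPE_SCRIPT:
        if algorithm not in SHAPE_ALGORITHMS:
            raise ValueError("unknown shape algorithm: %r" % algorithm)
        cmd.append(algorithm)  # the architecture comes right after the mode
    if mode == "predict":
        if sample_json is None:
            raise ValueError("predict needs a sample JSON file")
        cmd.append(sample_json)
    if mode in ("test", "predict"):
        if model_dir is None:
            if script == SHAPE_SCRIPT:
                model_dir = SHAPE_MODEL_DIRS.get(algorithm)
            else:
                model_dir = MODEL_DIRS.get(script)
        if model_dir is None:
            raise ValueError("no default model folder for %s; pass model_dir" % script)
        cmd.append(model_dir)
    return cmd


def run(cmd, dry_run=True):
    """Print the command; execute it only when dry_run is False."""
    print(" ".join(shlex.quote(part) for part in cmd))
    if not dry_run:
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Dry run: print the test command of every model with a known default folder.
    for script in MODEL_DIRS:
        run(build_command(script, "test"))
    run(build_command(SHAPE_SCRIPT, "test", algorithm="smallvgg"))
```

Keeping the folder table in one place means a renamed model folder only needs to change once; pass dry_run=False to actually launch the scripts.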

In the OCR folder under models (extracted from models.tgz), first run "make" in the "darknet" folder to compile Darknet, and then run "python3 cnn-ocr.py image_file" in the same folder to run the CNN-OCR model. For more information, refer to the README.txt file in the OCR folder.
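The two CNN-OCR steps can be scripted as follows. This is a sketch under assumptions: models/OCR is where the OCR folder ends up after extracting models.tgz, "the same folder" is read as the darknet folder (pass a different script_dir if the README.txt in the OCR folder says otherwise), and plate.png is a placeholder image name.

```python
import subprocess
from pathlib import Path


def cnn_ocr_steps(darknet_dir, script_dir, image_file):
    """Return (command, working directory) pairs for compiling Darknet and running CNN-OCR."""
    return [
        (["make"], Path(darknet_dir)),                                   # compile Darknet
        (["python3", "cnn-ocr.py", str(image_file)], Path(script_dir)),  # run the CNN-OCR model
    ]


def run_steps(steps, dry_run=True):
    """Print each step; execute it only when dry_run is False, stopping at the first failure."""
    for cmd, cwd in steps:
        print("(cd %s && %s)" % (cwd, " ".join(cmd)))
        if not dry_run:
            subprocess.run(cmd, cwd=str(cwd), check=True)


if __name__ == "__main__":
    # Hypothetical layout after extracting models.tgz; adjust it to your setup.
    ocr_dir = Path("models") / "OCR"
    run_steps(cnn_ocr_steps(ocr_dir / "darknet", ocr_dir / "darknet", "plate.png"))
```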

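You can generate the datasets either for single images or for the temporal streams, which use sequences of 2 to 5 images. The argument of generate_n_sets.py is the number of images per sample.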

Example:

python3 generate_n_sets.py 1

or

python3 generate_n_sets.py 2
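This README does not describe how generate_n_sets.py groups frames. As an illustration of n-image samples, the hypothetical helper below cuts one vehicle track into consecutive sets of n frames using sliding windows with stride 1, and rejects values of n outside the 1 to 5 range mentioned above. The real script may sample frames differently.

```python
def n_image_sets(frames, n):
    """Group the frames of one vehicle track into consecutive sets of n images.

    Assumption: sliding windows with stride 1; generate_n_sets.py may differ.
    """
    if not 1 <= n <= 5:
        raise ValueError("n must be between 1 and 5, got %d" % n)
    # A track shorter than n frames yields no sets (the range below is empty).
    return [frames[i:i + n] for i in range(len(frames) - n + 1)]


if __name__ == "__main__":
    track = ["frame_01.png", "frame_02.png", "frame_03.png", "frame_04.png"]
    print(n_image_sets(track, 1))  # single-image samples
    print(n_image_sets(track, 3))  # temporal samples of 3 images
```

Stride 1 maximizes the number of temporal samples per track at the cost of heavily overlapping sets. A stride equal to n would give independent samples instead.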