Forked from https://github.com/ianshmean/YOLO.jl

Full credits go to https://github.com/ianshmean and https://github.com/Ybakman. This copy is used for experimenting with YOLOv2 reorg and concat layers, as well as loss function definition.

- Update: ported the YOLOv2 loss function from https://fairyonice.github.io/Part_4_Object_Detection_with_Yolo_using_VOC_2012_data_loss.html. Results are comparable with the TF implementation, but it has only been tested on dummy arrays. Annotations still need to be loaded for further testing.

- Update: Loading YOLOv2-VOC (https://github.com/pjreddie/darknet/blob/master/cfg/yolov2-voc.cfg) is now possible along with the pretrained weights. Please check the example below for using the model.

- Loading YOLOv2-tiny and the VOC-2007 pretrained model (pretrained on Darknet) is possible.

The majority of this is made possible by Yavuz Bakman's great work in https://github.com/Ybakman/YoloV2

See below for examples or ask questions on

| Platform | Build Status |
|---|---|
| Linux & MacOS x86 | |
| Windows 32/64-bit | |
| Linux ARM 32/64-bit | |
| FreeBSD x86 | |

The package can be installed with the Julia package manager.

From the Julia REPL, type ] to enter the Pkg REPL mode and run:

pkg> add https://github.com/iuliancioarca/YOLO.jl.git

If you have a CUDA-supported graphics card, make sure that you have CUDA set up such that it satisfies CUDAapi.jl or CuArrays.jl builds.

If you just want to run on CPU (or on a GPU-less CI instance), note that Knet.jl currently depends on a system compiler for the GPU-less conv layer, so make sure you have one installed. For example, run `apt-get update && apt-get install gcc g++` on Linux, or install Visual Studio on Windows.

using YOLO

#First time only (downloads 5011 images & labels!)

YOLO.download_dataset("voc2007")

# V2_tiny

settings = YOLO.pretrained.v2_tiny_voc.load(minibatch_size=1) #run 1 image at a time

model = YOLO.v2_tiny.load(settings)

YOLO.loadWeights!(model, settings)

# V2

settings = YOLO.pretrained.v2_voc.load(minibatch_size=1)

model = YOLO.v2.load(settings)

nr_constants = 5 # nr of constants at the beginning of weights file

YOLO.loadWeights!(model, settings, nr_constants)

voc = YOLO.datasets.VOC.populate()

vocloaded = YOLO.load(voc, settings, indexes = [100]) #load image #100 (a single image)

#Run the model

res = model(vocloaded.imstack_mat);

res4loss = reshape(res, 13, 13, 5, 4 + 1 + 20, :) # 5 bboxes x (4 coords + 1 objectness + 20 classes); `:` infers the batch dimension. Will be useful for the loss function

#Convert the output into readable predictions

predictions = YOLO.postprocess(res, settings, conf_thresh = 0.3, iou_thresh = 0.3)

To pass an image through, the image needs to be loaded and scaled to the appropriate input size.

For YOLOv2-tiny and YOLOv2 that would be (w, h, color_channels, minibatch_size) == (416, 416, 3, 1).

loadResizePadImageToFit can be used to load, resize & pad the image, while maintaining aspect ratio and anti-aliasing during the resize process.
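For intuition, the resize-and-pad step can be sketched as plain arithmetic. This is a minimal sketch, not the package's implementation: `letterbox_dims` is a hypothetical helper, and centering the padding is an assumption (the package may place it differently).

```julia
# Hypothetical helper, not part of YOLO.jl: computes the resized size and the
# padding needed to fit a (w, h) image into a square target while keeping
# its aspect ratio.
function letterbox_dims(w::Integer, h::Integer; target::Integer = 416)
    scale = min(target / w, target / h)           # the tighter side sets the scale
    new_w = clamp(round(Int, w * scale), 1, target)
    new_h = clamp(round(Int, h * scale), 1, target)
    pad_w = target - new_w                        # total horizontal padding
    pad_h = target - new_h                        # total vertical padding
    # Assumption: split the padding as evenly as possible between both sides.
    left, top = pad_w ÷ 2, pad_h ÷ 2
    return (new_w = new_w, new_h = new_h,
            left = left, right = pad_w - left,
            top = top, bottom = pad_h - top)
end

dims = letterbox_dims(500, 375)   # e.g. a 500×375 image
# dims.new_w == 416, dims.new_h == 312, with 52 rows of padding above and below
```

The scaled image plus the padding always adds up to the 416×416 network input, so no part of the image is stretched or cropped.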

using YOLO

## Load once V2_tiny

settings = YOLO.pretrained.v2_tiny_voc.load(minibatch_size=1) #run 1 image at a time

model = YOLO.v2_tiny.load(settings)

YOLO.loadWeights!(model, settings)

## OR Load once V2

settings = YOLO.pretrained.v2_voc.load(minibatch_size=1)

model = YOLO.v2.load(settings)

nr_constants = 5 # nr of constants at the beginning of weights file

YOLO.loadWeights!(model, settings, nr_constants)

## Run for each image

imgmat = YOLO.loadResizePadImageToFit("image.jpeg", settings)

res = model(imgmat)

res4loss = reshape(res, 13, 13, 5, 4 + 1 + 20, :) # 5 bboxes x (4 coords + 1 objectness + 20 classes); `:` infers the batch dimension. Will be useful for the loss function

predictions = YOLO.postprocess(res, settings, conf_thresh = 0.3, iou_thresh = 0.3)

Or manually resize and reshape the image:

using Images, Makie

img = load("image.jpg");

img1 = imresize(img,416,416);

img2 = Float32.(channelview(img1));

img3 = permutedims(img2,[2,3,1]);

imgmat = reshape(img3,size(img3)...,1);

@time res = model(imgmat);

res4loss = reshape(res, 13, 13, 5, 4 + 1 + 20, :) # 5 bboxes x (4 coords + 1 objectness + 20 classes); `:` infers the batch dimension. Will be useful for the loss function

predictions = YOLO.postprocess(res, settings, conf_thresh = 0.3, iou_thresh = 0.3);
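The `res4loss` reshape splits the 125 output channels into 5 boxes of 4 + 1 + 20 values each. The sketch below shows that arithmetic on a dummy array. It assumes the network output has size 13×13×125×batch, and it does not verify whether the box index or the per-box value index varies fastest in the real channel layout.

```julia
# Dummy stand-in for the network output; the 13×13×125×batch layout is an assumption.
n_boxes, n_classes = 5, 20
per_box = 4 + 1 + n_classes             # 4 coords + 1 objectness + 20 classes = 25
batch = 1
res = rand(Float32, 13, 13, n_boxes * per_box, batch)

# `:` lets Julia infer the trailing (batch) dimension from the element count.
res4loss = reshape(res, 13, 13, n_boxes, per_box, :)
size(res4loss)   # (13, 13, 5, 25, 1)
```

Inferring the batch dimension with `:` keeps the reshape valid for any minibatch size.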

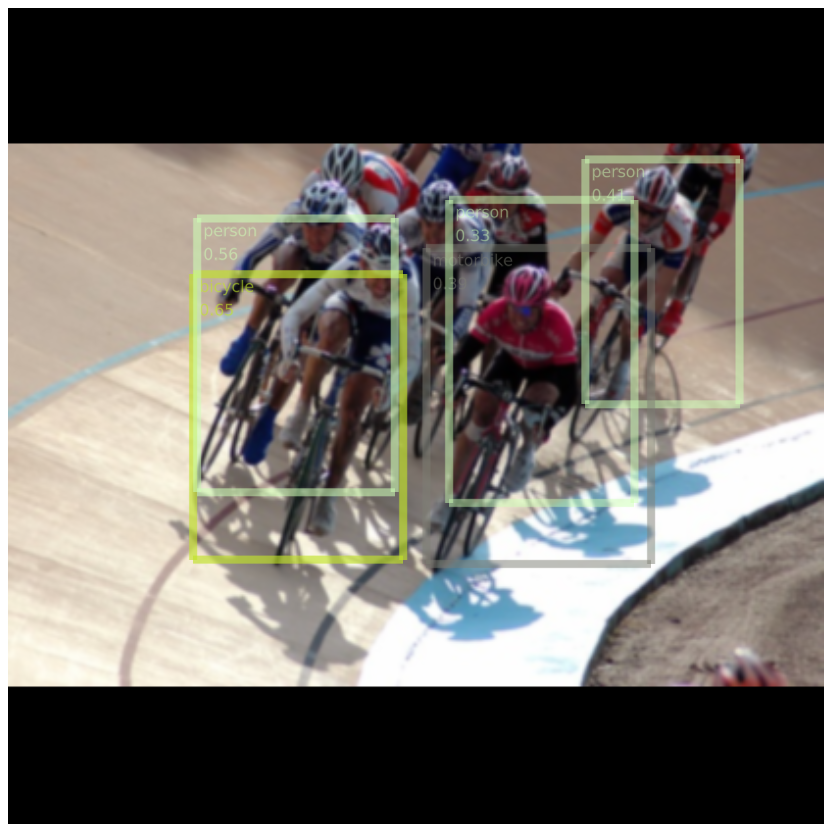

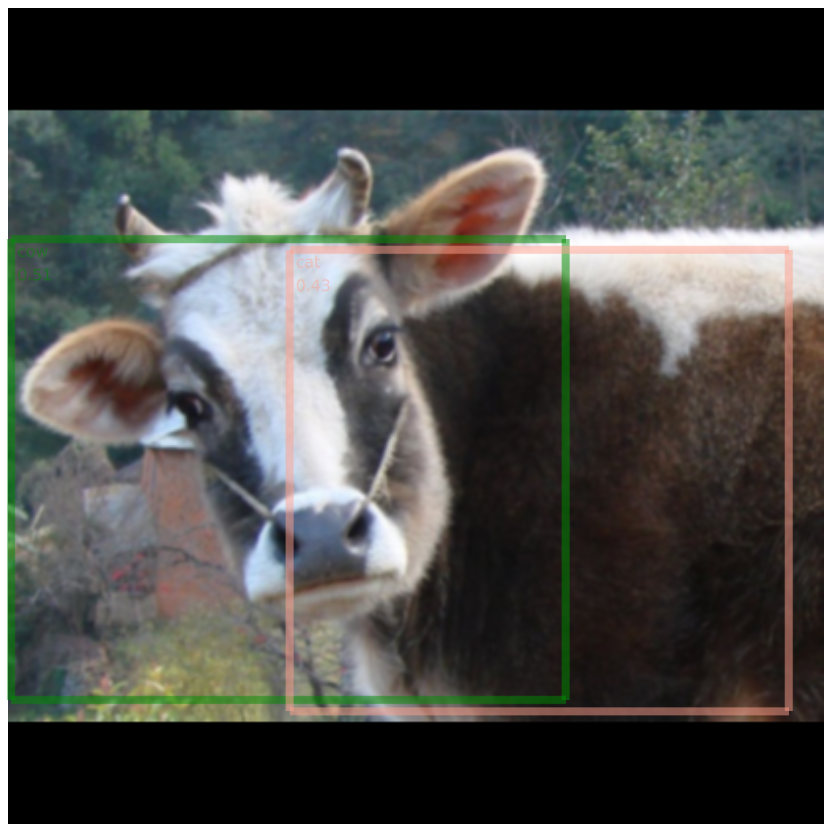

scene = YOLO.renderResult(img3, predictions, settings, save_file = "test.png");

display(scene)

To render results, first load Makie before YOLO (in a fresh Julia session):

using Makie, YOLO

## Repeat all above steps to load & run the model

scene = YOLO.renderResult(vocloaded.imstack_mat[:,:,:,1], predictions, settings, save_file = "test.png")

display(scene)

The package tests include a small benchmark. On a 2018 MacBook Pro (i7), CPU-only:

[ Info: YOLO_v2_tiny inference time per image: 0.1313 seconds (7.62 fps)

[ Info: YOLO_v2_tiny postprocess time per image: 0.0023 seconds (444.07 fps)

[ Info: Total time per image: 0.1336 seconds (7.49 fps)

On an i7 desktop with a GTX 1070 GPU:

[ Info: YOLO_v2_tiny inference time per image: 0.0039 seconds (254.79 fps)

[ Info: YOLO_v2_tiny postprocess time per image: 0.0024 seconds (425.51 fps)

[ Info: Total time per image: 0.0063 seconds (159.36 fps)
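The fps figures in these logs appear to be the reciprocal of the time per image. The sketch below recomputes the CPU total from the rounded times printed above. The `fps` helper is hypothetical and only illustrates the arithmetic. Results recomputed from the rounded times can differ slightly from the logged fps, which were presumably derived from unrounded timings.

```julia
# Hypothetical helper: frames per second from seconds per image.
fps(seconds_per_image) = 1 / seconds_per_image

inference   = 0.1313    # seconds per image, from the CPU-only log
postprocess = 0.0023    # seconds per image, from the CPU-only log
total = inference + postprocess

round(fps(total), digits = 2)   # 7.49, matching the logged total
```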