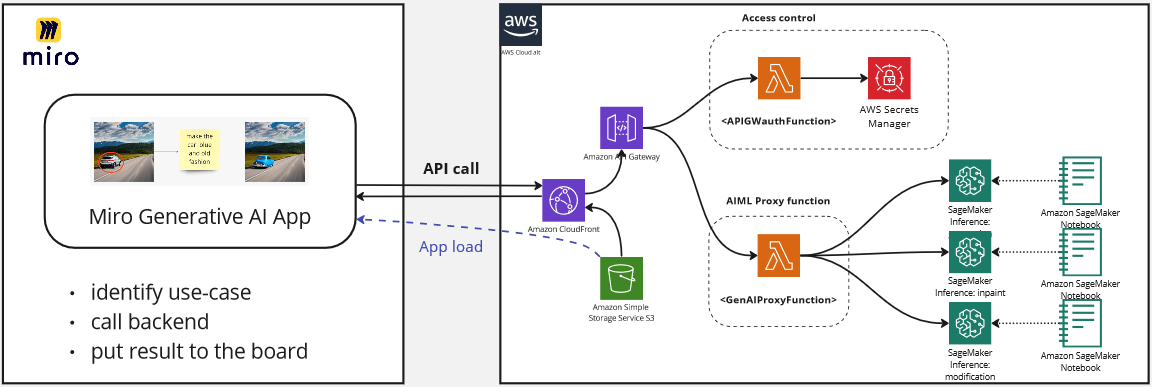

This demo shows three generative AI use cases integrated into a single solution on a Miro board. It turns Python notebooks into a dynamic, interactive experience in which several team members can brainstorm, explore, and exchange ideas, backed by privately hosted SageMaker generative AI models. The demo can easily be extended with new use cases to demonstrate new concepts and solutions.

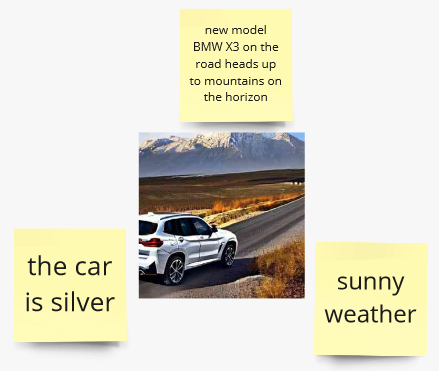

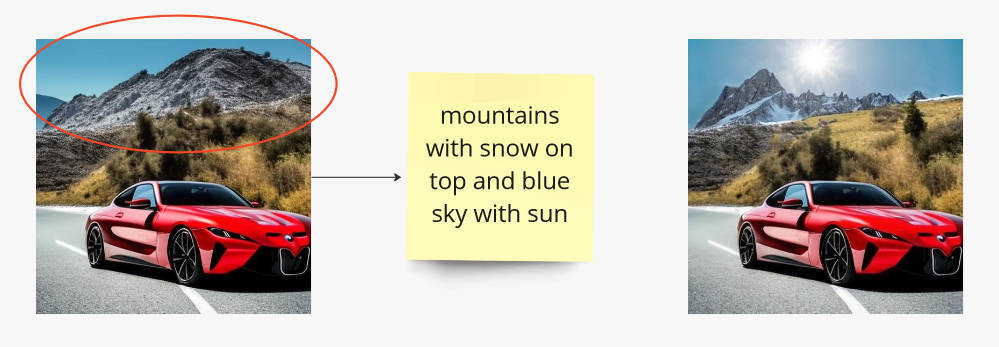

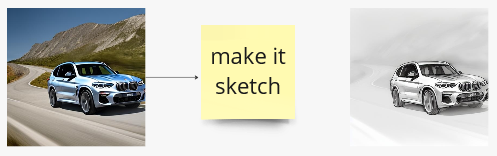

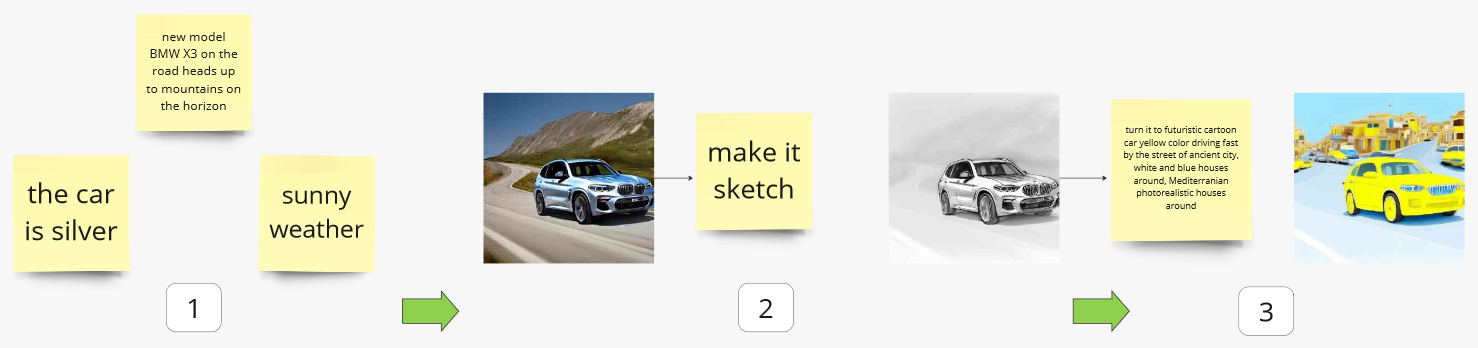

Start with brainstorming, then develop your visual idea step by step.

💡 Tip: you can use the resulting image from one step as the input for the next.

- Miro application running on the board. Loaded from an S3 bucket and accessed via a CloudFront distribution. Written in TypeScript.

- Authorization and AIML proxy Lambda functions. Accessed via API Gateway deployed behind CloudFront. Written in Python.

  - Authorization functions `authorize` and `onBoard` give access to the backend functions only to Miro team members. They protect organization data and generated content in the AWS account.

  - AIML proxy function `mlInference` handles API calls from the application and redirects each one to the correct SageMaker inference endpoint. It can also serve more complex use cases in which several AIML functions are combined.

- SageMaker inference endpoints. Run inference.
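The routing role of the AIML proxy described above can be illustrated with a minimal sketch. This is not the repository's `mlInference` code: the request fields (`use_case`, `parameters`) and the endpoint names are hypothetical, and only the boto3 `sagemaker-runtime` call `invoke_endpoint` is a real API.

```python
import json

# Hypothetical mapping from use-case name to SageMaker endpoint name.
ENDPOINTS = {
    "create_image": "demo-create-image-endpoint",
    "inpaint_image": "demo-inpaint-image-endpoint",
    "modify_image": "demo-modify-image-endpoint",
}


def route_inference(event, runtime_client=None):
    """Forward an API Gateway proxy event to the endpoint for its use case."""
    body = json.loads(event.get("body") or "{}")
    endpoint = ENDPOINTS.get(body.get("use_case"))
    if endpoint is None:
        return {"statusCode": 400, "body": json.dumps({"error": "unknown use case"})}
    if runtime_client is None:
        import boto3  # imported lazily so the routing logic can be tested offline
        runtime_client = boto3.client("sagemaker-runtime")
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint,
        ContentType="application/json",
        Body=json.dumps(body.get("parameters", {})),
    )
    # The response body is a stream; read it and pass the payload through.
    return {"statusCode": 200, "body": response["Body"].read().decode("utf-8")}
```

Passing the client as an argument keeps the routing decision separate from the AWS call, so the mapping can be checked without a deployed endpoint.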

The demo can be extended in two ways:

- by adding AIML use cases.

- by changing or enhancing the interface on the Miro board or the web interface.

In both cases, the existing environment can be used as a boilerplate. More details are in the extension guidance below.

- AWS account with access to create:
  - IAM roles
  - ECR repositories
  - Lambda functions
  - API Gateway endpoints
  - S3 buckets
  - CloudFront distributions
- AWS CLI installed and configured
- Node.js installed
- npm installed
- AWS CDK installed (minimum version 2.83.x)
- Docker installed
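A quick pre-flight check of the tools listed above might look like the following sketch. The executable names (`aws`, `node`, `npm`, `cdk`, `docker`) are assumed to be the usual command names on your PATH, and the version check assumes `cdk --version` prints a string that starts with a `major.minor` number.

```python
import re
import shutil

MIN_CDK_VERSION = (2, 83)  # minimum version from the prerequisites list
REQUIRED_TOOLS = ["aws", "node", "npm", "cdk", "docker"]  # assumed command names


def missing_tools(tools=REQUIRED_TOOLS):
    """Return the tools that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def cdk_version_ok(version_output, minimum=MIN_CDK_VERSION):
    """Compare the text printed by `cdk --version` with the minimum version."""
    match = re.search(r"(\d+)\.(\d+)", version_output)
    if match is None:
        return False
    return (int(match.group(1)), int(match.group(2))) >= minimum
```

For example, `missing_tools()` returns an empty list when every tool is installed, and `cdk_version_ok` can be fed the output captured from `cdk --version`.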

1. Configure CLI access to the AWS account via a profile or environment variables.

   👇 Demo operator user/role policies

   The steps below were developed and tested in Cloud9 and SageMaker, using a role with the following policies: IAMFullAccess, AmazonS3FullAccess, AmazonSSMFullAccess, CloudWatchLogsFullAccess, CloudFrontFullAccess, AmazonAPIGatewayAdministrator, AWSCloudFormationFullAccess, AWSLambda_FullAccess, AmazonEC2ContainerRegistryFullAccess, AmazonSageMakerFullAccess.

2. Export the AWS_REGION environment variable by running `export AWS_REGION='your region here'` (e.g. `export AWS_REGION='eu-west-1'`), because the Lambda function deployment script relies on it.

3. Bootstrap the CDK stack in the target account:

   `cdk bootstrap aws://<account_id>/<region>`

4. Docker buildx is required to build the Lambda images. It can either be used from the Docker Desktop package, in which case steps 4.i and 4.ii are not needed, or installed separately (the steps below were developed and tested on AWS Cloud9):

   i. On an x86 platform, enable multi-architecture builds by running

      `docker run --rm --privileged multiarch/qemu-user-static --reset -p yes`

-

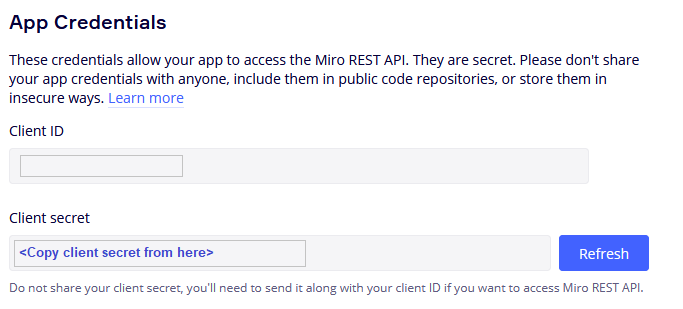

5. You need the Miro application client secret to authorize the Miro app to use the backend. To get this secret, start creating your application in Miro (steps 7-10).

6. When you see the client secret in step 10 of the Miro application setup, return here and run

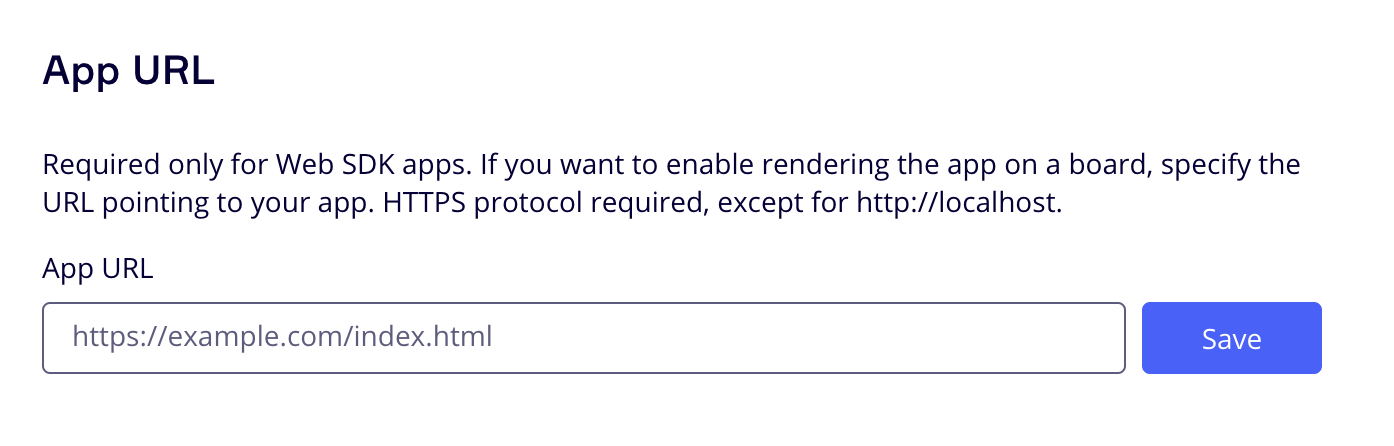

`npm run deploy` from the project root folder. You will be asked to provide the application client secret to continue the installation. When the installation completes, all the necessary resources are deployed as the CloudFormation stack `DeployStack` in the target account. Write down the CloudFront HTTPS distribution address:

`DeployStack.DistributionOutput = xyz123456.cloudfront.net`

Now go to step 11 of the Miro application setup to complete the Miro app installation.
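Two small parts of the backend steps above lend themselves to scripting: building the `cdk bootstrap` target and picking the CloudFront address out of the deployment output. The sketch below assumes the output contains a line in exactly the `DeployStack.DistributionOutput = ...` form shown above; the function names are illustrative and not part of the project.

```python
import os
import re


def bootstrap_target(account_id, region=None):
    """Build the `aws://<account_id>/<region>` argument for `cdk bootstrap`."""
    region = region or os.environ.get("AWS_REGION")
    if not region:
        raise ValueError("set AWS_REGION or pass a region explicitly")
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("an AWS account ID is a 12-digit number")
    return f"aws://{account_id}/{region}"


def distribution_domain(deploy_output):
    """Extract the CloudFront domain from the DistributionOutput line, if present."""
    match = re.search(r"DeployStack\.DistributionOutput\s*=\s*(\S+)", deploy_output)
    return match.group(1) if match else None
```

Falling back to `AWS_REGION` mirrors the deployment script's reliance on that variable, so a missing region surfaces before any AWS call is made.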

7. Visit the Miro Developer Platform documentation (https://developers.miro.com/docs) to learn about the available APIs, SDKs, and other resources that can help you build your app.

8. Create a Miro Developer team.

9. Go to the Miro App management Dashboard (https://miro.com/app/settings/user-profile/apps/) and click "Create new app".

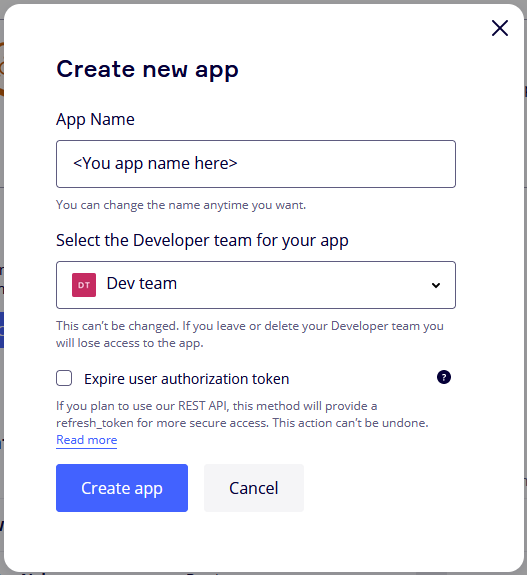

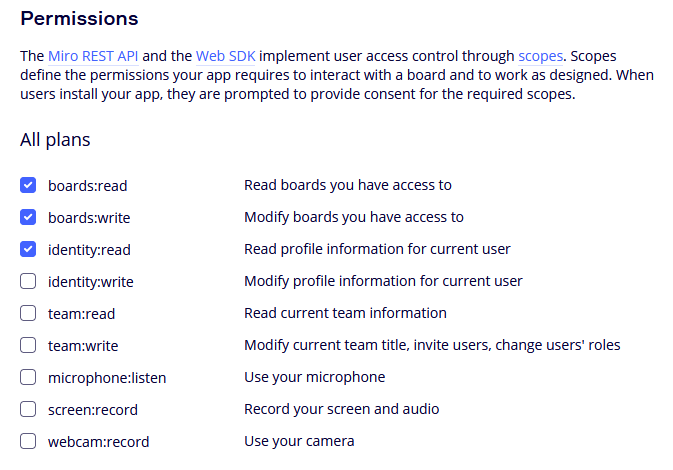

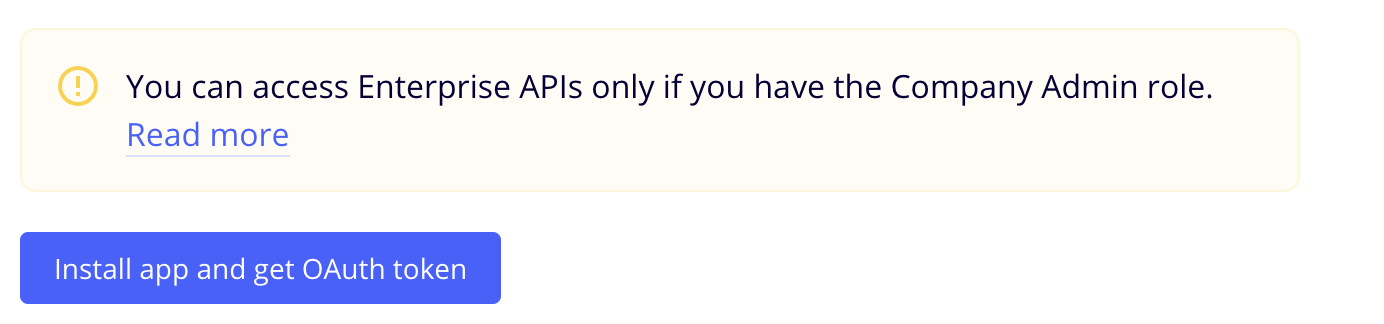

10. Fill in the necessary information about your app, such as its name, and select the Developer team. Note: you don't need to check the "Expire user authorization token" checkbox. Click "Create app" to create your app.


Then go to step 6 to complete the backend setup.

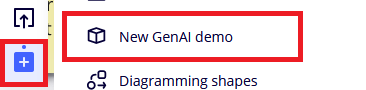

14. Back in the Miro Developer Dashboard, click the "More apps" icon on the application bar, find the app you just installed in the list, and start working.

You need to run a dedicated SageMaker endpoint for each use case.

Each use case is supported by a separate Jupyter notebook in the ./ml_services/<use_case> subdirectory:

- `1-create_image`: image generation (Stable Diffusion 2.1), based on this example
- `2-inpaint_image`: image inpainting (Stable Diffusion 2 Inpainting fp16), based on this example
- `3-modify_image`: image pix2pix modification (Hugging Face InstructPix2Pix), based on this example

💡 These steps were developed and tested in a SageMaker notebook. For cases 1 and 2 you can also run the referenced JumpStart models in other ways, e.g. SageMaker Studio.

- Go to the ./ml_services/<use_case> directory and run all three SageMaker notebooks one by one.

- After an endpoint has started and been tested successfully in its notebook, go to the Miro board, select the required items, and run the use case.
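Before switching to the Miro board, it can help to confirm that every endpoint has finished starting. The sketch below uses the boto3 SageMaker call `describe_endpoint`, whose response includes an `EndpointStatus` field; the endpoint names you pass in are whatever your notebooks created, and the names in the usage example are hypothetical.

```python
def endpoint_in_service(endpoint_name, sagemaker_client=None):
    """Return True when the named endpoint reports the InService status."""
    if sagemaker_client is None:
        import boto3  # imported lazily so the check can be tested offline
        sagemaker_client = boto3.client("sagemaker")
    description = sagemaker_client.describe_endpoint(EndpointName=endpoint_name)
    return description["EndpointStatus"] == "InService"


def endpoints_not_ready(endpoint_names, sagemaker_client=None):
    """Return the endpoints from the list that are not yet InService."""
    return [
        name
        for name in endpoint_names
        if not endpoint_in_service(name, sagemaker_client)
    ]
```

A call such as `endpoints_not_ready(["demo-create-image-endpoint", "demo-inpaint-image-endpoint"])` returns an empty list once both are ready. Note that `describe_endpoint` raises an error for an endpoint name that does not exist, which this sketch does not catch.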

🛸 Extension guidance --TBD-- ⏳

This library is licensed under the MIT-0 license. For more details, see the LICENSE file.

Sample code, software libraries, command line tools, proofs of concept, templates, or other related technology are provided as AWS Content or Third-Party Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content or Third-Party Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content or Third-Party Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content or Third-Party Content may incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.